## Line Graphs: Answer Accuracy Across Layers in Llama-3 Models

### Overview

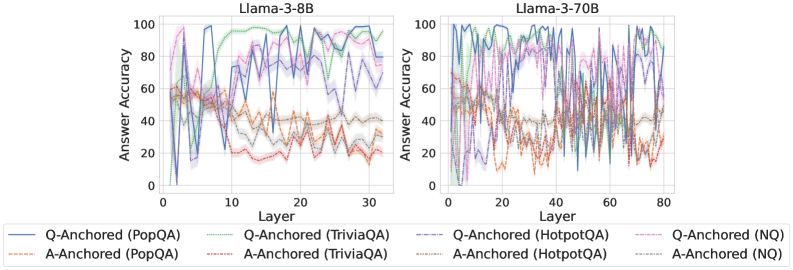

The image contains two side-by-side line graphs that plot answer accuracy against transformer layer for four question-answering (QA) datasets. The left graph shows the Llama-3-8B model (x-axis spanning layers 0–30), and the right graph shows the Llama-3-70B model (x-axis spanning layers 0–80). Each graph contains eight data series, one per combination of dataset and anchoring method (Q-Anchored or A-Anchored), distinguished by color and line style.

### Components/Axes

- **X-axis (Layer)**:

  - Left graph: 0–30 (Llama-3-8B)

  - Right graph: 0–80 (Llama-3-70B)

- **Y-axis (Answer Accuracy)**: 0–100% (both graphs)

- **Legends**:

  - **Q-Anchored (PopQA)**: Solid blue line

  - **A-Anchored (PopQA)**: Dashed orange line

  - **Q-Anchored (TriviaQA)**: Solid green line

  - **A-Anchored (TriviaQA)**: Dashed brown line

  - **Q-Anchored (HotpotQA)**: Solid purple line

  - **A-Anchored (HotpotQA)**: Dashed red line

  - **Q-Anchored (NQ)**: Solid pink line

  - **A-Anchored (NQ)**: Dashed gray line
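The legend follows a regular scheme: the anchoring method determines the line style and each (method, dataset) pair has its own color. A minimal sketch of that mapping is given below for anyone redrawing the figure. The `tab:` color names are an assumption (matplotlib palette names that approximate the colors named in the legend), and `series_style` is a hypothetical helper, not part of any plotting code behind the original figure.

```python
# Line style is set by the anchoring method: solid for Q, dashed for A.
LINESTYLE = {"Q-Anchored": "-", "A-Anchored": "--"}

# Assumption: "tab:" names approximate the colors listed in the legend.
COLOR = {
    ("Q-Anchored", "PopQA"): "tab:blue",
    ("A-Anchored", "PopQA"): "tab:orange",
    ("Q-Anchored", "TriviaQA"): "tab:green",
    ("A-Anchored", "TriviaQA"): "tab:brown",
    ("Q-Anchored", "HotpotQA"): "tab:purple",
    ("A-Anchored", "HotpotQA"): "tab:red",
    ("Q-Anchored", "NQ"): "tab:pink",
    ("A-Anchored", "NQ"): "tab:gray",
}


def series_style(method, dataset):
    """Return plot keyword arguments for one legend entry (hypothetical helper)."""
    return {
        "label": f"{method} ({dataset})",
        "linestyle": LINESTYLE[method],
        "color": COLOR[(method, dataset)],
    }
```

The returned dictionary uses keyword names (`label`, `linestyle`, `color`) that a matplotlib line plot accepts, so it could be unpacked into a plotting call once per series.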

### Detailed Analysis

#### Llama-3-8B (Left Graph)

- **Q-Anchored (PopQA)**: Starts at ~80% accuracy, dips to ~40% at layer 10, then fluctuates between 50–70%.

- **A-Anchored (PopQA)**: Begins at ~60%, drops to ~20% at layer 10, then stabilizes near 40–60%.

- **Q-Anchored (TriviaQA)**: Peaks at ~90% at layer 5, then declines to ~50% by layer 30.

- **A-Anchored (TriviaQA)**: Starts at ~70%, dips to ~30% at layer 15, then recovers to ~50–70%.

- **Q-Anchored (HotpotQA)**: Oscillates between 60–80%, with sharp drops at layers 10 and 25.

- **A-Anchored (HotpotQA)**: Starts at ~50%, fluctuates between 30–60%, with a notable spike at layer 20.

- **Q-Anchored (NQ)**: Peaks at ~75% at layer 15, then declines to ~40% by layer 30.

- **A-Anchored (NQ)**: Starts at ~55%, dips to ~25% at layer 10, then stabilizes near 40–60%.

#### Llama-3-70B (Right Graph)

- **Q-Anchored (PopQA)**: Starts at ~75%, dips to ~30% at layer 20, then fluctuates between 50–75%.

- **A-Anchored (PopQA)**: Begins at ~65%, drops to ~25% at layer 25, then stabilizes near 40–65%.

- **Q-Anchored (TriviaQA)**: Peaks at ~85% at layer 10, then declines to ~45% by layer 80.

- **A-Anchored (TriviaQA)**: Starts at ~75%, dips to ~35% at layer 30, then recovers to ~50–75%.

- **Q-Anchored (HotpotQA)**: Oscillates between 55–75%, with sharper drops at layers 40 and 60.

- **A-Anchored (HotpotQA)**: Starts at ~55%, fluctuates between 35–65%, with a spike at layer 50.

- **Q-Anchored (NQ)**: Peaks at ~70% at layer 30, then declines to ~35% by layer 80.

- **A-Anchored (NQ)**: Starts at ~50%, dips to ~20% at layer 20, then stabilizes near 40–60%.
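Because the two graphs have different x-axis ranges, raw layer indices are not directly comparable between models. The sketch below normalizes the approximate dip locations quoted above by each graph's x-axis maximum. The values are the rough readings from this description, not measured data, and `relative_depth` is a hypothetical helper introduced for illustration.

```python
# x-axis upper bounds as stated in the Components/Axes section.
AXIS_MAX = {"Llama-3-8B": 30, "Llama-3-70B": 80}

# Approximate dips (layer, accuracy %) copied from the bullet lists above.
DIPS = {
    ("Llama-3-8B", "Q-Anchored", "PopQA"): (10, 40),
    ("Llama-3-8B", "A-Anchored", "PopQA"): (10, 20),
    ("Llama-3-8B", "A-Anchored", "TriviaQA"): (15, 30),
    ("Llama-3-8B", "A-Anchored", "NQ"): (10, 25),
    ("Llama-3-70B", "Q-Anchored", "PopQA"): (20, 30),
    ("Llama-3-70B", "A-Anchored", "PopQA"): (25, 25),
    ("Llama-3-70B", "A-Anchored", "TriviaQA"): (30, 35),
    ("Llama-3-70B", "A-Anchored", "NQ"): (20, 20),
}


def relative_depth(model, layer):
    """Layer index as a fraction of the graph's x-axis range."""
    return layer / AXIS_MAX[model]


for (model, method, dataset), (layer, acc) in DIPS.items():
    depth = relative_depth(model, layer)
    print(f"{model:12s} {method} ({dataset}): "
          f"dip ~{acc}% at layer {layer} ({depth:.2f} of axis range)")
```

On this scale, the Q-Anchored PopQA dip falls at about 0.33 of the axis range for the 8B model and 0.25 for the 70B model, which makes the cross-model comparison more direct than comparing layer 10 with layer 20.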

### Key Observations

1. **Fluctuating Accuracy**: All series vary substantially from layer to layer, and no single upward or downward trend holds across every dataset and anchoring method.

2. **Q-Anchored vs. A-Anchored**: In the early layers, Q-Anchored series generally sit above their A-Anchored counterparts (e.g., ~80% vs. ~60% at the start for PopQA on the 8B model); in later layers the ranges of the two methods largely overlap.

3. **Model Size Impact**: The 70B model shows more pronounced fluctuations in its later layers (e.g., layers 60–80) than the 8B model does.

4. **Dataset-Specific Patterns**:

   - **PopQA**: Sharp early-layer drops in both models.

   - **TriviaQA**: High early peaks followed by declines.

   - **HotpotQA**: Persistent mid-range fluctuations.

   - **NQ**: Gradual decline in later layers.
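The observations above describe variability qualitatively. One simple way to quantify it, assuming the per-layer accuracies were available as a list, is the mean absolute change between consecutive layers. The sketch below is illustrative only: both function names are hypothetical, and the metric is a choice made for this example rather than a measure used to produce the figure.

```python
def mean_abs_step(acc):
    """Mean absolute change between consecutive layers, in percentage points."""
    if len(acc) < 2:
        raise ValueError("need at least two layers")
    steps = [abs(b - a) for a, b in zip(acc, acc[1:])]
    return sum(steps) / len(steps)


def early_vs_late(acc, split=0.5):
    """Return (early, late) variability, splitting the layers at `split`.

    The layer at the split point is shared so that no step is dropped.
    """
    cut = int(len(acc) * split)
    return mean_abs_step(acc[:cut + 1]), mean_abs_step(acc[cut:])
```

Given real per-layer values for one series, a `late` value clearly above `early` would support the claim that fluctuations grow in the later layers; comparing the two numbers across the 8B and 70B graphs would test the model-size observation.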

### Interpretation

The data suggest that the anchoring method (Q-Anchored vs. A-Anchored) affects answer accuracy differently across datasets and model sizes. Q-Anchored series often reach higher accuracy in the early layers but do not sustain it, whereas A-Anchored series tend to dip early and then settle into a narrower band in later layers. The Llama-3-70B graph shows more variability in its later layers than the 8B graph, which may indicate that layer-specific behavior is harder to characterize in the larger model, although the figure alone does not establish a cause. The fluctuations are consistent with certain layers specializing in particular tasks; for example, TriviaQA's early-layer peaks may reflect factual recall being handled early in the network. These patterns point to the need for dataset-specific, layer-wise analysis before drawing conclusions about how to optimize QA performance.