## Comparative Analysis of Large Language Model (LLM) Performance

### Overview

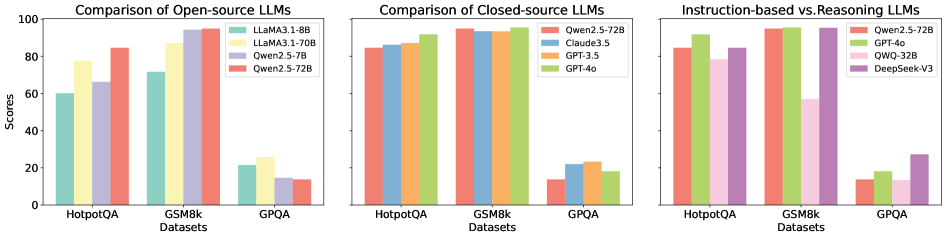

The image is a composite of three bar charts comparing the performance of various Large Language Models (LLMs) on three benchmark datasets: HotpotQA, GSM8k, and GPQA. Each chart covers a different grouping of models: the first compares open-source models, the second compares closed-source models, and the third compares instruction-based and reasoning-focused models.

### Components/Axes

* **Chart Structure:** Three separate bar charts arranged horizontally.

* **Common X-Axis (All Charts):** Labeled "Datasets". The three categories are:

    1. HotpotQA

    2. GSM8k

    3. GPQA

* **Common Y-Axis (All Charts):** Labeled "Scores". The scale runs from 0 to 100 in increments of 20.

* **Legends:** Each chart has its own legend positioned in the top-right corner of its respective plot area.

### Detailed Analysis

#### Chart 1: Comparison of Open-source LLMs

* **Legend (Top-Right):**

    * Teal Bar: LLaMA3.1-8B

    * Yellow Bar: LLaMA3.1-70B

    * Purple Bar: Qwen2.5-7B

    * Red Bar: Qwen2.5-72B

* **Data Points & Trends:**

    * **HotpotQA:** Scores range from ~60 (LLaMA3.1-8B) to ~85 (Qwen2.5-72B) and generally rise with model scale (8B < 70B < 72B). Qwen2.5-7B (~65) scores slightly above LLaMA3.1-8B.

    * **GSM8k:** Every model scores highest on this dataset. Scores range from ~70 (LLaMA3.1-8B) to ~95 (Qwen2.5-72B).

    * **GPQA:** Every model scores lowest on this dataset, with values between ~15 (Qwen2.5-7B) and ~25 (LLaMA3.1-70B). Scale does not help consistently here: the largest model (Qwen2.5-72B) scores ~15, on par with Qwen2.5-7B.
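The effect of model scale in Chart 1 can be checked with simple arithmetic. The following is a minimal sketch, not part of the original figure: the scores are the approximate bar heights quoted above, `None` marks bars whose values are not reported in this description, and the names `CHART1` and `scale_gain` are hypothetical.

```python
# Approximate scores read off Chart 1 as described above.
# None marks a bar whose value the description does not report.
CHART1 = {
    "LLaMA3.1-8B":  {"HotpotQA": 60,   "GSM8k": 70,   "GPQA": None},
    "LLaMA3.1-70B": {"HotpotQA": None, "GSM8k": None, "GPQA": 25},
    "Qwen2.5-7B":   {"HotpotQA": 65,   "GSM8k": None, "GPQA": 15},
    "Qwen2.5-72B":  {"HotpotQA": 85,   "GSM8k": 95,   "GPQA": 15},
}


def scale_gain(small, large, scores=CHART1):
    """Return {dataset: large - small} for datasets where both scores are known."""
    gains = {}
    for dataset, small_score in scores[small].items():
        large_score = scores[large][dataset]
        if small_score is not None and large_score is not None:
            gains[dataset] = large_score - small_score
    return gains


qwen_gain = scale_gain("Qwen2.5-7B", "Qwen2.5-72B")
print(qwen_gain)  # {'HotpotQA': 20, 'GPQA': 0}
```

Under these approximate readings, scaling Qwen2.5 from 7B to 72B adds about 20 points on HotpotQA and nothing on GPQA. The LLaMA3.1 pair cannot be compared this way because no dataset has both values reported.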

#### Chart 2: Comparison of Closed-source LLMs

* **Legend (Top-Right):**

    * Red Bar: Qwen2.5-72B (Note: This model also appears in the open-source chart. The figure does not explain why it is included here; it may serve as a reference point for the closed-source models.)

    * Blue Bar: Claude3.5

    * Orange Bar: QWO-32B

    * Green Bar: GPT-4o

* **Data Points & Trends:**

    * **HotpotQA:** All models perform strongly and within a narrow band, from ~85 (Qwen2.5-72B) to ~90 (GPT-4o).

    * **GSM8k:** This dataset again yields the highest scores. All models cluster near the top of the scale, between ~90 (Qwen2.5-72B) and ~95 (GPT-4o, Claude3.5).

    * **GPQA:** Scores drop sharply for all models, ranging from ~15 (Qwen2.5-72B) to ~25 (QWO-32B). This dataset is clearly the most challenging.

#### Chart 3: Instruction-based vs Reasoning LLMs

* **Legend (Top-Right):**

    * Red Bar: Qwen2.5-72B

    * Green Bar: GPT-4o

    * Pink Bar: QWO-32B

    * Purple Bar: DeepSeek-V3

* **Data Points & Trends:**

    * **HotpotQA:** Scores are high, from ~80 (QWO-32B) to ~90 (GPT-4o, DeepSeek-V3).

    * **GSM8k:** Scores are very high and tightly grouped, from ~85 (QWO-32B) to ~95 (DeepSeek-V3).

    * **GPQA:** This dataset shows the largest relative spread in the chart. Qwen2.5-72B and QWO-32B score low (~15), and GPT-4o scores moderately (~20). **DeepSeek-V3 is a clear outlier** at approximately 30, higher than any other model on this dataset across all three charts.
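To make the size of the DeepSeek-V3 lead on GPQA concrete, the sketch below computes its margin over the runner-up in Chart 3. It is illustrative only: the values are the approximate readings quoted above, and `GPQA_CHART3` and `lead_over_runner_up` are hypothetical names.

```python
# Approximate GPQA scores read off Chart 3 as described above.
GPQA_CHART3 = {
    "Qwen2.5-72B": 15,
    "GPT-4o": 20,
    "QWO-32B": 15,
    "DeepSeek-V3": 30,
}


def lead_over_runner_up(scores):
    """Return (best model, absolute lead, ratio) relative to the second-best score."""
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    (best, best_score), (_, second_score) = ranked[0], ranked[1]
    return best, best_score - second_score, best_score / second_score


best, lead, ratio = lead_over_runner_up(GPQA_CHART3)
print(best, lead, ratio)  # DeepSeek-V3 10 1.5
```

On these readings DeepSeek-V3 leads GPT-4o by about 10 points, or roughly 1.5 times its score. Because the inputs are visual estimates, the exact margin should be treated as approximate.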

### Key Observations

1. **Dataset Difficulty:** GPQA is consistently the most challenging benchmark for all models, with no score above ~30. GSM8k is the easiest, with top models scoring near 95.

2. **Model Scale vs. Performance:** In the open-source chart, larger models (70B, 72B) generally outperform smaller ones (7B, 8B), but the advantage is not uniform across all tasks (e.g., GPQA).

3. **Closed-source Consistency:** Closed-source models (Claude3.5, GPT-4o) show very high and consistent performance on HotpotQA and GSM8k.

4. **Notable Outlier:** DeepSeek-V3 in the third chart demonstrates superior performance on the difficult GPQA benchmark compared to all other models shown.

5. **Model Overlap:** Qwen2.5-72B appears in all three charts, serving as a common reference point. Its performance is strong on GSM8k but weak on GPQA.
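The dataset-difficulty ranking can be reproduced from the per-chart score ranges reported in the Detailed Analysis. This is a rough sketch under the assumption that those approximate (low, high) readings are accurate; `REPORTED_RANGES` and `overall_range` are hypothetical names introduced for illustration.

```python
# (low, high) score ranges per dataset, one tuple per chart (Charts 1, 2, 3),
# taken from the approximate values quoted in the Detailed Analysis.
REPORTED_RANGES = {
    "HotpotQA": [(60, 85), (85, 90), (80, 90)],
    "GSM8k":    [(70, 95), (90, 95), (85, 95)],
    "GPQA":     [(15, 25), (15, 25), (15, 30)],
}


def overall_range(ranges):
    """Collapse per-chart (low, high) pairs into a single (low, high) pair."""
    lows = [low for low, _ in ranges]
    highs = [high for _, high in ranges]
    return min(lows), max(highs)


summary = {name: overall_range(r) for name, r in REPORTED_RANGES.items()}
# Rank difficulty by the best score any model reaches on the dataset.
hardest = min(summary, key=lambda name: summary[name][1])
easiest = max(summary, key=lambda name: summary[name][1])
print(summary)
print(hardest, easiest)  # GPQA GSM8k
```

Ranking by the best observed score is only one possible definition of difficulty; ranking by the lowest or the median score would be equally defensible and gives the same ordering for these values.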

### Interpretation

This visualization suggests that while modern LLMs score near the top of the scale on some reasoning benchmarks (GSM8k), benchmarks that demand complex multi-step reasoning or specialized knowledge (GPQA) remain a significant challenge. Increasing model scale (from 7B to 72B parameters) improves performance on some benchmarks far more than on others: it helps on HotpotQA but shows no consistent benefit on GPQA. The standout performance of DeepSeek-V3 on GPQA suggests that factors other than raw scale, such as architecture or training methodology, may yield disproportionate gains on the hardest tasks, although the charts alone cannot identify the cause. The comparison between instruction-based and reasoning-focused models (Chart 3) likewise points to model specialization or training methodology as an important factor for performance on specific types of problems.