TECHNICAL ASSET FINGERPRINT

ba11403d936c9f58abce3f67

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Line Chart: LLM Accuracy vs. Interactions

### Overview

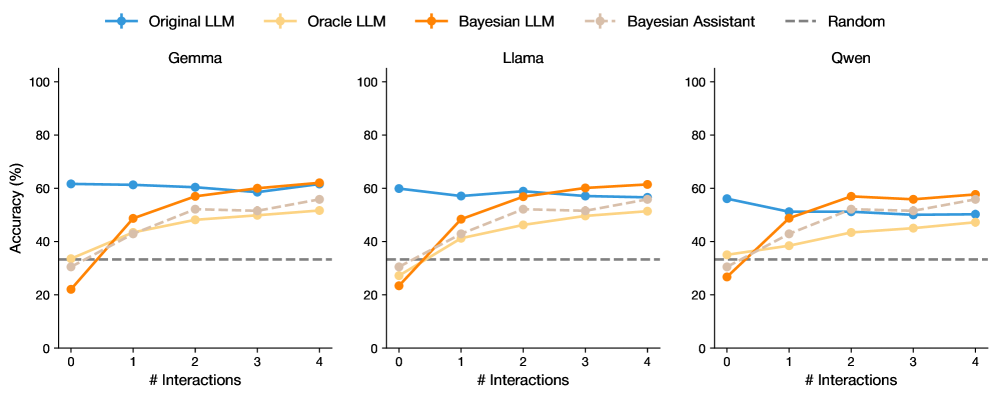

The image presents three line charts comparing the accuracy of different Large Language Model (LLM) configurations across a varying number of interactions. The charts are titled "Gemma", "Llama", and "Qwen". Each chart displays the accuracy (in percentage) on the y-axis against the number of interactions on the x-axis. Four LLM configurations are compared, "Original LLM", "Oracle LLM", "Bayesian LLM", and "Bayesian Assistant", along with a "Random" baseline.

### Components/Axes

* **X-axis:** "# Interactions" ranging from 0 to 4 in integer increments.

* **Y-axis:** "Accuracy (%)" ranging from 0 to 100 in increments of 20.

* **Titles:** "Gemma" (left), "Llama" (center), "Qwen" (right).

* **Legend (Top):**

    * Blue: "Original LLM"

    * Light Orange: "Oracle LLM"

    * Orange: "Bayesian LLM"

    * Light Gray: "Bayesian Assistant"

    * Dashed Gray: "Random"

### Detailed Analysis

#### Gemma Chart (Left)

* **Original LLM (Blue):** Starts at approximately 62% accuracy and remains relatively constant, ending at approximately 59%.

* **Oracle LLM (Light Orange):** Starts at approximately 32% accuracy and increases to approximately 52% at 1 interaction, then increases gradually to approximately 55% at 4 interactions.

* **Bayesian LLM (Orange):** Starts at approximately 22% accuracy, increases sharply to approximately 48% at 1 interaction, and then increases to approximately 60% at 4 interactions.

* **Bayesian Assistant (Light Gray):** Starts at approximately 32% accuracy, increases to approximately 50% at 1 interaction, and then increases to approximately 54% at 4 interactions.

* **Random (Dashed Gray):** Constant at approximately 33% accuracy.

#### Llama Chart (Center)

* **Original LLM (Blue):** Starts at approximately 59% accuracy and remains relatively constant, ending at approximately 62%.

* **Oracle LLM (Light Orange):** Starts at approximately 30% accuracy and increases to approximately 45% at 1 interaction, then increases gradually to approximately 55% at 4 interactions.

* **Bayesian LLM (Orange):** Starts at approximately 22% accuracy, increases sharply to approximately 49% at 1 interaction, and then increases to approximately 65% at 4 interactions.

* **Bayesian Assistant (Light Gray):** Starts at approximately 30% accuracy, increases to approximately 42% at 1 interaction, and then increases to approximately 55% at 4 interactions.

* **Random (Dashed Gray):** Constant at approximately 33% accuracy.

#### Qwen Chart (Right)

* **Original LLM (Blue):** Starts at approximately 56% accuracy, decreases to approximately 50% at 1 interaction, and then increases slightly to approximately 51% at 4 interactions.

* **Oracle LLM (Light Orange):** Starts at approximately 30% accuracy and increases to approximately 40% at 1 interaction, then increases gradually to approximately 52% at 4 interactions.

* **Bayesian LLM (Orange):** Starts at approximately 28% accuracy, increases sharply to approximately 48% at 1 interaction, and then increases to approximately 58% at 4 interactions.

* **Bayesian Assistant (Light Gray):** Starts at approximately 35% accuracy, increases to approximately 45% at 1 interaction, and then increases to approximately 52% at 4 interactions.

* **Random (Dashed Gray):** Constant at approximately 33% accuracy.

### Key Observations

* The "Original LLM" generally maintains a relatively stable accuracy across all three charts, with a slight decrease in the "Qwen" chart.

* The "Bayesian LLM" shows the most significant improvement in accuracy with increasing interactions across all three charts.

* The "Oracle LLM" and "Bayesian Assistant" show similar trends, with moderate improvements in accuracy as the number of interactions increases.

* The "Random" baseline remains constant across all interaction levels and charts.

* The "Bayesian LLM" consistently outperforms the "Oracle LLM" and "Bayesian Assistant" after a few interactions.

### Interpretation

The data suggests that the "Bayesian LLM" benefits the most from increased interactions, indicating that it effectively learns and adapts to new information. The "Original LLM" appears to be less sensitive to the number of interactions, maintaining a relatively stable performance. The "Random" baseline provides a benchmark for comparison, highlighting the performance gain achieved by the other LLM configurations. The "Oracle LLM" and "Bayesian Assistant" show moderate improvements with interactions, suggesting they also benefit from learning but to a lesser extent than the "Bayesian LLM". The differences in performance across the "Gemma", "Llama", and "Qwen" charts indicate that the effectiveness of these LLM configurations may vary depending on the base model.
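To make the comparison concrete, the endpoint readings listed in the Detailed Analysis can be turned into per-configuration gains. The following is a minimal sketch only: the numbers are the approximate values read off the chart in this description (not the underlying experimental data), and the names `READINGS`, `gain`, and `largest_gain` are hypothetical.

```python
# Approximate (start, end) accuracy in percent at 0 and 4 interactions,
# copied from the readings in this description; not the underlying data.
READINGS = {
    "Gemma": {
        "Original LLM": (62, 59),
        "Oracle LLM": (32, 55),
        "Bayesian LLM": (22, 60),
        "Bayesian Assistant": (32, 54),
    },
    "Llama": {
        "Original LLM": (59, 62),
        "Oracle LLM": (30, 55),
        "Bayesian LLM": (22, 65),
        "Bayesian Assistant": (30, 55),
    },
    "Qwen": {
        "Original LLM": (56, 51),
        "Oracle LLM": (30, 52),
        "Bayesian LLM": (28, 58),
        "Bayesian Assistant": (35, 52),
    },
}


def gain(start_end):
    """Change in accuracy (percentage points) from 0 to 4 interactions."""
    start, end = start_end
    return end - start


def largest_gain(panel):
    """Name of the configuration with the largest gain in one panel."""
    series = READINGS[panel]
    return max(series, key=lambda name: gain(series[name]))


for panel in READINGS:
    best = largest_gain(panel)
    print(panel, best, gain(READINGS[panel][best]))
```

With these readings the "Bayesian LLM" has the largest gain in every panel, while the "Original LLM" changes by only a few points in either direction.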

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Chart: LLM Accuracy vs. Interactions

### Overview

The image presents three line charts, each displaying the accuracy of different Large Language Models (LLMs) – Gemma, Llama, and Qwen – as a function of the number of interactions. Each chart compares the performance of "Original LLM", "Oracle LLM", "Bayesian LLM", "Bayesian Assistant", and a "Random" baseline. Accuracy is measured in percentage (%).

### Components/Axes

* **X-axis:** "# Interactions" ranging from 0 to 4.

* **Y-axis:** "Accuracy (%)" ranging from 0 to 100.

* **Legend:** Located at the top-right of each chart, containing the following labels and corresponding colors:

    * Original LLM (Blue)

    * Oracle LLM (Orange)

    * Bayesian LLM (Purple)

    * Bayesian Assistant (Light Orange/Peach)

    * Random (Gray dashed line)

* **Chart Titles:** Gemma, Llama, and Qwen, positioned above each respective chart.

### Detailed Analysis or Content Details

**Gemma Chart:**

* **Original LLM (Blue):** Starts at approximately 58%, dips to around 54% at 1 interaction, then rises to approximately 60% at 2 interactions, and remains relatively stable around 58-60% for the remaining interactions.

* **Oracle LLM (Orange):** Starts at approximately 28%, increases steadily to around 52% at 4 interactions.

* **Bayesian LLM (Purple):** Starts at approximately 32%, increases to around 48% at 2 interactions, and plateaus around 50-52% for the remaining interactions.

* **Bayesian Assistant (Light Orange/Peach):** Starts at approximately 28%, increases to around 45% at 2 interactions, and plateaus around 45-48% for the remaining interactions.

* **Random (Gray dashed line):** Maintains a constant value of approximately 30% across all interactions.

**Llama Chart:**

* **Original LLM (Blue):** Starts at approximately 58%, dips to around 54% at 1 interaction, then rises to approximately 60% at 2 interactions, and remains relatively stable around 58-60% for the remaining interactions.

* **Oracle LLM (Orange):** Starts at approximately 28%, increases steadily to around 52% at 4 interactions.

* **Bayesian LLM (Purple):** Starts at approximately 32%, increases to around 48% at 2 interactions, and plateaus around 50-52% for the remaining interactions.

* **Bayesian Assistant (Light Orange/Peach):** Starts at approximately 28%, increases to around 45% at 2 interactions, and plateaus around 45-48% for the remaining interactions.

* **Random (Gray dashed line):** Maintains a constant value of approximately 30% across all interactions.

**Qwen Chart:**

* **Original LLM (Blue):** Starts at approximately 58%, dips to around 54% at 1 interaction, then rises to approximately 60% at 2 interactions, and remains relatively stable around 58-60% for the remaining interactions.

* **Oracle LLM (Orange):** Starts at approximately 28%, increases steadily to around 52% at 4 interactions.

* **Bayesian LLM (Purple):** Starts at approximately 32%, increases to around 48% at 2 interactions, and plateaus around 50-52% for the remaining interactions.

* **Bayesian Assistant (Light Orange/Peach):** Starts at approximately 28%, increases to around 45% at 2 interactions, and plateaus around 45-48% for the remaining interactions.

* **Random (Gray dashed line):** Maintains a constant value of approximately 30% across all interactions.

All three charts exhibit identical trends and approximate values for each LLM type.

### Key Observations

* The "Original LLM" consistently demonstrates the highest accuracy across all three models (Gemma, Llama, and Qwen), remaining above the "Random" baseline.

* The "Oracle LLM" shows a consistent increase in accuracy with more interactions, starting below the "Random" baseline and eventually surpassing it.

* Both "Bayesian LLM" and "Bayesian Assistant" show improvement with interactions, but their accuracy plateaus after 2 interactions.

* The "Random" baseline remains constant, providing a benchmark for performance.

* The three charts are nearly identical, suggesting the observed trends are consistent across the different LLMs.

### Interpretation

The data suggests that the "Original LLM" configuration performs best across all three models, indicating its inherent capabilities. The "Oracle LLM" benefits from increased interactions, suggesting it learns and improves with more data or feedback. The "Bayesian" approaches show initial improvement but reach a plateau, potentially indicating limitations in their learning mechanisms or the need for more complex models. The consistent performance of the "Random" baseline highlights the value of the LLMs in exceeding random chance. The near-identical trends across Gemma, Llama, and Qwen suggest a common underlying pattern in how these LLMs respond to interactions and learning strategies. The initial dip in accuracy for the "Original LLM" at 1 interaction could be due to a temporary disruption or adjustment period before the model stabilizes. By 4 interactions every configuration sits above the random baseline, although the "Oracle LLM" and "Bayesian Assistant" start slightly below it, which indicates that the interactive configurations improve with more interactions, at different rates and to different extents.
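The comparison against the random baseline can be checked directly against the readings in this description. The sketch below is illustrative only: the values are the approximate chart readings given in the Detailed Analysis (the same for all three panels), ranges such as 58-60% are replaced by their midpoint as an assumption, and the names `READINGS` and `below_baseline` are hypothetical.

```python
RANDOM_BASELINE = 30  # percent, as read in this description

# (accuracy at 0 interactions, accuracy at 4 interactions), in percent.
# Where the description gives a range (e.g. 58-60), the midpoint is used;
# that choice is an assumption made for this sketch.
READINGS = {
    "Original LLM": (58, 59),
    "Oracle LLM": (28, 52),
    "Bayesian LLM": (32, 51),
    "Bayesian Assistant": (28, 46.5),
}


def below_baseline(index):
    """Configurations below the random baseline at the start (0) or end (1)."""
    return [
        name
        for name, values in READINGS.items()
        if values[index] < RANDOM_BASELINE
    ]


print("Below baseline at 0 interactions:", below_baseline(0))
print("Below baseline at 4 interactions:", below_baseline(1))
```

On these readings, two configurations start slightly below the baseline and all four are above it by 4 interactions.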

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Multi-Panel Line Chart: LLM Accuracy vs. Number of Interactions

### Overview

The image displays a set of three line charts arranged horizontally, comparing the performance of different Large Language Model (LLM) configurations and a baseline across three distinct base models: **Gemma**, **Llama**, and **Qwen**. The charts plot "Accuracy (%)" against the "# Interactions" (from 0 to 4). The primary comparison is between an "Original LLM," an "Oracle LLM," a "Bayesian LLM," a "Bayesian Assistant," and a "Random" baseline.

### Components/Axes

* **Legend:** Positioned at the top center of the entire figure. It defines five data series:

    * `Original LLM`: Solid blue line with circular markers.

    * `Oracle LLM`: Solid light orange line with circular markers.

    * `Bayesian LLM`: Solid dark orange line with circular markers.

    * `Bayesian Assistant`: Dashed beige line with circular markers.

    * `Random`: Dashed gray line (no markers).

* **Subplot Titles:** Each of the three charts has a title centered above it: "Gemma" (left), "Llama" (center), "Qwen" (right).

* **Y-Axis (Common to all):** Labeled "Accuracy (%)". The scale runs from 0 to 100, with major tick marks at 0, 20, 40, 60, 80, and 100.

* **X-Axis (Common to all):** Labeled "# Interactions". The scale shows integer values from 0 to 4.

### Detailed Analysis

#### **Subplot 1: Gemma**

* **Original LLM (Blue):** Starts at ~62% accuracy at 0 interactions. The line is nearly flat, showing a very slight downward trend, ending at ~61% at 4 interactions.

* **Oracle LLM (Light Orange):** Starts at ~33% at 0 interactions. Shows a steady, moderate upward trend, reaching ~51% at 4 interactions.

* **Bayesian LLM (Dark Orange):** Starts the lowest at ~22% at 0 interactions. Exhibits the steepest upward slope, crossing the Oracle line between 1 and 2 interactions, and ends as the highest performer at ~62% at 4 interactions.

* **Bayesian Assistant (Beige, Dashed):** Starts at ~28% at 0 interactions. Follows a similar upward trajectory to the Bayesian LLM but remains slightly below it, ending at ~56% at 4 interactions.

* **Random (Gray, Dashed):** A flat horizontal line at approximately 33% accuracy across all interaction counts.

#### **Subplot 2: Llama**

* **Original LLM (Blue):** Starts at ~60% at 0 interactions. Shows a slight dip at 1 interaction (~57%) before recovering and stabilizing around ~59% from 2-4 interactions.

* **Oracle LLM (Light Orange):** Starts at ~33% at 0 interactions. Increases steadily to ~51% at 4 interactions.

* **Bayesian LLM (Dark Orange):** Starts at ~24% at 0 interactions. Rises sharply, surpassing the Oracle line after 1 interaction, and ends at ~61% at 4 interactions.

* **Bayesian Assistant (Beige, Dashed):** Starts at ~29% at 0 interactions. Increases steadily, tracking just below the Bayesian LLM, and ends at ~57% at 4 interactions.

* **Random (Gray, Dashed):** Flat line at ~33%.

#### **Subplot 3: Qwen**

* **Original LLM (Blue):** Starts at ~56% at 0 interactions. Shows a more pronounced decline than the other models, dropping to ~51% at 1 interaction and ending at ~50% at 4 interactions.

* **Oracle LLM (Light Orange):** Starts at ~34% at 0 interactions. Increases gradually to ~47% at 4 interactions.

* **Bayesian LLM (Dark Orange):** Starts at ~26% at 0 interactions. Rises steeply, crossing the Original LLM line between 1 and 2 interactions, and ends at ~58% at 4 interactions.

* **Bayesian Assistant (Beige, Dashed):** Starts at ~30% at 0 interactions. Follows an upward trend, ending at ~52% at 4 interactions.

* **Random (Gray, Dashed):** Flat line at ~33%.

### Key Observations

1. **Consistent Hierarchy at Start:** For all three base models (Gemma, Llama, Qwen), the performance order at 0 interactions is identical: Original LLM > Random ≈ Oracle LLM ≈ Bayesian Assistant > Bayesian LLM.

2. **Bayesian Methods Improve with Interactions:** Both the "Bayesian LLM" and "Bayesian Assistant" show strong, positive slopes, indicating significant accuracy gains with more interactions.

3. **Crossover Point:** The "Bayesian LLM" consistently starts as the worst performer but surpasses the "Oracle LLM" after 1-2 interactions and eventually surpasses the "Original LLM" for Gemma and Qwen, and nearly matches it for Llama.

4. **Original LLM Stability/Decline:** The "Original LLM" shows minimal improvement or a slight decline with more interactions, suggesting it does not benefit from the iterative process in this setup.

5. **Oracle as a Mid-Tier Benchmark:** The "Oracle LLM" provides a consistent, moderate improvement over the random baseline but is outperformed by the Bayesian methods after a few interactions.

6. **Random Baseline:** The flat "Random" line at ~33% suggests a 3-class classification problem where random guessing yields one-third accuracy.
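The reasoning behind the last observation is simple arithmetic: uniform random guessing among k equally likely options is correct with probability 1/k. The following minimal sketch, with hypothetical function names, inverts that relation to recover the option count from a flat baseline; it assumes the baseline really is uniform guessing, which the chart alone does not confirm.

```python
def chance_accuracy(num_options: int) -> float:
    """Expected accuracy (percent) of uniform random guessing."""
    if num_options < 1:
        raise ValueError("num_options must be at least 1")
    return 100.0 / num_options


def infer_num_options(baseline_pct: float) -> int:
    """Nearest option count whose chance level matches a flat baseline."""
    if baseline_pct <= 0:
        raise ValueError("baseline_pct must be positive")
    return round(100.0 / baseline_pct)


# A flat line at roughly 33% is consistent with three options.
print(infer_num_options(33))
print(round(chance_accuracy(3), 1))
```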

### Interpretation

This data demonstrates the effectiveness of a **Bayesian iterative refinement approach** for improving LLM accuracy on a given task. The key insight is that while the base ("Original") LLM starts with high accuracy, it cannot improve further. In contrast, the Bayesian methods, which likely incorporate feedback or uncertainty from each interaction, start poorly but learn rapidly.

The "Oracle LLM" likely represents an idealized upper bound for a non-Bayesian iterative method, showing that some improvement is possible. However, the Bayesian approach's ability to surpass both the Oracle and the Original LLM after a few interactions highlights its superior efficiency in leveraging iterative feedback. The consistency of this pattern across three different base models (Gemma, Llama, Qwen) suggests the finding is robust and not model-specific. The "Bayesian Assistant" (dashed beige) performing slightly worse than the full "Bayesian LLM" may indicate it uses a less comprehensive update mechanism. The charts argue strongly for integrating Bayesian or similar uncertainty-aware, iterative frameworks when deploying LLMs in interactive settings where multiple rounds of refinement are possible.
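A rough way to reason about where one curve overtakes another is to connect each series' two endpoint readings with a straight line and solve for the intersection. The sketch below does this for the Gemma panel; it is a crude approximation under a stated assumption (linear change between 0 and 4 interactions), the inputs are the approximate readings from this description, and `crossover` is a hypothetical helper.

```python
from typing import Optional, Tuple

LAST_INTERACTION = 4


def crossover(a: Tuple[float, float], b: Tuple[float, float]) -> Optional[float]:
    """Interaction count where straight line `a` meets straight line `b`.

    Each argument is (accuracy at 0 interactions, accuracy at 4 interactions).
    Returns None if the lines are parallel or meet outside the 0-4 range.
    """
    slope_a = (a[1] - a[0]) / LAST_INTERACTION
    slope_b = (b[1] - b[0]) / LAST_INTERACTION
    if slope_a == slope_b:
        return None
    t = (b[0] - a[0]) / (slope_a - slope_b)
    return t if 0 <= t <= LAST_INTERACTION else None


# Gemma endpoint readings from this description (approximate, in percent).
bayesian_llm = (22, 62)
oracle_llm = (33, 51)
original_llm = (62, 61)

print(crossover(bayesian_llm, oracle_llm))    # Bayesian LLM vs Oracle LLM
print(crossover(bayesian_llm, original_llm))  # Bayesian LLM vs Original LLM
```

Under the straight-line assumption the Bayesian LLM meets the Oracle LLM at about 2 interactions and the Original LLM at about 3.9. The description places the first crossing between 1 and 2 interactions, which is consistent with the real curve rising faster early on than a straight line would, so the linear estimate is best read as a late approximation.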

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Chart: Model Accuracy vs. Number of Interactions

### Overview

The image displays three subplots comparing the accuracy trends of four LLM configurations (Original LLM, Oracle LLM, Bayesian LLM, Bayesian Assistant) and a Random baseline across three base models (Gemma, Llama, Qwen). Accuracy (%) is plotted against the number of interactions (0–4), with a distinct color-coded line for each series.

---

### Components/Axes

- **X-axis**: Number of Interactions (0–4, integer steps)

- **Y-axis**: Accuracy (%) (0–100, linear scale)

- **Legend**: Located at the top, with color-coded labels:

    - Blue: Original LLM

    - Orange: Oracle LLM

    - Brown: Bayesian LLM

    - Gray: Bayesian Assistant

    - Dashed Gray: Random

- **Subplots**: Three separate plots labeled "Gemma," "Llama," and "Qwen" (left to right).

---

### Detailed Analysis

#### Gemma Subplot

- **Original LLM (Blue)**: Starts at ~60%, remains stable (~60–62%) across all interactions.

- **Oracle LLM (Orange)**: Begins at ~30%, rises steadily to ~60% by interaction 4.

- **Bayesian LLM (Brown)**: Starts at ~20%, increases sharply to ~60% by interaction 4.

- **Bayesian Assistant (Gray)**: Begins at ~30%, rises to ~55% by interaction 4.

- **Random (Dashed Gray)**: Flat at ~30% across all interactions.

#### Llama Subplot

- **Original LLM (Blue)**: Starts at ~60%, dips to ~55% at interaction 1, then stabilizes (~55–60%).

- **Oracle LLM (Orange)**: Begins at ~30%, rises to ~50% by interaction 4.

- **Bayesian LLM (Brown)**: Starts at ~20%, jumps to ~50% at interaction 2, remains stable.

- **Bayesian Assistant (Gray)**: Begins at ~30%, peaks at ~55% at interaction 3, then drops slightly.

- **Random (Dashed Gray)**: Flat at ~30%.

#### Qwen Subplot

- **Original LLM (Blue)**: Starts at ~60%, drops to ~50% at interaction 1, then stabilizes (~50–55%).

- **Oracle LLM (Orange)**: Begins at ~30%, rises to ~45% by interaction 4.

- **Bayesian LLM (Brown)**: Starts at ~20%, jumps to ~55% at interaction 2, remains stable.

- **Bayesian Assistant (Gray)**: Begins at ~30%, peaks at ~55% at interaction 4.

- **Random (Dashed Gray)**: Flat at ~30%.

---

### Key Observations

1. **Bayesian Models Outperform**: Bayesian LLM and Bayesian Assistant consistently show the steepest improvement across all three panels, surpassing other models by interaction 2–4.

2. **Oracle LLM Improves Gradually**: Oracle LLM demonstrates steady gains but lags behind Bayesian models.

3. **Original LLM Stability**: Original LLM maintains relatively stable performance, with minor fluctuations.

4. **Random Baseline**: The Random baseline is a flat reference line (~30%) in all three panels. It is not a strict lower bound, since the Bayesian LLM starts below it (~20%).

5. **Variability Across Base Models**:

    - Gemma shows the most pronounced improvement for Bayesian models.

    - Qwen exhibits the largest drop in Original LLM performance at interaction 1.

---

### Interpretation

The data suggests that **Bayesian methods (LLM and Assistant)** are highly effective at improving accuracy with increased interactions, outperforming non-Bayesian models. The Oracle LLM also benefits from interactions but to a lesser extent. The Original LLM’s performance is less sensitive to interaction count, while the Random baseline is static. Notably, the Bayesian Assistant’s peak at interaction 3 in Llama and interaction 4 in Qwen indicates a delayed but significant improvement. These trends highlight the importance of interaction count in model refinement, particularly for Bayesian approaches.
