## Diagram: Taxonomy of Interpretability Approaches

### Overview

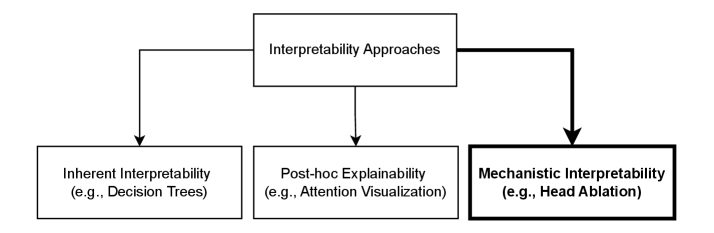

The image is a hierarchical diagram that classifies "Interpretability Approaches" for machine learning and AI systems. It is organized top-down: one root category branches into three sub-categories, each labeled with a representative example.

### Components/Axes

The diagram consists of four rectangular boxes connected by directional arrows.

1. **Top-Level Box (Header):**

   * **Label:** "Interpretability Approaches"
   * **Position:** Centered at the top of the diagram.
   * **Function:** Serves as the root or main category from which all other elements derive.

2. **Sub-Category Boxes (Main Content):** Three boxes are arranged horizontally below the header.

   * **Left Box:**
     * **Label:** "Inherent Interpretability"
     * **Example Text:** "(e.g., Decision Trees)"
   * **Center Box:**
     * **Label:** "Post-hoc Explainability"
     * **Example Text:** "(e.g., Attention Visualization)"
   * **Right Box:**
     * **Label:** "Mechanistic Interpretability"
     * **Example Text:** "(e.g., Head Ablation)"
     * **Visual Distinction:** This box has a significantly thicker black border compared to the others.

3. **Flow/Relationships:**

   * Three solid black arrows originate from the bottom edge of the top "Interpretability Approaches" box.
   * Each arrow points directly downward to one of the three sub-category boxes, indicating a direct "is-a" or "includes" relationship. The flow is strictly top-down and non-recursive.
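
The structure described above can be written down as a small tree, which makes the "one root, three leaves, one emphasized box" layout explicit. This is only a sketch: the `Node` class, its field names, and the `render` helper are illustrative assumptions and are not part of the diagram.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Node:
    """One box in the diagram (hypothetical representation)."""
    label: str
    example: Optional[str] = None   # the "(e.g., ...)" text, if any
    emphasized: bool = False        # True for the thick-bordered box
    children: List["Node"] = field(default_factory=list)


# Labels and examples are copied from the diagram; the layout is the same
# top-down tree: one root with three leaf children.
taxonomy = Node(
    label="Interpretability Approaches",
    children=[
        Node("Inherent Interpretability", example="Decision Trees"),
        Node("Post-hoc Explainability", example="Attention Visualization"),
        Node("Mechanistic Interpretability", example="Head Ablation",
             emphasized=True),
    ],
)


def render(node: Node, depth: int = 0) -> List[str]:
    """Return one indented text line per box, walking the tree top-down."""
    suffix = f" (e.g., {node.example})" if node.example else ""
    marker = " [thick border]" if node.emphasized else ""
    lines = ["  " * depth + node.label + suffix + marker]
    for child in node.children:
        lines.extend(render(child, depth + 1))
    return lines


print("\n".join(render(taxonomy)))
```

Because every child has an empty `children` list, the tree has no cross-connections or cycles, matching the arrows in the diagram.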

### Detailed Analysis

The diagram defines a clear taxonomy:

* **Inherent Interpretability:** Refers to models that are transparent by design. The example given is "Decision Trees," whose logic can be directly inspected.

* **Post-hoc Explainability:** Refers to methods applied to an already-trained model, *after* it has made a prediction, to explain that prediction. The example is "Attention Visualization," commonly used with transformer models to show which parts of the input received the most attention weight.

* **Mechanistic Interpretability:** Refers to reverse-engineering the internal mechanisms of a model to understand *how* it computes its outputs. The example is "Head Ablation," a technique in which specific components (such as attention heads) are disabled and the resulting change in model behavior is observed. The thick border visually emphasizes this box. It may mark the category as a focal point, as a more advanced approach, or as the specific topic of the document from which the image was taken.
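
To make the "Head Ablation" example concrete, here is a minimal sketch of zero-ablating one attention head and measuring how far the output moves. It is a toy, not a real transformer: the function names (`head_output`, `multi_head`), the hand-picked vectors, and the simplification that heads share keys and values and are combined by summation are all assumptions made for illustration.

```python
import math
from typing import List, Optional, Sequence

Vector = List[float]


def softmax(scores: Sequence[float]) -> Vector:
    """Numerically stable softmax over a short list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def head_output(query: Vector, keys: List[Vector], values: List[Vector]) -> Vector:
    """Scaled dot-product attention for one query over the sequence."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]


def multi_head(queries: List[Vector], keys: List[Vector], values: List[Vector],
               ablate: Optional[int] = None) -> Vector:
    """Sum per-head outputs; skip head `ablate` (zero ablation) if given."""
    total = [0.0] * len(values[0])
    for h, query in enumerate(queries):
        if h == ablate:
            continue  # the ablated head contributes nothing to the output
        out = head_output(query, keys, values)
        total = [t + o for t, o in zip(total, out)]
    return total


# Toy inputs (assumed for illustration): three positions, two heads.
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
queries = [[4.0, 0.0], [0.0, 4.0]]  # one query vector per head

baseline = multi_head(queries, keys, values)
for h in range(len(queries)):
    ablated = multi_head(queries, keys, values, ablate=h)
    shift = math.dist(baseline, ablated)  # Euclidean distance between outputs
    print(f"head {h}: output shift after ablation = {shift:.3f}")
```

A large shift suggests the ablated head matters for this input. In practice the comparison is usually made on a task metric such as loss or accuracy rather than raw output distance, and zeroing is only one of several ablation choices.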

### Key Observations

1. **Visual Emphasis:** The "Mechanistic Interpretability" box is the only element with a bold border. This draws immediate attention and may indicate that it is the most relevant category in the current context.

2. **Structural Simplicity:** The diagram uses a simple, clean tree structure with no cross-connections or cycles, presenting the categories as distinct and non-overlapping.

3. **Example-Driven:** Each category is identified not just by its name but by a representative example (introduced with "e.g."), which aids immediate understanding.

### Interpretation

This diagram provides a foundational framework for understanding the field of AI interpretability. It categorizes approaches based on their fundamental philosophy:

* **Inherent Interpretability** prioritizes using simple, transparent models from the outset.

* **Post-hoc Explainability** accepts complex "black-box" models and seeks to explain their decisions after the fact.

* **Mechanistic Interpretability** aims for a deeper, causal understanding of the model's internal computations, moving beyond correlation to mechanism.

The relationship is hierarchical: "Interpretability Approaches" is the broad field, which is subdivided into these three primary methodologies. The emphasis on **Mechanistic Interpretability** suggests that the source material likely argues for or focuses on this approach as particularly valuable for a robust, scientific understanding of model behavior, as opposed to explanations that are merely plausible (a common critique of some post-hoc methods). The diagram itself draws the three categories as separate siblings. It does not show how they relate to one another, although all three serve the larger goal of making AI systems understandable.