## Diagram: Interpretability Approaches

### Overview

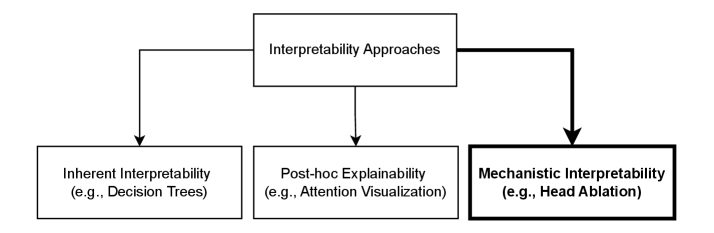

The image is a diagram illustrating different approaches to interpretability. It shows a hierarchy with "Interpretability Approaches" at the top, branching down to three categories: "Inherent Interpretability," "Post-hoc Explainability," and "Mechanistic Interpretability." Examples are provided for each category.

### Components/Axes

* **Top Box:** "Interpretability Approaches"

* **Left Box:** "Inherent Interpretability (e.g., Decision Trees)"

* **Middle Box:** "Post-hoc Explainability (e.g., Attention Visualization)"

* **Right Box:** "Mechanistic Interpretability (e.g., Head Ablation)"

* **Arrows:** Indicate the flow from the top box to the three categories below. The arrow leading to "Mechanistic Interpretability" is thicker than the other two. The box around "Mechanistic Interpretability" is also thicker.

### Detailed Analysis

* **Interpretability Approaches:** This is the main category, positioned at the top of the diagram.

* **Inherent Interpretability:** Located on the left, this approach is exemplified by "Decision Trees."

* **Post-hoc Explainability:** Situated in the middle, this approach is exemplified by "Attention Visualization."

* **Mechanistic Interpretability:** Located on the right, this approach is exemplified by "Head Ablation." The box and arrow leading to this category are emphasized with a thicker line.

### Key Observations

* The diagram presents a classification of interpretability approaches.

* The emphasis on "Mechanistic Interpretability" suggests its importance or distinctiveness compared to the other two approaches.

### Interpretation

The diagram illustrates a categorization of methods used to understand and interpret machine learning models. "Interpretability Approaches" is the overarching concept, which is then divided into three distinct categories: "Inherent Interpretability," "Post-hoc Explainability," and "Mechanistic Interpretability."

* **Inherent Interpretability** refers to models that are inherently easy to understand due to their structure (e.g., Decision Trees).

* **Post-hoc Explainability** involves techniques applied after a model is trained to explain its behavior (e.g., Attention Visualization).

* **Mechanistic Interpretability** (emphasized in the diagram) likely represents a more in-depth approach, possibly involving understanding the internal mechanisms of the model (e.g., Head Ablation).

The emphasis on "Mechanistic Interpretability" suggests that it may be a more recent or particularly important area of research in the field of interpretability. The diagram highlights the different ways in which we can approach the challenge of understanding how and why machine learning models make their decisions.