\n

## Diagram: Interpretability Approaches

### Overview

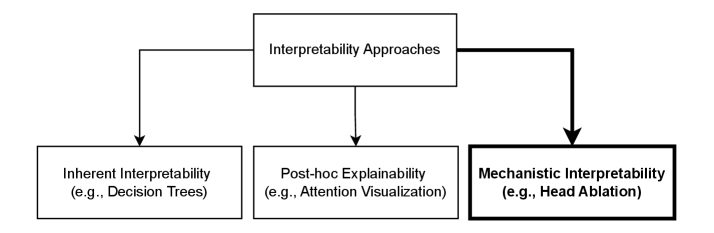

The image is a diagram illustrating three main approaches to interpretability in machine learning or artificial intelligence. It depicts a hierarchical structure with "Interpretability Approaches" as the root node, branching out into "Inherent Interpretability", "Post-hoc Explainability", and "Mechanistic Interpretability". Each of these branches includes an example in parentheses.

### Components/Axes

The diagram consists of three rectangular boxes connected by directed arrows.

* **Top Box:** "Interpretability Approaches" - positioned at the top-center of the image.

* **Left Box:** "Inherent Interpretability (e.g., Decision Trees)" - positioned at the bottom-left.

* **Center Box:** "Post-hoc Explainability (e.g., Attention Visualization)" - positioned at the bottom-center.

* **Right Box:** "Mechanistic Interpretability (e.g., Head Ablation)" - positioned at the bottom-right.

Arrows originate from the top box and point downwards towards each of the three bottom boxes, indicating a categorization or decomposition.

### Detailed Analysis or Content Details

The diagram presents a categorization of interpretability methods.

* **Interpretability Approaches:** This is the overarching category.

* **Inherent Interpretability:** This approach refers to models that are interpretable by design. The example given is Decision Trees.

* **Post-hoc Explainability:** This approach involves explaining the decisions of a model *after* it has been trained. The example given is Attention Visualization.

* **Mechanistic Interpretability:** This approach aims to understand the internal workings of a model, often by analyzing its components. The example given is Head Ablation.

### Key Observations

The diagram highlights that interpretability can be achieved through different strategies, ranging from building inherently interpretable models to explaining or dissecting existing complex models. The examples provided suggest a spectrum of complexity and effort involved in each approach.

### Interpretation

The diagram suggests a framework for understanding how to approach the problem of model interpretability. It implies that there isn't a single "best" approach, but rather a choice to be made based on the specific model, application, and desired level of understanding.

* **Inherent Interpretability** is the most straightforward, but may come at the cost of model performance.

* **Post-hoc Explainability** offers a compromise, allowing for the use of complex models while still providing some insight into their behavior.

* **Mechanistic Interpretability** is the most challenging, but potentially the most rewarding, as it aims to reveal the fundamental principles governing the model's decisions.

The diagram doesn't provide quantitative data or specific performance metrics. It is a conceptual illustration of different interpretability strategies. The choice of examples (Decision Trees, Attention Visualization, Head Ablation) suggests a focus on machine learning models, particularly those used in natural language processing or computer vision.