## Flowchart: Interpretability Approaches

### Overview

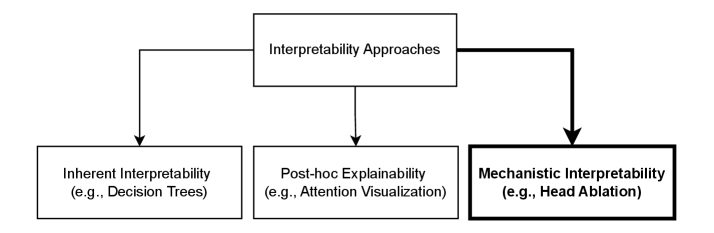

The diagram shows a two-level hierarchy of interpretability approaches in machine learning: a central node branches into three categories, and each category is labeled with one example technique.

### Components/Axes

- **Central Node**: "Interpretability Approaches" (bold, centered at the top).

- **Three Branches**:

  1. **Left Branch**: "Inherent Interpretability (e.g., Decision Trees)".

  2. **Middle Branch**: "Post-hoc Explainability (e.g., Attention Visualization)".

  3. **Right Branch**: "Mechanistic Interpretability (e.g., Head Ablation)".

### Detailed Analysis

- **Inherent Interpretability**:

  - Label: "Inherent Interpretability".

  - Example: "Decision Trees" (italicized, in parentheses).

- **Post-hoc Explainability**:

  - Label: "Post-hoc Explainability".

  - Example: "Attention Visualization" (italicized, in parentheses).

- **Mechanistic Interpretability**:

  - Label: "Mechanistic Interpretability".

  - Example: "Head Ablation" (italicized, in parentheses).

### Key Observations

- The diagram sorts interpretability methods into three separate groups, each drawn as its own branch. It does not state whether the groups are mutually exclusive.

- Each category includes a concrete example (e.g., "Decision Trees" for Inherent Interpretability).

- Arrows connect the central node to each of the three categories, marking them as subtypes of the overarching concept.

### Interpretation

This flowchart highlights the taxonomy of interpretability approaches, distinguishing between:

1. **Inherent Interpretability**: Models that are interpretable by design (e.g., Decision Trees).

2. **Post-hoc Explainability**: Techniques applied after model training to explain outputs (e.g., Attention Visualization).

3. **Mechanistic Interpretability**: Methods focused on understanding internal model mechanisms (e.g., Head Ablation).
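To make the third category more concrete, here is a minimal sketch of head ablation on a toy attention layer. It is not taken from the diagram: the layer, its random weights, and every function name (`make_heads`, `attention_layer`, `ablation_effects`) are hypothetical and chosen only for illustration. The sketch assumes zero ablation, meaning a head's contribution is simply dropped, and measures how far the layer output moves as a result.

```python
import math
import random


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def softmax(row):
    """Numerically stable softmax over one row of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def random_matrix(rng, rows, cols):
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]


def make_heads(rng, n_heads, d_model, d_head):
    """Hypothetical toy weights: one (Wq, Wk, Wv, Wo) tuple per head."""
    return [
        (
            random_matrix(rng, d_model, d_head),
            random_matrix(rng, d_model, d_head),
            random_matrix(rng, d_model, d_head),
            random_matrix(rng, d_head, d_model),
        )
        for _ in range(n_heads)
    ]


def head_output(x, head):
    """Scaled dot-product attention for one head, projected back to d_model."""
    wq, wk, wv, wo = head
    q, k, v = matmul(x, wq), matmul(x, wk), matmul(x, wv)
    scale = math.sqrt(len(wq[0]))
    k_t = [list(col) for col in zip(*k)]
    weights = [softmax([s / scale for s in row]) for row in matmul(q, k_t)]
    return matmul(matmul(weights, v), wo)


def attention_layer(x, heads, ablated=()):
    """Sum per-head contributions, skipping (zero-ablating) heads in `ablated`."""
    out = [[0.0] * len(x[0]) for _ in x]
    for i, head in enumerate(heads):
        if i in ablated:
            continue
        contrib = head_output(x, head)
        out = [[o + c for o, c in zip(o_row, c_row)] for o_row, c_row in zip(out, contrib)]
    return out


def l2_norm(matrix):
    return math.sqrt(sum(v * v for row in matrix for v in row))


def ablation_effects(x, heads):
    """L2 change in the layer output when each head is removed in turn."""
    baseline = attention_layer(x, heads)
    effects = []
    for i in range(len(heads)):
        without = attention_layer(x, heads, ablated={i})
        diff = [[b - w for b, w in zip(b_row, w_row)] for b_row, w_row in zip(baseline, without)]
        effects.append(l2_norm(diff))
    return effects


rng = random.Random(0)  # fixed seed so the toy example is repeatable
x = random_matrix(rng, 5, 8)  # 5 tokens, model width 8
heads = make_heads(rng, n_heads=3, d_model=8, d_head=4)
effects = ablation_effects(x, heads)
for i, effect in enumerate(effects):
    print(f"head {i}: output change {effect:.4f}")
```

A larger output change suggests the layer relies more heavily on that head for this input. In practice the effect is usually measured on a task metric over many inputs rather than on raw output distance for a single one, and alternatives such as mean ablation replace the head's output with an average instead of zero.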

The structure moves from one broad concept to three categories and then to one specific technique per category, which illustrates how varied the strategies for model transparency are. The examples tie each abstract category to a recognizable technique, which can help practitioners choose a method that fits their interpretability needs.