## Line Charts: Average Probability by Layer for Llama and Gemma Models

### Overview

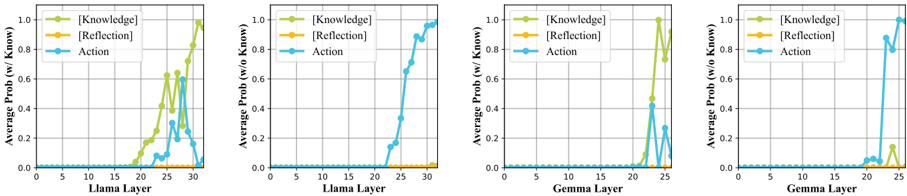

The image shows four line charts arranged side by side. They compare the average probability of three categories (`[Knowledge]`, `[Reflection]`, and `Action`) across the layers of two language models, Llama and Gemma, under two conditions: with knowledge (`w/ Know.`) and without knowledge (`w/o Know.`). Together, the charts track how the probability assigned to each category changes with layer depth depending on whether external knowledge is provided.

### Components/Axes

* **Chart Layout:** Four separate line charts in a 1x4 horizontal grid.

* **Common Legend:** Present in the top-left corner of each chart.

  * `[Knowledge]`: Green line with circular markers.

  * `[Reflection]`: Orange line with circular markers.

  * `Action`: Blue line with circular markers.

* **X-Axes:**

  * Charts 1 & 2: `Llama Layer`, scale from 0 to 30.

  * Charts 3 & 4: `Gemma Layer`, scale from 0 to 25.

* **Y-Axes:**

  * Charts 1 & 3: `Average Prob (w/ Know.)`, scale from 0.0 to 1.0.

  * Charts 2 & 4: `Average Prob (w/o Know.)`, scale from 0.0 to 1.0.

* **Grid:** Light gray grid lines are present in the background of each plot.
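The figure does not state how the per-layer probabilities on the y-axes were computed. One common technique, assumed here rather than confirmed by the source, is the logit lens: project each layer's hidden state through the model's unembedding matrix, apply a softmax, read off the probability of a category token, and average over examples. The NumPy sketch below is illustrative only. `average_prob_by_layer`, the array layout, and the token id are hypothetical, the data are random stand-ins, and it omits the final normalization layer that a faithful logit lens would apply before unembedding.

```python
import numpy as np


def softmax(logits: np.ndarray) -> np.ndarray:
    """Numerically stable softmax over the last axis."""
    shifted = logits - logits.max(axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)


def average_prob_by_layer(hidden_states: np.ndarray,
                          unembed: np.ndarray,
                          token_id: int) -> np.ndarray:
    """Logit-lens style estimate of one token's average probability per layer.

    Assumption: the charted probabilities come from projecting each layer's
    hidden state onto the vocabulary (logit lens); the source does not say so.
    Simplification: the model's final normalization layer is not applied.

    hidden_states: (num_examples, num_layers, d_model), taken at the
        prediction position (hypothetical layout).
    unembed: (d_model, vocab_size) output embedding matrix.
    token_id: vocabulary index of a category token (hypothetical).
    Returns an array of shape (num_layers,) with values in [0, 1].
    """
    logits = hidden_states @ unembed          # (examples, layers, vocab)
    probs = softmax(logits)                   # per-layer next-token distribution
    return probs[..., token_id].mean(axis=0)  # average over examples


# Usage with random stand-in data (not the figure's data):
rng = np.random.default_rng(0)
hidden = rng.normal(size=(8, 32, 16))   # 8 examples, 32 layers, d_model = 16
unembed = rng.normal(size=(16, 100))    # toy vocabulary of 100 tokens
curve = average_prob_by_layer(hidden, unembed, token_id=3)
print(curve.shape)  # (32,)
```

Averaging over examples is what collapses many per-example distributions into a single curve per category, which is what an `Average Prob` axis implies.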

### Detailed Analysis

**Chart 1: Llama Layer (With Knowledge)**

* **Trend Verification:**

  * `[Knowledge]` (Green): Starts near zero, begins a steep ascent around layer 18, peaks sharply between layers 24-26 (reaching ~0.8-0.9), then declines.

  * `[Reflection]` (Orange): Remains flat and near zero (≈0.0) across all layers.

  * `Action` (Blue): Remains near zero until layer 20, shows a small peak around layer 25 (≈0.3), then returns to near zero.

* **Key Data Points (Approximate):**

  * `[Knowledge]` Peak: ~0.85 at Layer 25.

  * `Action` Peak: ~0.3 at Layer 25.

**Chart 2: Llama Layer (Without Knowledge)**

* **Trend Verification:**

  * `[Knowledge]` (Green): Remains flat and near zero across all layers.

  * `[Reflection]` (Orange): Remains flat and near zero across all layers.

  * `Action` (Blue): Rises sharply and almost linearly from around layer 20, approaching 1.0 by layer 30.

* **Key Data Points (Approximate):**

  * `Action` at Layer 30: ~0.98.

**Chart 3: Gemma Layer (With Knowledge)**

* **Trend Verification:**

  * `[Knowledge]` (Green): Remains near zero until layer 18, spikes to a peak at layer 22 (≈1.0), then drops sharply.

  * `[Reflection]` (Orange): Remains flat and near zero across all layers.

  * `Action` (Blue): Shows a smaller peak (≈0.4) at layer 22, coinciding with the `[Knowledge]` peak, and otherwise remains low.

* **Key Data Points (Approximate):**

  * `[Knowledge]` Peak: ~1.0 at Layer 22.

  * `Action` Peak: ~0.4 at Layer 22.

**Chart 4: Gemma Layer (Without Knowledge)**

* **Trend Verification:**

  * `[Knowledge]` (Green): Remains near zero, with a very small, brief increase around layer 24 (≈0.1).

  * `[Reflection]` (Orange): Remains flat and near zero across all layers.

  * `Action` (Blue): Begins a sharp increase around layer 20, rising to near 1.0 by layer 25.

* **Key Data Points (Approximate):**

  * `Action` at Layer 25: ~0.95.
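The key data points in the four charts are read by eye: the peak for Charts 1 and 3 and the final-layer value for Charts 2 and 4. If the underlying per-layer values were available, the same quantities could be extracted mechanically. A minimal sketch, assuming each curve is a plain list indexed by layer; `summarize_curve` and `CurveSummary` are hypothetical names, and the toy values are invented for illustration, not read from the figure.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class CurveSummary:
    peak_layer: int     # layer index where the curve is highest
    peak_value: float   # probability at that layer
    final_value: float  # probability at the last layer


def summarize_curve(probs: Sequence[float]) -> CurveSummary:
    """Return the peak (layer, value) and the final-layer value of one curve.

    probs[i] is the average probability at layer i, starting from layer 0.
    If several layers tie for the maximum, the earliest one is reported.
    """
    if len(probs) == 0:
        raise ValueError("need at least one layer")
    # max() returns the first maximal element, so ties go to the earliest layer.
    peak_layer = max(range(len(probs)), key=lambda i: probs[i])
    return CurveSummary(peak_layer, float(probs[peak_layer]), float(probs[-1]))


# Toy curve invented for illustration (not the figure's data):
toy = [0.0, 0.02, 0.1, 0.6, 0.85, 0.4, 0.1]
s = summarize_curve(toy)
print(s.peak_layer, s.peak_value, s.final_value)  # 4 0.85 0.1
```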

### Key Observations

1. **Condition-Dependent Dominance:** Which category dominates depends on the condition. `[Knowledge]` reaches a high probability only in the `w/ Know.` condition, while `Action` dominates the final layers in the `w/o Know.` condition.

2. **Layer-Specific Activation:** For both models, the significant probability shifts occur in the later layers (approximately layers 20-30 for Llama, 18-25 for Gemma).

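3. **Model Similarity:** Llama and Gemma exhibit qualitatively similar patterns, though Gemma's transitions appear sharper and occur at slightly lower layer indices. Because the Gemma axis spans fewer layers (0 to 25 versus 0 to 30), this offset is in absolute layer number and need not hold when layers are compared by relative depth.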

4. **Reflection's Role:** The `[Reflection]` category shows negligible average probability across all layers and conditions in this visualization.
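The observations above rest on three simple per-curve questions: which category is highest at a given layer, at which layer a curve first becomes significant, and where that layer sits relative to the model's total depth. A minimal sketch of each, under stated assumptions: the 0.1 onset threshold is an arbitrary choice, the function names are hypothetical, and the toy curves are invented rather than read from the figure. To compare Llama and Gemma by relative depth, `num_layers` would need to be each model's actual layer count, which the figure does not state.

```python
from typing import Dict, Optional, Sequence


def onset_layer(probs: Sequence[float], threshold: float = 0.1) -> Optional[int]:
    """First layer whose probability reaches `threshold`, or None if none does.

    The default threshold of 0.1 is an arbitrary illustrative choice.
    """
    for layer, p in enumerate(probs):
        if p >= threshold:
            return layer
    return None


def relative_depth(layer: int, num_layers: int) -> float:
    """Map a layer index onto [0, 1] so models of different depth are comparable."""
    if num_layers < 2:
        raise ValueError("num_layers must be at least 2")
    return layer / (num_layers - 1)


def dominant_category(curves: Dict[str, Sequence[float]], layer: int) -> str:
    """Name of the category with the highest average probability at `layer`."""
    return max(curves, key=lambda name: curves[name][layer])


# Toy curves invented for illustration; they are not values read from the figure.
curves = {
    "[Knowledge]": [0.0, 0.0, 0.05, 0.7, 0.2],
    "[Reflection]": [0.0, 0.0, 0.0, 0.0, 0.0],
    "Action": [0.0, 0.01, 0.02, 0.3, 0.1],
}
print(onset_layer(curves["[Knowledge]"]))         # 3
print(dominant_category(curves, 3))               # [Knowledge]
print(round(relative_depth(3, num_layers=5), 2))  # 0.75
```

Normalizing by depth is a design choice: it treats "late" as a fraction of the network rather than a fixed layer number, which matters when the two models have different numbers of layers.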

### Interpretation

The figure suggests a possible mechanistic difference in how language models process tasks with and without access to external knowledge.

* **With Knowledge:** The high `[Knowledge]` probability in the later layers suggests the model is actively retrieving or processing the provided knowledge. The subsequent drop may indicate that this information is being integrated or transformed for output generation.

* **Without Knowledge:** `[Knowledge]` receives almost no probability at any layer. Instead, the `Action` probability surges in the final layers, which suggests the model relies on its parametric knowledge to generate an action or response directly.

* **Peircean Reading:** The charts can be read as depicting a sign process. The `[Knowledge]` peak is an *icon* of the input knowledge being represented internally. The `Action` surge is an *index* of the model's final output generation. The stark contrast between conditions suggests that the model's internal "reasoning" pathway is substantially altered by the presence of an external knowledge source. The near-zero `[Reflection]` probability suggests this analysis did not capture a distinct, measurable phase of self-referential processing in the models' average behavior.