## Line Graphs: Average Probability of Metrics Across Llama and Gemma Layers

### Overview

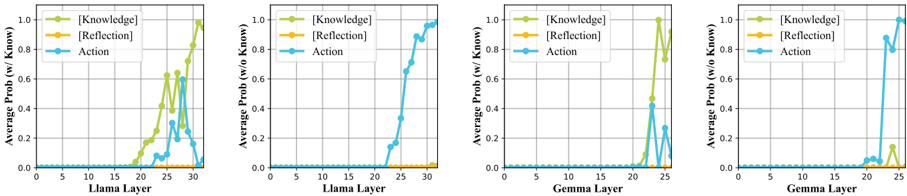

The image contains four line graphs comparing the average probability of three metrics ([Knowledge], [Reflection], and [Action]) across the layers of two language models, Llama and Gemma. Each model has two graphs: one with Knowledge included (`w/ Know`) and one without (`w/o Know`). The graphs show how each metric's average probability varies with layer depth and with the inclusion or exclusion of Knowledge.

---

### Components/Axes

- **X-Axis**:
  - Labeled "Llama Layer" (graphs 1–2) or "Gemma Layer" (graphs 3–4).
  - Scale: 0 to 30 (discrete increments).
- **Y-Axis**:
  - Labeled "Average Prob (w/ Know)" (graphs 1, 3) or "Average Prob (w/o Know)" (graphs 2, 4).
  - Scale: 0.0 to 1.0.
- **Legends**:
  - Top-left corner of each graph.
  - Colors:
    - Green: [Knowledge]
    - Orange: [Reflection]
    - Blue: [Action]
- **Graph Layout**:
  - Two graphs per model (Llama left, Gemma right).
  - Each graph has three lines (one per metric).

---

### Detailed Analysis

#### Llama Model (Graphs 1–2)

1. **Graph 1 (w/ Know)**:
   - **Knowledge** (green): Peaks at ~0.8 at layer 25, then drops to ~0.6 by layer 30.
   - **Action** (blue): Peaks at ~0.6 at layer 20, then declines to ~0.2 by layer 30.
   - **Reflection** (orange): Remains near 0 throughout.
2. **Graph 2 (w/o Know)**:
   - **Action** (blue): Peaks at ~0.8 at layer 25, then drops sharply.
   - **Knowledge** (green) and **Reflection** (orange): Flat near 0.

#### Gemma Model (Graphs 3–4)

1. **Graph 3 (w/ Know)**:
   - **Knowledge** (green): Peaks at ~0.8 at layer 25, then drops to ~0.6.
   - **Action** (blue): Peaks at ~0.6 at layer 20, then declines.
   - **Reflection** (orange): Flat near 0.
2. **Graph 4 (w/o Know)**:
   - **Action** (blue): Peaks at ~0.8 at layer 25, then drops.
   - **Knowledge** (green): Sharp peak at layer 25 (~0.2), then drops.
   - **Reflection** (orange): Flat near 0.

---

### Key Observations

1. **Peak Layering**:
   - In the `w/ Know` graphs, [Knowledge] and [Action] peak at different layers for both models: [Knowledge] at layer 25 and [Action] at layer 20. In the `w/o Know` graphs, [Action] peaks later, at layer 25.
2. **Knowledge Inclusion Impact**:
   - Including Knowledge (`w/ Know`) raises the [Knowledge] peak to ~0.8 and coincides with a lower [Action] peak (~0.6 at layer 20).
   - Excluding Knowledge (`w/o Know`) lets [Action] dominate, with a higher peak (~0.8 at layer 25).
3. **Model Differences**:
   - Llama shows sharper declines post-peak compared to Gemma.
   - Gemma’s [Knowledge] metric retains a small residual peak in `w/o Know` (layer 25, ~0.2), whereas Llama’s stays flat near 0.
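The comparisons in these observations can be checked arithmetically from the approximate readings reported in the Detailed Analysis. The sketch below is a minimal illustration and not part of the original figure: `PEAKS` and `action_shift` are hypothetical names, and the values are the approximate (~) readings from the text rather than exact data.

```python
# Approximate peak readings transcribed from the Detailed Analysis section.
# Keys: (model, condition, metric) -> (peak_layer, peak_probability).
# All names are illustrative; values are the "~" estimates from the text.
PEAKS = {
    ("Llama", "w/ Know", "Knowledge"): (25, 0.8),
    ("Llama", "w/ Know", "Action"): (20, 0.6),
    ("Llama", "w/o Know", "Action"): (25, 0.8),
    ("Gemma", "w/ Know", "Knowledge"): (25, 0.8),
    ("Gemma", "w/ Know", "Action"): (20, 0.6),
    ("Gemma", "w/o Know", "Action"): (25, 0.8),
    ("Gemma", "w/o Know", "Knowledge"): (25, 0.2),
}


def action_shift(model):
    """Return (layer_shift, prob_gain) of the [Action] peak when Knowledge is removed."""
    with_layer, with_prob = PEAKS[(model, "w/ Know", "Action")]
    without_layer, without_prob = PEAKS[(model, "w/o Know", "Action")]
    return without_layer - with_layer, round(without_prob - with_prob, 2)


for model in ("Llama", "Gemma"):
    layers, gain = action_shift(model)
    print(f"{model}: [Action] peak moves {layers:+d} layers, probability {gain:+.1f}")
```

With these readings, both models show the same shift: the [Action] peak moves five layers deeper and gains roughly 0.2 in probability when Knowledge is excluded.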

---

### Interpretation

- **Trade-off Between Metrics**: Including Knowledge enhances the model’s ability to encode factual data ([Knowledge]) but may hinder its capacity for dynamic, action-oriented reasoning ([Action]): the [Action] peak falls from ~0.8 to ~0.6. This suggests a potential architectural conflict between knowledge retention and real-time decision-making.

- **Model-Specific Behavior**: Llama’s steeper post-peak declines imply a more rigid layer hierarchy, while Gemma’s gradual drops suggest more distributed processing.

- **Anomalies**: The small [Knowledge] peak in Gemma’s `w/o Know` graph (~0.2 at layer 25) hints at knowledge leakage even when Knowledge is explicitly excluded, possibly due to shared parameters or cross-layer dependencies.

---

### Spatial Grounding & Trend Verification

- **Legend Placement**: Top-left corner in all graphs.
- **Color Consistency**:
  - Green ([Knowledge]) matches all green lines.
  - Orange ([Reflection]) matches all orange lines.
  - Blue ([Action]) matches all blue lines.
- **Trend Logic**:
  - Llama’s [Knowledge] in Graph 1 slopes upward to layer 25, then downward, consistent with the described peak.
  - Gemma’s [Action] in Graph 4 rises sharply to its peak at layer 25, consistent with the described peak.
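The trend check described here (a curve that rises to a single peak and then declines) can be expressed as a small test. The following is a sketch only: `rises_then_falls` is a hypothetical helper, and the sample series is made-up placeholder data shaped like the description of Llama’s [Knowledge] curve in Graph 1, not values read from the figure.

```python
def rises_then_falls(probs, tol=0.02):
    """Return the peak index if the series rises to one peak and then declines.

    Reversals of up to `tol` are tolerated so that small noise does not break
    the check. Returns None if the shape is not a rise followed by a fall,
    or if the peak sits at either end of the series.
    """
    peak = max(range(len(probs)), key=probs.__getitem__)
    if peak == 0 or peak == len(probs) - 1:
        return None
    rising = all(b >= a - tol for a, b in zip(probs[:peak], probs[1:peak + 1]))
    falling = all(b <= a + tol for a, b in zip(probs[peak:], probs[peak + 1:]))
    return peak if rising and falling else None


# Placeholder series sampled every 5 layers (layers 0, 5, ..., 30).
layers = [0, 5, 10, 15, 20, 25, 30]
knowledge = [0.0, 0.05, 0.2, 0.45, 0.7, 0.8, 0.6]

idx = rises_then_falls(knowledge)
print("peak layer:", None if idx is None else layers[idx])
```

A tolerance is used rather than strict monotonicity because values read off a plot are approximate; setting `tol=0` makes the check strict.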

---

### Conclusion

The graphs point to a design consideration: balancing knowledge integration with actionable reasoning. Models that favor factual accuracy ([Knowledge]) may sacrifice real-time adaptability ([Action]), and vice versa. This trade-off could inform future model architectures aiming for hybrid capabilities.