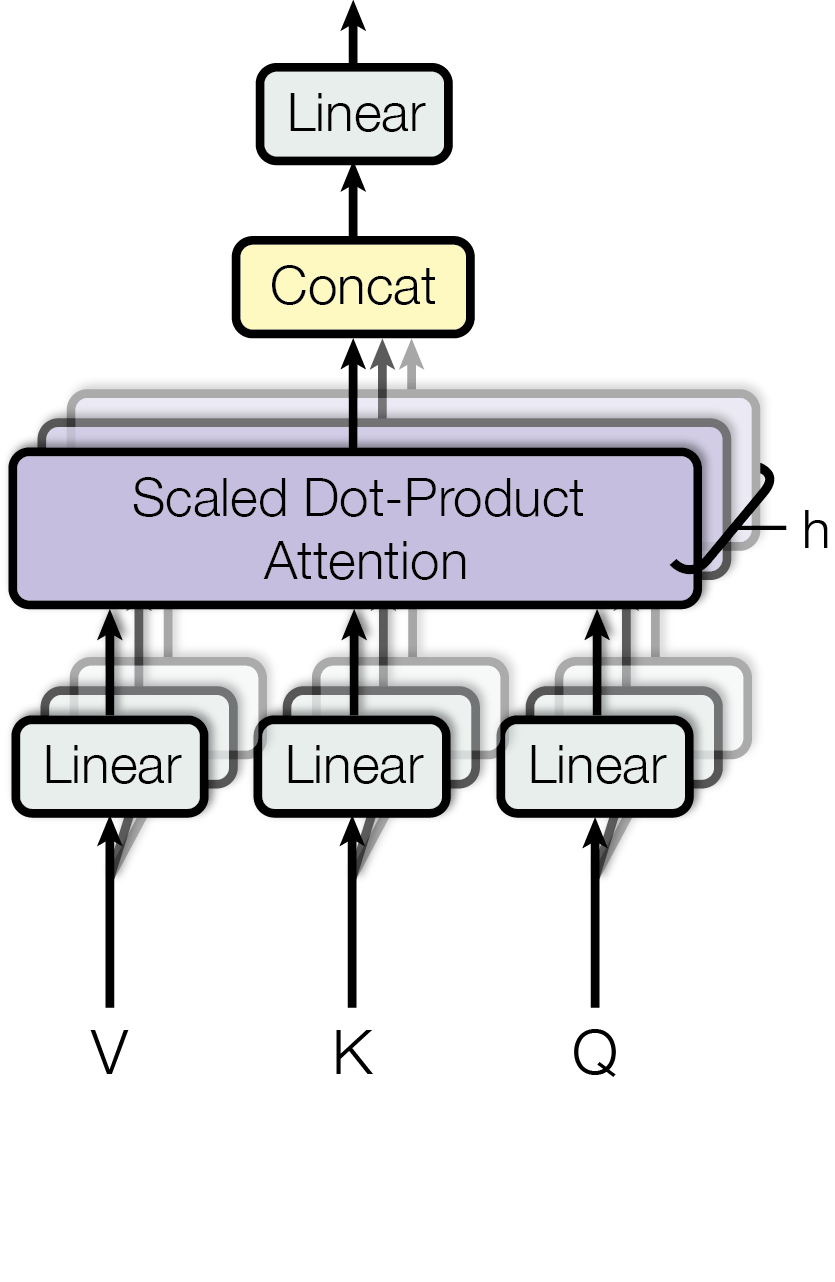

## Diagram: Multi-Head Attention Mechanism (Transformer Architecture)

### Overview

This image is a technical diagram illustrating the architecture of the Multi-Head Attention mechanism, a core component of the Transformer neural network model. It depicts the flow of data through parallel attention heads and the subsequent combination of their outputs. The diagram is presented on a light gray background with black outlines and text.

### Components

The diagram is structured as a data flow graph with the following labeled components, arranged from bottom to top:

**Inputs (Bottom):**

* **V**: Value input vector/matrix.

* **K**: Key input vector/matrix.

* **Q**: Query input vector/matrix.

**Processing Layers (Middle):**

* **Linear**: Three separate, parallel "Linear" transformation blocks. Each receives one of the inputs (V, K, Q). The diagram shows stacked, semi-transparent layers behind each primary "Linear" box, visually representing multiple parallel heads (`h`).

* **Scaled Dot-Product Attention**: A central, prominent purple block. It receives the outputs from all the parallel "Linear" layers. A bracket labeled **`h`** on the right side of this block indicates that this operation is performed across `h` parallel attention heads.

* **Concat**: A yellow block positioned above the attention block. It receives the outputs from all `h` attention heads (indicated by multiple upward arrows) and concatenates them.

**Output (Top):**

* **Linear**: A final "Linear" transformation block that processes the concatenated output from the previous layer.

* An upward-pointing arrow from the final "Linear" block indicates the output of the entire Multi-Head Attention sub-layer.

### Detailed Analysis

The diagram details the precise data flow and transformation steps:

1. **Input Projection:** The inputs **V**, **K**, and **Q** each feed into their own dedicated **Linear** layer. The stacked, shadowed boxes behind each "Linear" label indicate that each projection is performed `h` times in parallel, once per attention head and each with its own learned weights. This yields `h` different sets of projected V, K, and Q matrices.

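2. **Parallel Attention Calculation:** Each of the `h` sets of projected matrices is processed independently by the **Scaled Dot-Product Attention** mechanism. The bracket labeled **`h`** confirms this parallelism. The core operation within this block (not visually detailed) is: `Attention(Q, K, V) = softmax(QK^T / √d_k)V`, where `d_k` is the dimensionality of the projected keys and queries. Dividing by `√d_k` keeps the dot products from growing with the dimension and saturating the softmax.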

3. **Output Aggregation:** The outputs from all `h` attention heads (each being a vector/matrix) are gathered by the **Concat** block. The multiple arrows entering this block from below represent the `h` separate outputs being combined into a single, larger vector/matrix.

4. **Final Projection:** The concatenated vector/matrix is passed through a final **Linear** layer. This layer projects the combined multi-head representation back to the model's expected dimensionality, producing the final output of the Multi-Head Attention sub-layer.
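The four steps above can be sketched in NumPy. This is a minimal illustration rather than a reference implementation: the function names, the weight matrices `w_q`, `w_k`, `w_v`, `w_o`, and the toy sizes are assumptions made for the example. It also assumes no bias terms, no masking, and that the `h` per-head projections are stored as column slices of a single `(d_model, d_model)` matrix, a common implementation convenience that the diagram does not prescribe.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the maximum along the axis for numerical stability.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def scaled_dot_product_attention(q, k, v):
    # q: (..., n_q, d_k), k: (..., n_k, d_k), v: (..., n_k, d_v)
    d_k = q.shape[-1]
    scores = q @ np.swapaxes(k, -1, -2) / np.sqrt(d_k)
    weights = softmax(scores, axis=-1)  # each row sums to 1
    return weights @ v, weights

def multi_head_attention(q, k, v, w_q, w_k, w_v, w_o, h):
    # q: (n_q, d_model); k, v: (n_k, d_model)
    # w_q, w_k, w_v, w_o: (d_model, d_model), hypothetical weights.
    def split_heads(x):
        n, d_model = x.shape
        # (n, d_model) -> (h, n, d_model // h): one slice per head.
        return x.reshape(n, h, d_model // h).transpose(1, 0, 2)

    # Step 1: linear projections, split into h heads.
    qh, kh, vh = split_heads(q @ w_q), split_heads(k @ w_k), split_heads(v @ w_v)
    # Step 2: the same attention function applied to every head.
    heads, weights = scaled_dot_product_attention(qh, kh, vh)
    # Step 3: concatenate head outputs back to (n_q, d_model).
    concat = heads.transpose(1, 0, 2).reshape(q.shape[0], -1)
    # Step 4: final linear projection.
    return concat @ w_o, weights

# Toy sizes chosen only for illustration.
rng = np.random.default_rng(0)
d_model, h, n_q, n_k = 8, 2, 3, 5
q = rng.normal(size=(n_q, d_model))
k = rng.normal(size=(n_k, d_model))
v = rng.normal(size=(n_k, d_model))
w_q, w_k, w_v, w_o = (rng.normal(size=(d_model, d_model)) for _ in range(4))

out, weights = multi_head_attention(q, k, v, w_q, w_k, w_v, w_o, h)
print(out.shape)      # (3, 8)
print(weights.shape)  # (2, 3, 5)
```

The output keeps the query's shape `(n_q, d_model)`, and `weights` holds one `(n_q, n_k)` attention map per head.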

### Key Observations

* **Visualizing Parallelism:** The diagram's most salient feature is its use of stacked, semi-transparent layers behind the "Linear" and "Scaled Dot-Product Attention" components. This is a direct visual metaphor for the `h` parallel attention heads, making the "multi-head" concept explicit.

* **Spatial Flow:** The layout is strictly vertical, emphasizing a bottom-up data flow from inputs (V, K, Q) to the final output. The central placement of the "Scaled Dot-Product Attention" block highlights it as the core computational unit.

* **Color Coding:** A minimal color scheme is used for functional distinction: light purple for the core attention operation, pale yellow for the concatenation operation, and white for linear transformations.

* **Label Precision:** All text labels are clear, using a sans-serif font. The critical parameter `h` (number of heads) is explicitly labeled with a bracket, linking the visual metaphor to a concrete hyperparameter.

### Interpretation

This diagram is a canonical representation of the Multi-Head Attention mechanism introduced in the "Attention Is All You Need" paper (Vaswani et al., 2017). It demonstrates the architectural innovation that allows the Transformer model to jointly attend to information from different representation subspaces at different positions.

* **What it demonstrates:** The diagram shows how a single attention mechanism is decomposed into `h` parallel, independent "heads." Each head can learn to focus on different aspects of the input (e.g., syntactic relationships, semantic roles, long-range dependencies) simultaneously. The final linear layer learns to combine these diverse attentional perspectives.

* **Relationships:** The flow illustrates a "split-transform-merge" strategy. The input is split via linear projections into multiple subspaces (`h` heads), processed independently by the same attention function, and then merged via concatenation and a final linear projection. In the original paper each head works in a reduced dimension (`d_model / h`), so the total computational cost is similar to that of a single attention head with full dimensionality, while the heads remain free to attend to different subspaces.

* **Significance:** This parallel structure lets different heads specialize, which a single attention head cannot do because its one set of attention weights averages over all such patterns. The diagram communicates this parallel computational graph in a compact spatial format, and the labeled `h` marks the number of heads as a configurable hyperparameter of the model.
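To make the role of `h` as a hyperparameter concrete, the short sketch below counts projection weights for several head counts. It assumes the convention from Vaswani et al. (2017) that each head uses `d_k = d_v = d_model / h`, and it ignores bias terms. The helper name `projection_params` is hypothetical. Under these assumptions, the number of weights does not depend on `h`.

```python
def projection_params(d_model, h):
    # Assumes d_k = d_v = d_model / h and no bias terms.
    assert d_model % h == 0, "d_model must be divisible by h"
    d_head = d_model // h
    per_head_qkv = 3 * d_model * d_head   # Q, K, V projections for one head
    output_proj = (h * d_head) * d_model  # final Linear after Concat
    return h * per_head_qkv + output_proj

for h in (1, 2, 4, 8):
    print(h, 512 // h, projection_params(512, h))
# Every row reports 1048576 weights (4 * 512 * 512).
```

With `d_model = 512` and `h = 8`, the configuration used in the paper's base model, each head works in a 64-dimensional subspace.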