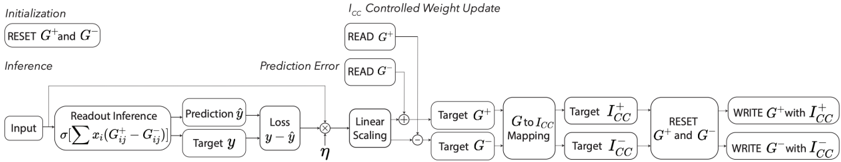

## Diagram: I<sub>CC</sub> Controlled Weight Update

### Overview

The diagram illustrates an I<sub>CC</sub>-controlled weight update. It does not expand the abbreviation; alongside the conductance pair G<sup>+</sup> and G<sup>-</sup>, I<sub>CC</sub> most plausibly denotes the compliance current used when programming a memristive device. The diagram depicts a feedback loop of inference, prediction-error calculation, and error-driven weight updates. It is laid out horizontally, with an "Initialization" section on the left and an "I<sub>CC</sub> Controlled Weight Update" section on the right. A central "Inference" pathway connects the two sections.

### Components

The diagram consists of several rectangular blocks representing operations or data storage. Key labels include:

* **Initialization:** "RESET G<sup>+</sup> and G<sup>-</sup>"

* **Inference:** "Input", "Readout Inference", "Prediction ŷ", "Loss", "Linear Scaling", "Target G<sup>+</sup>", "Target G<sup>-</sup>", "Target I<sub>CC</sub>"

* **I<sub>CC</sub> Controlled Weight Update:** "I<sub>CC</sub> Controlled Weight Update", "READ G<sup>+</sup>", "READ G<sup>-</sup>", "Prediction Error", "Target G<sup>+</sup> to Target I<sub>CC</sub> Mapping", "Target G<sup>-</sup> to Target I<sub>CC</sub> Mapping", "RESET G<sup>+</sup> and G<sup>-</sup>", "WRITE G<sup>+</sup> with I<sub>CC</sub><sup>+</sup>", "WRITE G<sup>-</sup> with I<sub>CC</sub><sup>-</sup>"

* **Mathematical Expressions:** Σ x<sub>i</sub>(G<sub>i</sub><sup>+</sup> - G<sub>i</sub><sup>-</sup>), ŷ - y, η

* **Connections:** Arrows indicate the flow of data and control signals.

### Detailed Analysis

The diagram can be broken down into the following steps:

1. **Initialization:** The process begins by resetting the weights G<sup>+</sup> and G<sup>-</sup>.

2. **Inference:**

   * An "Input" is fed into a "Readout Inference" block, which calculates a weighted sum: Σ x<sub>i</sub>(G<sub>i</sub><sup>+</sup> - G<sub>i</sub><sup>-</sup>).

   * This results in a "Prediction" ŷ.

   * A "Loss" is calculated as the signed difference between the prediction ŷ and the "Target" y, that is, ŷ - y.

   * The "Loss" is then passed through "Linear Scaling" with a coefficient η.

3. **I<sub>CC</sub> Controlled Weight Update:**

   * The scaled error, labeled "Prediction Error" in this section, is used to compute "Target G<sup>+</sup>" and "Target G<sup>-</sup>".

   * These targets are then mapped to "Target I<sub>CC</sub>" using "Target G<sup>+</sup> to Target I<sub>CC</sub> Mapping" and "Target G<sup>-</sup> to Target I<sub>CC</sub> Mapping".

   * "READ G<sup>+</sup>" and "READ G<sup>-</sup>" are performed.

   * Finally, the weights G<sup>+</sup> and G<sup>-</sup> are updated by writing I<sub>CC</sub><sup>+</sup> and I<sub>CC</sub><sup>-</sup> respectively.

   * The process then loops back to the "Inference" stage after resetting G<sup>+</sup> and G<sup>-</sup>.
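
The steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the method behind the diagram: the conductance and I<sub>CC</sub> ranges, the linear `g_to_icc` mapping, the idealised `write_with_icc`, and the even split of the weight change between G<sup>+</sup> and G<sup>-</sup> are all hypothetical, since the diagram specifies none of them. The sketch also assumes that RESET precedes WRITE within an update, following the order of the block labels, because a reset after the write would erase the update.

```python
# All constants are hypothetical; the diagram gives no device values.
G_MIN, G_MAX = 0.0, 1.0          # assumed conductance range (arbitrary units)
ICC_MIN, ICC_MAX = 10.0, 100.0   # assumed I_CC range (arbitrary units)


def readout(x, g_pos, g_neg):
    """Readout inference: y_hat = sum_i x_i * (G_i+ - G_i-)."""
    return sum(xi * (gp - gn) for xi, gp, gn in zip(x, g_pos, g_neg))


def g_to_icc(g_target):
    """Assumed linear Target-G to Target-I_CC mapping; clips to the valid range."""
    g = min(max(g_target, G_MIN), G_MAX)
    return ICC_MIN + (g - G_MIN) / (G_MAX - G_MIN) * (ICC_MAX - ICC_MIN)


def write_with_icc(icc):
    """Idealised RESET + WRITE: the device lands on the conductance I_CC encodes."""
    return G_MIN + (icc - ICC_MIN) / (ICC_MAX - ICC_MIN) * (G_MAX - G_MIN)


def update_step(x, y, g_pos, g_neg, eta):
    """One pass: inference, scaled error, target G, target I_CC, write."""
    y_hat = readout(x, g_pos, g_neg)
    scaled_error = eta * (y_hat - y)        # "Linear Scaling" of y_hat - y
    new_pos, new_neg = [], []
    for xi, gp, gn in zip(x, g_pos, g_neg):  # gp, gn play the role of READ values
        delta = -scaled_error * xi           # assumed delta-rule weight change
        target_pos = gp + delta / 2          # assumed even split across the pair
        target_neg = gn - delta / 2
        new_pos.append(write_with_icc(g_to_icc(target_pos)))
        new_neg.append(write_with_icc(g_to_icc(target_neg)))
    return new_pos, new_neg, y_hat


if __name__ == "__main__":
    x, y, eta = [1.0, 0.5], 0.3, 0.5
    # State after the initial RESET, assumed here to be G_MIN for both devices.
    g_pos, g_neg = [G_MIN] * 2, [G_MIN] * 2
    for step in range(5):
        g_pos, g_neg, y_hat = update_step(x, y, g_pos, g_neg, eta)
        print(step, round(y_hat - y, 4))
```

Because both conductances are clipped to the assumed range, a target that falls below `G_MIN` is written as `G_MIN`, so the effective step can be smaller than the delta rule alone would give.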

### Key Observations

The diagram shows a closed-loop system in which the prediction error drives the weight updates. Because the readout uses the difference G<sup>+</sup> - G<sup>-</sup>, each weight pair can represent a signed effective weight. The weights are not adjusted directly: each target value is first mapped to a target I<sub>CC</sub>, and the WRITE operations use I<sub>CC</sub><sup>+</sup> and I<sub>CC</sub><sup>-</sup> to set G<sup>+</sup> and G<sup>-</sup>. I<sub>CC</sub> is therefore the control variable through which every update is applied.

### Interpretation

This diagram illustrates a supervised, error-driven learning loop: the "Target" y supplies the desired output, and the scaled error η(ŷ - y) drives each update so that the weights are iteratively refined to reduce the prediction error. The scheme resembles gradient descent on a linear readout (the delta rule), although the diagram does not show how the error is distributed across the individual weights. What sets it apart from a textbook update is the write path: new weight values are converted to target I<sub>CC</sub> values and applied through RESET and WRITE operations on G<sup>+</sup> and G<sup>-</sup> rather than being stored directly. Since the readout is a single weighted sum, the diagram itself shows only a linear input-output mapping; any capacity to learn more complex relationships would have to come from stages not shown here.
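
The gradient-descent reading can be made concrete under two assumptions that the diagram does not state: that the objective is the half squared error, and that the effective weight is the difference of the conductance pair. Under those assumptions, the error term ŷ - y and the coefficient η from the diagram appear in the update as follows.

```latex
% Assumed objective and effective weight (not given in the diagram)
E = \tfrac{1}{2}\,(\hat{y} - y)^2, \qquad
\hat{y} = \sum_i x_i \left(G_i^{+} - G_i^{-}\right), \qquad
w_i = G_i^{+} - G_i^{-}

% Gradient with respect to the effective weight, and the resulting step
\frac{\partial E}{\partial w_i} = (\hat{y} - y)\, x_i
\quad\Longrightarrow\quad
\Delta w_i = -\eta\,(\hat{y} - y)\, x_i
```

How such a step would be divided between G<sup>+</sup> and G<sup>-</sup> is a separate design choice that the diagram leaves open.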