## Flowchart: Neural Correspondence Processing Pipeline

### Overview

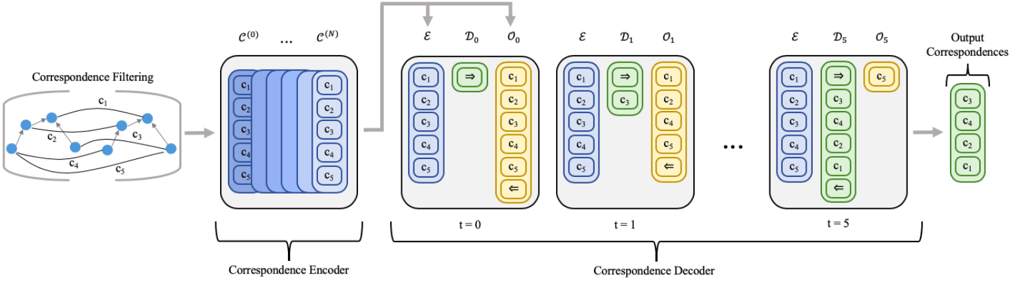

The diagram shows a neural network architecture that processes correspondences in three stages: filtering, encoding, and decoding. The decoding stage unrolls over discrete time steps, and the pipeline ends with a refined, reordered set of output correspondences.

### Components/Axes

1. **Correspondence Filtering**
   - Input nodes: c₁, c₂, c₃, c₄, c₅
   - Arrows represent correspondence relationships (labeled c₁–c₅)
   - Output: Filtered correspondence set

2. **Correspondence Encoder**
   - Input: Filtered correspondences
   - Structure: 5 × (N+1) grid (rows: c₁–c₅, columns: C⁰–Cᴺ)
   - Output: Encoded feature vectors, one per correspondence

3. **Correspondence Decoder**
   - Temporal dimension: t=0 to t=5
   - Components per timestep:
     - **Encoder (E)**: Blue blocks (c₁–c₅)
     - **Decoder (Dₜ)**: Yellow blocks (c₁–c₅)
     - **Output (Oₜ)**: Green blocks (c₁–c₅)
   - Arrows between components:
     - Green arrows with "⇒" (forward) and "⇐" (backward) symbols
   - Final output: Refined correspondence set

4. **Output Correspondences**
   - Final nodes: c₃, c₄, c₂, c₁ (reordered subset)
   - Color: Green
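The first two stages can be sketched in a few lines of Python to make the data shapes concrete. This is a minimal illustration under stated assumptions: the figure does not say how a correspondence is represented or how filtering decides, so the point-pair coordinates, the confidence score, the `min_score` threshold, and the random linear encoder below are all hypothetical. The sketch only shows the shape of the result: one row per correspondence and one column per feature C⁰–Cᴺ.

```python
import random

# Hypothetical representation (not specified by the figure): each correspondence is
# (name, (x1, y1, x2, y2), score), i.e. a matched pair of 2-D points plus a confidence.

def filter_correspondences(corrs, min_score=0.5):
    """Keep correspondences whose assumed confidence score is at least min_score."""
    return [c for c in corrs if c[2] >= min_score]

def make_encoder(n, seed=0):
    """Return a function mapping correspondences to rows of n + 1 features (C^0..C^N).

    The fixed random linear map stands in for a learned encoder (assumption).
    """
    rng = random.Random(seed)
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(4)] for _ in range(n + 1)]

    def encode(corrs):
        # One row per correspondence, one column per feature C^0..C^N.
        return [[sum(w * x for w, x in zip(row, coords)) for row in weights]
                for _, coords, _ in corrs]

    return encode

# Made-up inputs c1..c5; all five pass the default threshold here.
corrs = [
    ("c1", (0.0, 0.0, 0.1, 0.0), 0.9),
    ("c2", (1.0, 0.0, 1.1, 0.1), 0.8),
    ("c3", (0.0, 1.0, 0.0, 1.2), 0.7),
    ("c4", (1.0, 1.0, 0.9, 1.0), 0.6),
    ("c5", (0.5, 0.5, 0.6, 0.4), 0.55),
]
kept = filter_correspondences(corrs)
features = make_encoder(n=3)(kept)
print(len(features), len(features[0]))  # 5 4
```

With N = 3 the grid has five rows and four feature columns; a trained encoder would replace the random weights, but the shape bookkeeping stays the same.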

### Detailed Analysis

- **Encoder**: Maps each of the five filtered correspondences (c₁–c₅) to a latent feature vector with components C⁰–Cᴺ.

- **Decoder Dynamics**:
  - t=0: Initial encoding (blue) → initial decoding (yellow)
  - t=1–5: Iterative refinement with bidirectional interactions (green arrows)
  - Each timestep shows progressive reordering of correspondence relationships

- **Output**: The final set is an ordered subset of the inputs (c₃, c₄, c₂, c₁); c₅ is absent, so this is not a full permutation of the original nodes.
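Reading the input order c₁–c₅ and the output order c₃, c₄, c₂, c₁ off the figure, a small helper makes the change explicit: which correspondences were dropped and where each retained one moved. The function name `compare_orders` is illustrative only.

```python
def compare_orders(inputs, outputs):
    """Return the dropped items and each kept item's (input index, output index)."""
    dropped = [c for c in inputs if c not in outputs]
    moves = {c: (inputs.index(c), outputs.index(c)) for c in outputs}
    return dropped, moves

inputs = ["c1", "c2", "c3", "c4", "c5"]
outputs = ["c3", "c4", "c2", "c1"]  # order as drawn in the figure
dropped, moves = compare_orders(inputs, outputs)
print(dropped)  # ['c5']
print(moves)    # {'c3': (2, 0), 'c4': (3, 1), 'c2': (1, 2), 'c1': (0, 3)}
```

Every retained correspondence changes position, and one input does not survive, which is why the output reads as a reordered subset rather than a permutation.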

### Key Observations

1. Across decoding time steps, the correspondences are progressively reordered

2. Bidirectional arrows (⇒/⇐) suggest attention mechanisms or message passing

3. Output order differs from input order: the inputs run c₁ through c₅, while the outputs are c₃, c₄, c₂, c₁, with c₅ dropped

4. Color coding distinguishes encoder features (blue), decoder states (yellow), and outputs (green)
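If the bidirectional arrows do denote attention, one round of it can be sketched as follows. This assumes plain scaled dot-product self-attention with no learned query, key, or value projections, a deliberate simplification since the figure does not specify the mechanism. Each correspondence's features are replaced by a softmax-weighted average over all correspondences, so information flows both ways between every pair.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention_round(features):
    """One round of scaled dot-product self-attention without learned projections."""
    d = len(features[0])
    updated = []
    for q in features:
        # Similarity of this correspondence to every correspondence (itself included).
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in features]
        weights = softmax(scores)
        # New features: weighted average of all feature rows.
        updated.append([sum(w * v[j] for w, v in zip(weights, features)) for j in range(d)])
    return updated

features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
updated = attention_round(features)
```

Because the weights are a softmax, each updated row is a convex combination of the input rows; a message-passing layer would differ mainly in restricting which pairs exchange information.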

### Interpretation

The architecture appears to be a time-stepped neural network for correspondence refinement:

1. **Filtering Stage**: Initial relationship pruning/selection

2. **Encoding**: Mapping each correspondence into a latent feature space

3. **Temporal Decoding**: Iterative refinement through bidirectional interactions

4. **Output**: Refined correspondence set, reordered and with one input (c₅) omitted

The bidirectional green arrows suggest that information flows in both directions between components, possibly reflecting forward and inverse mappings between correspondences; the diagram alone does not establish which. The reordered output suggests that the network ranks correspondences by learned features, and the time axis (t=0–5) indicates multi-step processing for context-aware correspondence refinement.
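One hypothetical reading of the multi-step decoding is autoregressive selection: at each time step the decoder emits the best-scoring remaining correspondence and stops once nothing scores above a threshold. This greedy, pointer-network-style loop is an assumption, not the method shown in the figure; `score_fn`, `max_steps`, and `stop_threshold` are illustrative names. It does, however, show how one mechanism could produce both the reordering and the dropped correspondence.

```python
def greedy_decode(score_fn, candidates, max_steps=6, stop_threshold=0.0):
    """Pick one correspondence per time step; stop when no candidate beats stop_threshold.

    score_fn(candidate, output_so_far) stands in for a learned decoder (assumption).
    """
    remaining = list(candidates)
    output = []
    for _t in range(max_steps):  # t = 0 .. max_steps - 1
        if not remaining:
            break
        best_score, best = max((score_fn(c, output), c) for c in remaining)
        if best_score <= stop_threshold:
            break  # nothing left is worth emitting
        output.append(best)
        remaining.remove(best)
    return output

# Hand-picked scores chosen to reproduce the figure's output order; not model outputs.
scores = {"c1": 0.2, "c2": 0.5, "c3": 0.9, "c4": 0.7, "c5": -0.1}
order = greedy_decode(lambda c, out: scores[c], ["c1", "c2", "c3", "c4", "c5"])
print(order)  # ['c3', 'c4', 'c2', 'c1']
```

Because `score_fn` receives the output so far, a real decoder could condition each choice on earlier ones; the lambda here ignores that context and simply ranks by fixed scores.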