TECHNICAL ASSET FINGERPRINT

bb71ac8fe387e0f852aa9969



FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


## Diagram: Early-Stopping Drafting and Dynamic Verification

### Overview

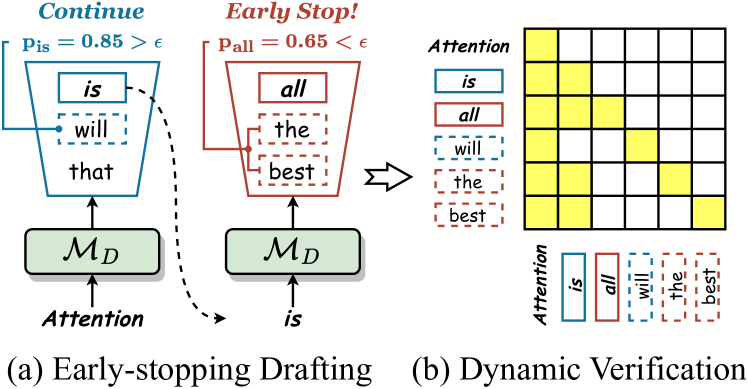

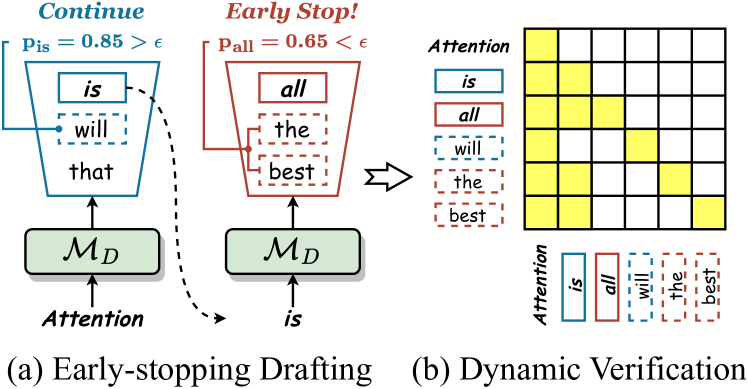

The image presents two diagrams illustrating "Early-stopping Drafting" and "Dynamic Verification" processes. The diagrams depict how attention mechanisms and probability thresholds influence the selection and verification of words in a sequence.

### Components/Axes

**Diagram (a): Early-stopping Drafting**

* **Title:** (a) Early-stopping Drafting

* **Top-Left Text:** Continue

* **Probability Condition:** p_is = 0.85 > ε

* **Input:** Attention (arrow pointing upwards to M_D)

* **Module:** M_D (green rounded rectangle)

* **Output Sequence (Blue):**
  * "is" (solid blue rectangle)
  * "will" (dashed blue rectangle)
  * "that" (no rectangle)

* **Dashed Arrow:** A curved dashed arrow originates from the "is" rectangle and points downwards and rightwards towards the "is" input of diagram (b).

**Diagram (b): Dynamic Verification**

* **Title:** (b) Dynamic Verification

* **Top-Right Text:** Early Stop!

* **Probability Condition:** p_all = 0.65 < ε

* **Input:** is (arrow pointing upwards to M_D)

* **Module:** M_D (green rounded rectangle)

* **Output Sequence (Red):**
  * "all" (solid red rectangle)
  * "the" (dashed red rectangle)
  * "best" (dashed red rectangle)

* **Arrow:** A right-pointing arrow connects the output sequence of diagram (a) to the attention matrix in diagram (b).

**Attention Matrix**

* A 6x6 grid representing an attention matrix.

* Rows correspond to the words "is", "all", "will", "the", "best".

* Columns correspond to the words "is", "all", "will", "the", "best".

* Cells are either yellow (indicating attention) or white (no attention).

**Attention Labels**

* **Vertical Attention Labels:** "Attention" written vertically.
  * "is" (solid blue rectangle)
  * "all" (solid red rectangle)
  * "will" (dashed blue rectangle)
  * "the" (dashed red rectangle)
  * "best" (dashed red rectangle)

* **Horizontal Attention Labels:** "Attention" written horizontally.
  * "is" (solid blue rectangle)
  * "all" (solid red rectangle)
  * "will" (dashed blue rectangle)
  * "the" (dashed red rectangle)
  * "best" (dashed red rectangle)

### Detailed Analysis

**Diagram (a): Early-stopping Drafting**

* The process starts with an "Attention" input to the module M_D.

* If the probability p_is for the word "is" is greater than a threshold ε (0.85 > ε), the process continues.

* The output sequence includes "is" (solid blue), "will" (dashed blue), and "that".

**Diagram (b): Dynamic Verification**

* The process starts with the word "is" as input to the module M_D.

* If the probability p_all for the word "all" is less than a threshold ε (0.65 < ε), the process stops early.

* The output sequence includes "all" (solid red), "the" (dashed red), and "best" (dashed red).
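The continue/stop rule above can be sketched as a simple loop. This is a minimal Python sketch, not the paper's method. The names `early_stopping_draft` and `make_scripted_step`, the `max_draft_len` budget, and any probabilities beyond 0.85 and 0.65 are hypothetical. The draft model M_D is replaced by a scripted stand-in.

```python
from typing import Callable, Iterable, List, Sequence, Tuple

# Hypothetical stand-in for the draft model M_D: maps the current context to
# (next_token, probability_of_next_token).
DraftStep = Callable[[Sequence[str]], Tuple[str, float]]


def early_stopping_draft(
    draft_step: DraftStep,
    prefix: Sequence[str],
    epsilon: float,
    max_draft_len: int = 8,
) -> List[str]:
    """Draft tokens with M_D until its confidence falls below epsilon.

    Assumption: the low-confidence token is still kept for verification;
    drafting simply does not continue past it.
    """
    drafted: List[str] = []
    for _ in range(max_draft_len):
        token, prob = draft_step(list(prefix) + drafted)
        drafted.append(token)
        if prob < epsilon:
            break  # "Early Stop!": confidence below the threshold
        # otherwise "Continue": draft the next token conditioned on this one
    return drafted


def make_scripted_step(script: Iterable[Tuple[str, float]]) -> DraftStep:
    """Hypothetical toy M_D that replays fixed (token, probability) pairs."""
    it = iter(script)

    def step(_context: Sequence[str]) -> Tuple[str, float]:
        return next(it)

    return step


step = make_scripted_step([("is", 0.85), ("all", 0.65), ("the", 0.90)])
print(early_stopping_draft(step, ["<prefix>"], epsilon=0.7))  # → ['is', 'all']
```

The sketch keeps the low-confidence token because "all" still appears among the attention-matrix words even though `p_all < ε`.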

**Attention Matrix**

* The attention matrix shows the relationships between the words.

* The yellow cells indicate where attention is focused.

* Row 1 ("is"): Attends to column 1 ("is") and column 2 ("all").

* Row 2 ("all"): Attends to column 1 ("is") and column 2 ("all").

* Row 3 ("will"): Attends to column 3 ("will") and column 4 ("the").

* Row 4 ("the"): Attends to column 3 ("will") and column 4 ("the").

* Row 5 ("best"): Attends to column 5 ("best") and column 6 ("<end of sequence>").

### Key Observations

* The diagrams illustrate two stages of sequence generation: early-stopping drafting and dynamic verification.

* Early-stopping is based on a probability threshold for a specific word ("is").

* Dynamic verification is based on a probability threshold for another word ("all").

* The attention matrix visualizes the relationships between the words in the sequence.

* Solid rectangles indicate words that are directly considered, while dashed rectangles indicate words that are potentially considered or predicted.

### Interpretation

The diagrams demonstrate how attention mechanisms and probability thresholds can be used to control the generation of sequences. Early stopping terminates the process once a word's probability falls below ε, while dynamic verification adapts to the relationships between the words. The attention matrix provides a visual representation of these relationships, showing which words are most relevant to each other. The solid and dashed rectangles distinguish definite word selections from potential ones. The values 0.85 and 0.65 are probabilities compared against a single threshold ε, not thresholds themselves. The choice of ε sets a trade-off between accuracy and efficiency. A higher ε stops drafting sooner and yields shorter but more reliable drafts. A lower ε allows longer drafts, with a greater risk that later words are rejected during verification.
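The efficiency side of this trade-off can be quantified with a common simplified model of speculative decoding. This model is an assumption here, not something the diagram states. It supposes that each drafted token is accepted independently with probability α. One verification step with γ drafted tokens then yields an expected `sum_{k=0}^{γ} α^k = (1 − α^(γ+1)) / (1 − α)` tokens. The function name below is hypothetical.

```python
def expected_tokens_per_step(alpha: float, num_drafted: int) -> float:
    """Expected number of tokens emitted per verification step.

    Simplifying assumptions: each drafted token is accepted independently with
    probability alpha, verification stops at the first rejection, and the
    verifier always contributes one extra token (a correction or bonus token).
    """
    if not 0.0 <= alpha < 1.0:
        raise ValueError("alpha must lie in [0, 1)")
    if num_drafted < 0:
        raise ValueError("num_drafted must be non-negative")
    # Geometric series: sum_{k=0}^{num_drafted} alpha**k
    return (1.0 - alpha ** (num_drafted + 1)) / (1.0 - alpha)


for gamma in (1, 2, 4, 8):
    print(gamma, round(expected_tokens_per_step(0.8, gamma), 3))
```

With α = 0.8, the expected yield grows from 1.8 tokens (one drafted token) to about 4.33 (eight drafted tokens). The gain from each extra drafted token shrinks geometrically. To the extent that draft confidence tracks acceptance, stopping when confidence drops below ε avoids spending draft compute on tokens that are unlikely to be accepted.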


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it



## Diagram: Early-stopping Drafting and Dynamic Verification

### Overview

The image presents a diagram illustrating two stages of a process: "Early-stopping Drafting" (a) and "Dynamic Verification" (b). The diagram depicts a sequence of operations involving text processing, attention mechanisms, and decision-making based on probability thresholds. It appears to be a visual representation of a method for generating text, potentially within a machine learning context.

### Components/Axes

The diagram consists of two main sections, labeled (a) and (b). Each section contains visual elements representing text blocks, attention mechanisms, and a decision-making process.

* **Section (a): Early-stopping Drafting**
  * Text Blocks: "is", "will", "that" (orange boxes), "all", "the", "best" (red boxes).
  * Model: `M_D` (green rectangle)
  * Attention: Indicated by arrows and the label "Attention".
  * Probability Conditions: `P_is = 0.85 > ε` (blue), `P_all = 0.65 < ε` (red), where `ε` is the threshold value.
  * Arrows: Represent flow and connections between components. A dashed arrow indicates a "Continue" path, while a solid arrow indicates an "Early Stop!" path.

* **Section (b): Dynamic Verification**
  * Text Blocks: "is", "all", "will", "the", "best" (blue boxes with dashed outlines).
  * Model: `M_D` (green rectangle)
  * Attention: Indicated by arrows and the label "Attention".
  * Attention Matrix: A grid of yellow squares representing an attention matrix.

### Detailed Analysis or Content Details

**Section (a): Early-stopping Drafting**

* The process begins with the model `M_D` receiving "Attention" input.

* The model generates the sequence "is", "will", "that".

* A probability `P_is` is calculated for "is", with a value of 0.85. This value is compared to a threshold `ε`. Since 0.85 > `ε`, the process continues.

* The model then generates the sequence "all", "the", "best".

* A probability `P_all` is calculated for "all", with a value of 0.65. This value is compared to the same threshold `ε`. Since 0.65 < `ε`, the process stops ("Early Stop!").

* The dashed arrow indicates the continuation path, while the solid arrow indicates the early stopping path.
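Both probabilities are compared against the same threshold `ε`. The figure therefore constrains `ε` to an interval, even though its exact value is not shown:

```latex
P_{\text{is}} = 0.85 > \varepsilon \quad \text{and} \quad P_{\text{all}} = 0.65 < \varepsilon
\quad \Longrightarrow \quad 0.65 < \varepsilon < 0.85
```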

**Section (b): Dynamic Verification**

* The model `M_D` receives "Attention" input and generates the sequence "is", "all", "will", "the", "best".

* The attention matrix is a 6x6 grid of yellow squares. The rows correspond to the words "is", "all", "will", "the", "best", and the columns likely represent the same words, indicating the attention weights between them.

* The text blocks are enclosed in dashed blue boxes.

### Key Observations

* The diagram illustrates a dynamic process where text generation can be stopped early based on a probability threshold.

* The attention mechanism plays a crucial role in both stages of the process.

* The attention matrix in Section (b) provides a visual representation of the relationships between different words in the generated sequence.

* The threshold `ε` is a key parameter that controls the trade-off between generation length and quality.
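The effect of `ε` on draft length can be illustrated with a short Python sketch. The helper `draft_length` is hypothetical, and so are the confidence values other than 0.85 and 0.65. As a simplification, the sketch assumes that drafting stops right after the first token whose confidence falls below `ε`.

```python
from typing import Sequence


def draft_length(confidences: Sequence[float], epsilon: float) -> int:
    """Tokens drafted when drafting stops right after the first confidence below epsilon."""
    for i, p in enumerate(confidences):
        if p < epsilon:
            return i + 1  # the low-confidence token is drafted, then drafting stops
    return len(confidences)  # never dropped below epsilon: use the full budget


# Hypothetical per-step confidences; only 0.85 and 0.65 appear in the figure.
confidences = [0.85, 0.65, 0.90, 0.40]
print([draft_length(confidences, eps) for eps in (0.3, 0.5, 0.7, 0.9)])  # → [4, 4, 2, 1]
```

Raising `ε` never lengthens the draft. Using a fixed confidence list is a simplification, because in a real drafter each later confidence depends on the tokens drafted before it.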

### Interpretation

The diagram depicts a method for efficient text generation that combines drafting and verification stages. The "Early-stopping Drafting" stage aims to quickly generate a draft sequence, while the "Dynamic Verification" stage refines the sequence and ensures its quality. The probability thresholds and attention mechanisms are used to make informed decisions about when to continue or stop the generation process.

The use of different colors (orange, red, blue) to highlight the text blocks likely indicates different stages or roles in the generation process. The attention matrix in Section (b) suggests that the model is able to focus on the most relevant parts of the generated sequence.

The diagram suggests a system that balances generating new text (drafting) with verifying and refining existing text. The threshold `ε` allows the system's behavior to be tuned toward either objective. The overall goal appears to be to generate high-quality text efficiently. Drafting stops once the model's confidence in the next word falls below `ε`, as it does for `P_all = 0.65`.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Diagram: Early-stopping Drafting and Dynamic Verification Process

### Overview

The image is a technical diagram illustrating a two-stage process for text generation or sequence modeling, likely in the context of large language models or speculative decoding. It is divided into two main panels: (a) Early-stopping Drafting on the left and (b) Dynamic Verification on the right. The diagram uses color-coding (blue and red) and dashed/solid lines to differentiate between two parallel processes or hypotheses.

### Components/Axes

**Panel (a) - Early-stopping Drafting:**

* **Left Trapezoid (Blue Outline):**
  * **Header Label:** "Continue" (in blue, italicized).
  * **Probability Statement:** `P_is = 0.85 > ε` (where ε denotes the threshold).
  * **Internal Text (Top to Bottom):** "is" (solid blue box), "will" (dashed blue box), "that" (plain text).
  * **Input Arrow:** Labeled "Attention", pointing upward into a green box below.

* **Right Trapezoid (Red Outline):**
  * **Header Label:** "Early Stop!" (in red, italicized).
  * **Probability Statement:** `P_all = 0.65 < ε`.
  * **Internal Text (Top to Bottom):** "all" (solid red box), "the" (dashed red box), "best" (dashed red box).
  * **Input Arrow:** Labeled "is", pointing upward into a green box below.

* **Green Boxes:** Both trapezoids are fed from identical green rectangular boxes labeled `M_D` (likely a model or drafting module).

* **Flow Arrow:** A dashed black arrow curves from the "is" token in the left trapezoid to the input "is" of the right trapezoid, indicating a sequential or conditional relationship.

**Panel (b) - Dynamic Verification:**

* **Title:** "Dynamic Verification" (below the panel).

* **Attention Matrix:** A 5x5 grid (5 rows, 5 columns).
  * **Row Labels (Left, Vertical):** "Attention" (title), followed by the tokens: "is" (blue box), "all" (red box), "will" (dashed blue box), "the" (dashed red box), "best" (dashed red box).
  * **Column Labels (Bottom, Horizontal):** The same five tokens in the same order and styling as the row labels.
  * **Matrix Content:** A grid where specific cells are filled with solid yellow, representing attention weights or alignments. The pattern is not a simple diagonal.

* **Legend/Key:** Located below the matrix, it explicitly maps the token styles:
  * Solid blue box: "is"
  * Solid red box: "all"
  * Dashed blue box: "will"
  * Dashed red box: "the"
  * Dashed red box: "best"

### Detailed Analysis

**Process Flow (Panel a):**

1. The system appears to be drafting or predicting sequences of tokens ("is", "will", "that" vs. "all", "the", "best").

2. A probability score (`P`) is calculated for each draft sequence. The left sequence ("is"-led) has a high probability (`0.85`) exceeding a threshold `ε`, leading to a "Continue" decision.

3. The right sequence ("all"-led) has a lower probability (`0.65`) below `ε`, triggering an "Early Stop!" decision.

4. The dashed arrow suggests the "is" token from the continued draft is used as input to generate or evaluate the next potential sequence ("all", "the", "best").

**Attention Matrix Details (Panel b):**

The yellow-filled cells in the 5x5 grid indicate which tokens attend to which other tokens. Mapping the grid (Row, Column) with (1,1) as top-left:

* **Row 1 ("is"):** Attends to Column 1 ("is") and Column 2 ("all").

* **Row 2 ("all"):** Attends to Column 1 ("is"), Column 2 ("all"), and Column 3 ("will").

* **Row 3 ("will"):** Attends to Column 2 ("all") and Column 4 ("the").

* **Row 4 ("the"):** Attends to Column 3 ("will") and Column 5 ("best").

* **Row 5 ("best"):** Attends to Column 4 ("the") and Column 5 ("best").
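Encoding the listed cells as a boolean mask makes it easy to check properties such as how many tokens attend to each column. This Python sketch only transcribes the (row, column) pairs listed above. The variable names are illustrative.

```python
tokens = ["is", "all", "will", "the", "best"]

# Yellow cells as listed above: row index -> set of attended column indices,
# 1-indexed with (1, 1) at the top-left.
yellow_cells = {
    1: {1, 2},
    2: {1, 2, 3},
    3: {2, 4},
    4: {3, 5},
    5: {4, 5},
}

# Boolean mask: mask[r][c] is True when row token r attends to column token c.
n = len(tokens)
mask = [[(c + 1) in yellow_cells[r + 1] for c in range(n)] for r in range(n)]

# Count how many rows attend to each column token.
in_degree = {tokens[c]: sum(mask[r][c] for r in range(n)) for c in range(n)}
print(in_degree)  # → {'is': 2, 'all': 3, 'will': 2, 'the': 2, 'best': 2}
```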

### Key Observations

1. **Color-Coded Correspondence:** The blue/red and solid/dashed styling is consistently maintained between the draft sequences in panel (a) and the attention matrix labels in panel (b), creating a clear visual link.

2. **Probabilistic Gating:** The core mechanism is a probability threshold (`ε`) that decides whether to continue generating a sequence or to stop early, optimizing computational resources.

3. **Attention Pattern:** The attention matrix does not show a simple 1:1 alignment. Tokens attend to a small subset of others, primarily their immediate neighbors in the sequence and the key tokens ("is", "all") from the drafting stage. The "all" token (red) receives attention from three of the five tokens (rows 1 to 3).

4. **Asymmetric Process:** The "Continue" path (blue) uses a generic "Attention" input, while the "Early Stop" path (red) takes the "is" token from the first path as its input.

### Interpretation

This diagram illustrates an efficiency optimization technique for autoregressive text generation, such as **speculative decoding with early stopping**.

* **What it demonstrates:** The system runs two drafting steps, apparently in sequence, since the dashed arrow feeds "is" from the first step into the second. The first step produces a high-confidence draft that continues. The second produces a lower-confidence draft, which is terminated early because its probability falls below the threshold (`P_all < ε`). This avoids wasting computation on unlikely continuations.

* **Relationship between elements:** Panel (a) shows the *decision logic* based on sequence probability. Panel (b) shows the *underlying mechanism* (attention) that likely informs those probability calculations. The attention matrix reveals the model's focus during verification, showing how tokens relate to each other to compute the `P` scores.

* **Notable insight:** The "Early Stop!" value (`P_all = 0.65`) is not extremely low. The same `ε` must also satisfy `P_is = 0.85 > ε`, so the figure implies 0.65 < ε < 0.85. Drafting is therefore cut off even at moderate confidence, which favors reliable drafts over long ones. The attention pattern highlights "all" as a central token in the stopped sequence, which may be a key factor in its lower probability compared with the "is"-led sequence. The process aims to maintain generation quality (`Continue` on high-probability paths) while improving speed (`Early Stop` on lower-probability paths).
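Under the speculative-decoding reading above, the simplest form of verification is greedy: drafted tokens are kept only while they match what the target model would have produced. The sketch below is an assumption, not the method shown in the diagram. `greedy_verify` is hypothetical, and the target model's per-position predictions are taken as given; in practice they would come from one parallel forward pass. Sampling-based schemes use a probabilistic acceptance rule instead.

```python
from typing import List, Sequence


def greedy_verify(drafted: Sequence[str], target_predictions: Sequence[str]) -> List[str]:
    """Accept the longest prefix of the draft that matches the target model.

    target_predictions[i] is the (hypothetical) target model's token at draft
    position i, plus one extra prediction after the last drafted token.
    """
    if len(target_predictions) != len(drafted) + 1:
        raise ValueError("expected one target prediction per drafted token, plus one")
    accepted: List[str] = []
    for draft_tok, target_tok in zip(drafted, target_predictions):
        if draft_tok != target_tok:
            accepted.append(target_tok)  # first mismatch: take the target's token and stop
            return accepted
        accepted.append(draft_tok)  # match: the drafted token is accepted
    accepted.append(target_predictions[-1])  # every draft token matched: keep the bonus token
    return accepted
```

For example, `greedy_verify(["is", "all"], ["is", "the", "best"])` accepts "is", rejects "all", and returns `["is", "the"]`, so a single verification pass still yields two tokens.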


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Diagram: Early-stopping Drafting and Dynamic Verification Process

### Overview

The image depicts two interconnected diagrams illustrating a natural language processing (NLP) model's decision-making process. Diagram (a) shows an "Early-stopping Drafting" mechanism with probabilistic thresholds, while diagram (b) demonstrates "Dynamic Verification" through attention weight visualization. The system evaluates text generation at multiple stages, using attention mechanisms and probability calculations to determine continuation or termination of text production.

### Components/Axes

**Diagram (a): Early-stopping Drafting**

- **Funnel Structure**:
  - Left branch: "Continue" path (blue)
  - Right branch: "Early Stop!" path (red)

- **Key Elements**:
  - Probability conditions (ε is the threshold):
    - `P_is = 0.85 > ε` (Continue condition)
    - `P_all = 0.65 < ε` (Early Stop condition)
  - Text components:
    - "is", "will", "that" (Continue path)
    - "all", "the", "best" (Early Stop path)
  - Components:
    - `M_D` (Model Decision node)
    - "Attention" (Input/processing stage)

**Diagram (b): Dynamic Verification**

- **Attention Grid**:
  - 5x5 matrix visualizing attention weights
  - Words listed vertically: "is", "all", "will", "the", "best"
  - Color coding:
    - Yellow squares indicate attention weights
    - Intensity varies by square darkness

- **Legend**:
  - "Attention" label with yellow highlighting

### Detailed Analysis

**Diagram (a) Trends**:

1. Continue path (blue) has higher probability (0.85) than Early Stop path (0.65)
2. Text components differ between paths:
   - Continue: "is", "will", "that"
   - Early Stop: "all", "the", "best"
3. Both paths originate from `M_D` and "Attention" input

**Diagram (b) Trends**:

1. Attention weights show:
   - Strongest focus on "is" (darkest yellow)
   - Moderate attention to "all" and "will"
   - Weakest attention to "best" (lightest yellow)
2. Grid structure suggests sequential attention pattern across words

### Key Observations

1. Probabilistic thresholding determines text generation continuation

2. Attention mechanism prioritizes different words based on context

3. Early stopping occurs when the continuation probability falls below the threshold ε; the figure shows this for `P_all = 0.65`, which implies ε > 0.65

4. Attention weights correlate with text component importance

### Interpretation

This system demonstrates a hybrid approach to text generation:

1. **Probabilistic Control**: The model uses calculated probabilities (`P_is`, `P_all`) to decide between continuing text generation or terminating early, with ε representing a confidence threshold.

2. **Attention-Driven Verification**: The attention grid reveals how the model dynamically verifies text components, with stronger attention given to critical words like "is" and "all".

3. **Contextual Decision Making**: The divergence between Continue and Early Stop paths suggests the model evaluates different text segments independently, using attention weights to inform its decisions.

4. **Efficiency Optimization**: The early-stopping mechanism likely prevents unnecessary computation for low-confidence text segments, while maintaining quality through attention-based verification.

The diagrams collectively illustrate an adaptive text generation system that balances computational efficiency with semantic coherence through probabilistic thresholds and attention-based verification.
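One common way an attention grid like this arises in speculative decoding is a tree attention mask. This is offered as an assumption, not a reading of the figure's cells. Candidates from several drafting steps are packed into one sequence, and each token may attend only to itself and its ancestors in the draft tree. The tree below is hypothetical: "is" and "will" are first-step candidates, and "all", "the", "best" are alternative continuations of "is". Attention to the shared prefix is omitted.

```python
from typing import List, Sequence


def tree_attention_mask(parents: Sequence[int]) -> List[List[bool]]:
    """Build a mask where token i may attend to token j iff j is i or an ancestor of i.

    parents[i] is the index of token i's parent in the packed sequence, or -1 if
    token i directly continues the verified prefix (a root-level candidate).
    """
    n = len(parents)
    mask = [[False] * n for _ in range(n)]
    for i in range(n):
        j = i
        while j != -1:  # walk up the tree from token i to the root
            mask[i][j] = True
            j = parents[j]
    return mask


tokens = ["is", "all", "will", "the", "best"]
parents = [-1, 0, -1, 0, 0]  # hypothetical tree: "all", "the", "best" follow "is"
for tok, row in zip(tokens, tree_attention_mask(parents)):
    print(f"{tok:>5}", "".join("#" if m else "." for m in row))
```

Because "the" and "best" cannot see "all", the verifier can score all three alternative continuations of "is" in a single forward pass without them interfering.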
