## Diagram: Early-stopping Drafting and Dynamic Verification Process

### Overview

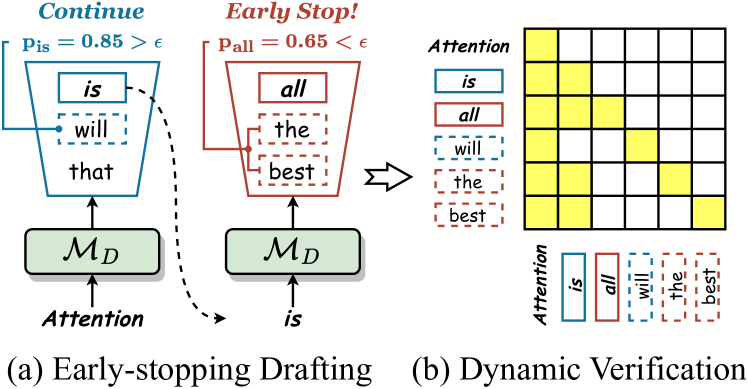

The image contains two linked diagrams of a language model's text-generation process. Diagram (a), "Early-stopping Drafting," compares a token's probability against a threshold ε to decide whether drafting continues or stops. Diagram (b), "Dynamic Verification," visualizes attention weights over the candidate words as a grid. Together they show a system that uses token probabilities and attention to decide when to keep generating and when to stop.

### Components/Axes

**Diagram (a): Early-stopping Drafting**

- **Funnel Structure**:

  - Left branch: "Continue" path (blue)

  - Right branch: "Early Stop!" path (red)

- **Key Elements**:

  - Probability thresholds:

    - `P_is = 0.85 > ε` (Continue condition)

    - `P_all = 0.65 < ε` (Early Stop condition)

  - Text components:

    - "is", "will", "that" (Continue path)

    - "all", "the", "best" (Early Stop path)

  - Components:

    - `M_D` (Model Decision node)

    - "Attention" (Input/processing stage)

**Diagram (b): Dynamic Verification**

- **Attention Grid**:

  - 5x5 matrix visualizing attention weights

  - Words listed vertically: "is", "all", "will", "the", "best"

  - Color coding:

    - Yellow squares indicate attention weights

    - Intensity varies by square darkness

- **Legend**:

  - "Attention" label with yellow highlighting

### Detailed Analysis

**Diagram (a) Trends**:

1. Continue path (blue) has higher probability (0.85) than Early Stop path (0.65)

2. Text components differ between paths:

   - Continue: "is", "will", "that"

   - Early Stop: "all", "the", "best"

3. Both paths originate from `M_D` and "Attention" input
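The Continue / Early Stop decision in diagram (a) can be sketched as a simple threshold comparison. This is a minimal illustration, not the implementation behind the figure: the function name `drafting_decision` and the value ε = 0.7 are assumptions (the diagram only implies that ε lies between 0.65 and 0.85), and treating a probability exactly equal to ε as a stop is also an assumption, since the figure does not show that case. The probabilities 0.85 and 0.65 are read from the diagram.

```python
def drafting_decision(prob: float, epsilon: float) -> str:
    """Return "continue" if the token probability exceeds epsilon, else "early_stop".

    Hypothetical helper: the diagram shows only the comparisons
    P > epsilon (Continue) and P < epsilon (Early Stop).
    """
    return "continue" if prob > epsilon else "early_stop"


# Assumed threshold; any value strictly between 0.65 and 0.85 fits the figure.
EPSILON = 0.7

# Probabilities taken from diagram (a).
for token, prob in [("is", 0.85), ("all", 0.65)]:
    print(f"P_{token} = {prob}: {drafting_decision(prob, EPSILON)}")
```

With the assumed threshold, "is" falls on the Continue branch and "all" on the Early Stop branch, matching the two paths in the diagram.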

**Diagram (b) Trends**:

1. Attention weights show:

   - Strongest focus on "is" (darkest yellow)

   - Moderate attention to "all" and "will"

   - Weakest attention to "best" (lightest yellow)

2. Grid structure suggests sequential attention pattern across words
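One possible reading of a "sequential attention pattern" is a causal layout, in which each word attends only to itself and to the words before it. The sketch below builds such a 5x5 grid for the five words in diagram (b). It is illustrative only: the causal layout is an assumption the figure does not confirm, the function name `causal_attention` is hypothetical, and the all-zero scores are placeholders rather than values read from the image.

```python
import math

# Row/column labels from diagram (b).
tokens = ["is", "all", "will", "the", "best"]


def causal_attention(scores):
    """Row-wise softmax where position i sees only positions 0..i (assumed causal layout)."""
    n = len(scores)
    weights = []
    for i in range(n):
        visible = scores[i][: i + 1]
        peak = max(visible)  # subtract the max for numerical stability
        exps = [math.exp(s - peak) for s in visible]
        total = sum(exps)
        # Masked (future) positions get zero weight.
        weights.append([e / total for e in exps] + [0.0] * (n - i - 1))
    return weights


# Placeholder scores: all zeros, so each row is uniform over its visible prefix.
grid = causal_attention([[0.0] * len(tokens) for _ in tokens])
for tok, row in zip(tokens, grid):
    print(f"{tok:>5} " + " ".join(f"{w:.2f}" for w in row))
```

Each row sums to 1, and the non-zero cells form a lower triangle, which is the shape a strictly sequential pattern would produce in a grid like the one shown.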

### Key Observations

1. Probabilistic thresholding determines text generation continuation

2. Attention mechanism prioritizes different words based on context

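3. Early stopping occurs when a token's probability falls below the threshold ε. In the example, `P_all = 0.65 < ε` triggers the stop while `P_is = 0.85 > ε` does not, so ε lies between 0.65 and 0.85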

4. Attention weights correlate with text component importance

### Interpretation

This system demonstrates a hybrid approach to text generation:

1. **Probabilistic Control**: The model uses calculated probabilities (`P_is`, `P_all`) to decide between continuing text generation or terminating early, with ε representing a confidence threshold.

2. **Attention-Driven Verification**: The attention grid shows how the model weights the candidate words during verification, with the strongest attention on "is", moderate attention on "all" and "will", and the weakest on "best".

3. **Contextual Decision Making**: The divergence between Continue and Early Stop paths suggests the model evaluates different text segments independently, using attention weights to inform its decisions.

4. **Efficiency Optimization**: The early-stopping mechanism likely prevents unnecessary computation for low-confidence text segments, while maintaining quality through attention-based verification.

The diagrams collectively illustrate an adaptive text generation system that balances computational efficiency with semantic coherence through probabilistic thresholds and attention-based verification.