## Diagram: Model Configuration Comparison

### Overview

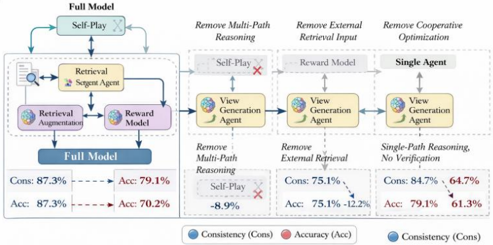

The diagram compares a "Full Model" against three ablated configurations, each of which removes one component: Multi-Path Reasoning, External Retrieval Input, or Cooperative Optimization. Each configuration is scored on two performance metrics: **Consistency (Cons)** and **Accuracy (Acc)**. The configurations are drawn as interconnected components with labeled metrics.

---

### Components/Axes

- **Legend**:

  - Blue circles represent **Consistency (Cons)**.

  - Red circles represent **Accuracy (Acc)**.

- **Main Configurations**:

  1. **Full Model**: Includes all components (Self-Play, Retrieval, Reward Model, View Generation Agent).

  2. **Self-Play**: Removes Multi-Path Reasoning.

  3. **Reward Model**: Removes External Retrieval Input.

  4. **Single Agent**: Removes Cooperative Optimization.

- **Key Components**:

  - Self-Play

  - Retrieval Augmentation

  - Reward Model

  - View Generation Agent

  - Single-Path Reasoning

  - Verification

---

### Detailed Analysis

#### Full Model

- **Consistency (Cons)**: 87.3% (blue dot).

- **Accuracy (Acc)**: 79.1% (red dot).

#### Self-Play (Remove Multi-Path Reasoning)

- **Consistency (Cons)**: 75.1% (blue dot).

- **Accuracy (Acc)**: 70.2% (red dot).

- **Trend**: Both metrics decline compared to the Full Model, with a sharper drop in consistency (-12.2 percentage points) than in accuracy (-8.9 percentage points).

#### Reward Model (Remove External Retrieval Input)

- **Consistency (Cons)**: 84.7% (blue dot).

- **Accuracy (Acc)**: 61.3% (red dot).

- **Trend**: Consistency falls only slightly (-2.6 percentage points), but accuracy drops by 17.8 percentage points, the largest accuracy loss of any configuration.

#### Single Agent (Remove Cooperative Optimization)

- **Consistency (Cons)**: 84.7% (blue dot).

- **Accuracy (Acc)**: 64.7% (red dot).

- **Trend**: Consistency matches the Reward Model configuration (84.7%). Accuracy is 14.4 percentage points below the Full Model and 3.4 points above the Reward Model configuration.
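
The per-configuration differences quoted above can be re-derived from the reported scores. The following is a minimal sketch, not part of the original figure: the dictionary keys and the helper names `deltas_vs_full` and `largest_drop` are illustrative assumptions, and the only data used are the eight percentages listed in this section.

```python
# Scores transcribed from the diagram description (in percent).
# Configuration labels are illustrative; they pair each diagram label
# with the component it removes.
SCORES = {
    "Full Model": {"cons": 87.3, "acc": 79.1},
    "Self-Play (no Multi-Path Reasoning)": {"cons": 75.1, "acc": 70.2},
    "Reward Model (no External Retrieval Input)": {"cons": 84.7, "acc": 61.3},
    "Single Agent (no Cooperative Optimization)": {"cons": 84.7, "acc": 64.7},
}


def deltas_vs_full(scores, baseline="Full Model"):
    """Return each ablation's change from the baseline, in percentage points."""
    base = scores[baseline]
    return {
        name: {metric: round(vals[metric] - base[metric], 1) for metric in base}
        for name, vals in scores.items()
        if name != baseline
    }


def largest_drop(deltas, metric):
    """Return the configuration whose score on `metric` fell the most."""
    return min(deltas, key=lambda name: deltas[name][metric])


if __name__ == "__main__":
    deltas = deltas_vs_full(SCORES)
    for name, d in deltas.items():
        print(f"{name}: cons {d['cons']:+.1f} pp, acc {d['acc']:+.1f} pp")
    print("Largest consistency drop:", largest_drop(deltas, "cons"))
    print("Largest accuracy drop:", largest_drop(deltas, "acc"))
```

Differences are expressed in percentage points (absolute differences between percentages) rather than relative percent changes, which is how the figures in this section are computed.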

---

### Key Observations

1. **Multi-Path Reasoning Impact**: Removing it (Self-Play) causes the largest consistency drop (-12.2 percentage points) but the smallest accuracy drop (-8.9 percentage points), suggesting it matters most for producing consistent outputs.

2. **External Retrieval Importance**: Removing it (Reward Model) largely preserves consistency (-2.6 percentage points) but severely harms accuracy (-17.8 percentage points), suggesting that retrieved evidence mainly supports answer correctness.

3. **Cooperative Optimization Trade-off**: Removing it (Single Agent) leaves consistency at 84.7% (-2.6 percentage points) and lowers accuracy to 64.7% (-14.4 percentage points), so both metrics lag behind the Full Model.

4. **Full Model Dominance**: Achieves both the highest accuracy (79.1%) and the highest consistency (87.3%); no simplified configuration beats it on either metric.

---

### Interpretation

The diagram highlights how much each component contributes to performance:

- **Accuracy vs. Consistency**: The Full Model leads on both metrics, so the full set of components does not trade one metric for the other. Simplified models (e.g., Reward Model) retain most of the consistency but lose substantial accuracy.

- **Component Criticality**: Multi-Path Reasoning and External Retrieval are pivotal for consistency and accuracy, respectively. Removing Multi-Path Reasoning costs 12.2 percentage points of consistency, and removing External Retrieval costs 17.8 percentage points of accuracy.

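- **Practical Implications**: The Single Agent configuration offers a middle ground among the simplified models: it keeps consistency at 84.7% while losing less accuracy than the Reward Model configuration. This may be useful in resource-constrained scenarios where both metrics need balancing.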

This analysis underscores the importance of component-specific contributions in model design, guiding decisions on which elements to retain or optimize based on application needs.