## Bar Chart: Estimated Annotation Cost Comparison

### Overview

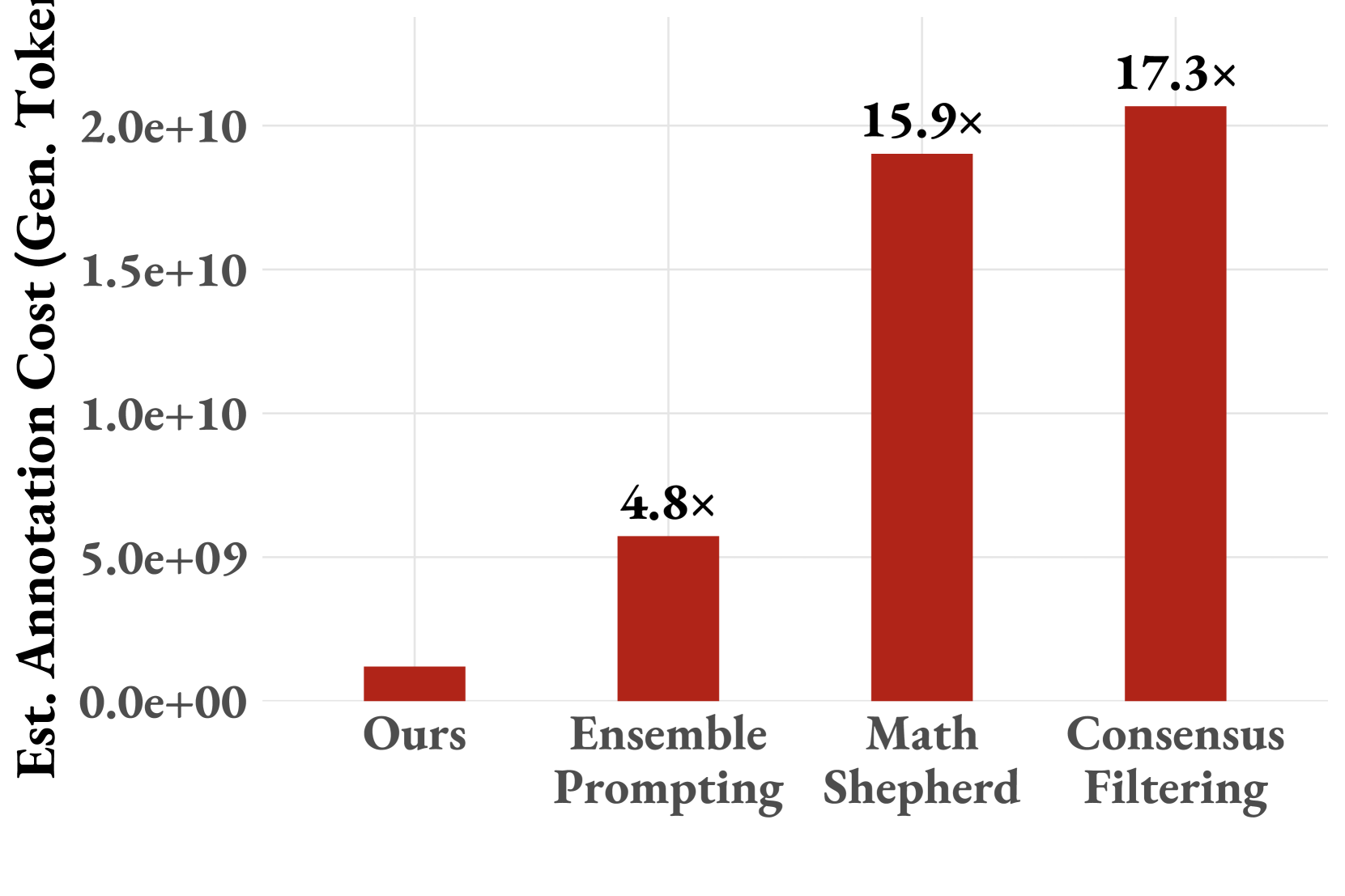

The image is a bar chart comparing the estimated annotation cost (in generated tokens) of four methods: "Ours", "Ensemble Prompting", "Math Shepherd", and "Consensus Filtering". The y-axis shows the estimated annotation cost, with tick labels in scientific notation. Each method is drawn as a red bar, and a multiplier above each bar gives its cost relative to the "Ours" method.

### Components/Axes

* **X-axis:** Categorical axis representing different methods: "Ours", "Ensemble Prompting", "Math Shepherd", and "Consensus Filtering".

* **Y-axis:** Numerical axis labeled "Est. Annotation Cost (Gen. Token)". The scale runs from 0.0e+00 to 2.0e+10, with intermediate tick marks at 5.0e+09, 1.0e+10, and 1.5e+10.

* **Bars:** Red bars representing the estimated annotation cost for each method.

* **Multipliers:** Numerical values above each bar indicating the relative cost compared to the "Ours" method.

### Detailed Analysis

* **Ours:** The bar for "Ours" is the shortest, rising from 0.0e+00 to roughly 3e+08 (0.3e+09) generated tokens. This value is read off the chart by eye and is only an estimate.

* **Ensemble Prompting:** The bar for "Ensemble Prompting" is labeled 4.8x, meaning about 4.8 times the cost of "Ours".

* **Math Shepherd:** The bar for "Math Shepherd" is labeled 15.9x, meaning about 15.9 times the cost of "Ours".

* **Consensus Filtering:** The bar for "Consensus Filtering" is labeled 17.3x, meaning about 17.3 times the cost of "Ours".
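The multipliers can be turned into rough absolute token counts by scaling the baseline. The snippet below is a minimal sketch of that arithmetic, not a reconstruction of the original data: `BASELINE_TOKENS` is an assumption taken from the visual estimate for "Ours" (about 0.3e+09), and the names `MULTIPLIERS` and `estimated_costs` are hypothetical. Because the baseline is read off the chart by eye, the resulting absolute values are only as reliable as that estimate.

```python
# Multipliers as labeled above the bars; "Ours" is 1.0 by definition (baseline).
MULTIPLIERS = {
    "Ours": 1.0,
    "Ensemble Prompting": 4.8,
    "Math Shepherd": 15.9,
    "Consensus Filtering": 17.3,
}

# Assumption: baseline cost estimated by eye from the chart (about 0.3e+09 tokens).
BASELINE_TOKENS = 0.3e9


def estimated_costs(baseline, multipliers):
    """Scale the baseline cost by each method's multiplier."""
    return {method: baseline * factor for method, factor in multipliers.items()}


costs = estimated_costs(BASELINE_TOKENS, MULTIPLIERS)
for method, tokens in costs.items():
    # Print in the same scientific notation style as the y-axis.
    print(f"{method:<20} {tokens:.2e}")
```

Changing `BASELINE_TOKENS` rescales every bar proportionally, so the relative ordering of the methods does not depend on how accurately the baseline was read.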

### Key Observations

* The "Ours" method has the lowest estimated annotation cost.

* "Ensemble Prompting" costs 4.8x as much as "Ours", but less than a third of what "Math Shepherd" or "Consensus Filtering" costs.

* "Math Shepherd" (15.9x) and "Consensus Filtering" (17.3x) have the highest estimated annotation costs, with "Consensus Filtering" about 9% higher than "Math Shepherd".
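Since every multiplier is expressed relative to "Ours", any two methods can be compared by dividing their multipliers. The following is a small sketch of that calculation using only the labeled multipliers; the helper names `relative_cost` and `savings_with_ours` are hypothetical and introduced here for illustration.

```python
# Multipliers as labeled above the bars; "Ours" is 1.0 by definition (baseline).
MULTIPLIERS = {
    "Ours": 1.0,
    "Ensemble Prompting": 4.8,
    "Math Shepherd": 15.9,
    "Consensus Filtering": 17.3,
}


def relative_cost(method_a, method_b, multipliers=MULTIPLIERS):
    """Cost of method_a expressed as a multiple of method_b's cost."""
    return multipliers[method_a] / multipliers[method_b]


def savings_with_ours(method, multipliers=MULTIPLIERS):
    """Fraction of generated tokens saved by using "Ours" instead of `method`."""
    return 1.0 - multipliers["Ours"] / multipliers[method]


# Compare the two most expensive methods with each other.
print(f"{relative_cost('Consensus Filtering', 'Math Shepherd'):.3f}")

# Token savings of "Ours" relative to each alternative, as percentages.
for name in ("Ensemble Prompting", "Math Shepherd", "Consensus Filtering"):
    print(f"{name:<20} {savings_with_ours(name):.1%}")
```

These ratios depend only on the labeled multipliers, so they hold regardless of how precisely the absolute bar heights can be read from the chart.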

### Interpretation

The bar chart shows that "Ours" has the lowest estimated annotation cost, measured in generated tokens, of the four methods. "Ensemble Prompting" costs 4.8x as much, while "Math Shepherd" (15.9x) and "Consensus Filtering" (17.3x) each cost more than an order of magnitude more than "Ours". This suggests that "Ours" is the more token-efficient approach to annotation, potentially because it generates fewer tokens per annotation or requires fewer annotations overall, although the chart itself does not show where the savings come from.