## Diagram: EpMAN System Architecture and Performance Comparison

### Overview

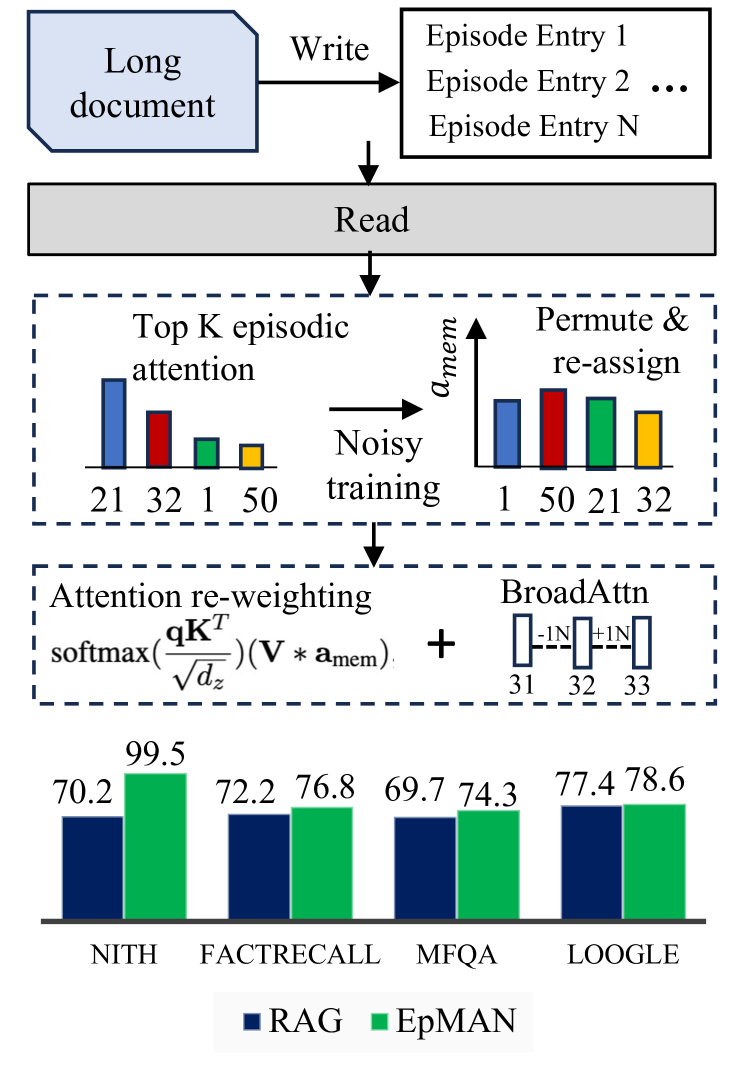

This image is a composite technical diagram illustrating the architecture and performance of a system called **EpMAN** (likely short for "Episodic Memory AttentioN"). It has three main sections: a top-level flowchart showing document processing, a middle section detailing an attention mechanism and its training, and a bottom bar chart comparing EpMAN against a baseline (RAG) on four datasets.

### Components/Axes

The diagram is segmented into three horizontal regions:

1. **Top Region (Document Processing Flowchart):**

* **Component 1:** A blue, document-shaped box labeled "Long document".

* **Action Arrow:** An arrow labeled "Write" points from the document to a list.

* **Component 2:** A white box containing the text: "Episode Entry 1", "Episode Entry 2", "...", "Episode Entry N".

* **Action Arrow:** An arrow points downward from the episode list to a gray bar.

* **Component 3:** A gray horizontal bar labeled "Read".

2. **Middle Region (Attention Mechanism Diagram):**

* **Dashed Box 1 (Left):** Labeled "Top K episodic attention". Contains a bar chart with four bars of different heights and colors (blue, red, green, yellow). Below the bars are the indices: "21", "32", "1", "50".

* **Action Arrow:** An arrow labeled "Noisy training" points from the left box to the right box.

* **Dashed Box 2 (Right):** Labeled "Permute & re-assign". Contains a bar chart with the same four colored bars but in a different order and with a y-axis label "a_mem". The indices below are now: "1", "50", "21", "32".

* **Lower Dashed Box:** Contains two components connected by a plus sign (+).

    * **Left Component:** Labeled "Attention re-weighting". Contains the mathematical formula: `softmax( (qK^T) / sqrt(d_z) ) (V * a_mem)`.

    * **Right Component:** Labeled "BroadAttn". Shows three vertical bars with indices "31", "32", "33". Above the bars are annotations "-1N" and "+1N".

3. **Bottom Region (Performance Bar Chart):**

* **Chart Type:** Grouped bar chart.

* **X-axis (Categories):** Four dataset names: "NITH", "FACTRECALL", "MFQA", "LOOGLE".

* **Y-axis:** Not explicitly labeled with a title or scale, but numerical values are placed directly above each bar, indicating performance scores (likely accuracy or a similar metric).

* **Legend:** Located at the bottom center. A dark blue square is labeled "RAG". A green square is labeled "EpMAN".

* **Data Series:**

    * **RAG (Dark Blue Bars):**

        * NITH: 70.2

        * FACTRECALL: 72.2

        * MFQA: 69.7

        * LOOGLE: 77.4

    * **EpMAN (Green Bars):**

        * NITH: 99.5

        * FACTRECALL: 76.8

        * MFQA: 74.3

        * LOOGLE: 78.6

### Detailed Analysis

**1. Document Processing Flow:**

The process begins with a "Long document". A "Write" operation decomposes it into multiple "Episode Entry" items (1 through N). These entries are then processed by a "Read" operation.
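The write/read flow can be sketched in a few lines of Python. This is a minimal illustration only: the figure does not say how entries are created or scored, so the fixed word-count chunking, the word-overlap relevance score, and the names `write_episodes` and `read_episodes` are all assumptions made for the example.

```python
def write_episodes(document, words_per_episode=4):
    """'Write': split a long document into fixed-size word chunks (episode entries).

    The chunk size is a hypothetical parameter, not taken from the figure.
    """
    words = document.split()
    return [
        " ".join(words[i:i + words_per_episode])
        for i in range(0, len(words), words_per_episode)
    ]


def read_episodes(query, episodes):
    """'Read': score every entry against the query.

    Toy relevance (an assumption): fraction of query words present in the entry.
    """
    query_words = set(query.lower().split())
    scores = []
    for entry in episodes:
        entry_words = set(entry.lower().split())
        scores.append(len(query_words & entry_words) / max(len(query_words), 1))
    return scores


document = "the cat sat on the mat while the dog slept near the door"
episodes = write_episodes(document, words_per_episode=4)
scores = read_episodes("where did the dog sleep", episodes)
best_entry = scores.index(max(scores))
```

Here the entry containing "dog" receives the highest score, which is the kind of per-entry relevance signal the later attention stages would consume.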

**2. Episodic Attention & Training:**

The "Read" output feeds into an attention mechanism. Initially, "Top K episodic attention" selects specific entries (indices 21, 32, 1, 50) with varying attention weights (represented by bar heights: blue > red > green > yellow). During "Noisy training", these attention weights (`a_mem`) are permuted and reassigned to different entries (new order: 1, 50, 21, 32), likely to improve robustness.
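The selection and "Permute & re-assign" steps might look like the following sketch. The figure shows only the entry indices, so the weight values below are invented for illustration, and reading the noise step as "keep the weights, shuffle which entry receives each one" is an assumption about what the diagram depicts.

```python
import random


def top_k_episodes(scores, k):
    """Return (entry_index, weight) pairs for the k highest-scoring entries."""
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return [(i, scores[i]) for i in ranked[:k]]


def permute_and_reassign(top_k, rng):
    """Noisy-training sketch: keep the weights in place, shuffle the entry indices."""
    indices = [i for i, _ in top_k]
    weights = [w for _, w in top_k]
    rng.shuffle(indices)
    return list(zip(indices, weights))


# Illustrative scores: only entries 21, 32, 1, and 50 are non-zero (values assumed).
scores = [0.0] * 51
scores[21], scores[32], scores[1], scores[50] = 0.4, 0.3, 0.2, 0.1

selected = top_k_episodes(scores, k=4)
noisy = permute_and_reassign(selected, random.Random(0))
```

After the shuffle the same four entries and the same four weights are present, but a weight may now be attached to a different entry, which is what forces the downstream model to tolerate imperfect retrieval rankings.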

**3. Attention Re-weighting & BroadAttn:**

The episodic attention weights (`a_mem`) enter an "Attention re-weighting" formula that modifies standard scaled dot-product attention (`softmax(qK^T/√d_z)`) by multiplying the value matrix `V` element-wise with `a_mem`, so values from low-weight entries contribute less to the output. This is combined with a "BroadAttn" component shown for entries 31, 32, and 33. Because entry 32 is one of the top-K entries, the "-1N" and "+1N" annotations most plausibly mark its immediately preceding and following neighbors, suggesting that attention is broadened to include entries adjacent to a selected one.
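The formula `softmax(qK^T/√d_z)(V * a_mem)` can be written out in plain Python for a single query. This is a sketch under one stated assumption: `a_mem` holds one scalar per key/value row (the weight of the episode entry that row belongs to), and each row of `V` is scaled by its scalar. The figure does not specify the shapes involved.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def reweighted_attention(q, K, V, a_mem):
    """Compute softmax(q K^T / sqrt(d_z)) (V * a_mem) for one query vector q."""
    d_z = len(q)
    logits = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_z) for k in K]
    probs = softmax(logits)
    # Scale each value row by the episodic weight of its entry (assumed broadcast).
    scaled_V = [[a * v for v in row] for a, row in zip(a_mem, V)]
    return [
        sum(p * row[j] for p, row in zip(probs, scaled_V))
        for j in range(len(V[0]))
    ]


q = [1.0, 0.0]
K = [[1.0, 0.0], [0.0, 1.0]]
V = [[1.0, 0.0], [0.0, 1.0]]

plain = reweighted_attention(q, K, V, a_mem=[1.0, 1.0])   # reduces to standard attention
masked = reweighted_attention(q, K, V, a_mem=[1.0, 0.0])  # second entry suppressed
```

With all weights set to 1 the result is ordinary attention; setting an entry's weight to 0 removes its values from the output without changing the softmax probabilities themselves.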

**4. Performance Comparison (Bar Chart):**

* **Spatial Grounding:** The legend is centered at the bottom. For each dataset category on the x-axis, the RAG (dark blue) bar is on the left, and the EpMAN (green) bar is on the right.

* **Trend Verification:** For all four datasets, the green EpMAN bar is taller than the blue RAG bar, indicating superior performance.

* **Data Points:**

    * **NITH:** EpMAN (99.5) shows a massive ~29.3 point improvement over RAG (70.2).

    * **FACTRECALL:** EpMAN (76.8) outperforms RAG (72.2) by ~4.6 points.

    * **MFQA:** EpMAN (74.3) leads RAG (69.7) by ~4.6 points.

    * **LOOGLE:** EpMAN (78.6) has a slight edge over RAG (77.4) of ~1.2 points.
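The point differences quoted above follow directly from the bar labels and can be re-derived with a short script. The dictionary names are chosen for this example; the values are the ones read from the chart.

```python
# Scores as printed above each bar in the chart.
rag = {"NITH": 70.2, "FACTRECALL": 72.2, "MFQA": 69.7, "LOOGLE": 77.4}
epman = {"NITH": 99.5, "FACTRECALL": 76.8, "MFQA": 74.3, "LOOGLE": 78.6}

# Absolute gain of EpMAN over RAG per dataset, rounded to one decimal place.
gains = {name: round(epman[name] - rag[name], 1) for name in rag}

largest = max(gains, key=gains.get)
smallest = min(gains, key=gains.get)
```

The computed gains are 29.3, 4.6, 4.6, and 1.2 points, with NITH the largest and LOOGLE the smallest.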

### Key Observations

1. **Significant Performance Gain:** The most striking observation is the dramatic performance improvement of EpMAN over RAG on the **NITH** dataset (99.5 vs. 70.2).

2. **Consistent Superiority:** EpMAN outperforms RAG on all four presented benchmarks, though the margin ranges from about 1.2 points (LOOGLE) to about 29.3 points (NITH).

3. **Architectural Complexity:** The diagram suggests EpMAN's core innovation lies in its episodic memory write/read process and a specialized attention mechanism that re-weights the value vectors using the episodic attention weights (`a_mem`) and incorporates a "BroadAttn" module.

4. **Training Strategy:** The inclusion of "Noisy training" via permutation and re-assignment of attention weights is highlighted as a key step, likely for regularization or robustness.

### Interpretation

This diagram presents **EpMAN** as an alternative to a standard retrieval-augmented generation (RAG) baseline for handling long documents: the document is broken into episodic entries, and relevance over those entries feeds directly into the model's attention. Its core innovation is an **episodic memory-augmented attention mechanism**.

* **How it works:** Rather than relying on standard attention alone, EpMAN uses the episodic attention weights (`a_mem`) to scale the value vectors inside the attention computation, so information from specific episodic entries is emphasized or suppressed during the "read" phase. The "BroadAttn" component appears to extend attention to entries adjacent to the selected ones.

* **Why it matters:** The performance chart supports the design: EpMAN scores higher than RAG on all four benchmarks, with the largest gain on **NITH** (99.5 vs. 70.2). The size of that gain suggests that EpMAN's memory mechanism is especially well-suited to the kind of retrieval that dataset requires, possibly precise recall of specific facts from long contexts.

* **Underlying Message:** The diagram argues that moving from a generic RAG approach to one with structured episodic memory and specialized attention re-weighting yields a more powerful and robust system for knowledge-intensive tasks. The "Noisy training" step is presented as a crucial technique for achieving this robustness. The varying performance gains across datasets indicate that the advantage of EpMAN is task-dependent, offering the most significant benefits where precise episodic recall is critical.
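One plausible reading of the BroadAttn panel, where entry 32 appears flanked by entries 31 and 33 under "-1N" and "+1N" labels, is that each selected entry is expanded to include its immediate neighbors. The sketch below illustrates that reading only; the function name `broaden`, the `radius` parameter, and the total of 51 entries are hypothetical, and the figure does not confirm this interpretation.

```python
def broaden(top_indices, num_entries, radius=1):
    """Expand each selected entry to include neighbors within `radius` positions.

    Indices falling outside [0, num_entries) are dropped.
    """
    expanded = set()
    for i in top_indices:
        for j in range(i - radius, i + radius + 1):
            if 0 <= j < num_entries:
                expanded.add(j)
    return sorted(expanded)


single = broaden([32], num_entries=51)
all_top_k = broaden([21, 32, 1, 50], num_entries=51)
```

For entry 32 alone this yields entries 31, 32, and 33, matching the three bars in the panel. Applied to all four top-K indices, the neighbor of entry 50 that would fall past the end of the memory is clipped.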