## Diagram: Episodic Memory Augmented Retrieval

### Overview

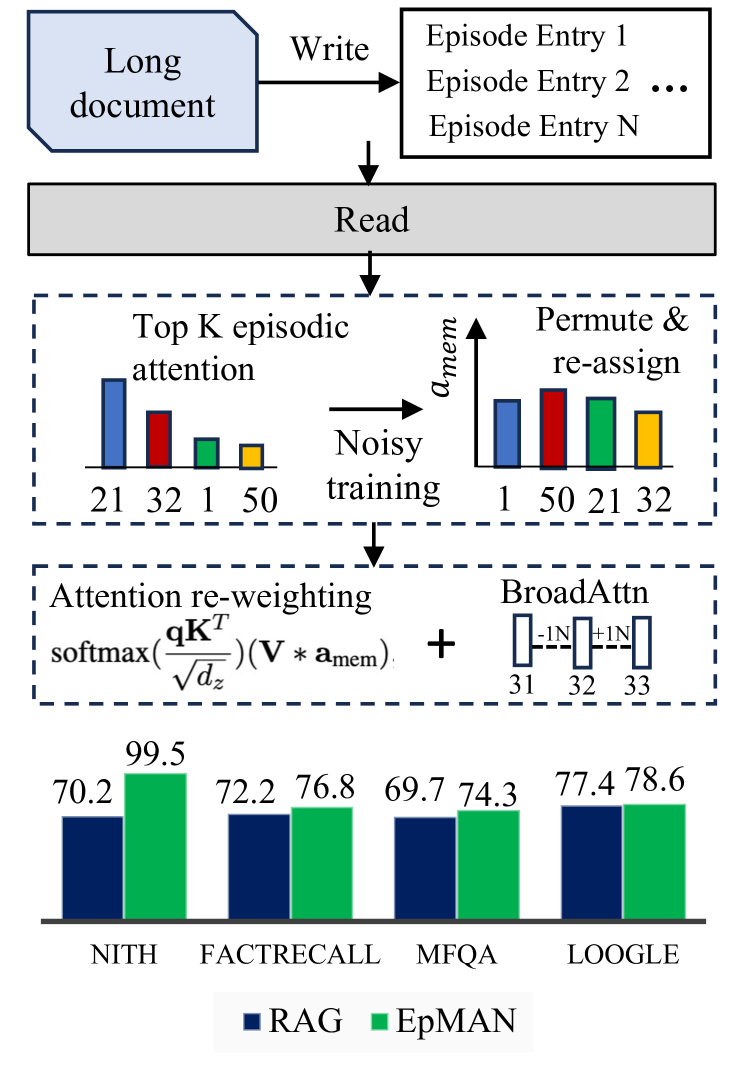

This diagram illustrates a process for augmenting retrieval with episodic memory, likely within a natural language processing or information retrieval system. The process involves writing information from a long document into episodic memory, reading from that memory, and then using attention mechanisms to re-weight and integrate the retrieved information. The diagram culminates in a bar chart comparing the performance of different methods.

### Components/Axes

The diagram consists of several key components:

* **Long Document:** The initial input source.

* **Write:** An arrow indicating the process of storing information.

* **Episodic Memory:** Represented as a series of "Episode Entry" blocks (1 to N).

* **Read:** An arrow indicating the process of retrieving information.

* **Top K episodic attention:** A bar chart of attention weights over the top K retrieved episodes, with bars colored red, blue, orange, and yellow. The x-axis labels, which appear to be episode indices, are 21, 32, 1, 50.

* **Permute & re-assign:** A bar chart with bars colored red, blue, orange, and yellow. The x-axis labels are the same four indices in a different order: 1, 50, 21, 32.

* **Noisy training:** A label connecting the two attention charts with an arrow.

* **Attention re-weighting:** A mathematical formula: $\mathrm{softmax}\left(\frac{qK^\top}{\sqrt{d_z}}\right)(V * a_{\text{mem}})$.

* **BroadAttn:** A bar chart with three bars, one dark gray and two light gray. The x-axis labels are the consecutive indices 31, 32, 33.

* **Bar Chart (Performance Comparison):** This chart compares the performance of RAG and EpMAN across four datasets: NITH, FACTRECALL, MFQA, and LOOGLE. The y-axis represents a percentage score.
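The write and read steps can be sketched as follows. This is a minimal illustration under stated assumptions, not EpMAN's implementation: episodes are fixed-length token chunks, each episode has a precomputed key vector (a real system would obtain it from an encoder), and the read step keeps the K episodes whose keys score highest against the query and softmax-normalizes those K scores into episodic weights. The function names `write_episodes` and `read_top_k` are hypothetical, and episode indices are 0-based rather than 1 to N as in the diagram.

```python
import math
from typing import List, Tuple


def write_episodes(tokens: List[str], episode_len: int) -> List[List[str]]:
    """Write step (assumed): split a long document into consecutive episode entries."""
    return [tokens[i:i + episode_len] for i in range(0, len(tokens), episode_len)]


def read_top_k(query: List[float], keys: List[List[float]], k: int) -> List[Tuple[int, float]]:
    """Read step (assumed): score each episode key by dot product with the query,
    keep the k best episodes, and softmax-normalize their scores so the
    returned episodic weights sum to 1."""
    scores = [sum(q * x for q, x in zip(query, key)) for key in keys]
    best = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    m = max(scores[i] for i in best)  # subtract the max for numerical stability
    exps = [math.exp(scores[i] - m) for i in best]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(best, exps)]


# Usage with toy data: three episodes and hand-written 2-d keys.
episodes = write_episodes("a b c d e f g".split(), episode_len=3)
keys = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
top = read_top_k([0.0, 2.0], keys, k=2)
print(episodes)             # [['a', 'b', 'c'], ['d', 'e', 'f'], ['g']]
print([i for i, _ in top])  # [1, 2]
```

Whether the K surviving weights are renormalized, as here, or left as a slice of a softmax over all N episodes is a design choice the diagram does not settle.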

### Detailed Analysis or Content Details

**Episodic Attention Charts:**

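* **Top K episodic attention:** The bars represent attention weights over the retrieved episodes. Read left to right, the red, blue, orange, and yellow bars are labeled 21, 32, 1, and 50. These numbers are x-axis labels (episode indices), not bar heights.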

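* **Permute & re-assign:** The bars represent attention weights after permutation. The bars keep their color order (red, blue, orange, yellow), but their labels become 1, 50, 21, and 32, so each weight is reassigned to a different episode from the same set (for example, the red bar moves from episode 21 to episode 1).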
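The "Permute & re-assign" step can be illustrated as a training-time perturbation that keeps the retrieved set of episodes and the pool of attention weights but shuffles which episode receives which weight, matching the reordered labels in the charts (21, 32, 1, 50 becoming 1, 50, 21, 32). This is an assumed reading of the "Noisy training" arrow rather than a confirmed description of the method; the function name `permute_and_reassign`, the uniformly random shuffle, and the example weights are all hypothetical.

```python
import random
from typing import Dict


def permute_and_reassign(top_k: Dict[int, float], rng: random.Random) -> Dict[int, float]:
    """Hypothetical noisy-training step: the same episodes keep the same
    pool of weights, but each weight is reassigned to a randomly chosen episode."""
    episodes = list(top_k)
    weights = [top_k[e] for e in episodes]
    rng.shuffle(weights)  # in-place random permutation of the weights
    return dict(zip(episodes, weights))


# Usage: four retrieved episodes with illustrative (made-up) weights.
top_k = {21: 0.4, 32: 0.3, 1: 0.2, 50: 0.1}
noisy = permute_and_reassign(top_k, random.Random(0))
print(noisy)
```

Because only the assignment changes, the weights still sum to 1 and the same episodes stay in context; what changes is which episode each weight is attached to.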

**Attention Re-weighting Formula:**

* The formula applies a softmax to the query-key dot products $qK^\top$ scaled by $1/\sqrt{d_z}$ (where $d_z$ is the key dimension), then multiplies the resulting attention weights by the values $V$ after they have been re-weighted by the episodic memory attention $a_{\text{mem}}$.
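The re-weighting formula can be written out for a single query as below, taking the $\sqrt{d_z}$ scaling inside the softmax as in standard scaled dot-product attention. This plain-Python sketch assumes, beyond what the diagram states, that $a_{\text{mem}}$ supplies one scalar per token (the episodic weight of the episode the token came from) and that `*` in the formula means scaling each value row by that scalar. The function name `episodic_reweighted_attention` is hypothetical. With every entry of `a_mem` equal to 1, it reduces to ordinary scaled dot-product attention.

```python
import math
from typing import List


def softmax(xs: List[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def episodic_reweighted_attention(
    q: List[float], K: List[List[float]], V: List[List[float]], a_mem: List[float]
) -> List[float]:
    """softmax(q K^T / sqrt(d_z)) (V * a_mem) for one query vector.

    K and V hold one row per token; a_mem holds one scalar per token
    (assumed: the episodic weight of the episode that token belongs to).
    """
    d_z = len(q)
    scores = [sum(qi * ki for qi, ki in zip(q, k_row)) / math.sqrt(d_z) for k_row in K]
    attn = softmax(scores)
    out = [0.0] * len(V[0])
    for w, v_row, a in zip(attn, V, a_mem):
        # Scale the token's value row by its episodic weight, then accumulate
        # it with the token-level attention weight.
        for j, v in enumerate(v_row):
            out[j] += w * a * v
    return out


# Two tokens with identical keys get equal attention (0.5 each);
# the second token's episode carries half the episodic weight.
out = episodic_reweighted_attention(
    q=[1.0, 1.0],
    K=[[1.0, 0.0], [1.0, 0.0]],
    V=[[2.0], [4.0]],
    a_mem=[1.0, 0.5],
)
print(out)  # [2.0]
```

Applying $a_{\text{mem}}$ to the values rather than to the scores leaves the token-level softmax untouched, so the episodic weights scale each episode's contribution after attention has been distributed.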

**BroadAttn Chart:**

* The bars represent attention weights over three consecutive episodes: 31 (dark gray), 32 (light gray), and 33 (light gray). As in the episodic attention charts, these numbers are x-axis labels, not bar heights.
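One reading of the BroadAttn chart, suggested by its name and by the consecutive labels 31, 32, 33 (episode 32 also appears among the top-K episodes), is that attention is widened from a retrieved episode to its immediate neighbors. The sketch below shows only that index expansion under this assumption; the function name `broaden` and the neighbor window of 1 are hypothetical, and how weights are distributed over the added episodes is not specified by the diagram.

```python
from typing import Iterable, List


def broaden(episode_ids: Iterable[int], n_episodes: int, window: int = 1) -> List[int]:
    """Hypothetical BroadAttn-style expansion: add up to `window` neighboring
    episodes on each side of every retrieved episode, clipped to [0, n_episodes)."""
    expanded = set()
    for e in episode_ids:
        for n in range(e - window, e + window + 1):
            if 0 <= n < n_episodes:
                expanded.add(n)
    return sorted(expanded)


print(broaden([32], n_episodes=100))    # [31, 32, 33]
print(broaden([0, 32], n_episodes=33))  # [0, 1, 31, 32]
```

Clipping at the document boundaries keeps the expansion valid for the first and last episodes.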

**Performance Comparison Bar Chart:**

* **NITH:** RAG: ~70.2, EpMAN: ~99.5

* **FACTRECALL:** RAG: ~72.2, EpMAN: ~76.8

* **MFQA:** RAG: ~69.7, EpMAN: ~74.3

* **LOOGLE:** RAG: ~77.4, EpMAN: ~78.6
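The size of EpMAN's margin over RAG on each benchmark follows directly from the approximate values above. The short calculation below uses only those chart readings, so the resulting gaps are approximate as well.

```python
# Approximate scores read off the bar chart: (RAG, EpMAN), in percent.
scores = {
    "NITH": (70.2, 99.5),
    "FACTRECALL": (72.2, 76.8),
    "MFQA": (69.7, 74.3),
    "LOOGLE": (77.4, 78.6),
}

# EpMAN minus RAG, rounded to one decimal to match the precision of the readings.
gaps = {name: round(epman - rag, 1) for name, (rag, epman) in scores.items()}
largest = max(gaps, key=gaps.get)

print(gaps)     # {'NITH': 29.3, 'FACTRECALL': 4.6, 'MFQA': 4.6, 'LOOGLE': 1.2}
print(largest)  # NITH
```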

**Legend:**

* **RAG:** Dark Blue

* **EpMAN:** Green

### Key Observations

* EpMAN outperforms RAG on all four datasets. The gap is largest on NITH (about 29 points, ~70.2 vs. ~99.5), roughly 4.6 points on FACTRECALL and MFQA, and only about 1.2 points on LOOGLE.

* Between the "Top K episodic attention" and "Permute & re-assign" charts, the same four episodes (1, 21, 32, 50) are retained, but the attention weights are reassigned among them.

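* The BroadAttn chart covers three consecutive episodes (31 to 33), and episode 32 also appears in the top-K set, which suggests that attention is broadened from a retrieved episode to its neighbors.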

### Interpretation

The diagram illustrates a system designed to improve retrieval performance by incorporating episodic memory. The "Write" step stores the long document as a sequence of episode entries, and the "Read" step retrieves the entries most relevant to the query. The "Top K episodic attention" step focuses on the K highest-scoring episodes, and the "Permute & re-assign" step, linked by the "Noisy training" arrow, appears to perturb which episode receives which weight during training, so that the model does not depend on one exact episodic weighting. The attention re-weighting formula is standard scaled dot-product attention in which the values are additionally weighted by the episodic attention $a_{\text{mem}}$.

The performance comparison shows EpMAN ahead of RAG on every benchmark, suggesting that the episodic memory augmentation is effective. If NITH is a needle-in-a-haystack style task, as the name suggests, the large gap there may indicate that EpMAN is particularly good at locating a single relevant passage within a long context, although the chart alone does not establish why. The permutation step plausibly makes the attention mechanism more robust by preventing it from becoming overly reliant on the exact weights assigned to specific episodes, and BroadAttn appears to extend attention to episodes neighboring those retrieved.

Overall, the diagram positions EpMAN as an alternative to a standard RAG pipeline: retrieved episodes are explicitly weighted by episodic attention inside the attention computation, and the model is trained with permuted episodic weights, presumably to make it robust to imperfect retrieval. The benchmark results suggest this approach outperforms the RAG baseline, most clearly on NITH.