TECHNICAL ASSET FINGERPRINT

bc4fb819ab91002031f5cf01

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

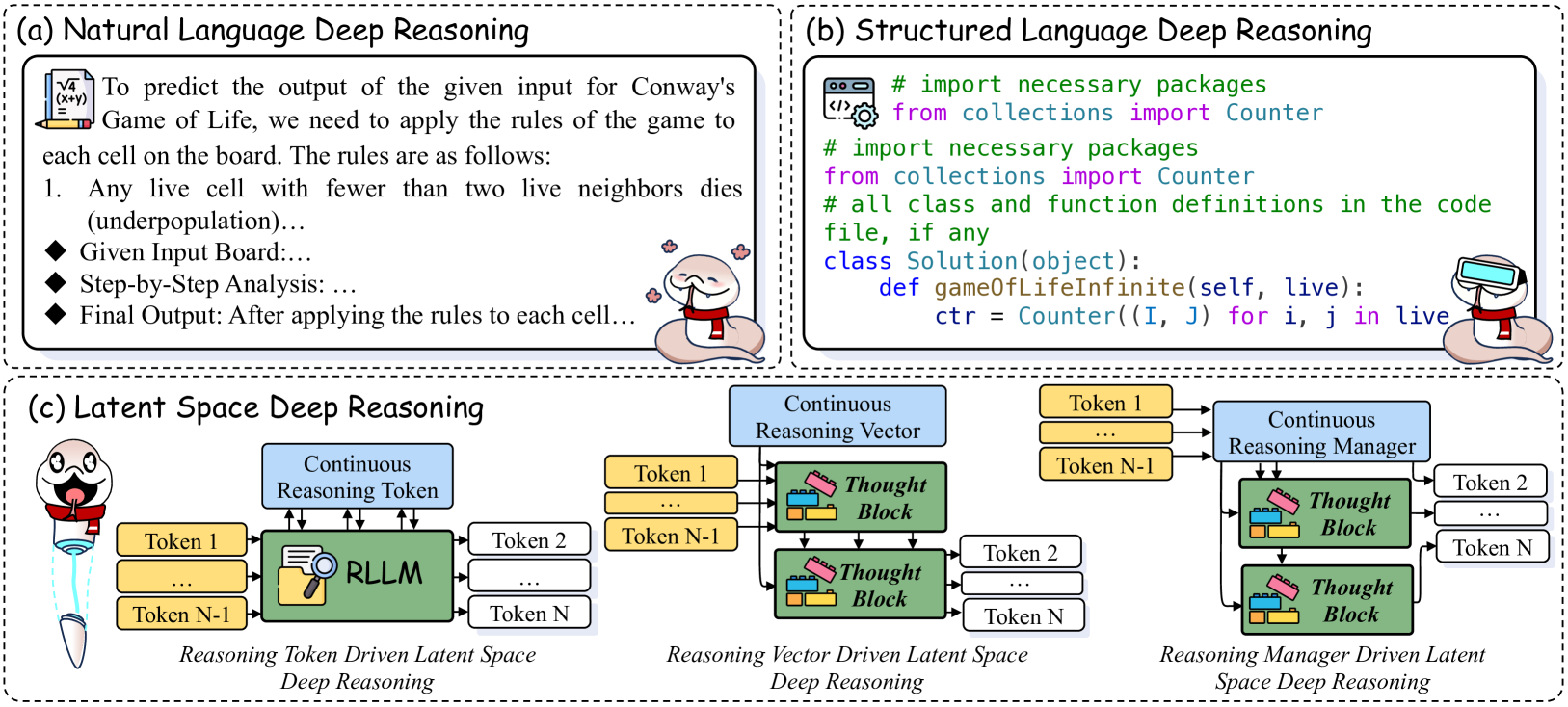

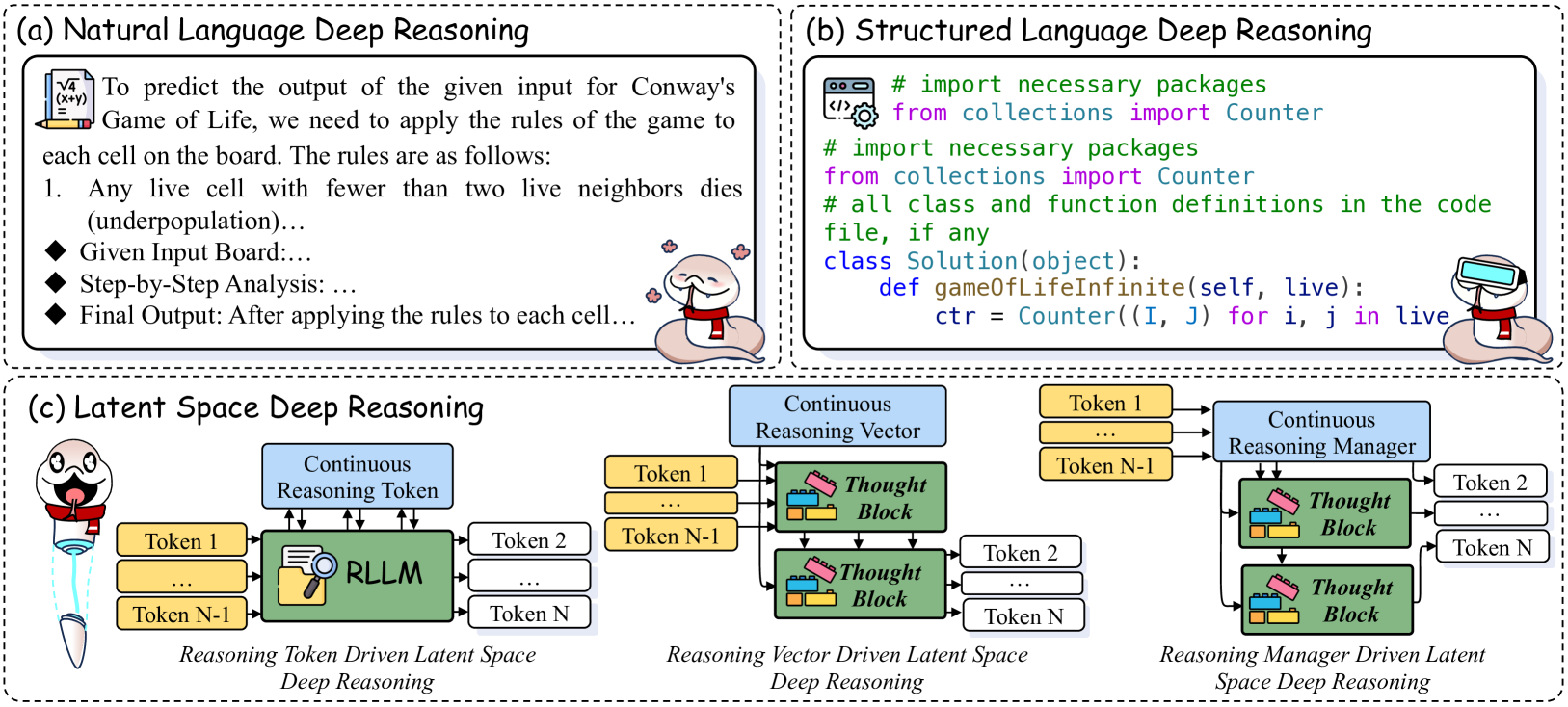

## Diagram: Deep Reasoning Approaches

### Overview

The image presents three different approaches to deep reasoning: Natural Language Deep Reasoning, Structured Language Deep Reasoning, and Latent Space Deep Reasoning. Each approach is illustrated with a diagram and a brief explanation.

### Components/Axes

* **(a) Natural Language Deep Reasoning:**

* Text describing the process of predicting the output of Conway's Game of Life using natural language rules.

* Steps outlined: Given Input Board, Step-by-Step Analysis, Final Output.

* **(b) Structured Language Deep Reasoning:**

* Code snippet (Python) demonstrating the implementation of Conway's Game of Life using the `Counter` object from the `collections` module.

* Class definition: `class Solution(object):` with a method `gameOfLifeInfinite(self, live):`

* **(c) Latent Space Deep Reasoning:**

* Diagrams illustrating three variations: Reasoning Token Driven, Reasoning Vector Driven, and Reasoning Manager Driven.

* Components: Continuous Reasoning Token/Vector/Manager (blue), Tokens 1 to N (yellow), Thought Block (green, with a Lego-like structure), RLLM (green, with a magnifying glass icon).

* Flow: Tokens feed into the Continuous Reasoning component, which then interacts with Thought Blocks to generate further tokens.

### Detailed Analysis

**(a) Natural Language Deep Reasoning:**

* The text describes the process of applying the rules of Conway's Game of Life to predict the output.

* Only the first rule is legible before the text is truncated: any live cell with fewer than two live neighbors dies (underpopulation).

* The process involves a given input board, step-by-step analysis, and a final output.
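The panel's three stages (Given Input Board, Step-by-Step Analysis, Final Output) correspond to a per-cell update on a grid. The sketch below assumes the standard four Conway rules, because only the underpopulation rule is legible in the figure. It also treats cells beyond the edge of a finite board as dead, which is another assumption since the panel does not say how edges are handled. The function names are hypothetical.

```python
def count_live_neighbors(board, r, c):
    """Count live cells among the (up to) eight neighbors of (r, c).

    Cells outside the grid are treated as dead (an assumption for finite boards).
    """
    rows, cols = len(board), len(board[0])
    total = 0
    for dr in (-1, 0, 1):
        for dc in (-1, 0, 1):
            if dr == 0 and dc == 0:
                continue  # skip the cell itself
            nr, nc = r + dr, c + dc
            if 0 <= nr < rows and 0 <= nc < cols:
                total += board[nr][nc]
    return total


def next_state(alive, neighbors):
    """Apply the standard Game of Life rules to a single cell."""
    if alive:
        # Underpopulation (< 2), survival (2 or 3), overpopulation (> 3)
        return 1 if neighbors in (2, 3) else 0
    # Reproduction: a dead cell with exactly three live neighbors comes alive
    return 1 if neighbors == 3 else 0


def step(board):
    """Return the next generation without modifying the input board."""
    return [
        [next_state(board[r][c], count_live_neighbors(board, r, c))
         for c in range(len(board[0]))]
        for r in range(len(board))
    ]


# A vertical "blinker" becomes horizontal after one generation.
board = [
    [0, 1, 0],
    [0, 1, 0],
    [0, 1, 0],
]
print(step(board))  # [[0, 0, 0], [1, 1, 1], [0, 0, 0]]
```

The sketch builds a new grid rather than updating in place. This ensures every neighbor count comes from the previous generation, as the rules require.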

**(b) Structured Language Deep Reasoning:**

* The code snippet imports the `Counter` object from the `collections` module.

* A class `Solution` is defined with a method `gameOfLifeInfinite` that takes `self` and `live` as arguments.

* Inside the method, a `Counter` object `ctr` is initialized based on the input `live`.
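The panel shows only the first line of the method body. The figure does not show the rest of the code, so any completion is an assumption. A minimal one, following the widely circulated `Counter`-based solution to the infinite-board variant of this problem, could look like this:

```python
from collections import Counter


class Solution(object):
    def gameOfLifeInfinite(self, live):
        """Advance one generation on an unbounded board.

        `live` is a set of (row, col) tuples for the currently live cells;
        the result is the set of live cells in the next generation.
        """
        # Each live cell adds one to the count of each of its eight neighbors,
        # so ctr[(I, J)] ends up as the number of live neighbors of (I, J).
        ctr = Counter((I, J)
                      for i, j in live
                      for I in range(i - 1, i + 2)
                      for J in range(j - 1, j + 2)
                      if I != i or J != j)
        # Born with exactly 3 neighbors; survives with 2 only if already live.
        return {ij for ij in ctr
                if ctr[ij] == 3 or (ctr[ij] == 2 and ij in live)}


# A horizontal blinker centred at (0, 0) flips to vertical.
print(sorted(Solution().gameOfLifeInfinite({(0, -1), (0, 0), (0, 1)})))
# [(-1, 0), (0, 0), (1, 0)]
```

Storing only live coordinates means the board never has to be resized. The work per generation grows with the number of live cells rather than with the board's area.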

**(c) Latent Space Deep Reasoning:**

* **Reasoning Token Driven Latent Space Deep Reasoning:**

* Tokens 1 to N-1 feed into the RLLM (reasoning large language model).

* The Continuous Reasoning Token also feeds into the RLLM.

* The RLLM outputs Tokens 2 to N.

* **Reasoning Vector Driven Latent Space Deep Reasoning:**

* Tokens 1 to N-1 feed into a Thought Block.

* The Continuous Reasoning Vector also feeds into the Thought Block.

* The Thought Block outputs Tokens 2 to N.

* **Reasoning Manager Driven Latent Space Deep Reasoning:**

* Tokens 1 to N-1 feed into a Thought Block.

* The Continuous Reasoning Manager also feeds into the Thought Block.

* The Thought Block outputs Tokens 2 to N.
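In all three variants the inputs are Tokens 1 to N-1 and the outputs are Tokens 2 to N. This matches the usual next-token-prediction alignment of autoregressive language models: each output position holds the token that follows the corresponding input position. The sketch below uses made-up token ids and a hypothetical helper name. It shows only that alignment, not the reasoning components.

```python
def shift_for_next_token(tokens):
    """Pair each prefix of `tokens` with the token that follows it.

    Returns (inputs, targets): inputs are tokens 1..N-1 and targets are
    tokens 2..N, so inputs[k] is aligned with targets[k] == tokens[k + 1].
    """
    if len(tokens) < 2:
        raise ValueError("need at least two tokens")
    return tokens[:-1], tokens[1:]


# Made-up token ids standing in for Token 1 .. Token N (N = 5).
tokens = [101, 7, 42, 9, 102]
inputs, targets = shift_for_next_token(tokens)
print(inputs)   # [101, 7, 42, 9]
print(targets)  # [7, 42, 9, 102]
```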

### Key Observations

* The three approaches represent different levels of abstraction and formalization in deep reasoning.

* Natural language provides a human-readable description, while structured language offers a precise implementation.

* Latent space reasoning utilizes continuous representations and learned models (RLLM) to perform reasoning.

* The "Thought Block" appears to be a key component in the Latent Space Reasoning, acting as a processing unit for tokens and continuous reasoning vectors/managers.

### Interpretation

The image illustrates a progression from intuitive, human-understandable reasoning (Natural Language) to formalized, machine-executable reasoning (Structured Language) and finally to abstract, learned reasoning (Latent Space). The Latent Space approach suggests a move towards more complex and potentially more powerful reasoning systems that can operate on continuous representations and learn from data. The use of "Thought Blocks" implies a modular approach to reasoning, where individual blocks perform specific reasoning tasks. The different variations (Token, Vector, Manager driven) likely represent different ways of controlling or guiding the reasoning process within the latent space.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Diagram: Deep Reasoning Approaches

### Overview

The image presents a comparative diagram illustrating three different approaches to deep reasoning: Natural Language Deep Reasoning, Structured Language Deep Reasoning, and Latent Space Deep Reasoning. Each approach is depicted within a rectangular frame labeled (a), (b), and (c) respectively. The diagram uses visual metaphors (computer, code editor, brain) alongside textual descriptions to explain the process.

### Components/Axes

The diagram is divided into three main sections, each representing a different reasoning approach. Each section contains:

* A title indicating the reasoning approach.

* A textual description of the approach.

* A visual representation of the process.

* Illustrative icons representing input and output.

### Detailed Analysis or Content Details

**(a) Natural Language Deep Reasoning:**

* **Title:** Natural Language Deep Reasoning

* **Text:** "To predict the output of the given input for Conway's Game of Life, we need to apply the rules of the game to each cell on the board. The rules are as follows: 1. Any live cell with fewer than two live neighbors dies (underpopulation). Given Input Board:… Step-by-Step Analysis:… Final Output: After applying the rules to each cell…"

* **Visual:** A computer screen displaying a grid-like pattern (representing Conway's Game of Life board) with some cells highlighted. A brain icon is positioned above the screen, with curved lines suggesting a thought process connecting the brain to the screen.

* **Icons:** A computer monitor on the left, a brain icon in the center, and a robot head on the right.

**(b) Structured Language Deep Reasoning:**

* **Title:** Structured Language Deep Reasoning

* **Text:** "# import necessary packages from collections import Counter # import necessary packages from collections import Counter # all class and function definitions in the code file, if any class Solution(object): def gameOfLifeInfinite(self, live): ctr = Counter((I, J) for i, j in live…" (the snippet is cut off in the figure)

* **Visual:** A code editor window displaying Python code related to Conway's Game of Life. A robot head is positioned to the right of the code editor.

* **Icons:** A code editor icon on the left, and a robot head on the right.

**(c) Latent Space Deep Reasoning:**

* **Title:** Latent Space Deep Reasoning

* **Visual:** This section is more complex, depicting a flow diagram.

* **Left:** A circular icon with a brain and gears, labeled "Continuous Reasoning Token". This feeds into a block labeled "RLLM". The RLLM block outputs "Token 1", "Token N-1", and "Token N".

* **Center:** "Reasoning Vector Driven Latent Space Deep Reasoning". "Token 1", "Token N-1", and "Token N" are input into "Thought Block" boxes.

* **Right:** "Reasoning Manager Driven Latent Space Deep Reasoning". "Token 1", "Token N-1", and "Token N" are input into a "Continuous Reasoning Manager" which outputs "Token 2", "Token N".

* **Labels:** "Reasoning Token Driven Latent Space Deep Reasoning", "Reasoning Vector Driven Latent Space Deep Reasoning", "Reasoning Manager Driven Latent Space Deep Reasoning".

### Key Observations

* The diagram highlights a progression from more explicit (natural language, structured code) to more implicit (latent space) reasoning approaches.

* The Latent Space approach is significantly more complex visually, suggesting a higher level of abstraction.

* Panels (a) and (b) address the same problem (Conway's Game of Life) with different methods; panel (c) shows generic token flows rather than a specific task.

* The use of robot head icons in (a) and (b) suggests an automated output or solution.

### Interpretation

The diagram illustrates different paradigms for achieving deep reasoning in artificial intelligence. Natural Language Deep Reasoning relies on human-readable rules and explanations. Structured Language Deep Reasoning uses formal code to implement the reasoning process. Latent Space Deep Reasoning, presented as the most abstract approach, operates on continuous representations (tokens and vectors) inside the model. This may enable more flexible reasoning, at the cost of interpretability. The diagram suggests that as reasoning becomes more sophisticated, it moves away from explicit instructions and toward implicit, learned representations, and the visual complexity of the Latent Space section reflects this. Conway's Game of Life is the worked example in panels (a) and (b), showing the same task expressed in prose and in code. The diagram provides no data or numerical values; it is a conceptual comparison of AI reasoning strategies.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Diagram: Three Approaches to Deep Reasoning

### Overview

The image is a composite diagram illustrating three distinct paradigms for "Deep Reasoning," likely in the context of artificial intelligence or computational problem-solving. Panels (a) and (b) use Conway's Game of Life as a shared example to contrast prose and code, while panel (c) shows model-internal architectures. The diagram is divided into three main sections, labeled (a), (b), and (c), each with a distinct title and visual style.

### Components/Axes

The diagram is organized into three primary panels:

1. **Panel (a):** Titled "Natural Language Deep Reasoning." Contains a block of explanatory text and bullet points.

2. **Panel (b):** Titled "Structured Language Deep Reasoning." Contains a block of Python code.

3. **Panel (c):** Titled "Latent Space Deep Reasoning." Contains three sub-diagrams illustrating different internal architectures for reasoning.

Each panel features a small, similar cartoon character (a worm-like figure with a red scarf) in the bottom-right corner, acting as a mascot or visual anchor.

### Detailed Analysis

#### **Panel (a): Natural Language Deep Reasoning**

This section presents reasoning in human-readable, explanatory prose.

* **Text Transcription:**

> To predict the output of the given input for Conway's Game of Life, we need to apply the rules of the game to each cell on the board. The rules are as follows:

> 1. Any live cell with fewer than two live neighbors dies (underpopulation)...

> ◆ Given Input Board:...

> ◆ Step-by-Step Analysis: ...

> ◆ Final Output: After applying the rules to each cell...

* **Visual Elements:** A small icon of a notepad with a pencil is in the top-left corner of the text box.

#### **Panel (b): Structured Language Deep Reasoning**

This section presents reasoning in the form of executable code.

* **Code Transcription:**

```python
# import necessary packages
from collections import Counter
# import necessary packages
from collections import Counter
# all class and function definitions in the code file, if any
class Solution(object):
    def gameOfLifeInfinite(self, live):
        ctr = Counter((I, J) for i, j in live
        # ... (the snippet is cut off at this point in the figure)
```

* **Visual Elements:** A small icon of a code editor window (`</>`) with a gear is in the top-left corner of the code box. The code syntax is color-highlighted (e.g., `from`, `import`, `class`, `def` in blue/purple; strings in green).
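The method receives `live`, which the `Counter` idiom treats as a collection of live-cell coordinates. Panel (a) instead shows the "Given Input Board" as a grid. The hypothetical helpers below convert between the two representations. The names, the 0/1 grid encoding, and the `origin` parameter are illustrative assumptions.

```python
def board_to_live(board):
    """Convert a 0/1 grid into the set of (row, col) coordinates of live cells."""
    return {(r, c)
            for r, row in enumerate(board)
            for c, cell in enumerate(row)
            if cell}


def live_to_board(live, rows, cols, origin=(0, 0)):
    """Render live cells inside a rows x cols window whose top-left corner is
    `origin`; live cells outside the window are simply not drawn."""
    r0, c0 = origin
    return [[1 if (r0 + r, c0 + c) in live else 0 for c in range(cols)]
            for r in range(rows)]


board = [[0, 1, 0],
         [0, 1, 0],
         [0, 1, 0]]
live = board_to_live(board)
print(sorted(live))                        # [(0, 1), (1, 1), (2, 1)]
print(live_to_board(live, 3, 3) == board)  # True
```

Because the infinite-board representation can drift into negative coordinates, the `origin` argument lets a caller choose which window to draw.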

#### **Panel (c): Latent Space Deep Reasoning**

This section illustrates three abstract, internal architectures for reasoning within a model's latent space. Each sub-diagram shows a flow from input tokens to output tokens via a specialized processing block.

**Sub-diagram 1 (Left): "Reasoning Token Driven Latent Space Deep Reasoning"**

* **Components & Flow:**

* **Input:** A stack of yellow boxes labeled "Token 1", "...", "Token N-1".

* **Processing:** These tokens feed into a central green block labeled "RLLM" (with a magnifying glass icon). Above this block is a blue box labeled "Continuous Reasoning Token," with arrows pointing down into the RLLM block.

* **Output:** The RLLM block outputs a stack of white boxes labeled "Token 2", "...", "Token N".

* **Caption:** "Reasoning Token Driven Latent Space Deep Reasoning" is written below the diagram.
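The diagram does not say how the continuous reasoning token is produced. One common reading is that the model's hidden state is fed back as the next input embedding instead of being decoded into a word, so intermediate reasoning steps never leave the continuous space. The minimal sketch below assumes that reading. `toy_rllm`, the two-dimensional embeddings, and the tanh-of-mean "model" are hypothetical placeholders for a real RLLM.

```python
import math


def toy_rllm(sequence):
    """Hypothetical stand-in for the RLLM: map a sequence of input vectors to
    a hidden state (here, tanh of their element-wise mean)."""
    dim = len(sequence[0])
    mean = [sum(vec[d] for vec in sequence) / len(sequence) for d in range(dim)]
    return [math.tanh(x) for x in mean]


def reason_with_continuous_tokens(token_embeddings, num_latent_steps):
    """Append `num_latent_steps` continuous reasoning tokens to the input.

    Each hidden state is appended directly as the next input vector instead
    of being decoded into a discrete token.
    """
    sequence = list(token_embeddings)
    latent_tokens = []
    for _ in range(num_latent_steps):
        hidden = toy_rllm(sequence)
        latent_tokens.append(hidden)
        sequence.append(hidden)  # fed back as a continuous reasoning token
    return sequence, latent_tokens


embeddings = [[0.5, -0.2], [0.1, 0.3]]  # made-up embeddings for Tokens 1 .. N-1
sequence, latent = reason_with_continuous_tokens(embeddings, num_latent_steps=3)
print(len(sequence), len(latent))  # 5 3
```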

**Sub-diagram 2 (Center): "Reasoning Vector Driven Latent Space Deep Reasoning"**

* **Components & Flow:**

* **Input:** A stack of yellow boxes labeled "Token 1", "...", "Token N-1".

* **Processing:** These tokens feed into a vertical stack of two green "Thought Block" icons (with Lego-like bricks). Above the top Thought Block is a blue box labeled "Continuous Reasoning Vector," with arrows pointing down into the block.

* **Output:** The bottom Thought Block outputs a stack of white boxes labeled "Token 2", "...", "Token N".

* **Caption:** "Reasoning Vector Driven Latent Space Deep Reasoning" is written below the diagram.
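In this variant, a single continuous reasoning vector is injected into the hidden states before they pass through the stacked Thought Blocks. The sketch assumes the vector is added to every position and that each Thought Block is a small residual update. Both choices, along with the names `thought_block` and `forward_with_reasoning_vector`, are hypothetical, since the diagram shows only the data flow.

```python
import math


def thought_block(hidden, weight):
    """Hypothetical Thought Block: a tiny residual update of a hidden vector."""
    return [h + math.tanh(weight * h) for h in hidden]


def forward_with_reasoning_vector(hidden_states, reasoning_vector, weights):
    """Add a continuous reasoning vector to every position, then run the
    result through a stack of Thought Blocks (one weight per block)."""
    outputs = []
    for hidden in hidden_states:
        # Inject the reasoning vector into this position's hidden state.
        h = [x + r for x, r in zip(hidden, reasoning_vector)]
        for w in weights:  # e.g. two stacked Thought Blocks, as drawn
            h = thought_block(h, w)
        outputs.append(h)
    return outputs


hidden_states = [[0.2, -0.1], [0.0, 0.4]]  # stand-ins for Tokens 1 .. N-1
steer = [0.05, -0.05]                      # hypothetical reasoning vector
out = forward_with_reasoning_vector(hidden_states, steer, weights=[0.5, 0.5])
print([[round(x, 3) for x in h] for h in out])
```

With a zero vector and zero weights the stack reduces to the identity. This makes it easy to isolate how much the reasoning vector alone changes the outputs.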

**Sub-diagram 3 (Right): "Reasoning Manager Driven Latent Space Deep Reasoning"**

* **Components & Flow:**

* **Input:** A stack of yellow boxes labeled "Token 1", "...", "Token N-1".

* **Processing:** These tokens feed into a blue box labeled "Continuous Reasoning Manager." This manager has arrows pointing down into a vertical stack of two green "Thought Block" icons.

* **Output:** The bottom Thought Block outputs a stack of white boxes labeled "Token 2", "...", "Token N".

* **Caption:** "Reasoning Manager Driven Latent Space Deep Reasoning" is written below the diagram.
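The diagram places the Continuous Reasoning Manager above the Thought Blocks but does not specify how it controls them. The sketch below implements one hypothetical policy: the manager keeps applying a Thought Block until the hidden state stops changing, or until a step budget runs out. This gives different inputs different amounts of computation. `reasoning_manager`, `thought_block`, `tol`, and `max_steps` are illustrative names, not components named in the figure.

```python
import math


def thought_block(hidden):
    """Hypothetical Thought Block: a damped update that moves each component
    toward zero, since |tanh(h)| < |h| for h != 0."""
    return [0.5 * (h + math.tanh(h)) for h in hidden]


def reasoning_manager(hidden, tol=1e-3, max_steps=10):
    """Hypothetical manager: apply Thought Blocks until the largest change in
    the state falls below `tol`, or `max_steps` is reached.

    Returns the final state and the number of Thought Block steps used.
    """
    for n_steps in range(1, max_steps + 1):
        updated = thought_block(hidden)
        change = max(abs(a - b) for a, b in zip(updated, hidden))
        hidden = updated
        if change < tol:
            return hidden, n_steps
    return hidden, max_steps


for token_state in ([0.001, -0.002], [0.9, -1.2]):  # made-up token states
    final, steps = reasoning_manager(token_state)
    print(steps, [round(x, 4) for x in final])
```

In this loop the nearly settled first state halts after one step. The second state uses the full budget of ten steps.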

### Key Observations

1. **Progression of Abstraction:** The panels show a clear progression from explicit, human-centric reasoning (natural language), to formal, machine-executable reasoning (code), to an internalized, sub-symbolic reasoning process (latent space).

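2. **Common Problem:** Panels (a) and (b) frame their reasoning around the same problem, Conway's Game of Life: (a) explains the rules in prose and (b) implements them in code. Panel (c) uses generic tokens and does not refer to the game.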

3. **Latent Space Variants:** The three sub-diagrams in (c) propose different mechanisms for guiding latent space reasoning: via a special token, via a continuous vector, or via a managing module that controls thought blocks.

4. **Visual Consistency:** The use of consistent colors (yellow for input tokens, white for output tokens, green for processing blocks, blue for continuous reasoning elements) and the recurring cartoon character creates visual cohesion across the different concepts.

### Interpretation

This diagram serves as a conceptual taxonomy for how advanced AI systems might perform complex reasoning tasks.

* **Natural Language Reasoning (a)** mimics human explanation, emphasizing transparency and interpretability. It's suitable for communicating logic but may be inefficient for execution.

* **Structured Language Reasoning (b)** represents the current standard for precise, verifiable computation. It's executable but can lack the flexibility and intuitive leaps of human thought.

* **Latent Space Reasoning (c)** represents a frontier where reasoning occurs as a continuous, distributed process within a neural network's internal representations. The three variants explore how this process might be structured and controlled—either by injecting a reasoning signal (token/vector) or by using a dedicated manager module to orchestrate "thought" steps. This approach aims to combine the flexibility of neural networks with the structured, multi-step reasoning capabilities of symbolic systems.

The overarching message is that "deep reasoning" in AI is not a monolithic concept but can be implemented through fundamentally different paradigms, each with its own trade-offs between interpretability, precision, and computational efficiency. The progression from (a) to (c) suggests a movement towards more powerful, but less directly interpretable, forms of machine cognition.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Flowchart and Code Diagram: Deep Reasoning Architectures

### Overview

The image presents three distinct reasoning frameworks:

1. **Natural Language Deep Reasoning** (a)

2. **Structured Language Deep Reasoning** (b)

3. **Latent Space Deep Reasoning** (c)

Each section combines textual explanations with visual diagrams to illustrate computational reasoning processes.

---

### Components/Axes

#### (a) Natural Language Deep Reasoning

- **Textual Content**:

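- Rules for Conway's Game of Life (only rule 1 is legible; the panel text is truncated after it, so rules 2 to 4 are the standard rules, not transcriptions):

1. Underpopulation: Live cell with <2 neighbors dies.

2. Survival: Live cell with 2-3 neighbors survives.

3. Overpopulation: Live cell with >3 neighbors dies.

4. Reproduction: Dead cell with exactly 3 neighbors becomes alive.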

- Workflow:

- Input Board → Step-by-Step Analysis → Final Output

- **Visual Elements**:

- Flowchart with arrows connecting "Input Board" → "Step-by-Step Analysis" → "Final Output".

- Icons: Pencil (input), magnifying glass (analysis), checkered flag (output).

#### (b) Structured Language Deep Reasoning

- **Textual Content**:

- Python code snippet for Game of Life:

```python
from collections import Counter

class Solution(object):
    def gameOfLifeInfinite(self, live):
        ctr = Counter((I, J) for i, j in live
        # ... (the snippet is cut off at this point in the figure)
```

- Keywords and identifiers: `from`, `import`, `class`, `def`, `for` (inside a generator expression), and the `Counter` class.

- **Visual Elements**:

- Code syntax highlighting:

- Green: Comments (`# import...`)

- Purple: Keywords (`from`, `class`, `def`)

- Blue: Variables (`ctr`, `live`)

#### (c) Latent Space Deep Reasoning

- **Textual Content**:

- Three reasoning paradigms:

1. **Reasoning Token Driven Latent Space**

2. **Reasoning Vector Driven Latent Space**

3. **Reasoning Manager Driven Latent Space**

- **Visual Elements**:

- **Thought Blocks**: Green rectangles with pink "thought" icons.

- **Continuous Reasoning Vectors**: Blue arrows.

- **Token Flow**: Yellow rectangles labeled "Token 1" to "Token N".

- **RLLM**: Green rectangle with magnifying glass icon.

---

### Detailed Analysis

#### (a) Natural Language Deep Reasoning

- **Rules**:

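- Underpopulation: "Any live cell with fewer than two live neighbors dies." (the only rule quoted in the panel)

- Survival: A live cell with 2 or 3 neighbors survives (not in the visible text; inferred from the standard rules).

- Reproduction: A dead cell with exactly 3 neighbors becomes alive (likewise not in the visible text).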

- **Workflow**:

- Input Board (initial state) → Apply rules iteratively → Final Output (next state).

#### (b) Structured Language Deep Reasoning

- **Code Structure**:

- Imports: `collections.Counter` for neighbor counting.

- Class `Solution`: Encapsulates the game logic.

- Method `gameOfLifeInfinite`: Processes live cell coordinates.

- **Key Components**:

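- `ctr = Counter(...)`: builds a `Counter` from the live-cell coordinates. The line is cut off in the figure; in the usual form of this idiom the generator also loops over each cell's eight neighbors, so `ctr` holds live-neighbor counts rather than a tally of live cells.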

#### (c) Latent Space Deep Reasoning

- **Architectures**:

1. **Token Driven**: Tokens 1 to N-1, together with a continuous reasoning token, feed into the RLLM, which outputs Tokens 2 to N.

2. **Vector Driven**: Continuous vectors route through "Thought Blocks" to generate tokens.

3. **Manager Driven**: Central "Reasoning Manager" orchestrates token flow between Thought Blocks.

---

### Key Observations

1. **Natural Language**: States only the underpopulation rule before the text is truncated; the survival, overpopulation, and reproduction rules are not shown.

2. **Code**: Uses Python’s `Counter` for efficient neighbor tracking.

3. **Latent Space**:

- Token-driven models prioritize sequential reasoning.

- Vector-driven models emphasize continuous state transitions.

- Manager-driven models introduce hierarchical control.

---

### Interpretation

- **Natural Language**: Demonstrates rule-based reasoning but lacks implementation details.

- **Structured Language**: Translates rules into executable code, highlighting computational efficiency.

- **Latent Space**: Illustrates how deep learning models abstract reasoning into tokens, vectors, or managed workflows.

- **Missing Data**: Only the underpopulation rule is visible in (a); the survival, overpopulation, and reproduction rules must be inferred.

- **Design Insight**: The three latent space paradigms suggest a progression from basic token processing to advanced hierarchical reasoning.

This breakdown reveals how reasoning frameworks evolve from rule-based logic to abstract, scalable architectures.
