## Diagram: Deep Reasoning Approaches

### Overview

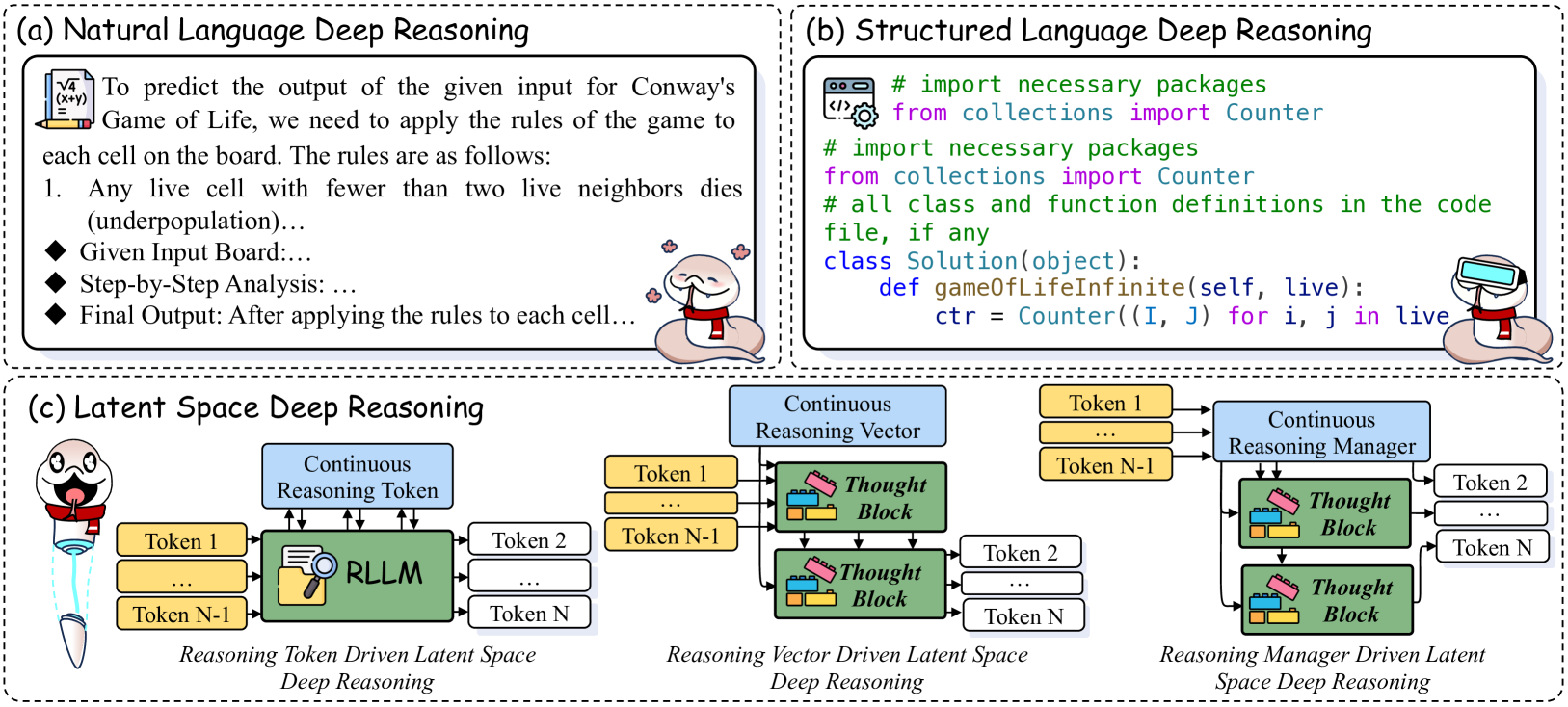

The image presents three different approaches to deep reasoning: Natural Language Deep Reasoning, Structured Language Deep Reasoning, and Latent Space Deep Reasoning. Each approach is illustrated with a diagram and a brief explanation.

### Components/Axes

* **(a) Natural Language Deep Reasoning:**

    * Text describing the process of predicting the output of Conway's Game of Life using natural language rules.

    * Steps outlined: Given Input Board, Step-by-Step Analysis, Final Output.

* **(b) Structured Language Deep Reasoning:**

    * Code snippet (Python) demonstrating the implementation of Conway's Game of Life using the `Counter` object from the `collections` module.

    * Class definition: `class Solution(object):` with a method `gameofLifeInfinite(self, live):`

* **(c) Latent Space Deep Reasoning:**

    * Diagrams illustrating three variations: Reasoning Token Driven, Reasoning Vector Driven, and Reasoning Manager Driven.

    * Components: Continuous Reasoning Token/Vector/Manager (blue), Tokens 1 to N (yellow), Thought Block (green with lego-like structure), RLLM (green with magnifying glass icon).

    * Flow: Tokens feed into the Continuous Reasoning component, which then interacts with Thought Blocks to generate further tokens.

### Detailed Analysis

**(a) Natural Language Deep Reasoning:**

* The text describes the process of applying the rules of Conway's Game of Life to predict the output.

* The rules are stated within the text itself, the first being that any live cell with fewer than two live neighbors dies (underpopulation).

* The process involves a given input board, step-by-step analysis, and a final output.
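
The board-to-analysis-to-output flow described above can be sketched in a few lines of Python. This is an illustrative sketch, not a transcription of the text in the image: only the underpopulation rule is quoted in the description, and the remaining rules are the standard ones for Conway's Game of Life. The names `judge_cell`, `count_neighbors`, and `analyze` are hypothetical, and cells outside the finite board are assumed dead.

```python
from typing import List, Tuple

Board = List[List[int]]  # 1 = live cell, 0 = dead cell


def judge_cell(alive: bool, live_neighbors: int) -> Tuple[bool, str]:
    """Apply the standard Game of Life rules to one cell and name the rule used."""
    if alive and live_neighbors < 2:
        return False, "dies (underpopulation)"
    if alive and live_neighbors > 3:
        return False, "dies (overpopulation)"
    if alive:
        return True, "survives"
    if live_neighbors == 3:
        return True, "becomes alive (reproduction)"
    return False, "stays dead"


def count_neighbors(board: Board, r: int, c: int) -> int:
    """Count live cells among the up-to-eight neighbors; off-board cells count as dead."""
    rows, cols = len(board), len(board[0])
    return sum(
        board[i][j]
        for i in range(max(0, r - 1), min(rows, r + 2))
        for j in range(max(0, c - 1), min(cols, c + 2))
        if (i, j) != (r, c)
    )


def analyze(board: Board) -> Tuple[Board, List[str]]:
    """Return the next board plus one line of written analysis per cell."""
    result = [row[:] for row in board]
    trace = []
    for r, row in enumerate(board):
        for c, cell in enumerate(row):
            n = count_neighbors(board, r, c)
            alive, reason = judge_cell(bool(cell), n)
            result[r][c] = int(alive)
            trace.append(f"cell ({r},{c}): {n} live neighbors, {reason}")
    return result, trace


# Given input board: a vertical "blinker".
board = [[0, 1, 0],
         [0, 1, 0],
         [0, 1, 0]]
final, steps = analyze(board)  # final output and step-by-step analysis
```

Each entry in `steps` plays the role of one sentence of the natural language analysis, and `final` is the predicted output board.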

**(b) Structured Language Deep Reasoning:**

* The code snippet imports the `Counter` object from the `collections` module.

* A class `Solution` is defined with a method `gameofLifeInfinite` that takes `self` and `live` as arguments.

* Inside the method, a `Counter` object `ctr` is initialized based on the input `live`.
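
The description covers the snippet only up to the initialization of `ctr`, so the following is a hedged sketch of how a `Counter`-based step on an unbounded board could be completed. It assumes `live` is a collection of `(row, col)` coordinates of live cells and that `ctr` counts live neighbors per cell; both points are assumptions, and the function name `life_step` is hypothetical rather than taken from the image.

```python
from collections import Counter
from typing import Iterable, Set, Tuple

Cell = Tuple[int, int]


def life_step(live: Iterable[Cell]) -> Set[Cell]:
    """Compute one generation on an unbounded board from the set of live cells."""
    live = set(live)
    # For every live cell, add one to each of its eight neighbors.
    # Afterwards ctr[cell] is the number of live neighbors of that cell.
    ctr = Counter(
        (ni, nj)
        for i, j in live
        for ni in range(i - 1, i + 2)
        for nj in range(j - 1, j + 2)
        if (ni, nj) != (i, j)
    )
    # A cell is alive next if it has exactly 3 live neighbors,
    # or exactly 2 live neighbors and is currently alive.
    return {cell for cell, n in ctr.items() if n == 3 or (n == 2 and cell in live)}


blinker = {(1, 0), (1, 1), (1, 2)}  # horizontal blinker
next_gen = life_step(blinker)       # flips to a vertical blinker
```

Counting contributions from live cells only means the work scales with the number of live cells rather than with the board size, which is what makes the approach suitable for an infinite board.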

**(c) Latent Space Deep Reasoning:**

* **Reasoning Token Driven Latent Space Deep Reasoning:**

    * Tokens 1 to N-1 feed into the RLLM (Reasoning Language Model).

    * The Continuous Reasoning Token also feeds into the RLLM.

    * The RLLM outputs Tokens 2 to N.

* **Reasoning Vector Driven Latent Space Deep Reasoning:**

    * Tokens 1 to N-1 feed into a Thought Block.

    * The Continuous Reasoning Vector also feeds into the Thought Block.

    * The Thought Block outputs Tokens 2 to N.

* **Reasoning Manager Driven Latent Space Deep Reasoning:**

    * Tokens 1 to N-1 feed into a Thought Block.

    * The Continuous Reasoning Manager also feeds into the Thought Block.

    * The Thought Block outputs Tokens 2 to N.
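
All three variants share one data flow: Tokens 1 to N-1 and a continuous reasoning signal go in, and Tokens 2 to N come out. The diagram gives no implementation detail, so the sketch below only illustrates that input/output alignment with a toy stand-in. `embed`, `toy_model`, and the vector values are hypothetical and do not represent the actual RLLM or Thought Block.

```python
from typing import List, Sequence

Vector = List[float]


def embed(token_id: int, dim: int = 4) -> Vector:
    """Hypothetical toy embedding: a fixed vector derived from the token id."""
    return [float((token_id * (k + 1)) % 7) for k in range(dim)]


def toy_model(reasoning_vec: Vector, token_vecs: Sequence[Vector]) -> List[int]:
    """Toy stand-in for the RLLM / Thought Block (an assumption, not the real model).

    Emits one output token id per input position. The continuous reasoning
    vector seeds a running state, so it influences every prediction.
    """
    running = list(reasoning_vec)
    outputs = []
    for vec in token_vecs:
        running = [r + v for r, v in zip(running, vec)]
        outputs.append(int(sum(running)) % 10)
    return outputs


tokens = [3, 1, 4, 1, 5]                # Tokens 1..N (N = 5)
inputs = tokens[:-1]                    # Tokens 1..N-1 are fed in
reasoning_vec = [0.5, -0.5, 1.0, 0.0]   # continuous reasoning signal (made-up values)

# One prediction per input position: these stand for Tokens 2..N.
predicted = toy_model(reasoning_vec, [embed(t) for t in inputs])
```

Changing `reasoning_vec` changes the predictions without changing the discrete input tokens, which is the sense in which the continuous component steers the output in this toy setup.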

### Key Observations

* The three approaches represent different levels of abstraction and formalization in deep reasoning.

* Natural language provides a human-readable description, while structured language offers a precise implementation.

* Latent space reasoning utilizes continuous representations and learned models (RLLM) to perform reasoning.

* The "Thought Block" appears to be a key component in the Latent Space Reasoning, acting as a processing unit for tokens and continuous reasoning vectors/managers.

### Interpretation

The image illustrates a progression from intuitive, human-understandable reasoning (Natural Language) to formalized, machine-executable reasoning (Structured Language) and finally to abstract, learned reasoning (Latent Space). The Latent Space approach suggests a move towards more complex and potentially more powerful reasoning systems that can operate on continuous representations and learn from data. The use of "Thought Blocks" implies a modular approach to reasoning, where individual blocks perform specific reasoning tasks. The different variations (Token, Vector, Manager driven) likely represent different ways of controlling or guiding the reasoning process within the latent space.
