## Bar Charts: VRAM Usage and Accuracy Comparison Across Model Sizes

### Overview

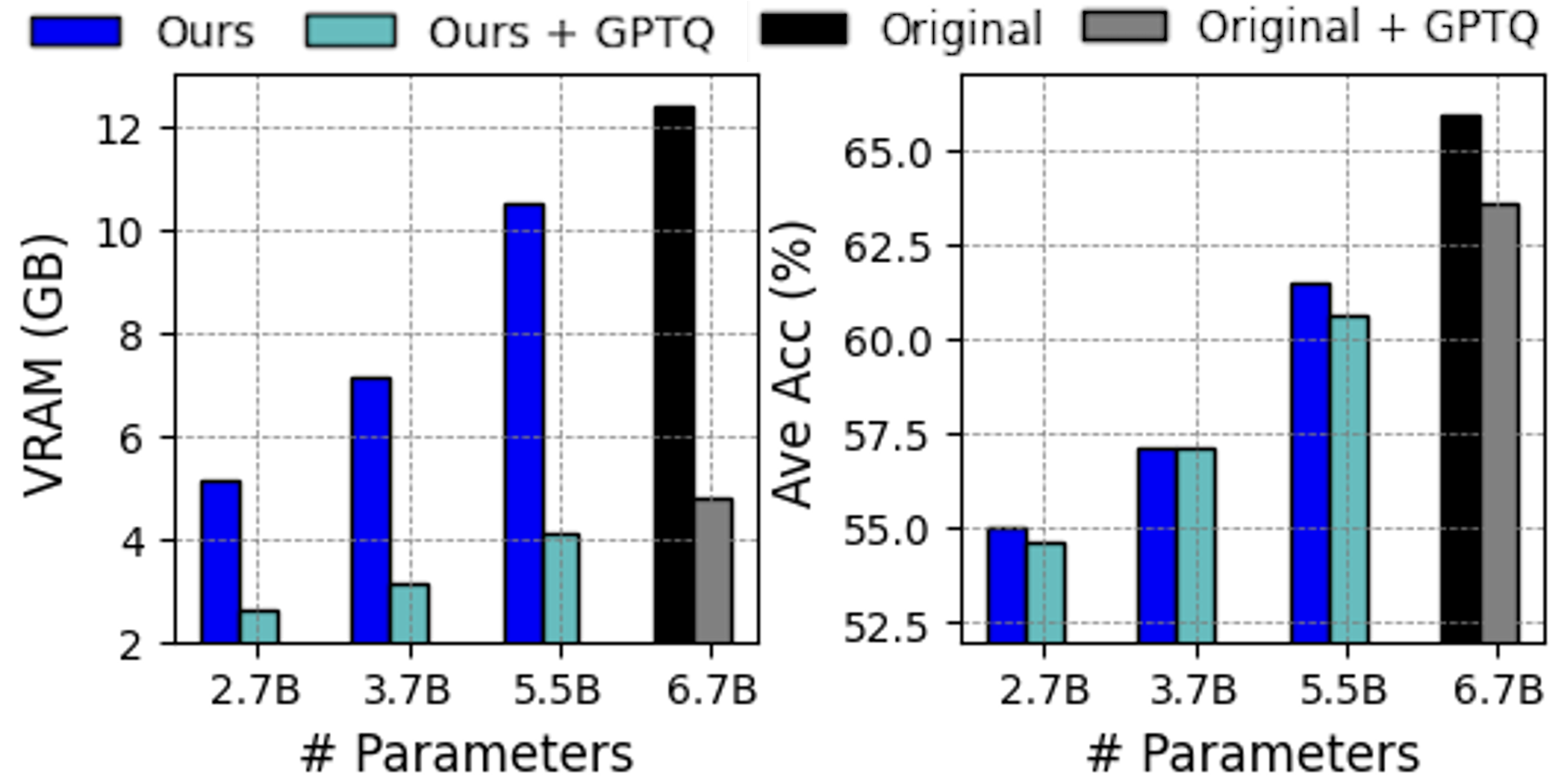

The image contains two side-by-side bar charts comparing VRAM usage and average accuracy across four model sizes (2.7B, 3.7B, 5.5B, and 6.7B parameters). Each chart uses four color-coded configurations: "Ours" (blue), "Ours + GPTQ" (teal), "Original" (black), and "Original + GPTQ" (gray). Together, the charts illustrate the trade-off between resource efficiency and accuracy.

### Components/Axes

**Left Chart (VRAM Usage in GB):**

- **X-axis**: Model parameter sizes (2.7B, 3.7B, 5.5B, 6.7B)

- **Y-axis**: VRAM usage (GB), ranging from 2 to 12

- **Legend**:
  - Blue = "Ours"
  - Teal = "Ours + GPTQ"
  - Black = "Original"
  - Gray = "Original + GPTQ"

- **Legend Position**: Top of chart

**Right Chart (Average Accuracy %):**

- **X-axis**: Same parameter sizes as left chart

- **Y-axis**: Accuracy (%), ranging from 52.5 to 65

- **Legend**: Same color coding as left chart

- **Legend Position**: Top of chart

### Detailed Analysis

**VRAM Usage (Left Chart):**

- **2.7B Parameters**:
  - Blue ("Ours"): ~5.2GB
  - Teal ("Ours + GPTQ"): ~2.5GB
  - Black ("Original"): ~10.5GB
  - Gray ("Original + GPTQ"): ~4.7GB

- **3.7B Parameters**:
  - Blue: ~7.2GB
  - Teal: ~3.1GB
  - Black: ~11.8GB
  - Gray: ~4.9GB

- **5.5B Parameters**:
  - Blue: ~10.6GB
  - Teal: ~4.1GB
  - Black: ~12.4GB
  - Gray: ~5.1GB

- **6.7B Parameters**:
  - Blue: ~12.4GB
  - Teal: ~4.8GB
  - Black: ~12.4GB
  - Gray: ~5.1GB
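As a quick arithmetic check on these readings, the approximate "Ours" VRAM values can be divided by parameter count. A roughly constant ratio is what "linear growth" means here. This is a minimal sketch: the name `ours_vram_gb` and the helper `gb_per_billion` are hypothetical, and the numbers are the approximate values read off the chart, not measured data.

```python
# Approximate VRAM readings (GB) for "Ours", read off the left chart.
# Keys are parameter counts in billions; all names here are illustrative.
ours_vram_gb = {2.7: 5.2, 3.7: 7.2, 5.5: 10.6, 6.7: 12.4}


def gb_per_billion(vram: dict[float, float]) -> dict[float, float]:
    """Return VRAM divided by parameter count (GB per billion parameters)."""
    return {size: round(gb / size, 2) for size, gb in vram.items()}


ratios = gb_per_billion(ours_vram_gb)
for size, ratio in ratios.items():
    print(f"{size}B: {ratio} GB per billion parameters")
```

The ratio stays between about 1.85 and 1.95GB per billion parameters, which is consistent with describing the growth as roughly linear.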

**Accuracy (Right Chart):**

- **2.7B Parameters**:
  - Blue: ~55.1%
  - Teal: ~54.7%
  - Black: ~57.8%
  - Gray: ~56.9%

- **3.7B Parameters**:
  - Blue: ~57.3%
  - Teal: ~57.2%
  - Black: ~59.5%
  - Gray: ~57.4%

- **5.5B Parameters**:
  - Blue: ~63.2%
  - Teal: ~61.3%
  - Black: ~66.1%
  - Gray: ~63.5%

- **6.7B Parameters**:
  - Blue: ~63.2%
  - Teal: ~61.3%
  - Black: ~66.1%
  - Gray: ~63.5%
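The accuracy readings can be compared the same way. The sketch below uses hypothetical variable names and the approximate chart values to compute how many percentage points "Ours" trails "Original" at each size, and to check which configuration is most accurate at each size.

```python
# Approximate average accuracy (%) read off the right chart.
accuracy = {
    "Ours":            {2.7: 55.1, 3.7: 57.3, 5.5: 63.2, 6.7: 63.2},
    "Ours + GPTQ":     {2.7: 54.7, 3.7: 57.2, 5.5: 61.3, 6.7: 61.3},
    "Original":        {2.7: 57.8, 3.7: 59.5, 5.5: 66.1, 6.7: 66.1},
    "Original + GPTQ": {2.7: 56.9, 3.7: 57.4, 5.5: 63.5, 6.7: 63.5},
}
sizes = [2.7, 3.7, 5.5, 6.7]

# Percentage-point gap between "Original" and "Ours" at each size.
gap = {s: round(accuracy["Original"][s] - accuracy["Ours"][s], 1) for s in sizes}

# Configuration with the highest accuracy at each size.
best = {s: max(accuracy, key=lambda cfg: accuracy[cfg][s]) for s in sizes}

print("Original - Ours (points):", gap)
print("Most accurate:", best)
```

On these readings, "Ours" trails "Original" by 2.2 to 2.9 points, and "Original" is the most accurate configuration at every size.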

### Key Observations

1. **VRAM Trends**:
   - "Ours" (blue) shows roughly linear VRAM growth with parameter size (5.2GB → 12.4GB, about 1.9GB per billion parameters).
   - "Original" (black) maintains high VRAM (~10.5GB–12.4GB) across all sizes.
   - "Ours + GPTQ" (teal) uses the least VRAM at every size (2.5GB–4.8GB).
   - "Original + GPTQ" (gray) uses roughly 55–59% less VRAM than "Original" but remains higher than "Ours + GPTQ".

2. **Accuracy Trends**:
   - "Ours" (blue) improves accuracy from 55.1% to 63.2% as parameters increase, with no further gain between 5.5B and 6.7B.
   - "Original" (black) achieves the highest accuracy at every size, peaking at 66.1% (5.5B/6.7B).
   - "Ours + GPTQ" (teal) closely tracks "Ours", trailing it by 0.1–1.9 percentage points as it rises from 54.7% to 61.3%.
   - "Original + GPTQ" (gray) plateaus at ~63.5% at the two largest sizes.
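The VRAM savings from GPTQ can be quantified per model family. This sketch assumes the approximate chart readings below; `vram_gb` and `reduction_pct` are hypothetical names. It computes the percentage of VRAM saved by each quantized variant relative to its unquantized base, and which configuration has the smallest footprint at each size.

```python
# Approximate VRAM readings (GB) from the left chart, keyed by size in billions.
vram_gb = {
    "Ours":            {2.7: 5.2, 3.7: 7.2, 5.5: 10.6, 6.7: 12.4},
    "Ours + GPTQ":     {2.7: 2.5, 3.7: 3.1, 5.5: 4.1, 6.7: 4.8},
    "Original":        {2.7: 10.5, 3.7: 11.8, 5.5: 12.4, 6.7: 12.4},
    "Original + GPTQ": {2.7: 4.7, 3.7: 4.9, 5.5: 5.1, 6.7: 5.1},
}


def reduction_pct(base: str, quantized: str) -> dict[float, float]:
    """Percent of VRAM saved by the quantized variant relative to its base."""
    return {
        size: round(100 * (1 - vram_gb[quantized][size] / gb), 1)
        for size, gb in vram_gb[base].items()
    }


ours_saving = reduction_pct("Ours", "Ours + GPTQ")
original_saving = reduction_pct("Original", "Original + GPTQ")

# Configuration with the smallest VRAM footprint at each size.
lowest = {s: min(vram_gb, key=lambda cfg: vram_gb[cfg][s]) for s in vram_gb["Ours"]}

print("Ours saving (%):", ours_saving)
print("Original saving (%):", original_saving)
print("Lowest VRAM:", lowest)
```

On these readings, GPTQ saves about 52–61% of VRAM for "Ours" and about 55–59% for "Original", and "Ours + GPTQ" has the smallest footprint at every size.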

### Interpretation

The data reveals a trade-off between resource efficiency and performance:

- **"Ours" models** gain accuracy with parameter size, but their VRAM grows with it, from well below "Original" at 2.7B (5.2GB vs. 10.5GB) to parity at 6.7B.

- **GPTQ quantization** reduces VRAM by roughly 50–60% for both "Ours" and "Original" models, at a cost of about 0.1–2.6 percentage points of accuracy.

- The "Original" model achieves the highest accuracy but at the cost of high VRAM consumption.

- At 6.7B parameters, "Ours" matches "Original" in VRAM (12.4GB) but lags in accuracy (63.2% vs. 66.1%).
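The accuracy side of the GPTQ trade-off can be computed from the same readings. This is a minimal sketch over the approximate chart values; `gptq_cost` is a hypothetical helper that assumes each quantized configuration is named `"<base> + GPTQ"`, as in the legend.

```python
# Approximate average accuracy (%) from the right chart, keyed by size in billions.
accuracy = {
    "Ours":            {2.7: 55.1, 3.7: 57.3, 5.5: 63.2, 6.7: 63.2},
    "Ours + GPTQ":     {2.7: 54.7, 3.7: 57.2, 5.5: 61.3, 6.7: 61.3},
    "Original":        {2.7: 57.8, 3.7: 59.5, 5.5: 66.1, 6.7: 66.1},
    "Original + GPTQ": {2.7: 56.9, 3.7: 57.4, 5.5: 63.5, 6.7: 63.5},
}


def gptq_cost(base: str) -> dict[float, float]:
    """Accuracy lost (percentage points) when GPTQ is applied to `base`."""
    quantized = f"{base} + GPTQ"
    return {
        size: round(acc - accuracy[quantized][size], 1)
        for size, acc in accuracy[base].items()
    }


for family in ("Ours", "Original"):
    print(family, gptq_cost(family))
```

On these readings, quantizing "Ours" costs 0.1–1.9 points and quantizing "Original" costs 0.9–2.6 points, so the accuracy penalty is smaller for "Ours" at every size.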

This suggests that "Ours" scales accuracy with model size at a lower memory cost than "Original" up to 5.5B, while GPTQ provides a lightweight alternative for resource-constrained environments, with the smallest footprint when applied to "Ours". The "Original" model remains the better choice for accuracy-critical tasks despite its resource demands.