## Diagram: Neural Network Layer Hyperparameter Flowchart

### Overview

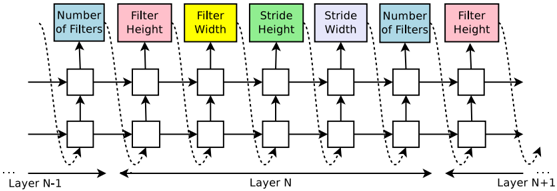

The image is a technical diagram illustrating the flow and hyperparameter configuration across three consecutive layers (N-1, N, N+1) of a neural network, likely a Convolutional Neural Network (CNN). It uses a block-and-arrow flowchart style to show how specific parameters are applied within "Layer N," with connections to preceding and succeeding layers.

### Components

**Top Legend/Parameter Labels (Centered at Top):**

A row of colored rectangular boxes, each containing a hyperparameter name. From left to right:

1. **Blue Box:** "Number of Filters"

2. **Pink Box:** "Filter Height"

3. **Yellow Box:** "Filter Width"

4. **Green Box:** "Stride Height"

5. **Purple Box:** "Stride Width"

6. **Blue Box:** "Number of Filters" (Repeated)

7. **Pink Box:** "Filter Height" (Repeated)
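As a rough aid to reading the legend, the five distinct labels can be collected into a single configuration record. This is only a sketch: the class name `ConvHyperparams`, the field names, and the numeric values are hypothetical and do not appear in the image.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConvHyperparams:
    """Hypothetical record holding one value per distinct legend label."""
    num_filters: int
    filter_height: int
    filter_width: int
    stride_height: int
    stride_width: int


# Legend labels as transcribed, left to right; the last two repeat the first two.
LEGEND = [
    "Number of Filters", "Filter Height", "Filter Width",
    "Stride Height", "Stride Width",
    "Number of Filters", "Filter Height",
]

# Order-preserving de-duplication leaves the five distinct hyperparameters.
distinct_labels = list(dict.fromkeys(LEGEND))

# Illustrative values only; the diagram does not state any numbers.
example = ConvHyperparams(
    num_filters=32, filter_height=3, filter_width=3,
    stride_height=1, stride_width=1,
)
```

Seven boxes in the legend therefore correspond to five distinct hyperparameters, two of which are labeled twice.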

**Main Diagram Structure:**

* **Three Horizontal Sections:** Labeled at the bottom as "Layer N-1" (left), "Layer N" (center), and "Layer N+1" (right).

* **Layer N Core:** Contains two parallel rows of white rectangular blocks. Each row has four blocks. Arrows connect these blocks vertically (up and down) and horizontally (left to right).

* **Flow Arrows:**

    * Solid horizontal arrows point from left to right, indicating the primary data flow from Layer N-1 into Layer N, and from Layer N out to Layer N+1.

    * Dotted curved lines connect the top parameter boxes to specific blocks within Layer N, indicating which hyperparameter controls which operation.

    * Vertical arrows within Layer N connect the two rows of blocks, suggesting an internal data exchange or parallel processing path.

### Detailed Analysis

**Spatial Grounding & Component Isolation:**

* **Header Region (Top):** Contains the seven colored hyperparameter labels. The first "Number of Filters" (blue) and "Filter Height" (pink) are linked via dotted lines to the first two blocks in the **top row** of Layer N. The "Filter Width" (yellow), "Stride Height" (green), and "Stride Width" (purple) are linked to the third and fourth blocks in the **top row**. The repeated "Number of Filters" (blue) and "Filter Height" (pink) are linked to the first two blocks in the **bottom row** of Layer N.

* **Main Chart Region (Center):** Layer N is the focal point. The diagram suggests that Layer N performs operations defined by the listed hyperparameters. The two parallel rows may represent different channels, feature maps, or a split in processing. The vertical arrows between rows indicate interaction.

* **Footer Region (Bottom):** Clearly demarcates the sequential layer structure: `Layer N-1 → Layer N → Layer N+1`.
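The linkage described for the header region can be written down as a small lookup table, which makes the difference between the two rows easy to check. This is a minimal sketch: the names `ROW_LINKS` and `unlinked` are hypothetical, and the table records only which labels reach each row, not the exact block each dotted line ends on.

```python
# Labels linked to each row of Layer N, as described in the header region.
ROW_LINKS = {
    "top": [
        "Number of Filters", "Filter Height", "Filter Width",
        "Stride Height", "Stride Width",
    ],
    "bottom": ["Number of Filters", "Filter Height"],
}


def unlinked(row, reference="top"):
    """Return labels linked to the reference row but not to the given row."""
    return [label for label in ROW_LINKS[reference] if label not in ROW_LINKS[row]]


missing_from_bottom = unlinked("bottom")
print(missing_from_bottom)
# ['Filter Width', 'Stride Height', 'Stride Width']
```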

**Transcribed Text (All text in the image is in English):**

* "Number of Filters"

* "Filter Height"

* "Filter Width"

* "Stride Height"

* "Stride Width"

* "Layer N-1"

* "Layer N"

* "Layer N+1"

### Key Observations

1. **Parameter Repetition:** The hyperparameters "Number of Filters" and "Filter Height" are specified twice, each time linked to a different row of blocks within Layer N. This could indicate these parameters are applied independently to two parallel streams or subsets of operations within the same layer.

2. **Asymmetric Detail:** "Filter Width," "Stride Height," and "Stride Width" are linked only to the top row of blocks in Layer N. Either the bottom row does not use these parameters or it implicitly shares the top row's values; both readings would be an unusual design choice.

3. **Flow Complexity:** The internal vertical arrows within Layer N show a more complex data flow than a simple sequential pass, hinting at operations like concatenation, element-wise addition, or cross-channel communication.

### Interpretation

This diagram appears to be a schematic for configuring a convolutional layer (Layer N) within a deep learning model. It visually maps abstract hyperparameters to specific computational units (the white blocks).

* **What it demonstrates:** It shows that Layer N is not a monolithic operation but is composed of multiple sub-operations (represented by blocks) whose behavior is governed by distinct hyperparameters. The split into two rows with different parameter linkages suggests a **multi-branch** or **multi-stream** architecture within a single layer, a technique used in advanced CNN designs (e.g., Inception modules, grouped convolutions) to increase representational power or efficiency.

* **Relationships:** The hyperparameters at the top are the "control knobs" for the layer. The arrows define the data dependency: input comes from the previous layer (N-1), is processed by the configured blocks in Layer N according to those knobs, and the result is passed to the next layer (N+1).

* **Notable Anomaly:** The selective application of parameters (e.g., "Filter Width" linked only to the top row) is the most important observation. In a standard convolutional layer, a single filter size and a single stride apply to every filter in the layer. The diagram appears to break that uniformity, which suggests a specialized, non-standard layer design in which different branches process information with different spatial configurations, unless the unlinked parameters are implicitly shared. Such a design could serve to capture multi-scale features or to reduce computational load.
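To make the effect of different spatial configurations concrete, the sketch below computes the output shape of two hypothetical branches using the standard formula for an unpadded convolution, `out = (in - filter) // stride + 1`. The function name `conv_output_shape`, the 32×32 input, and all hyperparameter values are assumptions chosen for illustration; none of them come from the diagram.

```python
def conv_output_shape(in_h, in_w, num_filters,
                      filter_h, filter_w, stride_h, stride_w):
    """Output (height, width, channels) of an unpadded 2D convolution."""
    out_h = (in_h - filter_h) // stride_h + 1
    out_w = (in_w - filter_w) // stride_w + 1
    return (out_h, out_w, num_filters)


# Two hypothetical branches reading the same 32x32 input from Layer N-1.
top = conv_output_shape(32, 32, num_filters=16,
                        filter_h=3, filter_w=3, stride_h=1, stride_w=1)
bottom = conv_output_shape(32, 32, num_filters=8,
                           filter_h=5, filter_w=5, stride_h=1, stride_w=1)

print(top)     # (30, 30, 16)
print(bottom)  # (28, 28, 8)
```

With these assumed values the two branches produce feature maps of different spatial size, so merging them (for example by channel concatenation) would first require padding or cropping. That is one reason per-branch filter and stride settings are a meaningful design decision rather than a cosmetic one.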