## Diagram: Neural Network Layer Architecture

### Overview

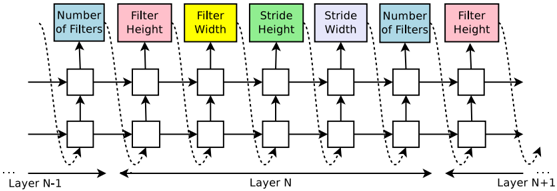

The image is a schematic of how data and parameters flow across three adjacent neural network layers (Layer N-1 → Layer N → Layer N+1), with explicit annotations for key architectural components. Color-coded boxes represent individual hyperparameters, and directional arrows show the flow between them.

### Components

1. **Horizontal Flow**:

   - Left-to-right progression from Layer N-1 to Layer N+1

   - Dashed arrows indicate parameter inheritance between layers

   - Solid arrows show data transformation flow

2. **Parameter Boxes** (color-coded):

   - **Blue**: "Number of Filters" (Layer N-1 and Layer N+1)

   - **Pink**: "Filter Height" (Layer N-1 and Layer N+1)

   - **Yellow**: "Filter Width" (Layer N-1 and Layer N+1)

   - **Green**: "Stride Height" (Layer N)

   - **Purple**: "Stride Width" (Layer N)

3. **Layer Structure**:

   - Layer N-1: Input-side layer annotated with filter parameters

   - Layer N: Intermediate layer annotated with stride parameters

   - Layer N+1: Output-side layer annotated with inherited filter parameters
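The layer-to-parameter mapping described above can be written down as a small data structure. This is only a sketch: the class name, field names, and numeric values are hypothetical, since the diagram labels the boxes but gives no values.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass(frozen=True)
class LayerAnnotation:
    """Hyperparameter boxes attached to one layer (field names are hypothetical)."""
    name: str
    num_filters: Optional[int] = None    # blue box
    filter_height: Optional[int] = None  # pink box
    filter_width: Optional[int] = None   # yellow box
    stride_height: Optional[int] = None  # green box
    stride_width: Optional[int] = None   # purple box

    def annotated(self) -> List[str]:
        """Names of the hyperparameters the diagram shows for this layer."""
        return [
            f.name for f in fields(self)
            if f.name != "name" and getattr(self, f.name) is not None
        ]


# Placeholder values: the diagram does not state any numbers.
layers = [
    LayerAnnotation("Layer N-1", num_filters=32, filter_height=3, filter_width=3),
    LayerAnnotation("Layer N", stride_height=2, stride_width=2),
    LayerAnnotation("Layer N+1", num_filters=32, filter_height=3, filter_width=3),
]

for layer in layers:
    print(layer.name, layer.annotated())
```

Leaving unannotated parameters as `None` keeps the sketch faithful to the description: only Layer N carries stride boxes, and only Layers N-1 and N+1 carry filter boxes.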

### Detailed Analysis

- **Filter and Stride Parameters**:

  - Filter dimensions (height/width) remain consistent between Layer N-1 and Layer N+1

  - Stride parameters (height/width) are specified only for Layer N

  - Number of filters appears to be preserved across layers (blue boxes)

- **Spatial Relationships**:

  - Parameter boxes are vertically stacked in the following order:

    1. Number of Filters

    2. Filter Height

    3. Filter Width

    4. Stride Height

    5. Stride Width

    6. Number of Filters

    7. Filter Height

  - Arrows connect boxes in a cascading pattern, suggesting hierarchical dependencies

### Key Observations

1. **Consistency in Filter Parameters**: Filter dimensions (height/width) are maintained across non-adjacent layers (N-1 and N+1)

2. **Stride Isolation**: Stride parameters only appear in the intermediate layer (N), suggesting they govern downsampling/upsampling operations

3. **Filter Count Preservation**: The number of filters is the same at Layer N-1 and Layer N+1, implying that the channel depth of the feature maps is unchanged; spatial size is governed separately by filter size, stride, and padding

4. **Dashed vs Solid Arrows**: Dashed arrows indicate parameter inheritance, while solid arrows show active data transformation
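Observations 2 and 3 can be illustrated with the standard output-size arithmetic for a 2-D convolution. The sketch below is not taken from the diagram: the function name, the padding arguments, and the example sizes are assumptions, and it covers only a plain (non-dilated, non-transposed) convolution.

```python
from typing import Tuple


def conv_output_shape(
    in_height: int,
    in_width: int,
    num_filters: int,
    filter_height: int,
    filter_width: int,
    stride_height: int = 1,
    stride_width: int = 1,
    pad_height: int = 0,
    pad_width: int = 0,
) -> Tuple[int, int, int]:
    """Return (out_height, out_width, out_channels) for a standard 2-D convolution."""
    # Spatial size depends on filter size, stride, and padding.
    out_height = (in_height + 2 * pad_height - filter_height) // stride_height + 1
    out_width = (in_width + 2 * pad_width - filter_width) // stride_width + 1
    # Channel depth is set solely by the number of filters.
    return out_height, out_width, num_filters


# Illustrative values only; the diagram gives no numbers.
print(conv_output_shape(32, 32, 16, 3, 3, 1, 1, 1, 1))  # (32, 32, 16)
print(conv_output_shape(32, 32, 16, 3, 3, 2, 2, 1, 1))  # (16, 16, 16)
```

With the same filters, changing only the stride from 1 to 2 halves the spatial size while the channel depth stays at 16, which matches the separation between filter and stride boxes in the diagram.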

### Interpretation

This diagram illustrates the fundamental operations of a convolutional neural network (CNN):

1. **Filter Application**: The number, height, and width of filters determine feature extraction capabilities

2. **Stride Control**: Stride parameters in Layer N control spatial downsampling/upsampling between layers

3. **Layer Transformation**: The flow shows how input features (Layer N-1) are transformed through convolutional operations (Layer N) to produce output features (Layer N+1)

4. **Architectural Constraints**: The preserved filter count and dimensions suggest a standard convolutional block whose channel depth is unchanged; any change in spatial size would come from the stride parameters in Layer N rather than from pooling

The diagram emphasizes the role of filter configuration in keeping feature-map depth constant while stride parameters control spatial resolution. The color-coding helps distinguish filter properties (blue/pink/yellow) from stride controls (green/purple).
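One way to make the filter/stride distinction concrete is to count the learnable weights of a convolutional layer. This is a minimal sketch under stated assumptions: the function name, the `in_channels` and `use_bias` arguments, and the example values are hypothetical and do not come from the diagram.

```python
def conv_param_count(
    in_channels: int,
    num_filters: int,
    filter_height: int,
    filter_width: int,
    use_bias: bool = True,
) -> int:
    """Count learnable parameters in a standard 2-D convolutional layer."""
    # Each filter spans the full input depth.
    weights = filter_height * filter_width * in_channels * num_filters
    # One bias per filter, if biases are used.
    biases = num_filters if use_bias else 0
    return weights + biases


# Illustrative values only.
print(conv_param_count(16, 16, 3, 3))  # 2320
print(conv_param_count(16, 16, 5, 5))  # 6416
```

Stride does not appear in the calculation: the filter parameters determine how many weights the layer learns, while stride only changes where the filters are applied.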