## Diagram: Neural Network Adaptation Strategy

### Overview

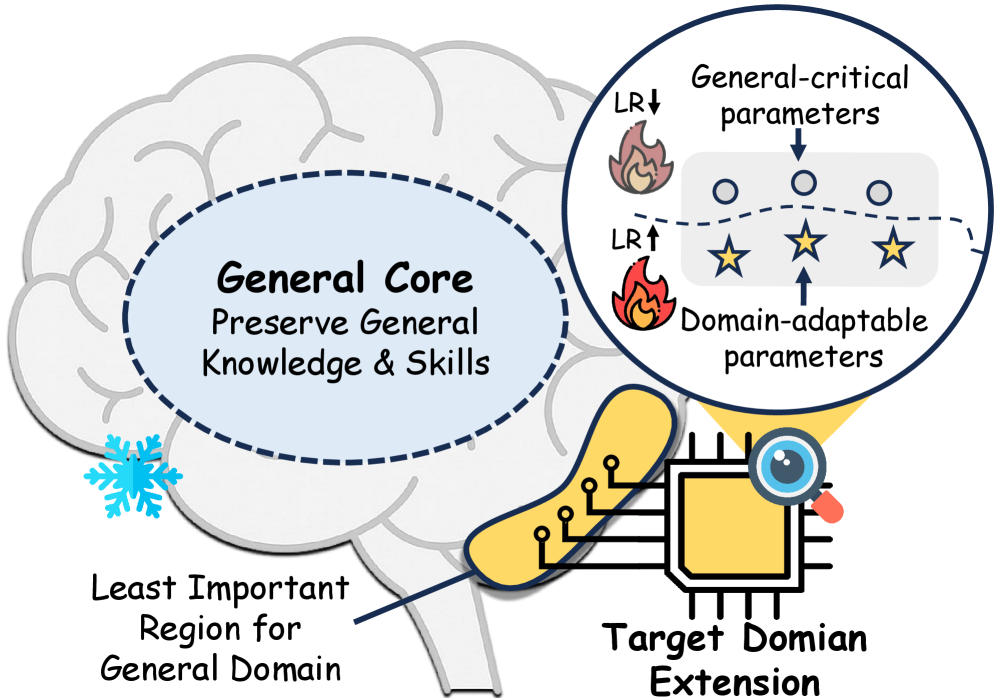

The image is a conceptual diagram illustrating a method for adapting a pre-trained artificial neural network (represented as a brain) to a new target domain while preserving its general knowledge and skills. It uses visual metaphors to explain a selective parameter tuning strategy.

### Components & Labels

The diagram consists of several interconnected visual elements with the following text labels:

1. **Central Brain Illustration:**

* A large, stylized brain outline forms the background.

* **Dashed Oval Region (Center-Left):** Labeled "**General Core**" with the sub-text "**Preserve General Knowledge & Skills**". This region is enclosed by a dashed blue oval.

* **Snowflake Icon (Bottom-Left of Core):** A blue snowflake is placed adjacent to the "General Core," symbolizing that this region is "frozen" or kept static during adaptation.

2. **Magnified Parameter View (Top-Right Circle):**

* A circular callout, connected by a dashed line to the "General Core," shows a detailed view of model parameters.

* **Top Row:** Three circles labeled "**General-critical parameters**". An arrow points down to them from the label.

* **Bottom Row:** Three star icons labeled "**Domain-adaptable parameters**". An arrow points up to them from the label.

* **Learning Rate (LR) Indicators:**

* Next to the top row: "**LR↓**" with a small, smoldering fire icon, indicating a *decreased* learning rate for general-critical parameters.

* Next to the bottom row: "**LR↑**" with a larger, active fire icon, indicating an *increased* learning rate for domain-adaptable parameters.

3. **Adaptation Pathway (Bottom):**

* **Yellow Brain Region (Bottom-Left):** A specific, highlighted region of the brain is labeled "**Least Important Region for General Domain**". A line connects this label to the region.

* **Connection to Chip:** This yellow region is directly connected via lines to a microchip icon.

* **Microchip Icon (Bottom-Right):** A yellow square chip with circuit lines is labeled "**Target Domian Extension**" (sic; "Domian" is a visible typo for "Domain").

* **Eye Icon:** A stylized eye with a magnifying glass is positioned over the chip, suggesting focused analysis or targeting.

### Detailed Analysis

The diagram proposes a two-pronged strategy for model adaptation:

1. **Preservation of General Knowledge:** The "General Core" of the model, containing "General-critical parameters," is protected. Two cues represent this: the snowflake, which suggests freezing, and the `LR↓` label, which indicates a decreased learning rate during fine-tuning. Read together, they imply that these foundational parameters change very little, either not at all or only in small steps.

2. **Targeted Domain Adaptation:** Adaptation is focused on a specific, identified "Least Important Region for General Domain." The diagram implies that the "Domain-adaptable parameters" are the ones repurposed here: they are modified using an increased learning rate (`LR↑`) to absorb knowledge from the target domain extension efficiently. The eye icon over the chip emphasizes the targeted, precise nature of this adaptation.

The flow suggests a process: identify the least important region for general tasks, then aggressively tune its adaptable parameters for the new domain while carefully constraining updates to the critical general parameters elsewhere.
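The flow above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the method behind the diagram: the `importance` scores, the `adaptable_fraction` cutoff, and both learning-rate values are hypothetical, since the diagram does not say how importance is measured or which rates are used.

```python
def split_by_importance(importance, adaptable_fraction=0.25):
    """Split parameter indices into (critical, adaptable) sets.

    The lowest-scoring fraction is treated as domain-adaptable.
    Both the scores and the cutoff are hypothetical stand-ins.
    """
    n_adaptable = max(1, int(len(importance) * adaptable_fraction))
    order = sorted(range(len(importance)), key=lambda i: importance[i])
    adaptable = set(order[:n_adaptable])
    critical = set(order[n_adaptable:])
    return critical, adaptable


def asymmetric_sgd_step(params, grads, adaptable, lr_low=1e-4, lr_high=1e-2):
    """One SGD step: high rate for adaptable indices, low rate for the rest."""
    return [
        p - (lr_high if i in adaptable else lr_low) * g
        for i, (p, g) in enumerate(zip(params, grads))
    ]


# Hypothetical importance of 8 parameters for the general domain.
importance = [0.9, 0.05, 0.7, 0.01, 0.8, 0.6, 0.95, 0.3]
critical, adaptable = split_by_importance(importance, adaptable_fraction=0.25)

params = [1.0] * 8
grads = [1.0] * 8  # identical gradients, so only the learning rate differs
new_params = asymmetric_sgd_step(params, grads, adaptable)

print("adaptable indices:", sorted(adaptable))
print("updated params:", new_params)
```

With identical gradients, the two least important parameters move a hundred times farther than the rest, which is the `LR↑` versus `LR↓` contrast the diagram draws. Setting `lr_low=0.0` would correspond to the stricter "frozen" reading of the snowflake.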

### Key Observations

* **Visual Metaphors:** The diagram effectively uses common metaphors: a snowflake for freezing, fire for learning rate intensity (more fire = higher rate), stars for adaptable/special parameters, and a chip for the new domain/task.

* **Spatial Grounding:** The magnified parameter view is explicitly linked to the "General Core," not the "Least Important Region." One reading is that the core contains both critical and adaptable parameters, and that the adaptation strategy applies a different learning rate to each type within that core.

* **Typo:** The label for the chip contains a spelling error: "Target **Domian** Extension" should be "Target **Domain** Extension."

* **Color Coding:** Yellow is used consistently to highlight the components involved in active adaptation (the "least important" brain region and the target domain chip).

### Interpretation

This diagram illustrates an approach to **continual learning** or **transfer learning** in neural networks. The problem it addresses is "catastrophic forgetting," where a model loses its general capabilities when fine-tuned on a new, specific task.

The proposed solution is **selective, asymmetric fine-tuning**. Instead of updating all model parameters equally, it:

1. **Identifies and protects** the parameters most crucial for general knowledge and skills (the "General Core").

2. **Identifies and aggressively updates** parameters in the region deemed least important for the general domain, repurposing them for the new domain.

This strategy aims to achieve a balance: efficiently acquiring new domain-specific skills ("Target Domain Extension") while maintaining robust general knowledge and capabilities ("Preserve General Knowledge & Skills"). The use of different learning rates (`LR↑` vs. `LR↓`) is a practical implementation detail for achieving this balance during the training process. The diagram suggests that not all parts of a trained model are equally valuable for all tasks, and intelligent adaptation requires diagnosing and acting on this functional hierarchy.
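To make the trade-off concrete, here is a toy sketch of forgetting with uniform versus asymmetric learning rates. Everything in it is an assumption chosen for illustration: a two-parameter "model", a hypothetical general loss that depends only on `w[0]`, and a hypothetical target loss that either parameter can satisfy. It is not drawn from the diagram or from any specific method.

```python
def general_loss(w):
    # Hypothetical general-domain loss: only w[0] matters, optimum at 1.0.
    return (w[0] - 1.0) ** 2


def target_loss(w):
    # Hypothetical target-domain loss: any w with w[0] + w[1] == 3 is optimal.
    return (w[0] + w[1] - 3.0) ** 2


def target_grad(w):
    # Gradient of target_loss; it is the same for both parameters.
    r = 2.0 * (w[0] + w[1] - 3.0)
    return [r, r]


def fine_tune(w, lrs, steps=100):
    """Gradient descent on the target loss with one learning rate per parameter."""
    w = list(w)
    for _ in range(steps):
        g = target_grad(w)
        w = [wi - lr * gi for wi, lr, gi in zip(w, lrs, g)]
    return w


start = [1.0, 0.0]  # w[0] starts at the general-domain optimum

uniform = fine_tune(start, lrs=[0.1, 0.1])       # same rate everywhere
asymmetric = fine_tune(start, lrs=[0.001, 0.1])  # low rate on w[0], high on w[1]

for name, w in [("uniform", uniform), ("asymmetric", asymmetric)]:
    print(name, "general loss:", general_loss(w), "target loss:", target_loss(w))
```

Both runs fit the target, but the uniform run drags `w[0]` away from its general optimum, while the asymmetric run routes almost all of the change through `w[1]`. The toy only works because the target task can be solved with the less important parameter; when it cannot, protecting the critical parameters costs target-domain accuracy.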