
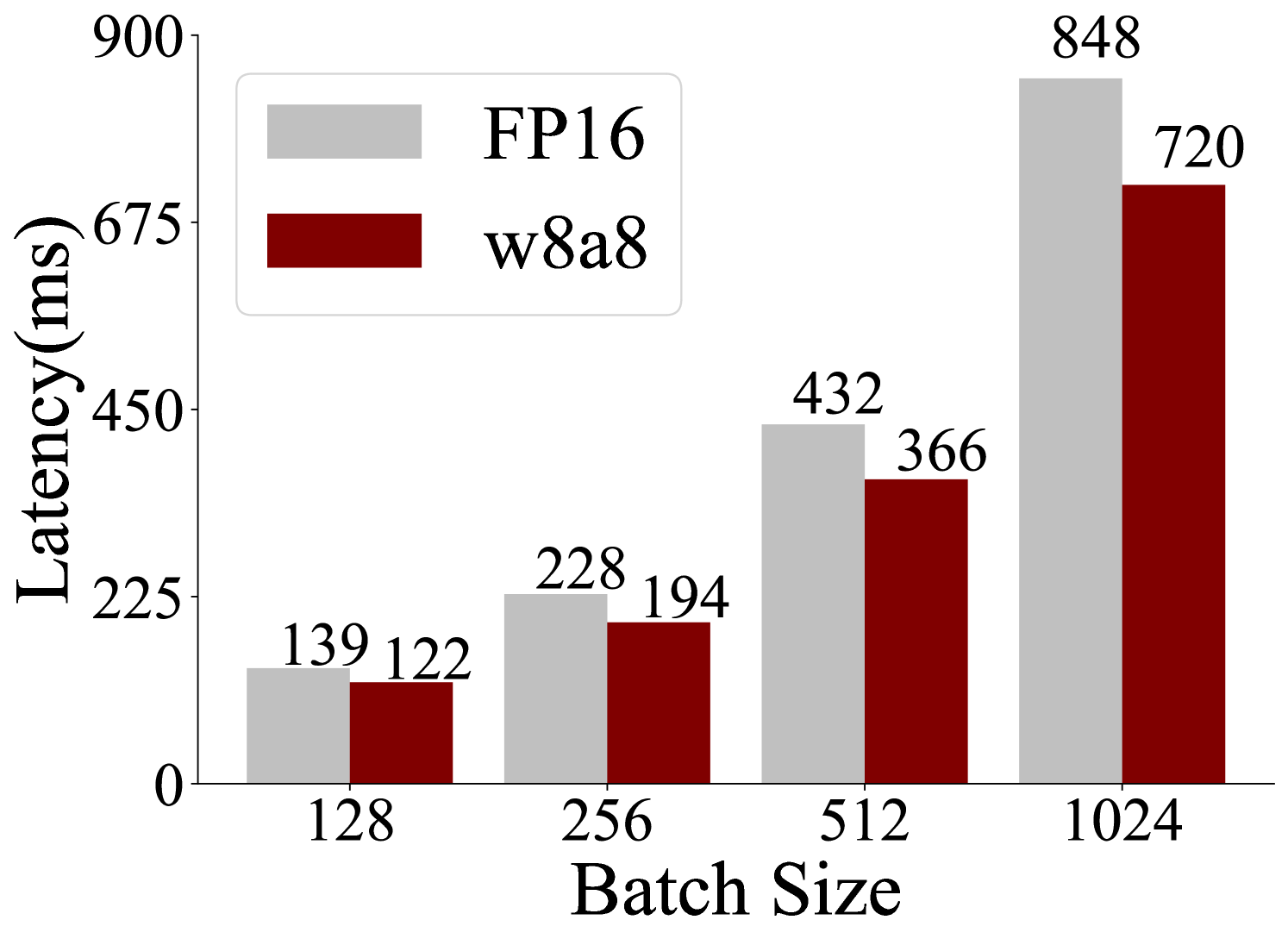

## Bar Chart: Latency vs. Batch Size for FP16 and w8a8

### Overview

This bar chart compares latency (in milliseconds) for two precision configurations, FP16 and w8a8, at batch sizes of 128, 256, 512, and 1024. Each batch size has one bar per configuration, so the two can be compared directly at every point.

### Components/Axes

* **X-axis:** Batch Size (labeled as "Batch Size"). Markers are 128, 256, 512, and 1024.

* **Y-axis:** Latency (in milliseconds) (labeled as "Latency(ms)"). Scale ranges from 0 to 900, with increments of approximately 225.

* **Legend:** Located in the top-left corner.

    * FP16: Represented by light gray bars.

    * w8a8: Represented by dark red bars.

### Detailed Analysis

The chart consists of paired bars for each batch size, representing FP16 and w8a8 latency.

* **Batch Size 128:**

    * FP16: Approximately 139 ms.

    * w8a8: Approximately 122 ms.

* **Batch Size 256:**

    * FP16: Approximately 228 ms.

    * w8a8: Approximately 194 ms.

* **Batch Size 512:**

    * FP16: Approximately 432 ms.

    * w8a8: Approximately 366 ms.

* **Batch Size 1024:**

    * FP16: Approximately 848 ms.

    * w8a8: Approximately 720 ms.
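The paired values above can be compared directly. The following is a minimal sketch that computes the absolute gap, the relative reduction, and the speedup at each batch size. It assumes the approximate readings listed above; the names `fp16_ms`, `w8a8_ms`, and `latency_gap` are hypothetical and chosen only for illustration.

```python
# Approximate latencies (ms) read from the chart; keys are batch sizes.
fp16_ms = {128: 139, 256: 228, 512: 432, 1024: 848}
w8a8_ms = {128: 122, 256: 194, 512: 366, 1024: 720}


def latency_gap(fp16, w8a8):
    """Return {batch: (absolute gap in ms, relative reduction, speedup)}."""
    result = {}
    for batch in fp16:
        gap = fp16[batch] - w8a8[batch]    # ms saved by w8a8
        reduction = gap / fp16[batch]      # fraction of FP16 latency saved
        speedup = fp16[batch] / w8a8[batch]
        result[batch] = (gap, reduction, speedup)
    return result


gaps = latency_gap(fp16_ms, w8a8_ms)
for batch, (gap, reduction, speedup) in gaps.items():
    print(f"batch {batch:>4}: gap {gap:>3} ms, "
          f"reduction {reduction:.1%}, speedup {speedup:.2f}x")
```

Because the inputs are visual estimates, the derived percentages are best treated as approximate as well.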

**Trends:**

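* **FP16:** Latency rises with batch size, from about 139 ms at batch size 128 to about 848 ms at 1024. Each doubling of the batch multiplies latency by roughly 1.6x (128 to 256), 1.9x (256 to 512), and just under 2x (512 to 1024), so growth is close to linear in batch size once the batch is large.

* **w8a8:** The pattern matches FP16: latency rises from about 122 ms to about 720 ms, with nearly the same per-doubling ratios (roughly 1.6x, 1.9x, and just under 2x).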

* **Comparison:** At every batch size, w8a8 shows lower latency than FP16. The absolute gap roughly doubles with each doubling of batch size (about 17, 34, 66, and 128 ms), while the relative reduction stays in a narrow band of roughly 12% to 15%.
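One way to check how linear the trends are is to look at the slope between adjacent points and at the latency per item. The sketch below does both, assuming the approximate chart readings; `segment_slopes` and `per_item_latency` are hypothetical helper names.

```python
batch_sizes = [128, 256, 512, 1024]
# Approximate latencies (ms) read from the chart.
latency_ms = {
    "FP16": [139, 228, 432, 848],
    "w8a8": [122, 194, 366, 720],
}


def segment_slopes(xs, ys):
    """Slope (ms per unit of batch size) between each pair of adjacent points."""
    points = list(zip(xs, ys))
    return [(y1 - y0) / (x1 - x0)
            for (x0, y0), (x1, y1) in zip(points, points[1:])]


def per_item_latency(xs, ys):
    """Latency divided by batch size at each measured point."""
    return [y / x for x, y in zip(xs, ys)]


for name, ys in latency_ms.items():
    slopes = segment_slopes(batch_sizes, ys)
    per_item = per_item_latency(batch_sizes, ys)
    print(name, "segment slopes:", [round(s, 3) for s in slopes])
    print(name, "ms per item:   ", [round(p, 3) for p in per_item])
```

With these readings, the segment slopes rise only modestly (from about 0.70 to about 0.81 ms per item for FP16) while latency per item falls, which is consistent with a fixed overhead plus a roughly constant per-item cost.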

### Key Observations

* w8a8 consistently outperforms FP16 in terms of latency across all tested batch sizes.

* Latency grows roughly sixfold for both configurations as the batch size increases eightfold, from 128 to 1024.

* In absolute terms, the gap between FP16 and w8a8 is largest at batch size 1024 (about 128 ms). In relative terms, it is nearly constant at about 15% from batch size 256 upward.

### Interpretation

The data suggests that w8a8 (conventionally, 8-bit weights and 8-bit activations) yields lower latency than FP16 at every tested batch size. The relative saving is smallest at batch size 128 (about 12%) and levels off near 15% from 256 upward, so the absolute time saved grows with the batch. Rising latency with batch size is expected, since a larger batch requires more computation. Latency grows slightly less than proportionally to batch size, which reflects the usual trade-off: larger batches take longer to complete but spend less time per item.

The consistent advantage of w8a8 suggests that lower-precision formats could be a useful optimization where latency is critical, especially at large batch sizes. The near-linear trend also suggests that the relationship is predictable enough to support simple performance modeling within the tested range.
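As an illustration of the performance-modeling idea, the sketch below fits a straight line (latency = slope × batch size + intercept) to each series by ordinary least squares. It assumes the approximate values read from the chart; `fit_line` and `predict` are hypothetical helpers, not part of any benchmark tooling. With only four points per series, the fit is a rough guide at best.

```python
batch_sizes = [128, 256, 512, 1024]
# Approximate latencies (ms) read from the chart.
latency_ms = {
    "FP16": [139, 228, 432, 848],
    "w8a8": [122, 194, 366, 720],
}


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(model, batch_size):
    """Estimated latency (ms) for a batch size under the fitted line."""
    slope, intercept = model
    return slope * batch_size + intercept


models = {name: fit_line(batch_sizes, ys) for name, ys in latency_ms.items()}
for name, (slope, intercept) in models.items():
    print(f"{name}: {slope:.3f} ms per item + {intercept:.1f} ms fixed")

# Interpolating inside the measured range is safer than extrapolating beyond it.
print(f"FP16 estimate at batch 768: {predict(models['FP16'], 768):.0f} ms")
```

On these readings, the fit comes out to roughly 0.80 ms per item plus about 29 ms of fixed cost for FP16, and roughly 0.67 ms per item plus about 27 ms for w8a8. Estimates for batch sizes outside 128 to 1024 would be extrapolation and should be treated with caution.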