
## Diagram: Deep Neural Network Layer Representation

### Overview

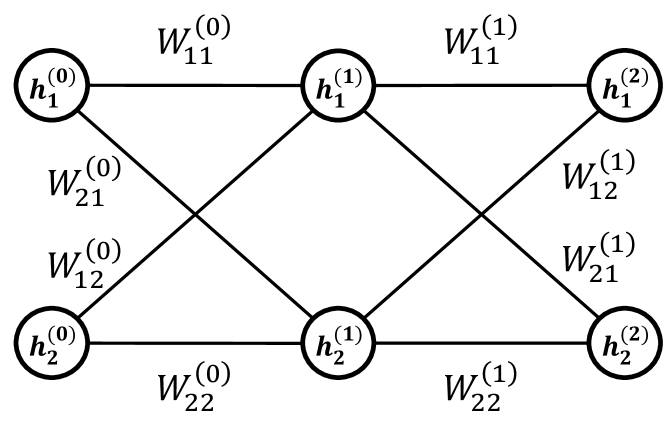

The figure shows a two-layer neural network with an input layer, one hidden layer, and an output layer, each containing two neurons. Lines connect the neurons in adjacent layers, and each line is labeled with a weight symbol.

### Components

The diagram consists of the following components:

* **Neurons:** Represented by circles labeled `h₁⁽⁰⁾`, `h₂⁽⁰⁾`, `h₁⁽¹⁾`, `h₂⁽¹⁾`, `h₁⁽²⁾`, and `h₂⁽²⁾`. The superscript indicates the layer number (0, 1, or 2), and the subscript indicates the neuron number within that layer (1 or 2).

* **Weights:** Represented by labels on the lines connecting neurons, written `Wᵢⱼ⁽ᵏ⁾`, where:

    * `i` is the index of the source neuron in layer `k`.

    * `j` is the index of the target neuron in layer `k+1`.

    * `k` is the layer the connection leaves (0 or 1).
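Under this index convention, each neuron in layer `k+1` combines every neuron in layer `k` through the corresponding weights. The figure does not show an activation function or bias terms, so the equation below assumes a generic elementwise activation σ and no biases; it is a sketch of the standard weighted-sum rule, not something read off the diagram:

$$
h_j^{(k+1)} = \sigma\!\left( \sum_{i=1}^{2} W_{ij}^{(k)} \, h_i^{(k)} \right), \qquad k \in \{0, 1\},\quad j \in \{1, 2\}
$$

For example, the first output neuron is $h_1^{(2)} = \sigma\big(W_{11}^{(1)} h_1^{(1)} + W_{21}^{(1)} h_2^{(1)}\big)$. In matrix form, with $W_{ij}^{(k)}$ stored in row $i$ and column $j$ of $W^{(k)}$, this is $h^{(k+1)} = \sigma\big((W^{(k)})^{\top} h^{(k)}\big)$.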

### Detailed Analysis

The diagram shows the following connections and corresponding weights:

* `h₁⁽⁰⁾` to `h₁⁽¹⁾`: `W₁₁⁽⁰⁾`

* `h₁⁽⁰⁾` to `h₂⁽¹⁾`: `W₂₁⁽⁰⁾`

* `h₂⁽⁰⁾` to `h₁⁽¹⁾`: `W₁₂⁽⁰⁾`

* `h₂⁽⁰⁾` to `h₂⁽¹⁾`: `W₂₂⁽⁰⁾`

* `h₁⁽¹⁾` to `h₁⁽²⁾`: `W₁₁⁽¹⁾`

* `h₁⁽¹⁾` to `h₂⁽²⁾`: `W₁₂⁽¹⁾`

* `h₂⁽¹⁾` to `h₁⁽²⁾`: `W₂₁⁽¹⁾`

* `h₂⁽¹⁾` to `h₂⁽²⁾`: `W₂₂⁽¹⁾`

The diagram does not provide numerical values for the weights; it only labels them. The structure indicates a fully connected network, where each neuron in one layer is connected to every neuron in the next layer.
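Because the network is fully connected, its connections can be generated from the layer sizes alone rather than listed by hand. The sketch below assumes layer widths `[2, 2, 2]` read off the figure; `fully_connected_edges` is a hypothetical helper, not part of any library. The connection count is $\sum_k n_k n_{k+1}$, here $2 \cdot 2 + 2 \cdot 2 = 8$, matching the eight connections listed above.

```python
from itertools import product


def fully_connected_edges(widths):
    """Return every (k, i, j) triple of a fully connected feedforward network.

    (k, i, j) means: neuron i in layer k connects to neuron j in layer k+1.
    Neurons are numbered from 1 to match the h_i^(k) labels in the figure.
    """
    edges = []
    for k in range(len(widths) - 1):
        sources = range(1, widths[k] + 1)
        targets = range(1, widths[k + 1] + 1)
        # Every source neuron connects to every target neuron.
        for i, j in product(sources, targets):
            edges.append((k, i, j))
    return edges


edges = fully_connected_edges([2, 2, 2])
for k, i, j in edges:
    print(f"h_{i}^({k}) -> h_{j}^({k + 1})")
print(len(edges))  # 8
```

The same helper works for any layer widths, which is why fully connected layers are usually described by their sizes rather than by an explicit edge list.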

### Key Observations

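The diagram illustrates a feedforward architecture: connections run only from each layer to the next, with no loops and no connections that skip a layer. Every layer has the same width of two neurons. The layer-1 labels follow the `i`-then-`j` convention defined above (source neuron first), whereas the layer-0 labels as listed put the target neuron first (for example, `h₁⁽⁰⁾` to `h₂⁽¹⁾` is labeled `W₂₁⁽⁰⁾`), which is the row-equals-target ordering common in matrix notation.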

### Interpretation

This diagram is a simplified view of a multi-layer perceptron (MLP), a fundamental building block of deep learning models. The weights `Wᵢⱼ⁽ᵏ⁾` set the strength of each connection and are adjusted during training so that the network learns patterns from data. The neuron superscripts number the layers from the input layer (0) through the hidden layer (1) to the output layer (2), and a weight's superscript `k` names the layer its connection leaves: the `W⁽⁰⁾` weights carry signals from the input layer to the hidden layer, and the `W⁽¹⁾` weights carry them from the hidden layer to the output layer. Because every neuron feeds every neuron in the next layer, each output depends on both inputs through these weights. The absence of numerical weight values indicates a conceptual illustration rather than a specific trained network.
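To make the flow of information concrete, the following is a minimal forward-pass sketch for the 2-2-2 network in the figure. It assumes the index convention from the Components section (`weights[k][i][j]` holds the weight from neuron `i+1` in layer `k` to neuron `j+1` in layer `k+1`), a logistic sigmoid activation, and no bias terms; the figure specifies none of these, and all weight and input values are hypothetical.

```python
import math


def sigmoid(x):
    """Logistic activation (an assumed choice; the figure shows none)."""
    return 1.0 / (1.0 + math.exp(-x))


def forward(weights, h0, activation=sigmoid):
    """Propagate input activations h0 through a fully connected network.

    weights[k][i][j] stores W_ij^(k): the weight from neuron i+1 in layer k
    to neuron j+1 in layer k+1 (lists are 0-based, figure labels 1-based).
    No bias terms are used. Returns the activations of every layer.
    """
    layers = [list(h0)]
    for W in weights:
        h = layers[-1]
        n_out = len(W[0])
        # h_j^(k+1) = activation(sum over i of W_ij^(k) * h_i^(k))
        h_next = [
            activation(sum(W[i][j] * h[i] for i in range(len(h))))
            for j in range(n_out)
        ]
        layers.append(h_next)
    return layers


# Hypothetical weights for the 2-2-2 network.
weights = [
    [[0.5, -0.5],   # W_11^(0), W_12^(0)
     [0.25, 1.0]],  # W_21^(0), W_22^(0)
    [[2.0, 0.0],    # W_11^(1), W_12^(1)
     [1.0, -1.0]],  # W_21^(1), W_22^(1)
]
h0 = [1.0, 2.0]  # hypothetical inputs h_1^(0), h_2^(0)

# An identity activation keeps the arithmetic easy to check by hand.
linear = forward(weights, h0, activation=lambda x: x)
print(linear)  # [[1.0, 2.0], [1.0, 1.5], [3.5, -1.5]]

# With the sigmoid, every output lies strictly between 0 and 1.
print(forward(weights, h0)[-1])
```

Training would adjust the entries of `weights` (for example by gradient descent on a loss over the outputs) while leaving this forward computation unchanged.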