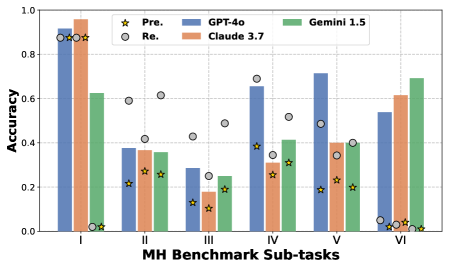

## Bar Chart: MH Benchmark Sub-task Accuracy Comparison

### Overview

This bar chart compares accuracy across the six sub-tasks (I through VI) of the MH Benchmark. Bars show three large language models: GPT-4o, Claude 3.7, and Gemini 1.5. Two further series are overlaid as markers: yellow stars labeled "Pre." and white circles labeled "Re.". The chart does not expand either label, so what they denote (for example, a baseline and a revised variant) is an inference. Accuracy is plotted on the y-axis from 0.0 to 1.0, and the x-axis lists the sub-tasks.

### Components/Axes

* **X-axis:** "MH Benchmark Sub-tasks" with tick labels I, II, III, IV, V, VI.

* **Y-axis:** "Accuracy" with a scale from 0.0 to 1.0, incrementing by 0.2.

* **Legend:**

    * Blue bars: GPT-4o

    * Orange bars: Claude 3.7

    * Green bars: Gemini 1.5

    * Yellow stars: Pre.

    * White circles: Re. (not defined in the chart; possibly a revised version of "Pre.")

* **Data Series:** Five data series, each with one accuracy value per sub-task: three bar series (GPT-4o, Claude 3.7, Gemini 1.5) and two marker series (Pre., Re.).

### Detailed Analysis

Here's a breakdown of the accuracy values for each model and sub-task, based on visual estimation:

* **Sub-task I:**

    * GPT-4o: Approximately 0.94

    * Claude 3.7: Approximately 0.88

    * Gemini 1.5: Approximately 0.62

    * Pre.: Approximately 0.05

    * Re.: Approximately 0.86

* **Sub-task II:**

    * GPT-4o: Approximately 0.38

    * Claude 3.7: Approximately 0.34

    * Gemini 1.5: Approximately 0.26

    * Pre.: Approximately 0.24

    * Re.: Approximately 0.36

* **Sub-task III:**

    * GPT-4o: Approximately 0.22

    * Claude 3.7: Approximately 0.24

    * Gemini 1.5: Approximately 0.18

    * Pre.: Approximately 0.12

    * Re.: Approximately 0.46

* **Sub-task IV:**

    * GPT-4o: Approximately 0.68

    * Claude 3.7: Approximately 0.32

    * Gemini 1.5: Approximately 0.38

    * Pre.: Approximately 0.28

    * Re.: Approximately 0.66

* **Sub-task V:**

    * GPT-4o: Approximately 0.72

    * Claude 3.7: Approximately 0.36

    * Gemini 1.5: Approximately 0.40

    * Pre.: Approximately 0.20

    * Re.: Approximately 0.48

* **Sub-task VI:**

    * GPT-4o: Approximately 0.50

    * Claude 3.7: Approximately 0.64

    * Gemini 1.5: Approximately 0.66

    * Pre.: Approximately 0.02

    * Re.: Approximately 0.04
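The estimates above can be collected into a small data structure and summarized programmatically. The following is a minimal Python sketch and not part of the original figure: the names `SUBTASKS`, `ACCURACY`, `mean_accuracy`, and `leader_per_subtask` are illustrative, and the numbers are the visual estimates listed above, so every result inherits their uncertainty.

```python
# Visual estimates transcribed from the breakdown above (approximate values).
SUBTASKS = ["I", "II", "III", "IV", "V", "VI"]
ACCURACY = {
    "GPT-4o":     [0.94, 0.38, 0.22, 0.68, 0.72, 0.50],
    "Claude 3.7": [0.88, 0.34, 0.24, 0.32, 0.36, 0.64],
    "Gemini 1.5": [0.62, 0.26, 0.18, 0.38, 0.40, 0.66],
    "Pre.":       [0.05, 0.24, 0.12, 0.28, 0.20, 0.02],
    "Re.":        [0.86, 0.36, 0.46, 0.66, 0.48, 0.04],
}


def mean_accuracy(scores):
    """Return the unweighted mean accuracy of each series over all sub-tasks."""
    return {name: sum(vals) / len(vals) for name, vals in scores.items()}


def leader_per_subtask(scores, subtasks):
    """Return, for each sub-task, the name of the series with the highest value."""
    leaders = {}
    for i, task in enumerate(subtasks):
        leaders[task] = max(scores, key=lambda name: scores[name][i])
    return leaders


if __name__ == "__main__":
    means = mean_accuracy(ACCURACY)
    # Print series from highest to lowest mean accuracy.
    for name in sorted(means, key=means.get, reverse=True):
        print(f"{name:<11} mean accuracy ~ {means[name]:.2f}")
    print(leader_per_subtask(ACCURACY, SUBTASKS))
```

Because the inputs are read off a chart by eye, small differences (for example 0.68 vs. 0.66 on Sub-task IV) should not be treated as meaningful rankings.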

**Trends:**

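* GPT-4o has the highest accuracy among the three bar models on Sub-tasks I, II, IV, and V. It trails Claude 3.7 slightly on Sub-task III (0.22 vs. 0.24) and trails both Claude 3.7 and Gemini 1.5 on Sub-task VI (0.50 vs. 0.64 and 0.66).

* Claude 3.7 is second to GPT-4o on Sub-tasks I and II and narrowly leads the bar models on Sub-task III, but falls behind Gemini 1.5 on Sub-tasks IV, V, and VI.

* Gemini 1.5 is the weakest bar model on Sub-tasks I through III, edges out Claude 3.7 on Sub-tasks IV and V, and has the highest accuracy of any series on Sub-task VI (about 0.66).

* The "Pre." series has the lowest accuracy on every sub-task, ranging from about 0.02 to about 0.28.

* The "Re." series is well above "Pre." on Sub-tasks I through V, where it exceeds Gemini 1.5 throughout and Claude 3.7 on Sub-tasks II through V. It is the highest series on Sub-task III (about 0.46). On Sub-task VI it drops to about 0.04, barely above "Pre.".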

### Key Observations

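* GPT-4o has the highest accuracy on Sub-task I (about 0.94), but its lead over Claude 3.7 (about 0.88) and "Re." (about 0.86) is small. Only Gemini 1.5 (about 0.62) and "Pre." (about 0.05) are far behind.

* Sub-task III yields the lowest scores for all three bar models (about 0.18 to 0.24). The marker series do not follow this pattern: "Re." reaches about 0.46 there, and "Pre." is lower on Sub-tasks I and VI than on Sub-task III.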

* Claude 3.7 and Gemini 1.5 show comparable performance on Sub-task VI, both exceeding GPT-4o's accuracy.

* The gap between GPT-4o and the other two bar models is most pronounced on Sub-tasks IV and V, where GPT-4o leads the better of the two by roughly 0.30.
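To make a gap comparison like this concrete, one can compute GPT-4o's margin over the better of the other two bar models on each sub-task. This is a hedged sketch using the visual estimates from the Detailed Analysis section; `BAR_MODELS` and `margin_over_best_rival` are hypothetical names introduced here for illustration.

```python
# Visual estimates for the three bar models only (approximate values).
SUBTASKS = ["I", "II", "III", "IV", "V", "VI"]
BAR_MODELS = {
    "GPT-4o":     [0.94, 0.38, 0.22, 0.68, 0.72, 0.50],
    "Claude 3.7": [0.88, 0.34, 0.24, 0.32, 0.36, 0.64],
    "Gemini 1.5": [0.62, 0.26, 0.18, 0.38, 0.40, 0.66],
}


def margin_over_best_rival(scores, target):
    """For each sub-task, return target's accuracy minus the best rival's accuracy.

    A positive margin means the target leads; a negative margin means it trails.
    Values are rounded to two decimals to match the precision of the estimates.
    """
    rivals = [name for name in scores if name != target]
    margins = []
    for i in range(len(scores[target])):
        best_rival = max(scores[r][i] for r in rivals)
        margins.append(round(scores[target][i] - best_rival, 2))
    return margins


if __name__ == "__main__":
    margins = margin_over_best_rival(BAR_MODELS, "GPT-4o")
    for task, m in zip(SUBTASKS, margins):
        print(f"Sub-task {task}: {m:+.2f}")
```

On these estimates the margin is positive on Sub-tasks I, II, IV, and V and negative on Sub-tasks III and VI, with the two largest positive margins on IV and V.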

### Interpretation

The data suggests that GPT-4o is the most accurate of the three bar models on four of the six sub-tasks (I, II, IV, and V), though not uniformly: it is narrowly behind Claude 3.7 on Sub-task III and clearly behind both other models on Sub-task VI. Claude 3.7 and Gemini 1.5 trade places depending on the sub-task, with Claude 3.7 ahead on Sub-tasks I through III and Gemini 1.5 ahead on Sub-tasks IV through VI. The "Pre." series is the lowest on every sub-task and reads as a baseline. The "Re." series improves substantially on "Pre." for Sub-tasks I through V and is competitive with the bar models there, but shows almost no improvement on Sub-task VI.

The varying performance across sub-tasks indicates that the models' strengths and weaknesses are task-dependent. Sub-task I yields the highest scores for every series except "Pre.", while Sub-task III is the hardest for the three bar models. These differences highlight the importance of evaluating models on a diverse set of tasks to gain a comprehensive understanding of their capabilities. The large gap between "Pre." and "Re." on most sub-tasks suggests that whatever distinguishes the two matters considerably for Sub-tasks I through V, but the chart alone does not indicate what that change is or why it has little effect on Sub-task VI.