## Diagram: Recurrent Neural Network with Learning Block

### Overview

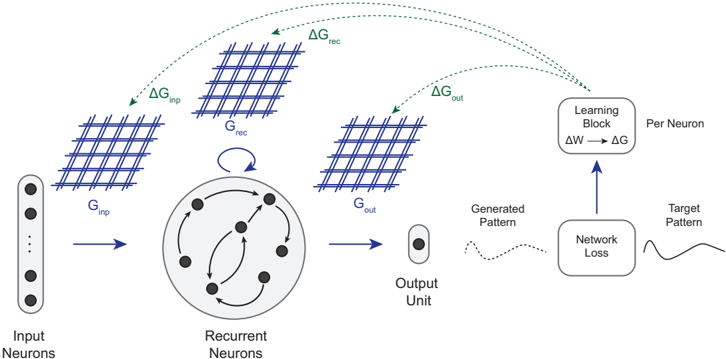

This diagram illustrates the architecture of a recurrent neural network (RNN) with a learning block and shows how information and gradients flow during training. It depicts input neurons, recurrent neurons, an output unit, the generated pattern, and a learning block that adjusts weights based on the network loss. Arrows indicate the direction of information flow; dashed arrows indicate gradient flow.

### Components

The diagram consists of the following components:

* **Input Neurons:** Represented as a vertical stack of circles on the left.

* **Recurrent Neurons:** A circular arrangement of nodes with interconnections, positioned centrally.

* **Output Unit:** A single circle on the right.

* **G<sub>inp</sub>:** A grid-like structure representing the input to the recurrent neurons.

* **G<sub>rec</sub>:** A grid-like structure representing the recurrent connections.

* **G<sub>out</sub>:** A grid-like structure representing the output from the recurrent neurons.

* **Learning Block:** A rectangular block on the top-right, labeled "Learning Block" and "Per Neuron".

* **Network Loss:** A box containing a wavy line, labeled "Network Loss".

* **Target Pattern:** A box containing a wavy line, labeled "Target Pattern".

* **Generated Pattern:** A box containing a wavy line, labeled "Generated Pattern".

* **ΔG<sub>inp</sub>, ΔG<sub>rec</sub>, ΔG<sub>out</sub>:** Labels marking the gradient-driven changes applied to the input, recurrent, and output grids, respectively.

* **ΔW → ΔG:** Expression within the Learning Block, indicating that a weight change (ΔW) is translated into a change in G (ΔG). The diagram does not define G beyond using it to label the three grids.
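
To make the roles of the three grids concrete, here is a minimal sketch of how they could be stored. It assumes that each grid is a weight matrix, which the diagram suggests but does not state. The names `Grids` and `init_grids`, the layer sizes, and the random initialization are all hypothetical choices made for illustration.

```python
import random
from dataclasses import dataclass


@dataclass
class Grids:
    """The three grids from the diagram, stored as row-major lists of lists."""
    G_inp: list  # n_rec rows x n_inp columns: input neurons -> recurrent neurons
    G_rec: list  # n_rec rows x n_rec columns: recurrent neurons -> recurrent neurons
    G_out: list  # n_out rows x n_rec columns: recurrent neurons -> output unit

    def shapes(self):
        """Return the (rows, columns) shape of each grid, keyed by its name."""
        return {name: (len(g), len(g[0])) for name, g in vars(self).items()}


def init_grids(n_inp, n_rec, n_out=1, scale=0.5, seed=0):
    """Build the three grids with small random values (an assumed initialization)."""
    rng = random.Random(seed)

    def grid(rows, cols):
        return [[rng.uniform(-scale, scale) for _ in range(cols)] for _ in range(rows)]

    return Grids(grid(n_rec, n_inp), grid(n_rec, n_rec), grid(n_out, n_rec))


grids = init_grids(n_inp=3, n_rec=8)
print(grids.shapes())  # → {'G_inp': (8, 3), 'G_rec': (8, 8), 'G_out': (1, 8)}
```

Under this reading, the only square grid is G<sub>rec</sub>, because it maps the recurrent neurons back onto themselves.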

### Detailed Analysis

The diagram shows the following information flow:

1. **Input to Recurrent Neurons:** Input Neurons feed into G<sub>inp</sub>, which then connects to the Recurrent Neurons.

2. **Recurrent Connections:** The Recurrent Neurons have internal connections represented by G<sub>rec</sub>, creating a feedback loop.

3. **Output from Recurrent Neurons:** The Recurrent Neurons output to G<sub>out</sub>, which then connects to the Output Unit.

4. **Pattern Generation:** The Output Unit generates a pattern.

5. **Loss Calculation:** The Generated Pattern is compared to the Target Pattern, resulting in Network Loss.

6. **Gradient Flow:** The Network Loss is propagated back along the dashed arrows, first through G<sub>out</sub> toward the Recurrent Neurons, where it produces the change ΔG<sub>out</sub>.

7. **Recurrent Gradient:** The gradient continues through the recurrent connections, producing ΔG<sub>rec</sub>.

8. **Input Gradient:** The gradient also flows back to the input connections, producing ΔG<sub>inp</sub>.

9. **Learning Block:** For each neuron, the Learning Block turns the loss signal into a weight change (ΔW) and then into the change (ΔG) applied to the grids.

The Learning Block contains the expression "ΔW → ΔG". Read left to right, it indicates that a weight change (ΔW) is converted into a change in G (ΔG), which is then applied to G<sub>inp</sub>, G<sub>rec</sub>, and G<sub>out</sub>.
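
The steps above can be sketched as a small training loop. This is an illustration under stated assumptions, not the method behind the diagram. It assumes the grids are weight matrices, a `tanh` nonlinearity, a squared-error loss, and backpropagation through time for the gradients. The diagram does not specify the "ΔW → ΔG" mapping, so the hypothetical `learning_block` below treats it as the identity. All function names, sizes, and values are invented for the example.

```python
import math


def matvec(grid, vec):
    """Multiply a grid (list of rows) by a vector."""
    return [sum(g * v for g, v in zip(row, vec)) for row in grid]


def zeros_like(grid):
    return [[0.0] * len(row) for row in grid]


def loss_and_gradients(G_inp, G_rec, G_out, inputs, target):
    """Forward pass, squared-error loss, and gradients via backpropagation through time."""
    n_rec = len(G_rec)
    hs = [[0.0] * n_rec]  # hs[t] is the recurrent state before step t
    ys = []               # generated pattern, one value per time step
    for x in inputs:
        drive = [a + b for a, b in zip(matvec(G_inp, x), matvec(G_rec, hs[-1]))]
        hs.append([math.tanh(d) for d in drive])
        ys.append(matvec(G_out, hs[-1])[0])
    loss = 0.5 * sum((y - t) ** 2 for y, t in zip(ys, target))

    d_inp, d_rec, d_out = zeros_like(G_inp), zeros_like(G_rec), zeros_like(G_out)
    dh_next = [0.0] * n_rec  # gradient arriving from later time steps
    for t in reversed(range(len(inputs))):
        h, h_prev, x = hs[t + 1], hs[t], inputs[t]
        dy = ys[t] - target[t]
        for j in range(n_rec):
            d_out[0][j] += dy * h[j]
        dh = [G_out[0][j] * dy + dh_next[j] for j in range(n_rec)]
        da = [dh[i] * (1.0 - h[i] ** 2) for i in range(n_rec)]  # through tanh
        for i in range(n_rec):
            for k in range(len(x)):
                d_inp[i][k] += da[i] * x[k]
            for j in range(n_rec):
                d_rec[i][j] += da[i] * h_prev[j]
        dh_next = [sum(G_rec[i][j] * da[i] for i in range(n_rec)) for j in range(n_rec)]
    return loss, (d_inp, d_rec, d_out)


def learning_block(grid, grad, lr=0.05):
    """Hypothetical 'ΔW → ΔG' step, applied in place to one grid."""
    for i, row in enumerate(grad):
        for j, g in enumerate(row):
            delta_w = -lr * g   # weight change from plain gradient descent
            delta_g = delta_w   # ASSUMPTION: identity mapping from ΔW to ΔG
            grid[i][j] += delta_g


# Toy problem: one input neuron, two recurrent neurons, one output unit.
G_inp = [[0.3], [-0.2]]
G_rec = [[0.1, 0.4], [-0.3, 0.2]]
G_out = [[0.5, -0.5]]
inputs = [[1.0], [0.0], [0.0], [0.0]]
target = [0.5, 0.3, 0.1, 0.0]  # target pattern

losses = []
for _ in range(200):
    loss, (d_inp, d_rec, d_out) = loss_and_gradients(G_inp, G_rec, G_out, inputs, target)
    losses.append(loss)
    learning_block(G_inp, d_inp)
    learning_block(G_rec, d_rec)
    learning_block(G_out, d_out)
```

Comparing `losses[0]` with `losses[-1]` shows whether the generated pattern has moved toward the target. A different ΔW → ΔG mapping (for example, one that clips or quantizes the update) would only require changing the line marked as an assumption.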

### Key Observations

* The diagram emphasizes the feedback loop inherent in recurrent neural networks through the recurrent connections (G<sub>rec</sub>).

* The gradient flow is drawn with dashed arrows, which sets the backward pass (backpropagation) apart from the forward flow of information.

* The Learning Block highlights the weight adjustment mechanism based on the network loss.

* The grid-like structures (G<sub>inp</sub>, G<sub>rec</sub>, G<sub>out</sub>) likely represent weight matrices or activation patterns.

### Interpretation

This diagram illustrates the core principles of training a recurrent neural network. The network learns by comparing its generated output to a target pattern and adjusting its weights to reduce the network loss. The recurrent connections let the network retain a "memory" of past inputs, which makes it suitable for processing sequential data. Backpropagation carries the loss back through the output, recurrent, and input connections so that each set of weights can be updated.

The grids (G<sub>inp</sub>, G<sub>rec</sub>, G<sub>out</sub>) suggest a matrix-based representation of the network's connections and activations, as is common in deep learning implementations. The diagram is conceptual: it provides no numerical values or detailed algorithmic steps, but it conveys the overall architecture and training process of an RNN.
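
The "memory" provided by the recurrent connections can be shown with a short sketch. It assumes the grids are weight matrices and uses a `tanh` nonlinearity; the function `run` and all numeric values are hypothetical. A single input pulse is fed in at the first time step, once with recurrent connections and once with G<sub>rec</sub> set to zero.

```python
import math


def run(G_inp, G_rec, G_out, inputs):
    """Run the network over a sequence and return the output at each step."""
    h = [0.0] * len(G_rec)  # recurrent state, initially silent
    outputs = []
    for x in inputs:
        drive = [
            sum(w * xi for w, xi in zip(G_inp[i], x))
            + sum(w * hj for w, hj in zip(G_rec[i], h))
            for i in range(len(G_rec))
        ]
        h = [math.tanh(d) for d in drive]
        outputs.append(sum(w * hj for w, hj in zip(G_out[0], h)))
    return outputs


G_inp = [[0.8], [-0.6]]
G_out = [[1.0, 1.0]]
impulse = [[1.0], [0.0], [0.0], [0.0]]  # input only at the first time step

with_memory = run(G_inp, [[0.5, 0.3], [-0.2, 0.6]], G_out, impulse)
without_memory = run(G_inp, [[0.0, 0.0], [0.0, 0.0]], G_out, impulse)

print(with_memory)
print(without_memory)
```

With G<sub>rec</sub> set to zero, the output returns to zero as soon as the input does. With nonzero recurrent connections, the pulse keeps influencing later outputs, which is the feedback loop the diagram draws around the recurrent neurons.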