## Diagram: Hybrid Autoencoder with Differentiable Clustering and Rule Learning Architecture

### Overview

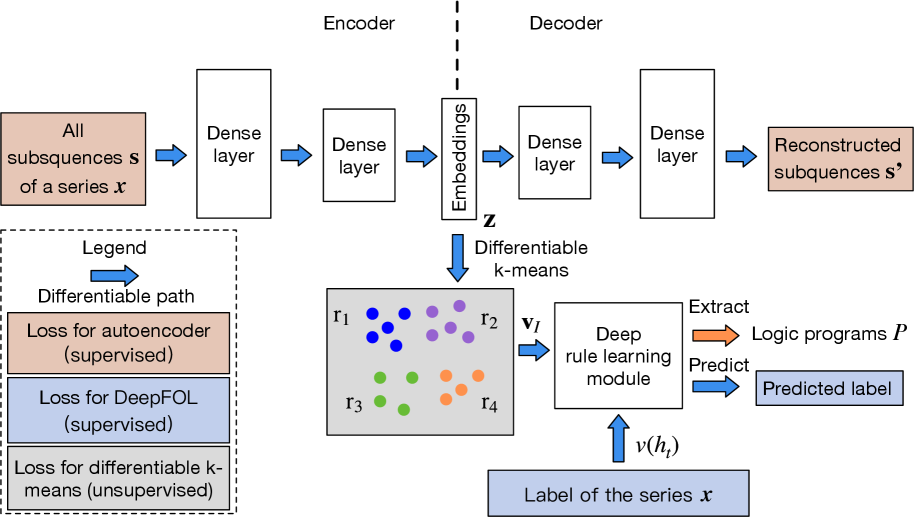

The image displays a technical architecture diagram for a machine learning model that combines an autoencoder with differentiable k-means clustering and a deep rule learning module. The system processes time-series data, learns compressed representations, clusters them, and then extracts interpretable logic programs for prediction. The diagram is divided into two primary sections: an **Encoder-Decoder Autoencoder** (top) and a **Rule Learning Pipeline** (bottom), connected via learned embeddings.

### Components/Axes

The diagram is not a chart with axes but a flowchart with labeled components and directional arrows indicating data flow.

**Primary Components:**

1. **Encoder:** Maps the input subsequences **s** to embeddings **z** through two dense layers.

2. **Decoder:** Reconstructs the input from the learned representation.

3. **Differentiable k-means:** Clusters the learned embeddings.

4. **Deep rule learning module:** Learns logical rules from cluster assignments and labels.

5. **Legend:** Defines the meaning of colored boxes and arrows.

**Legend (Located in the bottom-left corner, enclosed in a dashed box):**

* **Blue Arrow:** Labeled "Differentiable path".

* **Light Orange Box:** Labeled "Loss for autoencoder (supervised)".

* **Light Blue Box:** Labeled "Loss for DeepFOL (supervised)".

* **Light Gray Box:** Labeled "Loss for differentiable k-means (unsupervised)".

### Detailed Analysis

**1. Encoder-Decoder (Autoencoder) Path:**

* **Input (Top-Left):** A light orange box labeled "All subsequences **s** of a series **x**".

* **Encoder Flow:** The input passes through a "Dense layer" (white box), then another "Dense layer" (white box), resulting in "Embeddings **z**" (a vertical white box). A dashed vertical line separates the Encoder and Decoder sections.

* **Decoder Flow:** The "Embeddings **z**" pass through a "Dense layer" (white box), then another "Dense layer" (white box), leading to the output.

* **Output (Top-Right):** A light orange box labeled "Reconstructed subsequences **s'**".

* **Loss Connection:** The input and output boxes are colored light orange, corresponding to the "Loss for autoencoder (supervised)" in the legend.
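The autoencoder input and its loss can be sketched as follows. This is a minimal illustration, not the diagram's implementation: it assumes that "all subsequences" means contiguous fixed-length windows and that the autoencoder loss is a mean squared error. The names `subsequences`, `reconstruction_loss`, `length`, and `stride` are hypothetical.

```python
def subsequences(x, length, stride=1):
    """Return all contiguous windows of `length` samples from series `x`.

    Assumption: the diagram's "all subsequences s of a series x" are
    fixed-length sliding windows; the diagram does not state this.
    """
    if length <= 0 or stride <= 0:
        raise ValueError("length and stride must be positive")
    return [list(x[i:i + length]) for i in range(0, len(x) - length + 1, stride)]


def reconstruction_loss(s, s_rec):
    """Mean squared error between subsequences s and reconstructions s'.

    Assumption: MSE is used; the diagram only names the loss.
    """
    total, count = 0.0, 0
    for window, window_rec in zip(s, s_rec):
        for a, b in zip(window, window_rec):
            total += (a - b) ** 2
            count += 1
    return total / count


x = [0.0, 1.0, 2.0, 3.0, 4.0]
s = subsequences(x, length=3)
print(s)
print(reconstruction_loss(s, s))  # a perfect reconstruction has zero loss
```

In a full model, `s_rec` would come from passing `s` through the encoder and decoder dense layers rather than being supplied directly.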

**2. Rule Learning Pipeline:**

* **Input to Clustering:** A blue arrow labeled "Differentiable k-means" points downward from the "Embeddings **z**" box.

* **Clustering Module:** A gray box contains four clusters of colored dots, labeled **r₁** (blue dots), **r₂** (purple dots), **r₃** (green dots), and **r₄** (orange dots). This represents the output of the differentiable k-means algorithm.

* **Cluster Assignment Vector:** A blue arrow labeled "**v_I**" points from the clustering box to the next module.

* **Deep Rule Learning Module:** A white box labeled "Deep rule learning module".

* **Label Input:** A light blue box at the bottom labeled "Label of the series **x**" feeds into the rule learning module via an arrow labeled "**v(h_t)**".

* **Outputs of Rule Learning:**

    * An orange arrow labeled "Extract" points to a light orange box labeled "Logic programs **P**".

    * A blue arrow labeled "Predict" points to a light blue box labeled "Predicted label".

* **Loss Connections:** The "Label of the series **x**" and "Predicted label" boxes are light blue, corresponding to "Loss for DeepFOL (supervised)". The clustering box is light gray, corresponding to "Loss for differentiable k-means (unsupervised)".
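One common way to make k-means differentiable is to replace the hard nearest-centroid assignment with a softmax over negative squared distances. The sketch below assumes that relaxation; the diagram does not specify which one is used. The function name `soft_assign`, the `temperature` parameter, and the centroid values are hypothetical.

```python
import math


def soft_assign(z, centroids, temperature=1.0):
    """Soft cluster membership of embedding z: softmax over negative squared distances.

    Assumption: this is one standard relaxation of k-means, not necessarily
    the one used in the diagram.
    """
    d2 = [sum((zi - ci) ** 2 for zi, ci in zip(z, c)) for c in centroids]
    # Shift by the smallest distance before exponentiating for numerical stability.
    d_min = min(d2)
    w = [math.exp(-(d - d_min) / temperature) for d in d2]
    total = sum(w)
    return [wi / total for wi in w]


# Hypothetical 2-D centroids standing in for clusters r1..r4.
centroids = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
weights = soft_assign([0.9, 0.1], centroids, temperature=0.1)
print(weights)
```

Lowering `temperature` pushes the weights toward a one-hot vector, which approaches the hard assignment of ordinary k-means while keeping gradients defined.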

### Key Observations

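1. **Hybrid Supervised/Unsupervised Learning:** The architecture integrates three distinct loss functions. The legend labels two of them as supervised (autoencoder reconstruction and final prediction) and one as unsupervised (clustering). Note that the reconstruction target is the input subsequence itself rather than an external label.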

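2. **End-to-End Differentiability:** The legend explicitly marks a "Differentiable path" (blue arrows). These arrows run from the embeddings **z** through the differentiable k-means step and the rule learning module to the predicted label, indicating that this path, including the clustering, is designed to be trained with gradient-based optimization. The "Extract" arrow is orange rather than blue, which suggests that logic program extraction lies outside the differentiable path.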

3. **Interpretability Focus:** The system's goal extends beyond prediction to extracting "Logic programs **P**", suggesting a focus on creating interpretable models from the learned clusters and rules.

4. **Data Flow Segmentation:** The diagram clearly separates the representation learning phase (autoencoder) from the symbolic rule extraction phase (rule learning module), with the embeddings **z** and cluster assignments **v_I** serving as the bridge.
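To make the idea of an extracted logic program concrete, the sketch below shows one possible shape for **P**: a set of rules whose bodies are literals over the cluster predicates **r₁** to **r₄**. This is purely illustrative. The diagram does not show the rule format, and the rule contents, the `program` structure, and the helper names `predict` and `to_text` are all hypothetical.

```python
# Each rule body is a list of (cluster_name, required_truth_value) literals.
# The program is a disjunction of rule bodies sharing one head: the label.
# Assumption: rules are propositional over r1..r4; the diagram does not say so.
program = [
    [("r1", True), ("r3", False)],  # label :- r1, not r3.
    [("r4", True)],                 # label :- r4.
]


def predict(program, interpretation):
    """Return True if at least one rule body is fully satisfied."""
    return any(
        all(interpretation[name] == value for name, value in body)
        for body in program
    )


def to_text(program, head="label"):
    """Render the program as human-readable rules."""
    lines = []
    for body in program:
        literals = [name if value else f"not {name}" for name, value in body]
        lines.append(f"{head} :- {', '.join(literals)}.")
    return "\n".join(lines)


# Which clusters are present in a given series (hypothetical values).
series_clusters = {"r1": True, "r2": False, "r3": False, "r4": False}
print(to_text(program))
print(predict(program, series_clusters))
```

A representation like this is what makes the output inspectable: each prediction can be traced back to the specific rule body that fired.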

### Interpretation

This diagram illustrates a machine learning framework designed for **interpretable time-series analysis**. The autoencoder first learns a compressed, latent representation (**z**) of input subsequences. This representation is then clustered in a differentiable manner, allowing the cluster assignments to be optimized jointly with the rest of the network. The core innovation appears to be the "Deep rule learning module," which takes the cluster assignment vector (**v_I**) and the true series label (passed in as **v(h_t)**) to learn human-readable logic programs (**P**).

The architecture moves from raw data (the series **x**) to a symbolic representation (the logic programs **P**). The "Predicted label" is produced by the rule learning module, so predictions follow from the learned rules rather than from a separate classifier. The separation of losses indicates a multi-objective optimization: the model must reconstruct its input well (autoencoder loss), form meaningful clusters (k-means loss), and make accurate, rule-based predictions (DeepFOL loss). The ultimate value of this system lies in its potential to provide **explainable predictions** for time-series data by revealing the underlying logical rules it has discovered, moving beyond black-box predictions.
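The multi-objective optimization described above can be sketched as a weighted sum of the three losses. This is an assumption about how the terms are combined, since the diagram shows only that three losses exist. The function names and the weights `w_kmeans` and `w_rules` are hypothetical, and the k-means term shown is one common assignment-weighted formulation.

```python
def kmeans_loss(embeddings, centroids, assignments):
    """Average assignment-weighted squared distance to the centroids.

    Assumption: `assignments[i][k]` is the membership weight of embedding i
    in cluster k; the diagram does not define the clustering loss.
    """
    total = 0.0
    for z, weights in zip(embeddings, assignments):
        for c, w in zip(centroids, weights):
            total += w * sum((zi - ci) ** 2 for zi, ci in zip(z, c))
    return total / len(embeddings)


def total_loss(l_autoencoder, l_kmeans, l_rules, w_kmeans=1.0, w_rules=1.0):
    """Combine the three loss terms as a weighted sum (hypothetical weighting)."""
    return l_autoencoder + w_kmeans * l_kmeans + w_rules * l_rules


embeddings = [[0.0, 0.0], [1.0, 1.0]]
centroids = [[0.0, 0.0], [1.0, 1.0]]
assignments = [[1.0, 0.0], [0.0, 1.0]]  # each embedding sits on its own centroid
l_km = kmeans_loss(embeddings, centroids, assignments)
print(total_loss(0.5, l_km, 0.125, w_kmeans=2.0, w_rules=4.0))
```

The weights control the trade-off between reconstruction quality, cluster compactness, and prediction accuracy; how they are set in the actual system is not shown in the diagram.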