TECHNICAL ASSET FINGERPRINT

bdc848b56c25a81d6b5d641b

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Chart: Per-Token Test Loss vs. Token Index and Step

### Overview

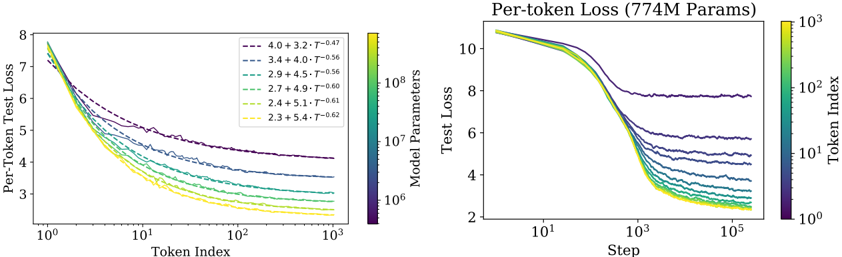

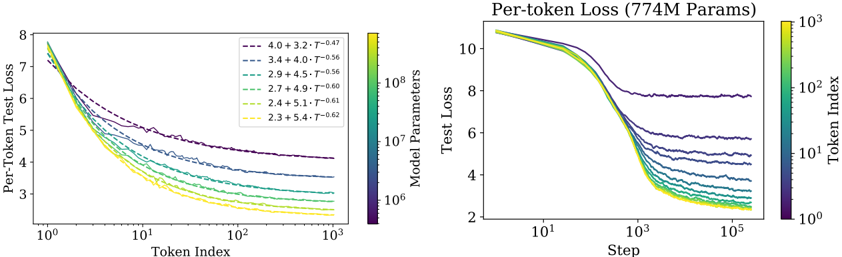

The image presents two line charts comparing per-token test loss against token index (left) and step (right). Both charts display multiple lines, each representing a different model parameter configuration. A color gradient is used to represent the model parameters and token index.

### Components/Axes

**Left Chart:**

* **Title:** Per-Token Test Loss

* **X-axis:** Token Index (Logarithmic scale from 10^0 to 10^3)

* **Y-axis:** Per-Token Test Loss (Linear scale from 2 to 8)

* **Legend:** Located at the top-right of the left chart. The legend entries are color-coded and represent different model parameter configurations. The color gradient ranges from dark purple to yellow, corresponding to the following values:

    * Dark Purple: 4.0 + 3.2 * T^-0.47

    * Purple: 3.4 + 4.0 * T^-0.56

    * Green-Blue: 2.9 + 4.5 * T^-0.56

    * Green: 2.7 + 4.9 * T^-0.60

    * Yellow-Green: 2.4 + 5.1 * T^-0.61

    * Yellow: 2.3 + 5.4 * T^-0.62
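To make the legend entries concrete, the following is a minimal sketch that evaluates each formula at the two ends of the left chart's x-axis. It assumes every entry has the form `a + b * T^(-c)` with `T` the token index; the names `FITS`, `fitted_loss`, and `endpoints` are illustrative and do not come from the source.

```python
# Legend entries as transcribed above: loss(T) = a + b * T**(-c).
# The (a, b, c) tuple layout and the name FITS are illustrative assumptions.
FITS = [
    (4.0, 3.2, 0.47),
    (3.4, 4.0, 0.56),
    (2.9, 4.5, 0.56),
    (2.7, 4.9, 0.60),
    (2.4, 5.1, 0.61),
    (2.3, 5.4, 0.62),
]


def fitted_loss(a, b, c, token_index):
    """Evaluate a + b * T^(-c) at a token index T >= 1."""
    return a + b * token_index ** (-c)


def endpoints(fits, t_start=1, t_end=1000):
    """Return (loss at t_start, loss at t_end) for every fit."""
    return [
        (fitted_loss(a, b, c, t_start), fitted_loss(a, b, c, t_end))
        for a, b, c in fits
    ]


if __name__ == "__main__":
    for (a, b, c), (first, last) in zip(FITS, endpoints(FITS)):
        print(f"{a} + {b} * T^-{c}: T=1 -> {first:.2f}, T=1000 -> {last:.2f}")
```

At `T = 1` each formula reduces to `a + b`, so the six entries span 7.2 to 7.7. These are values of the fitted curves only; numbers read off the plotted lines need not match them exactly.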

**Right Chart:**

* **Title:** Per-token Loss (774M Params)

* **X-axis:** Step (Logarithmic scale from 10^1 to 10^5)

* **Y-axis:** Test Loss (Linear scale from 2 to 10)

* **Color Bar (Right Side):** Token Index (Logarithmic scale from 10^0 to 10^3)

### Detailed Analysis

**Left Chart (Per-Token Test Loss vs. Token Index):**

* **Trend:** All lines show a decreasing trend as the token index increases. The rate of decrease diminishes as the token index grows larger.

* **Data Points:**

    * The dark purple line (4.0 + 3.2 * T^-0.47) starts at approximately 7.5 at Token Index 1 and decreases to approximately 4.5 at Token Index 1000.

    * The purple line (3.4 + 4.0 * T^-0.56) starts at approximately 7.5 at Token Index 1 and decreases to approximately 4.2 at Token Index 1000.

    * The green-blue line (2.9 + 4.5 * T^-0.56) starts at approximately 7.5 at Token Index 1 and decreases to approximately 3.8 at Token Index 1000.

    * The green line (2.7 + 4.9 * T^-0.60) starts at approximately 7.5 at Token Index 1 and decreases to approximately 3.5 at Token Index 1000.

    * The yellow-green line (2.4 + 5.1 * T^-0.61) starts at approximately 7.5 at Token Index 1 and decreases to approximately 3.2 at Token Index 1000.

    * The yellow line (2.3 + 5.4 * T^-0.62) starts at approximately 7.5 at Token Index 1 and decreases to approximately 3.0 at Token Index 1000.

**Right Chart (Per-token Loss vs. Step):**

* **Trend:** All lines show a decreasing trend as the step increases. The rate of decrease diminishes as the step grows larger.

* **Data Points:**

    * The lines start at approximately 10 at Step 10^1 and decrease to between 6 and 2 at Step 10^5.

    * The lines are clustered together, making it difficult to distinguish individual values.

### Key Observations

* In both charts, the loss decreases along the x-axis: with token index in the left chart and with training step in the right chart.

* The left chart shows a clear separation between the lines, indicating that different model parameter configurations result in different loss values.

* The right chart shows the loss curves flattening as the step increases, suggesting that training is reaching a point of diminishing returns. The lines still end at different values (between 6 and 2 at Step 10^5), so they flatten rather than meet.

### Interpretation

The charts show how the per-token test loss of a language model decreases with token index (left chart) and with training step (right chart). The lines in the left chart represent different model parameter configurations, and the color gradient there encodes the model parameters. The lines in the right chart all belong to a single 774M-parameter model, and the color gradient there encodes the token index. The flattening of the lines at high step counts in the right chart suggests that the model is reaching a point of diminishing returns, where further training does not significantly improve the loss.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Chart: Per-Token Loss vs. Token Index & Step

### Overview

The image presents two charts displaying per-token loss as a function of token index (left) and step (right). Both charts show multiple lines representing different model parameter sizes, with a color gradient indicating the token index or step. The right chart has a title indicating the model size is 774M parameters.

### Components/Axes

**Left Chart:**

* **X-axis:** Token Index (logarithmic scale, ranging from approximately 10^2 to 10^3).

* **Y-axis:** Per-Token Test Loss (ranging from approximately 3 to 8).

* **Lines:** Represent different model parameter configurations. Each line is labeled with a parameter configuration (e.g., "4.0 + 3.2 • T^-0.47").

* **Colorbar:** Located on the right, representing Token Index with a gradient from green to yellow to red.

**Right Chart:**

* **X-axis:** Step (logarithmic scale, ranging from approximately 10^1 to 10^5).

* **Y-axis:** Test Loss (ranging from approximately 2 to 10).

* **Lines:** Represent different model parameter configurations. Each line is labeled with a parameter configuration (e.g., "4.0 + 3.2 • T^-0.47").

* **Colorbar:** Located on the right, representing Token Index with a gradient from green to yellow to red.

* **Title:** "Per-token Loss (774M Params)"

### Detailed Analysis or Content Details

**Left Chart:**

* **Line 1 (Purple):** 4.0 + 3.2 • T^-0.47. Starts at approximately 7.5 and decreases to approximately 4.5. The line exhibits some oscillation.

* **Line 2 (Dark Blue):** 3.4 + 4.0 • T^-0.56. Starts at approximately 7.0 and decreases to approximately 4.0. The line exhibits some oscillation.

* **Line 3 (Green):** 2.9 + 4.5 • T^-0.56. Starts at approximately 6.5 and decreases to approximately 3.5. The line exhibits some oscillation.

* **Line 4 (Light Green):** 2.7 + 4.9 • T^-0.60. Starts at approximately 6.0 and decreases to approximately 3.2. The line exhibits some oscillation.

* **Line 5 (Yellow):** 2.4 + 5.1 • T^-0.61. Starts at approximately 5.5 and decreases to approximately 3.0. The line exhibits some oscillation.

* **Line 6 (Orange):** 2.3 + 5.4 • T^-0.62. Starts at approximately 5.0 and decreases to approximately 2.8. The line exhibits some oscillation.

**Right Chart:**

* **Line 1 (Purple):** 4.0 + 3.2 • T^-0.47. Starts at approximately 9.0 and decreases rapidly to approximately 4.5, then plateaus with some oscillation.

* **Line 2 (Dark Blue):** 3.4 + 4.0 • T^-0.56. Starts at approximately 8.5 and decreases rapidly to approximately 4.0, then plateaus with some oscillation.

* **Line 3 (Green):** 2.9 + 4.5 • T^-0.56. Starts at approximately 8.0 and decreases rapidly to approximately 3.5, then plateaus with some oscillation.

* **Line 4 (Light Green):** 2.7 + 4.9 • T^-0.60. Starts at approximately 7.5 and decreases rapidly to approximately 3.2, then plateaus with some oscillation.

* **Line 5 (Yellow):** 2.4 + 5.1 • T^-0.61. Starts at approximately 7.0 and decreases rapidly to approximately 3.0, then plateaus with some oscillation.

* **Line 6 (Orange):** 2.3 + 5.4 • T^-0.62. Starts at approximately 6.5 and decreases rapidly to approximately 2.8, then plateaus with some oscillation.

### Key Observations

* In both charts, the lines generally trend downwards, indicating decreasing loss as token index or step increases.

* The lines whose labels have larger constant terms (e.g., 4.0 + 3.2 • T^-0.47) start with higher loss and decrease more slowly.

* The lines whose labels have smaller constant terms (e.g., 2.3 + 5.4 • T^-0.62) start with lower loss and decrease more rapidly.

* All lines exhibit some degree of oscillation, suggesting instability or fluctuations in the learning process.

* The color gradient on the colorbar does not appear to be directly correlated with the line colors.
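The ordering of the lines described above is read from the plot. As a cross-check on the label formulas alone, the sketch below locates the token index at which two fitted curves meet, assuming each label has the form `a + b * T^(-c)`. The function names and the bisection approach are illustrative choices, not something taken from the source.

```python
def fitted_loss(a, b, c, t):
    """Evaluate a + b * t^(-c) for t >= 1."""
    return a + b * t ** (-c)


def crossover(fit_1, fit_2, lo=1.0, hi=1000.0, iters=60):
    """Bisect for the T in [lo, hi] where two fitted curves meet.

    Returns None when the curves do not change order between lo and hi.
    """
    def gap(t):
        return fitted_loss(*fit_1, t) - fitted_loss(*fit_2, t)

    if gap(lo) * gap(hi) > 0:
        return None  # same ordering at both ends, no crossing bracketed
    for _ in range(iters):
        mid = (lo * hi) ** 0.5  # geometric midpoint suits a log-scaled axis
        if gap(lo) * gap(mid) <= 0:
            hi = mid
        else:
            lo = mid
    return (lo * hi) ** 0.5


if __name__ == "__main__":
    first_label = (4.0, 3.2, 0.47)  # "4.0 + 3.2 • T^-0.47"
    last_label = (2.3, 5.4, 0.62)   # "2.3 + 5.4 • T^-0.62"
    print(crossover(first_label, last_label))
```

For the first and last labels, the formulas give 7.2 and 7.7 at `T = 1`, so by the fits alone the curve with the smaller constant term starts slightly higher and drops below the other between `T = 1` and `T = 2`. This concerns the fitted formulas only and does not settle how the plotted lines are ordered at the left edge.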

### Interpretation

The charts demonstrate the relationship between model parameters, token index/step, and per-token loss during training. The different lines represent models with varying parameter configurations. The decreasing loss indicates that the models are learning and improving their performance as they process more tokens or steps. The parameter configurations influence the initial loss and the rate at which the loss falls. The oscillations suggest that the training process is not perfectly smooth and may require adjustments to hyperparameters or optimization algorithms. The right chart, specifically, shows how the loss levels off as the model progresses through training steps. The 774M parameter size indicates the scale of the models being evaluated. The data suggests a link between initial loss and the rate of loss decrease: lines whose labels have larger constant terms start at higher loss and decrease more slowly. The colorbar, while present, doesn't seem to provide additional information about the data itself, and may be a visual artifact or represent a different dimension not directly displayed on the charts.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Dual Chart Analysis: Scaling Laws in Language Model Training

### Overview

The image displays two related line charts analyzing the relationship between language model performance (measured by test loss), model size, training data (tokens), and training duration (steps). The charts appear to be from a technical paper on scaling laws, illustrating how loss decreases with increased model parameters and training data.

### Components/Axes

**Left Chart:**

- **Title/Y-axis:** "Per-Token Test Loss" (Linear scale, range ~2 to 8).

- **X-axis:** "Token Index" (Logarithmic scale, range 10⁰ to 10³).

- **Legend (Top-Left):** Contains seven entries, each a dashed line of a different color paired with a mathematical formula of the form `a + b * T^(-c)`. The formulas are:

    1. Purple: `4.0 + 3.2 * T^(-0.47)`

    2. Dark Blue: `3.4 + 4.0 * T^(-0.56)`

    3. Teal: `2.9 + 4.5 * T^(-0.56)`

    4. Green: `2.7 + 4.9 * T^(-0.60)`

    5. Light Green: `2.4 + 5.1 * T^(-0.61)`

    6. Yellow-Green: `2.3 + 5.4 * T^(-0.62)`

    7. Yellow: (The last formula is partially cut off but follows the same pattern).

- **Color Bar (Right side):** Labeled "Model Parameters". It is a vertical gradient bar with a logarithmic scale, marked at 10⁶, 10⁷, and 10⁸. The color gradient runs from dark purple (low parameters) to bright yellow (high parameters), corresponding to the line colors in the chart.

**Right Chart:**

- **Title:** "Per-token Loss (774M Params)".

- **Y-axis:** "Test Loss" (Linear scale, range ~2 to 10).

- **X-axis:** "Step" (Logarithmic scale, range 10¹ to 10⁵).

- **Color Bar (Right side):** Labeled "Token Index". It is a vertical gradient bar with a logarithmic scale, marked at 10⁰, 10¹, 10², and 10³. The color gradient runs from dark purple (low token index) to bright yellow (high token index).

### Detailed Analysis

**Left Chart Analysis (Loss vs. Token Index for Various Model Sizes):**

- **Trend Verification:** All seven lines show a clear downward trend, sloping from the top-left to the bottom-right. Test loss decreases as the Token Index increases. The rate of decrease (steepness) is greater for lines representing larger models (yellow/green) compared to smaller models (purple/blue).

- **Data Series & Values:** Each colored line corresponds to a model of a specific size, as indicated by the color bar. The purple line (smallest model, ~10⁶ params) has the highest loss, starting near 8 at Token Index 1 and plateauing around 4 at Token Index 1000. The yellow line (largest model, ~10⁸ params) has the lowest loss, starting near 7.5 and dropping to approximately 2.2 at Token Index 1000.

- **Legend Cross-Reference:** The formulas in the legend appear to be fitted power-law scaling equations for each model size, where `T` is the Token Index. The constant term (e.g., 4.0, 3.4) represents the asymptotic loss, and the exponent (e.g., -0.47, -0.56) indicates the scaling efficiency. Larger models have lower asymptotes and more negative exponents (steeper descent).
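The roles of the constant term and the exponent can be illustrated with a short calculation. The sketch below assumes the `a + b * T^(-c)` form quoted from the legend and solves for the token index at which a curve comes within a chosen distance of its asymptote `a`. The function name `tokens_to_reach` and the 0.1 threshold are illustrative assumptions.

```python
def tokens_to_reach(b, c, gap):
    """Token index T at which b * T^(-c) equals gap.

    Solving b * T^(-c) = gap for T gives T = (b / gap) ** (1 / c).
    The asymptote a does not appear: it shifts the curve without
    changing how fast the remaining gap closes.
    """
    if b <= 0 or c <= 0 or gap <= 0:
        raise ValueError("b, c and gap must all be positive")
    return (b / gap) ** (1.0 / c)


if __name__ == "__main__":
    # Coefficient and exponent of the first and sixth legend entries.
    for label, b, c in [("purple", 3.2, 0.47), ("yellow-green", 5.4, 0.62)]:
        print(label, tokens_to_reach(b, c, gap=0.1))
```

With these numbers, the entry with the larger exponent reaches the 0.1 gap at a smaller token index even though its coefficient is larger. Results beyond token index 10³ extrapolate past the plotted range and should be treated with caution.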

**Right Chart Analysis (Loss vs. Training Step for a Fixed Model Size):**

- **Trend Verification:** All lines show a sigmoidal (S-shaped) downward trend. They start high and flat, undergo a period of rapid decrease between steps 10² and 10⁴, and then begin to plateau after step 10⁴.

- **Data Series & Values:** Each colored line represents training on a different number of tokens (Token Index), as per the color bar. The dark purple line (Token Index ~1) shows the least improvement, plateauing at a high loss (~8). The bright yellow line (Token Index ~1000) shows the most improvement, reaching the lowest loss (~2.5). Lines for intermediate token counts (e.g., teal for ~10²) plateau at intermediate loss values.

- **Spatial Grounding:** The lines are layered, with the yellow line (most tokens) at the bottom (lowest loss) and the purple line (fewest tokens) at the top (highest loss) in the plateau region (right side of the chart).

### Key Observations

1. **Consistent Scaling:** Both charts demonstrate that increasing either the number of model parameters (left chart) or the number of training tokens (right chart) leads to lower per-token test loss.

2. **Power-Law Behavior:** The left chart's legend explicitly shows that loss scales as a power law with the number of tokens (`T^(-c)`), a fundamental finding in neural scaling laws.

3. **Diminishing Returns:** The curves in both charts flatten out, indicating diminishing returns. Adding more parameters or tokens yields progressively smaller improvements in loss.

4. **Interplay of Factors:** The right chart, fixed at 774M parameters, shows that model performance is ultimately limited by the amount of training data, even for a model of a given size.
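The diminishing returns noted in point 3 can be quantified for the left chart's fitted curves. This is a minimal sketch, assuming the `a + b * T^(-c)` form from the legend; `drop_per_decade` is a hypothetical helper name.

```python
def drop_per_decade(b, c, decades=3):
    """Loss reduction over each tenfold increase of T, starting at T = 1.

    The asymptote a cancels in the difference, so only b and c matter.
    """
    drops = []
    for k in range(decades):
        start, end = 10 ** k, 10 ** (k + 1)
        drops.append(b * (start ** (-c) - end ** (-c)))
    return drops


if __name__ == "__main__":
    # Coefficient and exponent from the legend entry 2.3 + 5.4 * T^(-0.62).
    for k, drop in enumerate(drop_per_decade(5.4, 0.62)):
        print(f"T = 10^{k} -> 10^{k + 1}: loss falls by {drop:.3f}")
```

Under this form, each decade of token index removes a fixed fraction, `10^(-c)`, of what the previous decade removed, which is why the curves flatten on a logarithmic x-axis.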

### Interpretation

These charts provide empirical evidence for scaling laws in neural language models. The left chart illustrates **data scaling**: for a given model architecture, performance improves predictably with more training data, following a power law. The different curves show that larger models are more data-efficient—they achieve lower loss with the same number of tokens and have steeper scaling exponents.

The right chart illustrates **training dynamics**: for a specific model size (774M parameters), the training process (measured in steps) must be sufficiently long to realize the benefits of a large dataset. A model trained on many tokens (yellow line) requires more steps to converge but ultimately reaches a much lower loss than a model trained on few tokens (purple line), which converges quickly to a poor loss.

**Underlying Message:** The data suggests that optimal model performance requires co-scaling both model size and dataset size. A large model trained on insufficient data will plateau at a high loss (right chart, purple line), while a small model, even with abundant data, is limited by its capacity (left chart, purple line). The mathematical fits in the left legend provide a quantitative tool for predicting this performance, which is crucial for planning resource allocation in large-scale AI training. The charts collectively argue for the "scaling hypothesis" – that increasing scale in a coordinated fashion is a primary driver of capability improvement.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graphs: Per-Token Test Loss vs. Token Index and Step

### Overview

The image contains two side-by-side line graphs comparing per-token test loss across different model configurations. The left graph plots loss against token index (log scale), while the right graph plots loss against training steps (log scale). Both graphs use color-coded lines to represent model parameters and training-related variables.

### Components/Axes

**Left Graph (Per-Token Test Loss):**

- **X-axis**: Token Index (log scale, 10⁰ to 10³)

- **Y-axis**: Per-Token Test Loss (2 to 8)

- **Legend**:

    - Line styles/colors correspond to formulas that combine a constant term (4.0, 3.4, 2.9, 2.7, 2.4, 2.3) with a coefficient on a negative power of T (3.2, 4.0, 4.5, 4.9, 5.1, 5.4)

    - Example: "4.0 + 3.2 * T^-0.47" (dashed purple line)

- **Color Bar**: Not present (legend uses discrete colors)

**Right Graph (Per-token Loss, 774M Params):**

- **X-axis**: Step (log scale, 10¹ to 10⁵)

- **Y-axis**: Test Loss (2 to 10)

- **Legend**:

- Color gradient from yellow (10³) to purple (10⁸) representing model parameters

- No explicit labels for individual lines

### Detailed Analysis

**Left Graph Trends:**

1. All lines show decreasing loss as token index increases, with steeper declines at lower token indices.

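2. Lines with larger coefficients on T (e.g., 5.4) also carry larger exponents in their labels (0.62 versus 0.47 for the 3.2 line), so by the formulas they fall more steeply, not more shallowly, than lines with smaller coefficients.

3. The constant terms (4.0 vs. 2.3) track the loss at high token index: the line with the smaller constant term ends at the lower loss.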

4. Example data points:

    - "4.0 + 3.2 * T^-0.47": Starts at ~7.5 loss at token index 10⁰, ends at ~4.2 at 10³

    - "2.3 + 5.4 * T^-0.62": Starts at ~7.8 loss, ends at ~3.0 at 10³

**Right Graph Trends:**

1. All lines show rapid initial loss reduction (steps 10¹–10³), then plateau.

2. Higher parameter models (yellow) maintain lower loss than lower parameter models (purple).

3. Example data points:

- 10³ parameters: Loss drops from ~10 to ~4 by step 10³

- 10⁸ parameters: Loss drops from ~10 to ~2.5 by step 10⁵

### Key Observations

1. **Log Scale Impact**: Both x-axes use logarithmic scales, which spreads out the early tokens and steps where the loss changes fastest.

2. **Parameter Efficiency**: Higher parameter models (left graph's 4.0 vs. right graph's 10⁸) achieve better loss reduction.

3. **Training Dynamics**: The right graph suggests diminishing returns in loss reduction after ~10³ steps for all models.

4. **Coefficient Influence**: In the left graph, legend entries with larger coefficients on T also have larger exponents, so the T-dependent part of their loss shrinks faster as the token index grows.

### Interpretation

The data demonstrates that:

1. **Model Capacity Matters**: Larger models (higher parameters) consistently outperform smaller ones in both token index and step-based loss reduction.

2. **Training Efficiency**: Loss reduction follows a power-law decay, with most significant improvements occurring in the initial training phases (first 10³ steps/tokens).

3. **Fit Terms**: The coefficients and exponents on T in the left graph's legend set each curve's steepness. T appears to be the token index on the x-axis rather than a separate regularization or optimization parameter.

4. **Scalability Limits**: The plateauing loss in the right graph suggests diminishing returns for further training beyond ~10³ steps, regardless of model size.
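The power-law decay mentioned in point 2 can be written out explicitly. As a sketch, assume each legend entry of the left graph has the form of a constant plus a coefficient times a negative power of the token index $T$; the symbols $a$, $b$, and $c$ are labels chosen here for those three numbers:

$$
L(T) = a + b\,T^{-c}
\quad\Longrightarrow\quad
\log\bigl(L(T) - a\bigr) = \log b - c\,\log T
$$

Under that assumption, the part of the loss above the constant term, $L(T) - a$, is a straight line of slope $-c$ on log-log axes. For the entry 2.3 + 5.4 * T^-0.62 this gives $a = 2.3$, $b = 5.4$, $c = 0.62$.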

The graphs collectively illustrate how model size (parameters), training progress (steps), and token index combine to determine per-token loss, with the left graph's legend summarizing the token-index dependence as fitted formulas in T. The color-coded legends provide critical context for comparing these multidimensional relationships.
