## Neural Network Diagram: Federated Learning with Matryoshka Representations

### Overview

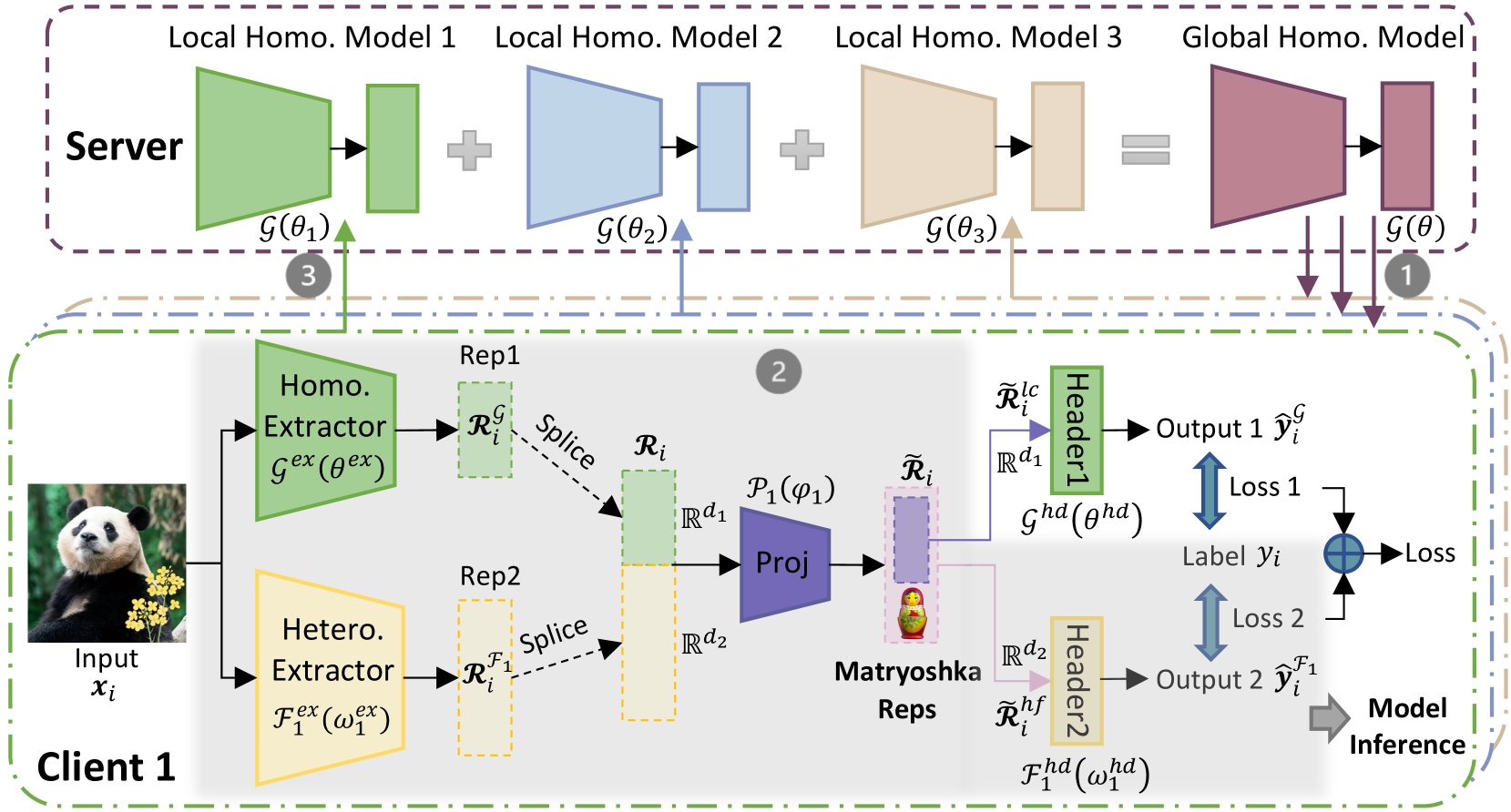

The diagram shows a federated learning system built around "Matryoshka Representations." A server aggregates homogeneous models received from multiple clients (drawn as three Local Homo. Models), while each client (only Client 1 is drawn) performs local feature extraction. Arrows trace the flow of data and parameters between client and server, and through the processing steps inside each.

### Components/Axes

**Regions:**

* **Server (Top):** Enclosed in a dashed purple box.

* **Client 1 (Bottom):** Enclosed in a dashed green box.

**Server Components:**

* **Local Homo. Model 1:** Represented by a green trapezoid and a green rectangle, labeled as $G(\theta_1)$.

* **Local Homo. Model 2:** Represented by a blue trapezoid and a blue rectangle, labeled as $G(\theta_2)$.

* **Local Homo. Model 3:** Represented by a tan trapezoid and a tan rectangle, labeled as $G(\theta_3)$.

* **Global Homo. Model:** Represented by a maroon trapezoid and a maroon rectangle, labeled as $G(\theta)$.

**Client 1 Components:**

* **Input:** An image of a panda, labeled as "Input $x_i$".

* **Homo. Extractor:** A green trapezoid labeled as "$G^{ex}(\theta^{ex})$".

* **Hetero. Extractor:** A tan trapezoid labeled as "$F^{ex}(\omega_1^{ex})$".

* **Rep1:** A dashed green rectangle labeled as "$R_i^{G}$".

* **Rep2:** A dashed tan rectangle labeled as "$R_i^{F_1}$".

* **Proj:** A blue trapezoid labeled as "$P_1(\varphi_1)$".

* **Matryoshka Reps:** A purple rectangle containing a Matryoshka doll.

* **Header1:** A green rectangle labeled as "Header1".

* **Header2:** A tan rectangle labeled as "Header2".

* **Output 1:** Labeled as "Output 1 $\hat{y}_i^{G}$".

* **Output 2:** Labeled as "Output 2 $\hat{y}_i^{F_1}$".

* **Label:** Labeled as "Label $y_i$".

**Arrows and Labels:**

* Arrows indicate the flow of data and parameters.

* "Splice" labels indicate the combination of representations.

* "Loss 1" and "Loss 2" indicate loss calculations.

* "Model Inference" indicates the final output.

* $\mathbb{R}^{d_1}$ and $\mathbb{R}^{d_2}$ indicate the dimensionality of the representations.

* $\tilde{R}_i^{lc}$ and $\tilde{R}_i^{hf}$ are the two representations output by the Matryoshka Reps block.

**Numerical Indicators:**

* (1), (2), and (3) in gray circles indicate different stages or steps in the process.

### Detailed Analysis

**Server Side:**

* The server aggregates information from three "Local Homo. Models." Each model consists of a trapezoidal shape followed by a rectangular shape.

* The outputs of the local models are combined (indicated by "+" signs) to form a "Global Homo. Model."
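The "+" combination above can be sketched as an element-wise weighted average of parameters. This is only an illustration: the diagram does not state the aggregation rule or its weights, so the equal weights, the `aggregate` helper, and the `extractor`/`header` parameter names are all assumptions.

```python
from typing import Dict, List

# Hypothetical flat parameter layout: parameter name -> list of values.
Params = Dict[str, List[float]]


def aggregate(local_models: List[Params], weights: List[float]) -> Params:
    """Weighted element-wise average of models that share keys and shapes."""
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    global_model: Params = {}
    for name in local_models[0]:
        size = len(local_models[0][name])
        global_model[name] = [
            sum(w * m[name][j] for w, m in zip(weights, local_models)) / total
            for j in range(size)
        ]
    return global_model


# Three toy local models standing in for the three Local Homo. Models.
theta_1 = {"extractor": [1.0, 2.0], "header": [0.0]}
theta_2 = {"extractor": [3.0, 4.0], "header": [3.0]}
theta_3 = {"extractor": [5.0, 6.0], "header": [6.0]}

# Equal weights are an assumption; a real system might weight by sample count.
theta = aggregate([theta_1, theta_2, theta_3], weights=[1.0, 1.0, 1.0])
print(theta)
```

Averaging like this only works because the local models are homogeneous, that is, they share one architecture and therefore one parameter layout.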

**Client Side:**

* The client takes an image as input and extracts features using two extractors: "Homo. Extractor" and "Hetero. Extractor."

* The outputs of the extractors are represented as "$R_i^{G}$" and "$R_i^{F_1}$" respectively.

* These representations are spliced and projected using "Proj."

* The projected representation is then processed by "Matryoshka Reps."

* The outputs of the Matryoshka Reps are fed into "Header1" and "Header2" to produce "Output 1" and "Output 2."

* Losses are calculated based on the difference between the outputs and the "Label yi."
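The client-side steps above can be traced in a minimal forward-pass sketch. Several details are assumptions, because the diagram does not spell them out: single linear layers stand in for every block, the sizes `D1 < D2` are arbitrary, the Matryoshka step is modeled as taking a prefix of the projected vector, Header1 is assumed to read the shorter representation and Header2 the longer one, and the two losses are summed without weights.

```python
import math
import random

random.seed(0)


def linear(in_dim, out_dim):
    """Random weight matrix (out_dim x in_dim) standing in for a trained layer."""
    return [[random.uniform(-0.5, 0.5) for _ in range(in_dim)] for _ in range(out_dim)]


def apply(weight, x):
    """Matrix-vector product."""
    return [sum(w * v for w, v in zip(row, x)) for row in weight]


def cross_entropy(logits, label):
    """Softmax cross-entropy for a single example."""
    peak = max(logits)
    log_norm = peak + math.log(sum(math.exp(z - peak) for z in logits))
    return log_norm - logits[label]


# Hypothetical sizes: input dim, two representation dims, class count.
IN_DIM, D1, D2, CLASSES = 8, 4, 6, 3

homo_extractor = linear(IN_DIM, D1)    # Homo. Extractor
hetero_extractor = linear(IN_DIM, D2)  # Hetero. Extractor
proj = linear(D1 + D2, D2)             # Proj, applied to the spliced vector
header1 = linear(D1, CLASSES)          # Header1 (assumed: short representation)
header2 = linear(D2, CLASSES)          # Header2 (assumed: full representation)

x_i = [random.uniform(-1.0, 1.0) for _ in range(IN_DIM)]  # Input
y_i = 1                                                   # Label

rep1 = apply(homo_extractor, x_i)      # Rep1
rep2 = apply(hetero_extractor, x_i)    # Rep2
spliced = rep1 + rep2                  # Splice: concatenation of the two lists
projected = apply(proj, spliced)

# Matryoshka Reps (assumed form): the short vector is a prefix of the full one.
rep_short = projected[:D1]
rep_full = projected[:D2]

output1 = apply(header1, rep_short)    # Output 1
output2 = apply(header2, rep_full)     # Output 2

loss1 = cross_entropy(output1, y_i)    # Loss 1
loss2 = cross_entropy(output2, y_i)    # Loss 2
total_loss = loss1 + loss2             # assumption: unweighted sum
print(round(loss1, 4), round(loss2, 4), round(total_loss, 4))
```

Because `rep_short` is a slice of `rep_full`, both headers are driven by the same projected vector, so a gradient from either loss would reach the projector and both extractors.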

**Data Flow:**

* The "Global Homo. Model" parameters are sent to the client (indicated by arrow 1).

* The client processes the input and sends information back to the server (indicated by arrow 2).

* The server combines the local homogeneous models it has received into the updated Global Homo. Model (indicated by arrow 3).
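The three numbered steps can be read as one communication round that repeats. The toy loop below is a sketch under stated assumptions: the "information" sent back is taken to be the updated homogeneous model parameters, client training is replaced by a single gradient step on a quadratic objective, and the server uses an equal-weight mean. The names `local_update`, `average`, and `client_targets` are hypothetical.

```python
from typing import List


def local_update(global_params: List[float], target: List[float], lr: float = 0.5) -> List[float]:
    """Stand-in for client training: one gradient step on 0.5 * ||params - target||^2."""
    return [p - lr * (p - t) for p, t in zip(global_params, target)]


def average(models: List[List[float]]) -> List[float]:
    """Equal-weight element-wise mean of the uploaded parameter vectors."""
    return [sum(column) / len(models) for column in zip(*models)]


# Hypothetical per-client optima that play the role of private local data.
client_targets = [[0.0, 0.0], [2.0, 4.0], [4.0, 8.0]]
global_params = [10.0, 10.0]

for round_index in range(3):
    # Step 1: the server sends the global homogeneous model to every client.
    received = [list(global_params) for _ in client_targets]
    # Step 2: each client trains locally and sends its updated model back.
    uploads = [local_update(p, t) for p, t in zip(received, client_targets)]
    # Step 3: the server combines the uploads into the next global model.
    global_params = average(uploads)
    print(round_index, global_params)
```

In this toy setting the global parameters move toward the mean of the client optima, `[2.0, 4.0]`, without any client revealing its target to the server.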

### Key Observations

* The diagram illustrates a federated learning setup where the client performs local feature extraction and the server aggregates information from multiple clients.

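* The "Matryoshka Reps" block, the two dimensionality labels, and the two separate headers together suggest nested representations at two sizes: each header reads a representation of a different dimensionality and is trained with its own loss.

* The two extractors play different roles. The "Homo." (homogeneous) extractor matches the models held on the server, so its parameters can be aggregated across clients, while the "Hetero." (heterogeneous) extractor carries a client index in its label and appears to be specific to Client 1.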

### Interpretation

The diagram depicts a federated learning system for settings where data is distributed across clients and centralizing it for training is not feasible or desirable. The server learns a global model by aggregating model information from the clients rather than collecting their data. On the client, a homogeneous extractor works alongside a client-specific heterogeneous extractor; their representations are spliced, projected, and turned into Matryoshka representations that feed two headers, each trained with its own loss against the label. The "Matryoshka Reps" block presumably lets both headers draw on one projected representation at different dimensionalities, although the diagram alone does not show what benefit this brings.