## Dual-Axis Line Chart: Average Math-benchmark Accuracy vs. Compression-Rate on Llama3.2-3B Model

### Overview

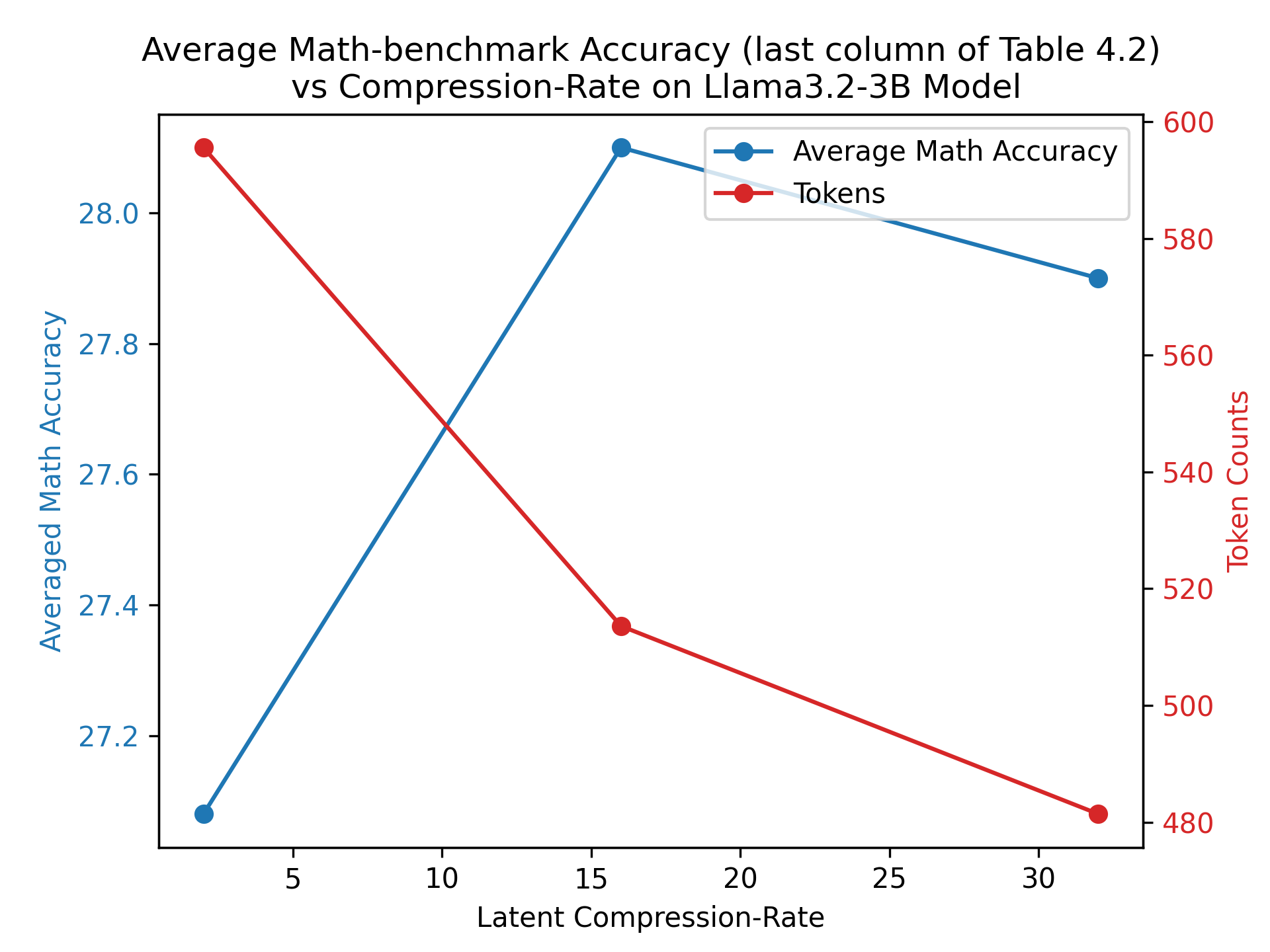

This dual-axis line chart plots two metrics against a shared independent variable, the "Latent Compression-Rate" applied to a Llama3.2-3B model. The two dependent variables are "Averaged Math Accuracy" (left y-axis) and "Token Counts" (right y-axis). The chart examines the trade-offs between latent compression, performance (accuracy), and output length (tokens).

### Components/Axes

* **Chart Title:** "Average Math-benchmark Accuracy (last column of Table 4.2) vs Compression-Rate on Llama3.2-3B Model"

* **X-Axis (Bottom):**
  * **Label:** "Latent Compression-Rate"
  * **Scale:** Linear scale with major tick marks at 5, 10, 15, 20, 25, and 30.

* **Primary Y-Axis (Left):**
  * **Label:** "Averaged Math Accuracy"
  * **Scale:** Linear scale with major tick marks at 27.2, 27.4, 27.6, 27.8, and 28.0.
  * **Color:** Blue, corresponding to the "Average Math Accuracy" data series.

* **Secondary Y-Axis (Right):**
  * **Label:** "Token Counts"
  * **Scale:** Linear scale with major tick marks at 480, 500, 520, 540, 560, 580, and 600.
  * **Color:** Red, corresponding to the "Tokens" data series.

* **Legend:**
  * **Position:** Top-right corner of the plot area.
  * **Series 1:** A blue line with circular markers, labeled "Average Math Accuracy."
  * **Series 2:** A red line with circular markers, labeled "Tokens."

### Detailed Analysis

The chart displays three data points for each series, connected by straight lines.

**Data Series 1: Average Math Accuracy (Blue Line, Left Y-Axis)**

* **Trend Verification:** The blue line rises steeply from the first point to the second, then slopes gently downward to the third.

* **Data Points (Approximate):**

1. At a Latent Compression-Rate of **~2**, the Averaged Math Accuracy is **~27.1**.

2. At a Latent Compression-Rate of **~16**, the Averaged Math Accuracy peaks at **~28.1**.

3. At a Latent Compression-Rate of **~32**, the Averaged Math Accuracy decreases to **~27.9**.
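The shape of the blue curve can be checked directly from the three readings. A minimal sketch, assuming the eyeballed values above are close enough to treat as inputs (they are not exact figures from Table 4.2); the variable names are illustrative:

```python
# Approximate readings from the chart (eyeballed, not exact values from Table 4.2).
rates = [2, 16, 32]             # Latent Compression-Rate (~2, ~16, ~32)
accuracy = [27.1, 28.1, 27.9]   # Averaged Math Accuracy (left y-axis)

# Change in accuracy across each segment of the blue line, rounded to hide float noise.
deltas = [round(b - a, 2) for a, b in zip(accuracy, accuracy[1:])]

# Index of the highest reading, i.e. the peak of the curve.
peak = max(range(len(accuracy)), key=lambda i: accuracy[i])

print("segment deltas:", deltas)
print("peak at rate", rates[peak], "with accuracy", accuracy[peak])
```

The segment deltas come out to about +1.0 and -0.2 points, matching the steep rise and gentle fall of the blue line.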

**Data Series 2: Tokens (Red Line, Right Y-Axis)**

* **Trend Verification:** The red line decreases monotonically across all three data points. The drop is steeper between the first and second points than between the second and third.

* **Data Points (Approximate):**

1. At a Latent Compression-Rate of **~2**, the Token Count is **~595**.

2. At a Latent Compression-Rate of **~16**, the Token Count is **~515**.

3. At a Latent Compression-Rate of **~32**, the Token Count is **~480**.
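The slope of each red-line segment shows how quickly token counts fall. A minimal sketch, again treating the approximate readings above as exact inputs; names are illustrative:

```python
# Approximate readings from the chart (eyeballed estimates).
rates = [2, 16, 32]       # Latent Compression-Rate
tokens = [595, 515, 480]  # Token Counts (right y-axis)

# Average change in token count per unit of compression rate on each segment.
slopes = [
    (t1 - t0) / (r1 - r0)
    for (r0, t0), (r1, t1) in zip(zip(rates, tokens), zip(rates[1:], tokens[1:]))
]

# Overall reduction from the lowest to the highest compression rate.
total_drop = tokens[0] - tokens[-1]
pct_drop = 100 * total_drop / tokens[0]

print("tokens per unit rate:", [round(s, 1) for s in slopes])
print(f"total drop: {total_drop} tokens ({pct_drop:.1f}%)")
```

The first segment falls by roughly 5.7 tokens per unit of compression rate and the second by only about 2.2, for a total reduction of about 115 tokens (around 19%).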

### Key Observations

1. **Diverging Responses:** The two metrics respond differently to compression. As the Latent Compression-Rate increases, the Token Count decreases consistently, while the Math Accuracy first increases and then slightly decreases. The metrics move in opposite directions only between the first two points; between the second and third, both decrease.

2. **Peak Performance:** The highest average math accuracy (~28.1) is achieved at a moderate compression rate (~16), not at the lowest or highest rate shown.

3. **Non-Linear Accuracy Response:** Accuracy does not simply degrade as compression increases. It rises by about 1.0 point from the lowest compression rate (~2) to a moderate one (~16), then declines by about 0.2 points at the highest rate (~32).

4. **Monotonic but Decelerating Token Reduction:** Token counts fall steadily as the latent compression rate increases, but not linearly: about 80 tokens are saved between rates ~2 and ~16, and only about 35 more between ~16 and ~32.
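How close the token decline is to a straight line can be checked with an ordinary least-squares fit and Pearson's correlation coefficient. A minimal sketch on the eyeballed readings; with only three points this is a rough check rather than a statistical test:

```python
import math

# Approximate readings from the chart (eyeballed estimates).
rates = [2.0, 16.0, 32.0]
tokens = [595.0, 515.0, 480.0]

n = len(rates)
mean_x = sum(rates) / n
mean_y = sum(tokens) / n

# Centered sums shared by ordinary least squares and Pearson's r.
sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(rates, tokens))
sxx = sum((x - mean_x) ** 2 for x in rates)
syy = sum((y - mean_y) ** 2 for y in tokens)

slope = sxy / sxx
intercept = mean_y - slope * mean_x
r = sxy / math.sqrt(sxx * syy)

# Residuals from the straight-line fit; a +, -, + pattern indicates curvature.
residuals = [y - (intercept + slope * x) for x, y in zip(rates, tokens)]

print(f"slope={slope:.2f} tokens/unit, r={r:.3f}")
print("residuals:", [round(e, 1) for e in residuals])
```

The correlation is strongly negative (r near -0.97), but the residuals alternate in sign (roughly +9, -18, +8), which is the signature of a curve that flattens out rather than a straight line.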

### Interpretation

This chart illustrates a trade-off in model optimization. The data suggest that a moderate amount of latent compression (around a rate of 16) is a "sweet spot" for the Llama3.2-3B model: compared with minimal compression, it raises average math accuracy by about one point while reducing the number of generated tokens by roughly 13%, which implies lower inference cost. Pushing compression further (to a rate of 32) lowers accuracy slightly, to ~27.9 (still above the ~27.1 at minimal compression), while token counts continue to fall.
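To put numbers on the trade-off described above, each setting can be compared with the least-compressed one. A minimal sketch on the eyeballed readings; `summary` is an illustrative name, and the figures inherit the imprecision of reading values off the chart:

```python
# Approximate readings from the chart (eyeballed estimates).
rates = [2, 16, 32]
accuracy = [27.1, 28.1, 27.9]
tokens = [595, 515, 480]

# Compare each setting against the least-compressed one (rate ~2).
base_acc, base_tok = accuracy[0], tokens[0]
summary = []
for rate, acc, tok in zip(rates, accuracy, tokens):
    acc_gain = acc - base_acc                        # accuracy points gained
    tok_saving = 100 * (base_tok - tok) / base_tok   # percent fewer tokens
    summary.append((rate, round(acc_gain, 1), round(tok_saving, 1)))

for rate, acc_gain, tok_saving in summary:
    print(f"rate {rate:>2}: {acc_gain:+.1f} accuracy points, {tok_saving:.1f}% fewer tokens")
```

Rate ~16 gains about 1.0 point with about 13.4% fewer tokens; rate ~32 keeps about 0.8 points of that gain with about 19.3% fewer tokens.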

The findings suggest that latent compression is not merely a way to reduce inference cost (as proxied by token count) but may also act as a form of regularization that enhances certain capabilities, up to a point. The accuracy peak at ~16 (the best of the three measured settings) suggests a balance where the compressed representation retains, or even concentrates on, the features most salient for mathematical reasoning. The subsequent decline suggests that excessive compression starts to discard information needed for peak performance. This visualization can help a researcher or engineer choose operating parameters for deploying this model, balancing accuracy against computational efficiency.
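The deployment decision mentioned above can be made explicit with a simple selection rule. The rule here (choose the setting with the fewest tokens whose accuracy stays within a chosen tolerance of the best observed value) is a hypothetical illustration, not a procedure from the source; `pick_rate` and `tolerance` are illustrative names:

```python
# Approximate readings from the chart (eyeballed estimates).
rates = [2, 16, 32]
accuracy = [27.1, 28.1, 27.9]
tokens = [595, 515, 480]


def pick_rate(rates, accuracy, tokens, tolerance):
    """Hypothetical rule: return the compression rate with the fewest tokens
    whose accuracy is within `tolerance` points of the best observed accuracy."""
    best = max(accuracy)
    eligible = [
        (tok, rate)
        for rate, acc, tok in zip(rates, accuracy, tokens)
        if best - acc <= tolerance
    ]
    # min() on (tokens, rate) tuples picks the lowest token count.
    return min(eligible)[1]


print(pick_rate(rates, accuracy, tokens, tolerance=0.0))
print(pick_rate(rates, accuracy, tokens, tolerance=0.5))
```

With a tolerance of 0 the rule returns rate 16 (the accuracy peak); allowing up to 0.5 points of accuracy loss shifts it to rate 32, trading about 0.2 points for roughly 35 fewer tokens.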