## Grouped Bar Chart: Model Performance Comparison Across Benchmarks

### Overview

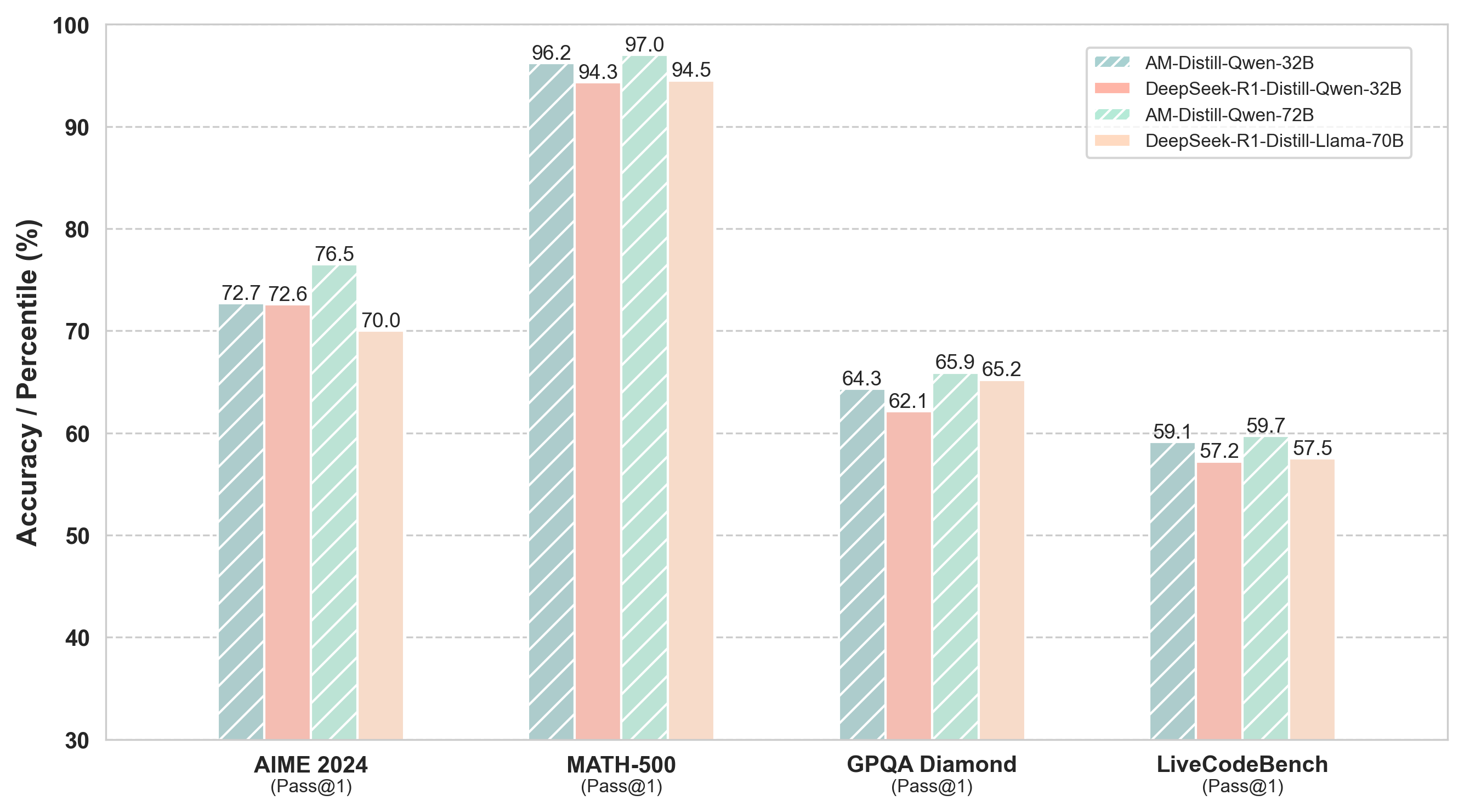

This image is a grouped bar chart comparing the performance of four different AI models across four distinct benchmarks. The chart measures "Accuracy / Percentile (%)" on the y-axis against four benchmark categories on the x-axis. The legend is positioned in the top-right corner of the chart area.

### Components/Axes

* **Y-Axis:** Labeled "Accuracy / Percentile (%)". The scale runs from 30 to 100, with major gridlines at intervals of 10 (30, 40, 50, 60, 70, 80, 90, 100).

* **X-Axis:** Lists four benchmark categories. Each category has a primary label and a secondary label in parentheses.

    1. **AIME 2024** (Pass@1)

    2. **MATH-500** (Pass@1)

    3. **GPQA Diamond** (Pass@1)

    4. **LiveCodeBench** (Pass@1)

* **Legend (Top-Right):** Identifies four models, each associated with a unique color and pattern.

    1. **AM-Distill-Qwen-32B:** Teal color with diagonal stripes (\\).

    2. **DeepSeek-R1-Distill-Qwen-32B:** Solid light salmon/pink color.

    3. **AM-Distill-Qwen-72B:** Light mint green color with diagonal stripes (//).

    4. **DeepSeek-R1-Distill-Llama-70B:** Solid light peach/beige color.

### Detailed Analysis

The chart presents the following numerical data for each model on each benchmark. The values are read directly from the labels atop each bar.

**1. AIME 2024 (Pass@1)**

* AM-Distill-Qwen-32B: 72.7%

* DeepSeek-R1-Distill-Qwen-32B: 72.6%

* AM-Distill-Qwen-72B: 76.5%

* DeepSeek-R1-Distill-Llama-70B: 70.0%

**2. MATH-500 (Pass@1)**

* AM-Distill-Qwen-32B: 96.2%

* DeepSeek-R1-Distill-Qwen-32B: 94.3%

* AM-Distill-Qwen-72B: 97.0%

* DeepSeek-R1-Distill-Llama-70B: 94.5%

**3. GPQA Diamond (Pass@1)**

* AM-Distill-Qwen-32B: 64.3%

* DeepSeek-R1-Distill-Qwen-32B: 62.1%

* AM-Distill-Qwen-72B: 65.9%

* DeepSeek-R1-Distill-Llama-70B: 65.2%

**4. LiveCodeBench (Pass@1)**

* AM-Distill-Qwen-32B: 59.1%

* DeepSeek-R1-Distill-Qwen-32B: 57.2%

* AM-Distill-Qwen-72B: 59.7%

* DeepSeek-R1-Distill-Llama-70B: 57.5%
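The values listed above can be captured in a small lookup table to make comparisons reproducible. The following is a minimal sketch, not part of the original chart: the names `SCORES` and `leaders` are illustrative, and the numbers are simply transcribed from the bar labels.

```python
# Scores transcribed from the bar labels (Pass@1, in percent).
# SCORES and leaders() are illustrative names, not from the source.
SCORES = {
    "AIME 2024": {
        "AM-Distill-Qwen-32B": 72.7,
        "DeepSeek-R1-Distill-Qwen-32B": 72.6,
        "AM-Distill-Qwen-72B": 76.5,
        "DeepSeek-R1-Distill-Llama-70B": 70.0,
    },
    "MATH-500": {
        "AM-Distill-Qwen-32B": 96.2,
        "DeepSeek-R1-Distill-Qwen-32B": 94.3,
        "AM-Distill-Qwen-72B": 97.0,
        "DeepSeek-R1-Distill-Llama-70B": 94.5,
    },
    "GPQA Diamond": {
        "AM-Distill-Qwen-32B": 64.3,
        "DeepSeek-R1-Distill-Qwen-32B": 62.1,
        "AM-Distill-Qwen-72B": 65.9,
        "DeepSeek-R1-Distill-Llama-70B": 65.2,
    },
    "LiveCodeBench": {
        "AM-Distill-Qwen-32B": 59.1,
        "DeepSeek-R1-Distill-Qwen-32B": 57.2,
        "AM-Distill-Qwen-72B": 59.7,
        "DeepSeek-R1-Distill-Llama-70B": 57.5,
    },
}


def leaders(scores):
    """Return {benchmark: (top_model, top_score, margin_over_runner_up)}."""
    result = {}
    for benchmark, by_model in scores.items():
        # Sort models from highest to lowest score on this benchmark.
        ranked = sorted(by_model.items(), key=lambda kv: kv[1], reverse=True)
        (top_model, top_score), (_, second_score) = ranked[0], ranked[1]
        # Round to one decimal to hide floating-point noise in the difference.
        result[benchmark] = (top_model, top_score, round(top_score - second_score, 1))
    return result


for benchmark, (model, score, margin) in leaders(SCORES).items():
    print(f"{benchmark}: {model} leads with {score}% (+{margin} points)")
```

Rounding the margin to one decimal matches the precision of the chart labels; differences are in percentage points, not relative percent.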

### Key Observations

* **Highest Overall Performance:** The **MATH-500** benchmark yielded the highest scores for all models, with all values above 94%.

* **Model Ranking Consistency:** The **AM-Distill-Qwen-72B** model (light green, striped) achieves the highest score on all four benchmarks. Its narrowest lead is on LiveCodeBench, where it edges out its smaller counterpart, AM-Distill-Qwen-32B, by 0.6 percentage points (59.7% vs. 59.1%).

* **Performance Gap:** The gap between the AM-Distill and DeepSeek-R1-Distill variants of the Qwen-32B model is smallest on AIME 2024 (0.1 percentage points) and largest on GPQA Diamond (2.2 percentage points).

* **Lowest Scores:** The **LiveCodeBench** benchmark appears to be the most challenging, with all models scoring below 60%.

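* **Architecture Comparison:** On the Qwen-32B base, the AM-Distill variant outperforms the DeepSeek-R1-Distill variant on all four benchmarks. The larger AM-Distill-Qwen-72B likewise outperforms the similarly sized DeepSeek-R1-Distill-Llama-70B on all four, by margins ranging from 0.7 percentage points (GPQA Diamond) to 6.5 percentage points (AIME 2024).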
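The pairwise gaps behind these observations are simple subtractions and can be rechecked with a short script. This is a sketch under the assumption that the bar labels were transcribed correctly; the variable names and the `gaps` helper are illustrative.

```python
# Per-benchmark scores in the order of BENCHMARKS, transcribed from the chart.
BENCHMARKS = ["AIME 2024", "MATH-500", "GPQA Diamond", "LiveCodeBench"]
AM_QWEN_32B = [72.7, 96.2, 64.3, 59.1]
R1_QWEN_32B = [72.6, 94.3, 62.1, 57.2]
AM_QWEN_72B = [76.5, 97.0, 65.9, 59.7]
R1_LLAMA_70B = [70.0, 94.5, 65.2, 57.5]


def gaps(a, b):
    """Return {benchmark: a - b} in percentage points, rounded to one decimal."""
    return {name: round(x - y, 1) for name, x, y in zip(BENCHMARKS, a, b)}


gaps_32b = gaps(AM_QWEN_32B, R1_QWEN_32B)      # same base model, different distillation
gaps_large = gaps(AM_QWEN_72B, R1_LLAMA_70B)   # similar size, different base and distillation

print("Qwen-32B, AM minus DeepSeek-R1:", gaps_32b)
print("72B/70B, AM minus DeepSeek-R1: ", gaps_large)
print("Smallest 32B gap:", min(gaps_32b, key=gaps_32b.get))
print("Largest 32B gap: ", max(gaps_32b, key=gaps_32b.get))
```

A positive value means the AM-Distill model scored higher on that benchmark.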

### Interpretation

This chart compares distilled language models on reasoning-heavy benchmarks. In this data, the "AM-Distill" models built on Qwen outscore the "DeepSeek-R1-Distill" models of comparable size on every benchmark shown, although some margins are as small as 0.1 percentage points.

The consistently high scores on MATH-500 (all above 94%) suggest that all evaluated models handle this benchmark's problems well, while the lower AIME 2024 scores (70.0% to 76.5%) show that harder competition math is not yet saturated. The lower scores on LiveCodeBench suggest that code generation remains more difficult for these models than the other tested domains (math competitions, graduate-level QA).
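One rough way to order the benchmarks by difficulty is to average the four models' scores on each. The sketch below does this with the transcribed values; using the cross-model mean as a difficulty proxy is an assumption made here for illustration, not something the chart itself reports.

```python
from statistics import mean

# Scores per benchmark in legend order:
# AM-Distill-Qwen-32B, DeepSeek-R1-Distill-Qwen-32B,
# AM-Distill-Qwen-72B, DeepSeek-R1-Distill-Llama-70B.
SCORES_BY_BENCHMARK = {
    "AIME 2024": [72.7, 72.6, 76.5, 70.0],
    "MATH-500": [96.2, 94.3, 97.0, 94.5],
    "GPQA Diamond": [64.3, 62.1, 65.9, 65.2],
    "LiveCodeBench": [59.1, 57.2, 59.7, 57.5],
}

# Mean score across the four models for each benchmark.
benchmark_means = {name: mean(vals) for name, vals in SCORES_BY_BENCHMARK.items()}

# Benchmarks ordered from highest to lowest mean score.
ranking = sorted(benchmark_means, key=benchmark_means.get, reverse=True)

for name in ranking:
    print(f"{name}: mean {benchmark_means[name]:.1f}%")
```

With only four models, the mean is sensitive to any single score, so the ordering is more informative than the exact averages.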

The chart suggests that both distillation technique (AM vs. DeepSeek-R1) and model scale (72B/70B vs. 32B) affect performance, with the AM-Distill approach showing a small but consistent advantage in this comparison. The effect of scale is less clear-cut: AM-Distill-Qwen-72B beats AM-Distill-Qwen-32B on every benchmark, but DeepSeek-R1-Distill-Llama-70B trails DeepSeek-R1-Distill-Qwen-32B on AIME 2024 (70.0% vs. 72.6%), and those two models differ in base architecture as well as size. The grouped layout allows quick cross-benchmark and cross-model comparisons.