## Line Chart: Mamba-2.8B: Block vs Mixer Output F1 Scores

### Overview

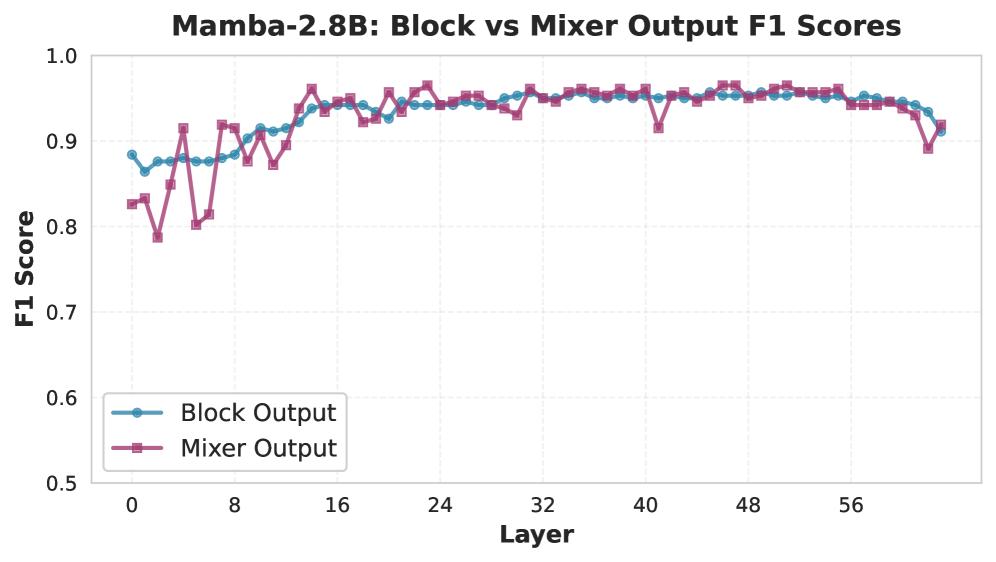

The line chart compares the F1 scores of two output types, "Block Output" and "Mixer Output", across the layers of the Mamba-2.8B model. F1 score is plotted on the vertical axis against layer number on the horizontal axis.

### Components/Axes

* **Chart Title:** "Mamba-2.8B: Block vs Mixer Output F1 Scores" (centered at the top).

* **X-Axis:**

    * **Label:** "Layer" (centered below the axis).

    * **Scale:** Linear scale from 0 to 64, with major tick marks and labels at intervals of 8 (0, 8, 16, 24, 32, 40, 48, 56).

* **Y-Axis:**

    * **Label:** "F1 Score" (rotated vertically, left of the axis).

    * **Scale:** Linear scale from 0.5 to 1.0, with major tick marks and labels at intervals of 0.1 (0.5, 0.6, 0.7, 0.8, 0.9, 1.0).

* **Legend:** Located in the bottom-left corner of the plot area.

    * **Series 1:** "Block Output" - Represented by a blue line with circular markers.

    * **Series 2:** "Mixer Output" - Represented by a purple/magenta line with square markers.

* **Grid:** A light gray grid is present in the background.

### Detailed Analysis

**Trend Verification & Data Points:**

1. **Block Output (Blue line, circular markers):**

    * **Trend:** Starts relatively high, experiences a minor initial dip, then shows a steady, smooth upward trend before plateauing in the middle-to-late layers, with a slight decline at the very end.

    * **Approximate Data Points:**

        * Layer 0: ~0.88
        * Layer 4: ~0.87 (slight dip)
        * Layer 8: ~0.88
        * Layer 16: ~0.94
        * Layer 24: ~0.95
        * Layer 32: ~0.95
        * Layer 40: ~0.95
        * Layer 48: ~0.95
        * Layer 56: ~0.95
        * Layer 64: ~0.91

2. **Mixer Output (Purple/magenta line, square markers):**

    * **Trend:** Exhibits high volatility in the early layers (0-16), with sharp drops and spikes. From approximately layer 16 onward, it converges with the Block Output line and follows a very similar, stable, high-scoring path for the remaining layers, apart from a dip near layer 40, and also shows a slight final decline.

    * **Approximate Data Points:**

        * Layer 0: ~0.83
        * Layer 2: ~0.79 (sharp drop)
        * Layer 4: ~0.92 (sharp spike)
        * Layer 6: ~0.81 (sharp drop)
        * Layer 8: ~0.92
        * Layer 12: ~0.88
        * Layer 16: ~0.94 (converges with Block Output)
        * Layer 24: ~0.96
        * Layer 32: ~0.95
        * Layer 40: ~0.92 (notable dip)
        * Layer 48: ~0.96
        * Layer 56: ~0.95
        * Layer 64: ~0.92
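For readers who want to work with these readings, the two lists above can be captured as plain dictionaries and compared layer by layer. This is an illustrative sketch only: the values are the approximate chart readings listed above, not the underlying measurements, and the names `block`, `mixer`, and `gap_by_layer` are hypothetical.

```python
# Approximate F1 values read off the chart, keyed by layer; not exact data.
block = {0: 0.88, 4: 0.87, 8: 0.88, 16: 0.94, 24: 0.95, 32: 0.95,
         40: 0.95, 48: 0.95, 56: 0.95, 64: 0.91}
mixer = {0: 0.83, 2: 0.79, 4: 0.92, 6: 0.81, 8: 0.92, 12: 0.88, 16: 0.94,
         24: 0.96, 32: 0.95, 40: 0.92, 48: 0.96, 56: 0.95, 64: 0.92}


def gap_by_layer(block_scores, mixer_scores):
    """Return {layer: mixer - block} for layers present in both series."""
    shared = sorted(set(block_scores) & set(mixer_scores))
    return {layer: round(mixer_scores[layer] - block_scores[layer], 2)
            for layer in shared}


gaps = gap_by_layer(block, mixer)
for layer, gap in gaps.items():
    print(f"layer {layer:2d}: block={block[layer]:.2f} "
          f"mixer={mixer[layer]:.2f} gap={gap:+.2f}")
```

Only layers that appear in both lists are compared, because the Mixer Output readings include layers (2, 6, 12) for which no Block Output reading is listed.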

### Key Observations

* **Early Layer Instability:** The most striking feature is the significant volatility of the Mixer Output in the first 16 layers, contrasting with the relatively stable, gradual ascent of the Block Output.

* **Convergence:** From layer 16 onward, the two lines track each other closely (within about 0.01 at the sampled layers, apart from the Mixer Output dip near layer 40), suggesting that the performance difference between the Block and Mixer outputs becomes small in the deeper layers of the model.

* **Performance Plateau:** Both outputs hold a high F1 Score (between ~0.94 and ~0.96) from approximately layer 16 to layer 56. The one exception is the Mixer Output reading of ~0.92 near layer 40.

* **Final Layer Dip:** Both series show a noticeable decrease in F1 Score at the final layer (64), dropping to approximately 0.91-0.92.

* **Outlier Point:** The Mixer Output has a distinct, isolated dip around layer 40 (to ~0.92) while the Block Output remains stable at ~0.95.
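One rough way to put numbers on the early-layer instability is the mean absolute change between consecutive readings. The sketch below applies that measure to the approximate chart readings. It is an assumption-laden illustration, not the chart author's analysis: `BLOCK`, `MIXER`, and `mean_abs_step` are hypothetical names, and the two series are sampled at different layers, so the comparison is indicative only.

```python
# Approximate (layer, F1) readings taken from the chart; not exact data.
BLOCK = [(0, 0.88), (4, 0.87), (8, 0.88), (16, 0.94), (24, 0.95),
         (32, 0.95), (40, 0.95), (48, 0.95), (56, 0.95), (64, 0.91)]
MIXER = [(0, 0.83), (2, 0.79), (4, 0.92), (6, 0.81), (8, 0.92), (12, 0.88),
         (16, 0.94), (24, 0.96), (32, 0.95), (40, 0.92), (48, 0.96),
         (56, 0.95), (64, 0.92)]


def mean_abs_step(series, lo, hi):
    """Mean absolute change between consecutive readings with lo <= layer <= hi.

    Assumes at least two readings fall inside the range.
    """
    scores = [f1 for layer, f1 in series if lo <= layer <= hi]
    steps = [abs(b - a) for a, b in zip(scores, scores[1:])]
    return sum(steps) / len(steps)


early_block = mean_abs_step(BLOCK, 0, 16)
early_mixer = mean_abs_step(MIXER, 0, 16)
late_block = mean_abs_step(BLOCK, 16, 56)
late_mixer = mean_abs_step(MIXER, 16, 56)

print(f"layers 0-16:  block={early_block:.3f}  mixer={early_mixer:.3f}")
print(f"layers 16-56: block={late_block:.3f}  mixer={late_mixer:.3f}")
```

On these readings the Mixer Output changes by roughly 0.08 per step in layers 0-16, against roughly 0.03 for the Block Output, which is consistent with the volatility described above.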

### Interpretation

This chart provides a layer-wise performance diagnostic for the Mamba-2.8B model. The data suggests that the "Mixer" component of the architecture is highly sensitive or unstable during the initial processing stages (early layers), leading to erratic F1 scores. In contrast, the "Block" output provides a more consistent and reliable signal from the start.

From about layer 16 onward, the Block and Mixer outputs score nearly identically on the measured task (F1 Score), with the Mixer Output dip near layer 40 as the main exception. This could indicate that the model's deeper layers learn to integrate or stabilize the information from both pathways, although the chart alone cannot confirm that the underlying representations are equivalent.

The high, sustained plateau indicates that the measured F1 Score peaks in the middle-to-late layers (roughly 16 through 56). The slight decline at the final layer could be due to over-specialization, a slight degradation in representation quality, or the final layer being optimized for a different objective than the F1-scored task; the chart does not distinguish between these explanations. The isolated dip for Mixer Output at layer 40 warrants investigation as a potential layer-specific instability or artifact.