# Technical Document Extraction: Kolmogorov-Arnold Networks (KANs)

## Key Components and Flow

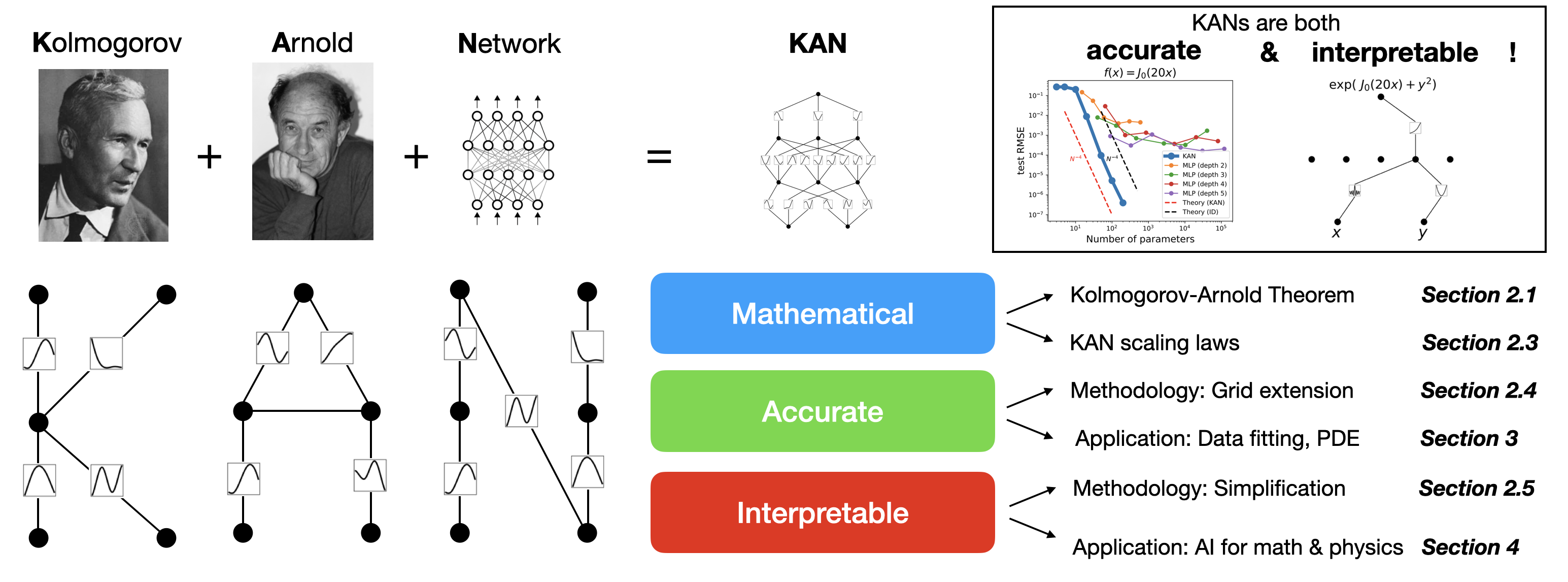

### 1. **Historical Figures and Conceptual Foundation**

- **Kolmogorov** (left portrait): Mathematician whose work on representing multivariate functions underlies the Kolmogorov-Arnold representation theorem.

- **Arnold** (right portrait): Mathematician, contributed to the development of the Kolmogorov-Arnold representation theorem.

- **Network Diagram**: Grid of interconnected nodes labeled "Network" (symbolizing traditional neural networks).

- **KAN Diagram**: Complex interconnected nodes labeled "KAN" (Kolmogorov-Arnold Network), representing a hybrid of mathematical and neural architectures.

### 2. **Core Equation and Claims**

- **Boxed Text**:

  ```
  KANs are both
  accurate & interpretable!
  ```

- **Mathematical Expressions**:

  - `f(x) = J₀(20x)` (Bessel function of the first kind, order 0, with its argument scaled by 20).

  - `exp(J₀(20x) + y²)` (Exponential of the sum of the Bessel term and a quadratic term in `y`).
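The two expressions can be evaluated numerically with a short sketch. It assumes nothing beyond the Python standard library and approximates `J₀` through its integral representation `J₀(z) = (1/π) ∫₀^π cos(z sin t) dt`. The names `bessel_j0`, `f1`, and `f2` are hypothetical helpers introduced here for illustration; they do not come from the figure.

```python
import math


def bessel_j0(z: float, steps: int = 400) -> float:
    """Approximate J0(z) with the midpoint rule applied to
    (1/pi) * integral_0^pi cos(z * sin(t)) dt.

    The integrand is smooth and periodic with period pi, so the midpoint
    rule converges very quickly once `steps` is well above |z|.
    """
    h = math.pi / steps
    total = sum(math.cos(z * math.sin((k + 0.5) * h)) for k in range(steps))
    return total / steps  # (h / pi) * total


def f1(x: float) -> float:
    """First expression from the figure: J0(20x)."""
    return bessel_j0(20.0 * x)


def f2(x: float, y: float) -> float:
    """Second expression from the figure: exp(J0(20x) + y^2)."""
    return math.exp(bessel_j0(20.0 * x) + y * y)


if __name__ == "__main__":
    for x in (0.0, 0.25, 0.5, 1.0):
        print(f"x={x:4.2f}  J0(20x)={f1(x):+.6f}  exp(J0(20x)+0.5^2)={f2(x, 0.5):.6f}")
```

Because the argument is scaled by 20, `f1` oscillates several times over the interval from 0 to 1, which is part of what makes it a demanding fitting target.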

### 3. **Performance Graph: Test RMSE vs. Parameters**

- **Axes**:

  - **Y-axis**: `test RMSE` (log scale, `10⁻¹` to `10⁻⁷`).

  - **X-axis**: `Number of parameters` (log scale, `10¹` to `10⁵`).

- **Legend**:

  - **Blue**: KAN (lowest RMSE, steepest decline).

  - **Orange**: MLP (depth 2).

  - **Green**: MLP (depth 3).

  - **Red**: MLP (depth 4).

  - **Purple**: MLP (depth 5).

  - **Dashed Red**: Theory (KAN).

  - **Dashed Black**: Theory (ID).

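- **Trend**: The KAN curve lies below all MLP curves across the plotted parameter range. Because both axes are logarithmic, its near-linear decline indicates that RMSE falls roughly as a power law in the number of parameters, not exponentially.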
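On log-log axes, a straight-line decline corresponds to a power law `RMSE ≈ c · N^(−α)`, where `N` is the number of parameters and the slope of the line is `−α`. The sketch below estimates `α` by least squares in log space. The data points are synthetic, generated from an exact power law with an arbitrarily chosen exponent; they are not read off the figure, and `fit_power_law` is a hypothetical helper name.

```python
import math
from typing import Sequence, Tuple


def fit_power_law(params: Sequence[float], rmse: Sequence[float]) -> Tuple[float, float]:
    """Fit RMSE ~ c * N**(-alpha) by ordinary least squares on (log N, log RMSE).

    Returns (alpha, c).
    """
    xs = [math.log(n) for n in params]
    ys = [math.log(r) for r in rmse]
    count = len(xs)
    mean_x = sum(xs) / count
    mean_y = sum(ys) / count
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx                    # slope of the line on log-log axes
    intercept = mean_y - slope * mean_x  # log of the prefactor c
    return -slope, math.exp(intercept)


# Synthetic illustration only: exact power law with a made-up exponent of 1.5.
synthetic_params = [10, 100, 1_000, 10_000]
synthetic_rmse = [2.0 * n ** -1.5 for n in synthetic_params]

alpha, prefactor = fit_power_law(synthetic_params, synthetic_rmse)
print(f"estimated alpha = {alpha:.3f}, prefactor = {prefactor:.3f}")
```

Applied to measured (parameter count, test RMSE) pairs, a larger fitted `α` corresponds to a steeper curve in a plot like this one.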

### 4. **Flowchart: KAN Methodology and Applications**

- **Nodes and Arrows**:

  - **Mathematical** (Blue):

    - Kolmogorov-Arnold Theorem (Section 2.1).

    - KAN scaling laws (Section 2.3).

  - **Accurate** (Green):

    - Methodology: Grid extension (Section 2.4).

    - Application: Data fitting, PDE (Section 3).

  - **Interpretable** (Red):

    - Methodology: Simplification (Section 2.5).

    - Application: AI for math & physics (Section 4).
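For reference, the Kolmogorov-Arnold representation theorem named in the flowchart is usually stated as follows. The symbols `Φ_q` and `φ_{q,p}` are the conventional notation for the outer and inner functions and are not taken from the figure itself. For any continuous function `f` on `[0,1]^n`:

```latex
f(x_1, \dots, x_n) \;=\; \sum_{q=1}^{2n+1} \Phi_q\!\left( \sum_{p=1}^{n} \varphi_{q,p}(x_p) \right),
\qquad
\varphi_{q,p} : [0,1] \to \mathbb{R}, \quad \Phi_q : \mathbb{R} \to \mathbb{R} \ \text{continuous}.
```

Every multivariate dependence is expressed through sums and compositions of univariate functions, which is the structure the KAN diagram depicts.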

### 5. **Diagrammatic Representations**

- **KAN Architecture**:

  - Nodes with embedded functions (e.g., `x`, `y`, `J₀(20x)`).

  - Connections represent function composition and parameter scaling.

- **Flowchart Structure**:

  - Hierarchical organization of KAN properties (Mathematical → Accurate → Interpretable).

  - Cross-references to technical sections (e.g., "Section 2.1" for Kolmogorov-Arnold Theorem).
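The KAN Architecture item above describes a network built by composing univariate functions. A minimal sketch of that idea, under stated assumptions: each edge carries a univariate function, each node sums its incoming edges, and layers are composed in sequence. Here the edge functions are fixed closed-form expressions chosen to reproduce `exp(J₀(20x) + y²)`; in a trained KAN they would be learned. The names `kan_layer`, `kan_forward`, and `bessel_j0` are hypothetical and not part of any library.

```python
import math
from typing import Callable, List, Sequence

Univariate = Callable[[float], float]


def bessel_j0(z: float, steps: int = 400) -> float:
    """Approximate J0(z) via the midpoint rule on (1/pi) * int_0^pi cos(z sin t) dt."""
    h = math.pi / steps
    return sum(math.cos(z * math.sin((k + 0.5) * h)) for k in range(steps)) / steps


def kan_layer(edge_fns: Sequence[Sequence[Univariate]], inputs: Sequence[float]) -> List[float]:
    """edge_fns[j][i] sits on the edge from input i to output node j.

    Each output node sums the values of its incoming edge functions.
    """
    return [sum(fn(x) for fn, x in zip(row, inputs)) for row in edge_fns]


def kan_forward(layers: Sequence[Sequence[Sequence[Univariate]]], inputs: Sequence[float]) -> List[float]:
    """Compose the layers in order, feeding each layer's node values to the next."""
    values = list(inputs)
    for edge_fns in layers:
        values = kan_layer(edge_fns, values)
    return values


# Shape [2, 1, 1]: two inputs (x, y), one hidden node, one output node.
layers = [
    [[lambda x: bessel_j0(20.0 * x), lambda y: y * y]],  # hidden = J0(20x) + y^2
    [[math.exp]],                                        # output = exp(hidden)
]

result = kan_forward(layers, [0.3, 0.5])[0]
print(f"exp(J0(20*0.3) + 0.5^2) = {result:.6f}")
```

Since every edge function is a one-dimensional curve, each can be plotted or matched against a symbolic form on its own, which is the basis of the interpretability claim.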

### 6. **Critical Observations**

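- **Accuracy**: At comparable parameter counts, KANs reach lower test RMSE than the MLPs of depth 2 to 5 shown in the graph, which the figure presents as support for their theoretical efficiency.

- **Interpretability**: KANs retain mathematical transparency: their component functions can be read as explicit expressions (e.g., `J₀(20x)`), unlike black-box MLPs.

- **Scalability**: KAN scaling laws (Section 2.3) describe test error falling as a power law in the parameter count, which appears as a straight line on the log-log plot. In the graph, the KAN curve declines more steeply than any of the MLP curves.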

### 7. **Section References**

- **Section 2.1**: Kolmogorov-Arnold Theorem.

- **Section 2.3**: KAN scaling laws.

- **Section 2.4**: Grid extension methodology.

- **Section 2.5**: Simplification methodology.

- **Section 3**: Applications in data fitting and PDEs.

- **Section 4**: Applications in AI for mathematics and physics.

## Conclusion

The image illustrates the theoretical and practical advantages of KANs over traditional MLPs, emphasizing their mathematical grounding, accuracy, and interpretability. The performance graph supports the accuracy claim, and the flowchart maps each property to the sections that cover its methodology and applications.