## Line Chart: Accuracy vs. Number of Generated Solutions

### Overview

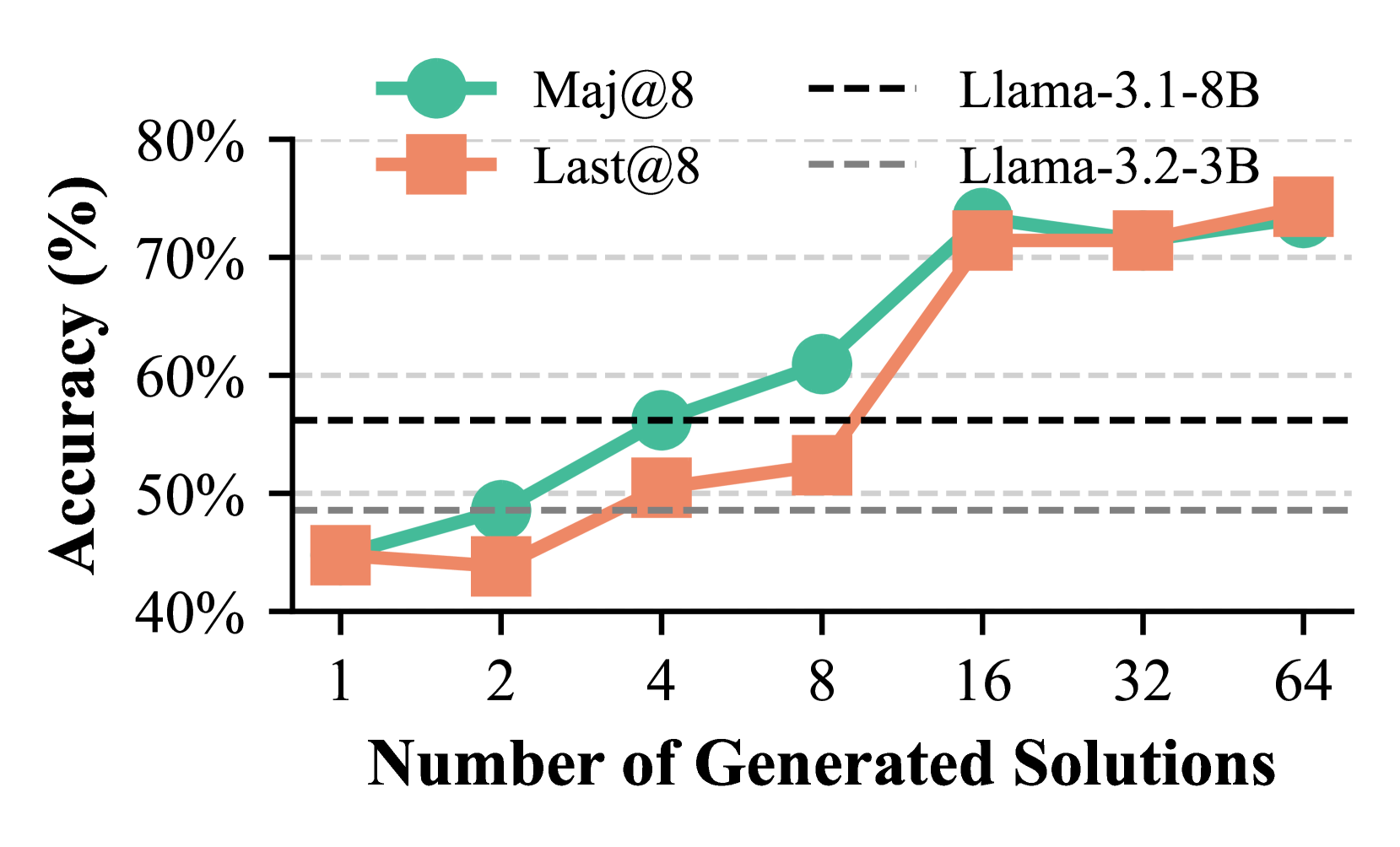

This image displays a line chart illustrating the accuracy of two different methods, "Maj@8" and "Last@8", as a function of the "Number of Generated Solutions". The chart also includes two horizontal dashed lines representing baseline accuracies for "Llama-3.1-8B" and "Llama-3.2-3B". The primary trend shows that accuracy generally increases with the number of generated solutions for both "Maj@8" and "Last@8".

### Components/Axes

* **Chart Type**: Line Chart

* **Title**: None shown; inferred as "Accuracy of Methods with Varying Number of Generated Solutions"

* **Y-axis Label**: "Accuracy (%)"
  * **Scale**: Linear, ranging from approximately 40% to 80%.
  * **Markers**: 40%, 50%, 60%, 70%, 80%.

* **X-axis Label**: "Number of Generated Solutions"
  * **Scale**: Logarithmic, base 2 (implied by the even spacing of the power-of-two ticks), with markers at 1, 2, 4, 8, 16, 32, 64.

* **Legend**: Located in the top-center of the chart.
  * **"Maj@8"**: Represented by a teal line with circular markers.
  * **"Last@8"**: Represented by an orange line with square markers.
  * **"Llama-3.1-8B"**: Represented by a dashed black line.
  * **"Llama-3.2-3B"**: Represented by a dashed grey line.
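To see why evenly spaced ticks at 1, 2, 4, ..., 64 imply a base-2 logarithmic x-axis, the sketch below maps each tick to log2 of its value. It assumes the ticks are exactly the powers of two listed above; it illustrates the axis geometry and is not taken from the chart's source code.

```python
import math

# Tick values read from the x-axis of the chart.
ticks = [1, 2, 4, 8, 16, 32, 64]

# On a log2 axis, a tick's horizontal position is proportional to log2(tick),
# so each doubling of the number of solutions moves one equal step to the right.
positions = [math.log2(t) for t in ticks]
spacings = [b - a for a, b in zip(positions, positions[1:])]

print(positions)  # [0.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
print(spacings)   # [1.0, 1.0, 1.0, 1.0, 1.0, 1.0]
```

On a linear axis, by contrast, the gap between 32 and 64 would be 32 times wider than the gap between 1 and 2.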

### Detailed Analysis

**Data Series: "Maj@8" (Teal Line with Circles)**

* **Trend**: The "Maj@8" line never decreases: accuracy rises at every step except from 16 to 32 solutions, where it holds steady. The largest single gain occurs between 8 and 16 generated solutions (~61% to ~72%); beyond 16 the curve flattens.

* **Data Points (approximate values, uncertainty ±1 percentage point)**:
  * At 1 solution: ~45%
  * At 2 solutions: ~50%
  * At 4 solutions: ~57%
  * At 8 solutions: ~61%
  * At 16 solutions: ~72%
  * At 32 solutions: ~72%
  * At 64 solutions: ~74%
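The step-to-step changes in the "Maj@8" readings can be tabulated in a few lines of Python. This is a sketch over the approximate values read off the chart (each ±1 percentage point), not data from the underlying experiment, so one- or two-point differences should not be over-interpreted.

```python
# Approximate Maj@8 readings from the chart: {number of solutions: accuracy %}.
maj8 = {1: 45, 2: 50, 4: 57, 8: 61, 16: 72, 32: 72, 64: 74}

xs = sorted(maj8)
# Change in percentage points between each pair of adjacent ticks.
gains = {(a, b): maj8[b] - maj8[a] for a, b in zip(xs, xs[1:])}
largest = max(gains, key=gains.get)

print(gains)
print(largest, gains[largest])                # (8, 16) 11
print(all(g >= 0 for g in gains.values()))   # True: the series never decreases
```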

**Data Series: "Last@8" (Orange Line with Squares)**

* **Trend**: The "Last@8" line also trends upward but sits below "Maj@8" at every plotted point. It dips slightly from 1 to 2 solutions (~43% to ~42%), rises modestly through 8 solutions, then jumps sharply between 8 and 16 solutions (~51% to ~71%), after which it stays within about one percentage point of "Maj@8".

* **Data Points (approximate values, uncertainty ±1 percentage point)**:
  * At 1 solution: ~43%
  * At 2 solutions: ~42%
  * At 4 solutions: ~50%
  * At 8 solutions: ~51%
  * At 16 solutions: ~71%
  * At 32 solutions: ~71%
  * At 64 solutions: ~73%
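Putting the two sets of readings side by side makes it easy to check where "Maj@8" leads and by how much. The sketch below uses only the approximate values listed above; a gap of one point is within the stated ±1 percentage-point reading uncertainty.

```python
# Approximate readings from the chart: {number of solutions: accuracy %}.
maj8 = {1: 45, 2: 50, 4: 57, 8: 61, 16: 72, 32: 72, 64: 74}
last8 = {1: 43, 2: 42, 4: 50, 8: 51, 16: 71, 32: 71, 64: 73}

# Gap in percentage points; a positive value means Maj@8 is ahead.
gap = {n: maj8[n] - last8[n] for n in sorted(maj8)}
print(gap)  # {1: 2, 2: 8, 4: 7, 8: 10, 16: 1, 32: 1, 64: 1}

# Does Last@8 overtake Maj@8 at any plotted point?
print(any(g < 0 for g in gap.values()))  # False
```

Because both series are drawn as straight segments between the same x positions, a lead at both ends of a segment rules out a crossing inside it.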

**Baseline Data Series:**

* **"Llama-3.1-8B" (Dashed Black Line)**: This line is positioned at approximately 57% accuracy.

* **"Llama-3.2-3B" (Dashed Grey Line)**: This line is positioned at approximately 49% accuracy.
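Comparing each series against these two baseline levels shows the smallest number of generated solutions at which each method pulls ahead. The sketch treats "exceeds" as strictly above the baseline, and all values are the approximate chart readings; the helper `first_exceeding` is a hypothetical name introduced here for illustration.

```python
# Approximate readings from the chart: {number of solutions: accuracy %}.
maj8 = {1: 45, 2: 50, 4: 57, 8: 61, 16: 72, 32: 72, 64: 74}
last8 = {1: 43, 2: 42, 4: 50, 8: 51, 16: 71, 32: 71, 64: 73}
# Baseline levels read from the dashed lines (approximate).
baselines = {"Llama-3.2-3B": 49, "Llama-3.1-8B": 57}


def first_exceeding(series, threshold):
    """Return the smallest number of solutions whose reading is strictly above threshold, else None."""
    for n in sorted(series):
        if series[n] > threshold:
            return n
    return None


crossings = {
    name: (first_exceeding(maj8, level), first_exceeding(last8, level))
    for name, level in baselines.items()
}
for name, (maj_n, last_n) in crossings.items():
    print(f"{name}: Maj@8 from {maj_n}, Last@8 from {last_n}")
# Llama-3.2-3B: Maj@8 from 2, Last@8 from 4
# Llama-3.1-8B: Maj@8 from 8, Last@8 from 16
```

With a ±1 point reading uncertainty, near-ties (for example "Maj@8" at ~57% with 4 solutions against the ~57% baseline) could fall on either side of the line.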

### Key Observations

* Both "Maj@8" and "Last@8" methods demonstrate a positive correlation between the number of generated solutions and accuracy.

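* The "Maj@8" method achieves higher accuracy than the "Last@8" method at every plotted point. The gap is widest between 2 and 8 generated solutions (about 7 to 10 percentage points) and narrows to about one point from 16 solutions onward.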

* The "Last@8" method makes its largest jump between 8 and 16 generated solutions (~51% to ~71%), after which it stays within about one percentage point of "Maj@8" at 16, 32, and 64 solutions.

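* By the approximate readings above, "Maj@8" exceeds the "Llama-3.2-3B" baseline (~49%) from 2 generated solutions and the "Llama-3.1-8B" baseline (~57%) from 8 solutions, roughly tying the latter at 4. "Last@8" exceeds the "Llama-3.2-3B" baseline from 4 solutions and the "Llama-3.1-8B" baseline from 16.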

* Accuracy gains for both methods shrink beyond 16 generated solutions: each improves by only about 2 percentage points from 16 to 64 solutions.

### Interpretation

The data suggests that, for both "Maj@8" and "Last@8", increasing the number of generated solutions is a viable way to improve accuracy. "Maj@8" appears to be the more robust method, outperforming "Last@8" at every plotted point and most noticeably with 2 to 8 solutions. "Last@8" lags at small solution counts but improves sharply once 16 or more solutions are available, which may indicate that it benefits more from a larger pool of generated solutions.

The horizontal lines for "Llama-3.1-8B" and "Llama-3.2-3B" serve as benchmarks. Both "Maj@8" and "Last@8" eventually exceed them, which suggests these methods are effective for the problem domain. The flattening of accuracy beyond 16 solutions points to diminishing returns: generating many more solutions may yield little additional accuracy while incurring higher computational cost. This could indicate that the models' outputs are converging, or that additional samples no longer add enough diversity to surface correct answers that were not already found.
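One way to make the diminishing-returns argument concrete is to divide each accuracy change by the number of extra solutions it required. The sketch below does this on the approximate chart readings; treating one generated solution as one unit of cost is an assumption made here, since the chart does not report compute, and `gain_per_extra_solution` is a hypothetical helper name.

```python
# Approximate readings from the chart: {number of solutions: accuracy %}.
maj8 = {1: 45, 2: 50, 4: 57, 8: 61, 16: 72, 32: 72, 64: 74}
last8 = {1: 43, 2: 42, 4: 50, 8: 51, 16: 71, 32: 71, 64: 73}


def gain_per_extra_solution(series):
    """Percentage points gained per additional generated solution between adjacent ticks."""
    xs = sorted(series)
    return {(a, b): (series[b] - series[a]) / (b - a) for a, b in zip(xs, xs[1:])}


rates = {name: gain_per_extra_solution(s) for name, s in [("Maj@8", maj8), ("Last@8", last8)]}
for name, per_step in rates.items():
    for (a, b), r in per_step.items():
        print(f"{name}: {a:>2} -> {b:>2} solutions: {r:+.3f} pts per extra solution")
```

Under this crude measure, each extra solution between 32 and 64 buys about 0.06 points for either method, compared with 3.5 to 5 points per extra solution for "Maj@8" below 4 solutions.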