## Diagram: Multi-Hop Reasoning Training and Inference Pipeline

### Overview

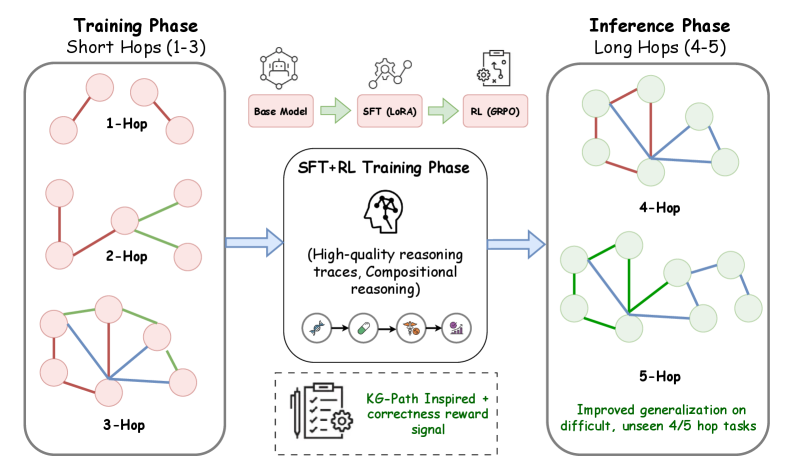

The image is a technical diagram illustrating a machine learning pipeline designed to improve multi-hop reasoning. It depicts a two-stage process: a **Training Phase** focusing on short reasoning chains (1-3 hops) and an **Inference Phase** where the trained model tackles longer, more complex chains (4-5 hops). The central mechanism enabling this generalization is a combined Supervised Fine-Tuning (SFT) and Reinforcement Learning (RL) training regimen.

### Components/Axes

The diagram is organized into three side-by-side sections, read left to right and connected by arrows that indicate the direction of flow.

1. **Left Section: Training Phase (Short Hops 1-3)**

* **Title:** "Training Phase / Short Hops (1-3)"

* **Content:** Three vertically stacked graph diagrams labeled "1-Hop", "2-Hop", and "3-Hop".

* **Visual Elements:** Each graph consists of pink circular nodes connected by colored lines (red, green, blue). The complexity (number of nodes and connections) increases from 1-Hop to 3-Hop.

2. **Center Section: SFT+RL Training Phase**

* **Title:** "SFT+RL Training Phase"

* **Top Flow Diagram:** A horizontal sequence of three pink rectangular boxes connected by green arrows:

    * Box 1: "Base Model" (with a network icon above).

    * Box 2: "SFT (LoRA)" (with a gear/network icon above).

    * Box 3: "RL (GRPO)" (with a clipboard/checklist icon above).

* **Central Icon & Text:** A stylized brain icon with a lightning bolt. Below it, the text: "(High-quality reasoning traces, Compositional reasoning)".

* **Tool Icons:** A horizontal row of four circular icons below the text: a wrench, a key, a toolbox, and a brain with a gear.

* **Bottom Dashed Box:** Contains an icon of a pen writing on a checklist next to a gear. The text reads: "K6-Path Inspired + / correctness reward signal".

3. **Right Section: Inference Phase (Long Hops 4-5)**

* **Title:** "Inference Phase / Long Hops (4-5)"

* **Content:** Two vertically stacked graph diagrams labeled "4-Hop" and "5-Hop".

* **Visual Elements:** Graphs with light green circular nodes connected by colored lines (red, green, blue). These graphs are more complex and interconnected than those in the training phase.

* **Footer Text:** Below the 5-Hop graph, green text states: "Improved generalization on / difficult, unseen 4/5 hop tasks".

### Detailed Analysis

* **Training Data Structure:** The training phase uses progressively complex reasoning graphs:

    * **1-Hop:** A simple chain of 3 nodes connected by 2 red lines.

    * **2-Hop:** A branching structure with 5 nodes. Connections include red, green, and blue lines.

    * **3-Hop:** A more densely connected graph with 6 nodes and multiple red, green, and blue connections forming cycles.

* **Training Methodology:** The core training process involves:

    1. Starting with a **Base Model**.

    2. Applying **Supervised Fine-Tuning (SFT)** using **LoRA** (Low-Rank Adaptation).

    3. Further refining the model with **Reinforcement Learning (RL)** using **GRPO** (Group Relative Policy Optimization).

    4. The process is designed to produce "High-quality reasoning traces" and enable "Compositional reasoning".

    5. A reward signal, described as "K6-Path Inspired" and combined with a "correctness" term, guides the RL phase.

* **Inference Outcome:** The model, after training on 1-3 hop tasks, is applied to more complex **4-Hop** and **5-Hop** graphs during inference. These graphs feature light green nodes and intricate, multi-colored connections, representing "difficult, unseen" tasks. The diagram asserts this leads to "Improved generalization".
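The RL stage named above can be illustrated with a minimal sketch of the group-relative advantage that gives GRPO its name: several answers are sampled for the same prompt, each is scored, and the scores are normalized within that group. The function names, the exact-match reward, and the example prompt are assumptions made for illustration; the diagram does not specify how rewards are computed, and the use of the population standard deviation is likewise an assumption.

```python
from statistics import mean, pstdev


def correctness_reward(predicted: str, gold: str) -> float:
    """Hypothetical binary reward: 1.0 on a case-insensitive exact match, else 0.0."""
    return 1.0 if predicted.strip().lower() == gold.strip().lower() else 0.0


def group_relative_advantages(rewards, eps=1e-6):
    """Normalize rewards within one group of samples drawn for the same prompt.

    Each advantage is (reward - group mean) / (group std + eps).
    The population standard deviation is an assumption of this sketch.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four sampled answers for one made-up prompt whose gold answer is "Paris".
samples = ["Paris", "Lyon", "paris ", "Berlin"]
rewards = [correctness_reward(s, "Paris") for s in samples]
advantages = group_relative_advantages(rewards)
```

Correct samples receive positive advantages and incorrect ones negative advantages. If every sample in a group gets the same reward, all advantages are zero and that group contributes no learning signal.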

### Key Observations

1. **Color Coding:** Node color changes from **pink** (training) to **light green** (inference), visually distinguishing the phases. Edge colors (red, green, blue) are consistent across both phases, likely representing different types of relationships or reasoning steps.

2. **Complexity Progression:** There is a clear visual increase in graph complexity (node count and connection density) from the 1-Hop training example to the 5-Hop inference example.

3. **Spatial Flow:** The process flows left-to-right: Training Data -> Training Methodology -> Inference Application. The central "SFT+RL Training Phase" section is where the base model is turned into the model used at inference.

4. **Explicit Claim:** The diagram makes a direct causal claim: training on short-hop graphs (1-3) using the described SFT+RL method results in the ability to handle long-hop graphs (4-5) that were not seen during training.
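One way to check the claim in item 4 is to report accuracy separately for hop counts seen in training (1-3) and those held out (4-5). The sketch below assumes evaluation results are available as `(hops, is_correct)` pairs; that record format, the function names, and the sample records are hypothetical and are not results from any model.

```python
from collections import defaultdict

TRAIN_HOPS = {1, 2, 3}  # hop counts used for training, per the diagram


def accuracy_by_hops(records):
    """records: iterable of (hops, is_correct) pairs. Returns {hops: accuracy}."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for hops, is_correct in records:
        totals[hops] += 1
        hits[hops] += int(is_correct)
    return {h: hits[h] / totals[h] for h in sorted(totals)}


def split_summary(per_hop):
    """Average per-hop accuracy separately for seen and held-out hop counts."""
    seen = [acc for h, acc in per_hop.items() if h in TRAIN_HOPS]
    held_out = [acc for h, acc in per_hop.items() if h not in TRAIN_HOPS]

    def avg(values):
        return sum(values) / len(values) if values else None

    return {"seen": avg(seen), "held_out": avg(held_out)}


# Made-up outcomes, only to exercise the functions.
records = [
    (1, True), (1, True), (2, True), (2, False),
    (4, True), (4, False), (5, False), (5, False),
]
per_hop = accuracy_by_hops(records)
summary = split_summary(per_hop)
```

Comparing `summary["held_out"]` before and after the SFT+RL stages, rather than looking at a single number, is what would support or undercut the generalization claim.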

### Interpretation

This diagram outlines a short-to-long generalization strategy for AI reasoning: training is restricted to short chains, and evaluation uses longer ones. The core hypothesis is that by mastering simpler, shorter reasoning chains (1-3 hops), a model can develop fundamental compositional reasoning skills. These skills then **generalize** to solve more complex, longer-chain problems (4-5 hops) without direct training on them.
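The train-on-short, test-on-long setup described above can be sketched as a data split over hop counts. The toy graph, relation names (`r1` to `r3`), and function names below are invented for illustration; the diagram does not say how its reasoning graphs are built.

```python
import random

# Hypothetical toy graph: node -> list of (relation, target) edges.
# Every node has at least one outgoing edge, so a walk never dead-ends,
# and relations are unique per node, so a relation sequence has one answer.
GRAPH = {
    "a": [("r1", "b"), ("r2", "c")],
    "b": [("r3", "d")],
    "c": [("r1", "d")],
    "d": [("r2", "e")],
    "e": [("r3", "f")],
    "f": [("r1", "a")],
}


def sample_chain(graph, hops, rng):
    """Random-walk `hops` edges and record the relations taken."""
    node = rng.choice(sorted(graph))
    start, relations = node, []
    for _ in range(hops):
        relation, node = rng.choice(graph[node])
        relations.append(relation)
    return {"start": start, "relations": relations, "answer": node, "hops": hops}


def follow(graph, start, relations):
    """Recompute the answer by following the relations from the start node."""
    node = start
    for relation in relations:
        node = dict(graph[node])[relation]
    return node


def build_split(graph, train_hops=(1, 2, 3), eval_hops=(4, 5), per_hop=20, seed=0):
    """Short chains go to training; longer chains are held out for evaluation."""
    rng = random.Random(seed)
    train = [sample_chain(graph, h, rng) for h in train_hops for _ in range(per_hop)]
    held_out = [sample_chain(graph, h, rng) for h in eval_hops for _ in range(per_hop)]
    return train, held_out


train, held_out = build_split(GRAPH)
```

Because no 4-hop or 5-hop example appears in `train`, any success on `held_out` has to come from composing shorter steps rather than from having seen chains of that length.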

The "K6-Path Inspired + correctness reward signal" is a critical component. It suggests the RL phase doesn't just reward correct final answers but likely evaluates the quality and logical soundness of the intermediate reasoning steps (the "path"), encouraging the model to build robust, generalizable reasoning procedures.
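As a rough illustration of a reward that looks at the path as well as the final answer, the sketch below mixes a path score with a correctness score. This is not the reward used in the diagram, whose definition is not given; the prefix-match scoring, the `path_weight` parameter, and the triple format for reasoning steps are all assumptions.

```python
def matched_prefix(predicted, gold):
    """Number of leading steps on which the predicted path agrees with the gold path."""
    count = 0
    for p, g in zip(predicted, gold):
        if p != g:
            break
        count += 1
    return count


def combined_reward(predicted_path, predicted_answer, gold_path, gold_answer,
                    path_weight=0.5):
    """Hypothetical reward: weighted sum of path agreement and answer correctness."""
    if gold_path:
        path_score = matched_prefix(predicted_path, gold_path) / len(gold_path)
    else:
        path_score = 0.0
    answer_score = 1.0 if predicted_answer == gold_answer else 0.0
    return path_weight * path_score + (1.0 - path_weight) * answer_score


# Each step is a made-up (head, relation, tail) triple.
gold_path = [("a", "r1", "b"), ("b", "r3", "d")]

full = combined_reward(gold_path, "d", gold_path, "d")
lucky = combined_reward([("a", "r1", "b"), ("b", "r2", "d")], "d", gold_path, "d")
wrong = combined_reward([("a", "r2", "c")], "c", gold_path, "d")
```

Under this scheme a correct answer reached through a partly wrong path (`lucky`) scores lower than a fully correct trace (`full`), which is the behavior the paragraph above attributes to a path-aware reward.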

The shift from pink to green nodes symbolizes the transition from a learning state to a deployed, capable state. The diagram argues that this specific training pipeline (Base -> SFT/LoRA -> RL/GRPO with a structured reward) is an effective method for achieving **out-of-distribution generalization** in multi-step reasoning tasks, a significant challenge in AI. The ultimate goal is to create models that don't just memorize patterns but learn underlying logical structures applicable to novel, more complex situations.