EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

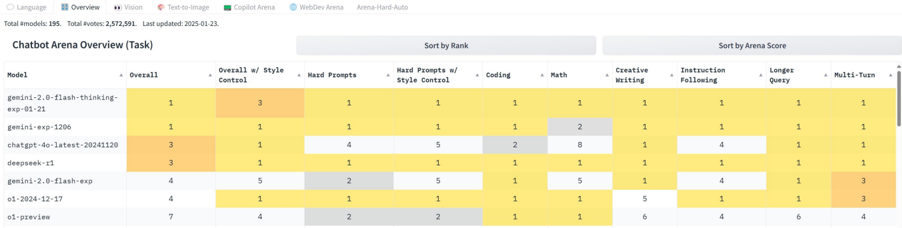

## Heatmap: Chatbot Arena Overview (Task)

### Overview

The image is a heatmap displaying the performance of various chatbot models across different tasks and metrics. The models are listed on the left, and the tasks/metrics are listed across the top. The cells are colored based on the model's performance in each category, with darker shades indicating better performance. The data includes overall rankings, performance on specific tasks like coding and math, and style-related metrics.

### Components/Axes

* **Title:** Chatbot Arena Overview (Task)

* **X-Axis (Columns):**

* Model

* Overall

* Overall w/ Style Control

* Hard Prompts

* Hard Prompts w/ Style Control

* Coding

* Math

* Creative Writing

* Instruction Following

* Longer Query

* Multi-Turn

* **Y-Axis (Rows):**

* gemini-2.0-flash-thinking-exp-01-21

* gemini-exp-1206

* chatgpt-4o-latest-20241120

* deepseek-r1

* gemini-2.0-flash-exp

* o1-2024-12-17

* o1-preview

* **Color Scale:** The heatmap uses a color scale where darker shades (likely yellow/orange) indicate better performance (lower numerical rank). Lighter shades (likely gray/white) indicate worse performance (higher numerical rank).

### Detailed Analysis

Here's a breakdown of the data for each model across the different categories:

* **gemini-2.0-flash-thinking-exp-01-21:**

* Overall: 1

* Overall w/ Style Control: 3

* Hard Prompts: 1

* Hard Prompts w/ Style Control: 1

* Coding: 1

* Math: 1

* Creative Writing: 1

* Instruction Following: 1

* Longer Query: 1

* Multi-Turn: 1

* **gemini-exp-1206:**

* Overall: 1

* Overall w/ Style Control: 1

* Hard Prompts: 1

* Hard Prompts w/ Style Control: 1

* Coding: 1

* Math: 2

* Creative Writing: 1

* Instruction Following: 1

* Longer Query: 1

* Multi-Turn: 1

* **chatgpt-4o-latest-20241120:**

* Overall: 3

* Overall w/ Style Control: 1

* Hard Prompts: 4

* Hard Prompts w/ Style Control: 5

* Coding: 2

* Math: 8

* Creative Writing: 1

* Instruction Following: 4

* Longer Query: 1

* Multi-Turn: 1

* **deepseek-r1:**

* Overall: 3

* Overall w/ Style Control: 1

* Hard Prompts: 1

* Hard Prompts w/ Style Control: 1

* Coding: 1

* Math: 1

* Creative Writing: 1

* Instruction Following: 1

* Longer Query: 1

* Multi-Turn: 1

* **gemini-2.0-flash-exp:**

* Overall: 4

* Overall w/ Style Control: 5

* Hard Prompts: 2

* Hard Prompts w/ Style Control: 5

* Coding: 1

* Math: 5

* Creative Writing: 1

* Instruction Following: 4

* Longer Query: 1

* Multi-Turn: 3

* **o1-2024-12-17:**

* Overall: 4

* Overall w/ Style Control: 1

* Hard Prompts: 1

* Hard Prompts w/ Style Control: 1

* Coding: 1

* Math: 1

* Creative Writing: 5

* Instruction Following: 1

* Longer Query: 1

* Multi-Turn: 3

* **o1-preview:**

* Overall: 7

* Overall w/ Style Control: 4

* Hard Prompts: 2

* Hard Prompts w/ Style Control: 2

* Coding: 1

* Math: 1

* Creative Writing: 6

* Instruction Following: 4

* Longer Query: 6

* Multi-Turn: 4

### Key Observations

* The models "gemini-2.0-flash-thinking-exp-01-21" and "gemini-exp-1206" perform very well, each ranking 1st in nine of the ten categories.

* "chatgpt-4o-latest-20241120" shows a weaker performance in "Hard Prompts" (rank 4), "Hard Prompts w/ Style Control" (rank 5), and "Math" (rank 8) compared to other categories.

* "o1-preview" has the worst Overall rank (7th) and also ranks relatively low in "Creative Writing" (6th) and "Longer Query" (6th).
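One quick way to check the weakest category named for each model is to pick the column with the numerically highest (worst) rank in its row. This is a minimal sketch over the ranks listed above for the two models discussed; the `RANKS` layout and the helper `weakest_categories` are illustrative names, not part of the leaderboard.

```python
# Ranks transcribed from the breakdown above (1 = best); only the two models
# discussed in the observations are included.
CATEGORIES = [
    "Overall", "Overall w/ Style Control", "Hard Prompts",
    "Hard Prompts w/ Style Control", "Coding", "Math", "Creative Writing",
    "Instruction Following", "Longer Query", "Multi-Turn",
]

RANKS = {
    "chatgpt-4o-latest-20241120": [3, 1, 4, 5, 2, 8, 1, 4, 1, 1],
    "o1-preview": [7, 4, 2, 2, 1, 1, 6, 4, 6, 4],
}


def weakest_categories(ranks: list[int]) -> list[str]:
    """Return every category that shares the worst (highest) rank in a row."""
    worst = max(ranks)
    return [cat for cat, r in zip(CATEGORIES, ranks) if r == worst]


for model, ranks in RANKS.items():
    print(model, "->", weakest_categories(ranks), "rank", max(ranks))
```

With these values the sketch reports `Math` (rank 8) for `chatgpt-4o-latest-20241120` and `Overall` (rank 7) for `o1-preview`.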

### Interpretation

The heatmap provides a comparative overview of chatbot model performance across various tasks. The data suggests that some models excel in specific areas while others offer more consistent performance across the board. For example, while "chatgpt-4o-latest-20241120" performs well in most categories, it struggles with math and hard prompts. "gemini-2.0-flash-thinking-exp-01-21" and "gemini-exp-1206" appear to be the most consistently high-performing models based on this data. The "o1-preview" model seems to have the weakest overall performance. The data can be used to identify the strengths and weaknesses of each model and to select the most appropriate model for a given task.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

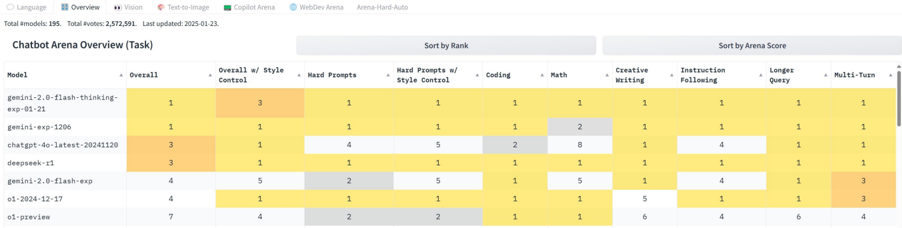

## Heatmap: Chatbot Arena Overview

### Overview

The image presents a heatmap visualizing the performance of several language models across various tasks within the Chatbot Arena. The heatmap uses color intensity to represent relative ranking, with darker yellow indicating higher performance and lighter gray indicating lower performance. The data is organized with models listed vertically and tasks horizontally.

### Components/Axes

* **Models (Vertical Axis):**

* gemini-2.0-flash-thinking-exp-01

* gemini-exp-120b

* chatgpt-4p-latest-20241120

* deepseek-v1

* gpt-3.5-turbo

* 01-2024-12-17

* 01-preview

* **Tasks (Horizontal Axis):**

* Overall

* Overall w/ Context

* Style

* Hard Prompts w/ Style Control

* Coding

* Math

* Creative Writing

* Instruction Following

* Longer Query

* Multi-Turn

* **Color Scale/Legend:** The heatmap uses a gradient from dark yellow (best rank) to light gray (worse ranks). The number in each cell gives the rank; values from 1 to 7 appear in this transcription.

* **Header:** "Chatbot Arena Overview (Task)"

* **Metadata:** "Total Models: 195. Total Votes: 2,372,591. Last updated: 2023-01-23"

* **Sorting Options:** "Sort by Rank" and "Sort by Arena Score"

### Detailed Analysis

The heatmap displays the rank of each model for each task as a number in each cell (1 to 7 in this transcription). The color intensity corresponds to the rank.

* **gemini-2.0-flash-thinking-exp-01:**

* Overall: 3

* Overall w/ Context: 3

* Style: 1

* Hard Prompts w/ Style Control: 1

* Coding: 1

* Math: 1

* Creative Writing: 1

* Instruction Following: 2

* Longer Query: 1

* Multi-Turn: 1

* **gemini-exp-120b:**

* Overall: 2

* Overall w/ Context: 2

* Style: 2

* Hard Prompts w/ Style Control: 3

* Coding: 2

* Math: 2

* Creative Writing: 2

* Instruction Following: 3

* Longer Query: 2

* Multi-Turn: 2

* **chatgpt-4p-latest-20241120:**

* Overall: 1

* Overall w/ Context: 1

* Style: 3

* Hard Prompts w/ Style Control: 2

* Coding: 2

* Math: 3

* Creative Writing: 3

* Instruction Following: 1

* Longer Query: 3

* Multi-Turn: 3

* **deepseek-v1:**

* Overall: 4

* Overall w/ Context: 4

* Style: 4

* Hard Prompts w/ Style Control: 4

* Coding: 1

* Math: 1

* Creative Writing: 1

* Instruction Following: 4

* Longer Query: 4

* Multi-Turn: 4

* **gpt-3.5-turbo:**

* Overall: 5

* Overall w/ Context: 5

* Style: 5

* Hard Prompts w/ Style Control: 5

* Coding: 5

* Math: 5

* Creative Writing: 5

* Instruction Following: 5

* Longer Query: 5

* Multi-Turn: 3

* **01-2024-12-17:**

* Overall: 6

* Overall w/ Context: 6

* Style: 6

* Hard Prompts w/ Style Control: 6

* Coding: 6

* Math: 6

* Creative Writing: 6

* Instruction Following: 6

* Longer Query: 6

* Multi-Turn: 6

* **01-preview:**

* Overall: 7

* Overall w/ Context: 7

* Style: 2

* Hard Prompts w/ Style Control: 2

* Coding: 1

* Math: 1

* Creative Writing: 6

* Instruction Following: 4

* Longer Query: 6

* Multi-Turn: 6

### Key Observations

* `gemini-2.0-flash-thinking-exp-01` consistently ranks highly (mostly 1s and 2s) across most tasks.

* `gpt-3.5-turbo` ranks 5th in nine of ten tasks, but it is not the lowest-ranked model: `01-2024-12-17` ranks 6th in every task.

* `chatgpt-4p-latest-20241120` ranks 1st in Overall, Overall w/ Context, and Instruction Following.

* `deepseek-v1` ranks 1st in Coding, Math, and Creative Writing but 4th in every other task.

* The "Overall" and "Overall w/ Context" columns show the widest spread of ranks (1 to 7); every other task, including "Style," spans 1 to 6.
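The spread claim above can be checked by computing, for each column, the worst rank minus the best rank. This sketch uses the ranks exactly as transcribed in this section; spread is just one simple measure of variation chosen for illustration, and a standard deviation would be an equally reasonable choice. The helper name `column_spreads` is hypothetical.

```python
# Ranks as transcribed in this section (1 = best), one list per model,
# in the column order of the task list above.
TASKS = [
    "Overall", "Overall w/ Context", "Style", "Hard Prompts w/ Style Control",
    "Coding", "Math", "Creative Writing", "Instruction Following",
    "Longer Query", "Multi-Turn",
]

RANKS = {
    "gemini-2.0-flash-thinking-exp-01": [3, 3, 1, 1, 1, 1, 1, 2, 1, 1],
    "gemini-exp-120b": [2, 2, 2, 3, 2, 2, 2, 3, 2, 2],
    "chatgpt-4p-latest-20241120": [1, 1, 3, 2, 2, 3, 3, 1, 3, 3],
    "deepseek-v1": [4, 4, 4, 4, 1, 1, 1, 4, 4, 4],
    "gpt-3.5-turbo": [5, 5, 5, 5, 5, 5, 5, 5, 5, 3],
    "01-2024-12-17": [6] * 10,
    "01-preview": [7, 7, 2, 2, 1, 1, 6, 4, 6, 6],
}


def column_spreads(ranks: dict[str, list[int]]) -> dict[str, int]:
    """Map each task to (worst rank - best rank) across all models."""
    spreads = {}
    for i, task in enumerate(TASKS):
        column = [row[i] for row in ranks.values()]
        spreads[task] = max(column) - min(column)
    return spreads


spreads = column_spreads(RANKS)
for task, spread in sorted(spreads.items(), key=lambda kv: -kv[1]):
    print(f"{task}: {spread}")
```

With these values, "Overall" and "Overall w/ Context" have a spread of 6 and every other column, including "Style," has a spread of 5.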

### Interpretation

The heatmap provides a comparative overview of the strengths and weaknesses of different language models across a range of chatbot-related tasks. The data suggests that `gemini-2.0-flash-thinking-exp-01` is a strong all-around performer, while `chatgpt-4p-latest-20241120` excels in overall understanding and instruction following. `deepseek-v1` appears to be particularly well-suited for technical tasks like coding and math. `gpt-3.5-turbo` sits near the bottom in most tasks, although `01-2024-12-17` ranks below it everywhere and `01-preview` ranks below it Overall. Rank spreads are similar across tasks (1 to 6 in every task column, 1 to 7 in the two Overall columns), so no single task stands out as uniquely difficult. The metadata indicates that the data is based on a substantial number of votes (2,372,591), lending credibility to the findings. The last-updated date as read (2023-01-23) cannot be correct, because several listed models carry later dates in their names (e.g., `01-2024-12-17` and `chatgpt-4p-latest-20241120`); the year is probably a misreading. The presence of "preview" models (01-preview) indicates ongoing development and experimentation.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Table: Chatbot Arena Overview (Task)

### Overview

This image is a screenshot of a web interface displaying a comparative performance table for various AI language models. The table ranks models across multiple task categories based on user votes from an arena-style evaluation platform. The data is presented in a grid format with models as rows and evaluation categories as columns. Numerical rankings (1 being best) are displayed in cells, with a color gradient (yellow to gray) visually indicating performance levels.

### Components/Axes

**Header Metadata (Top of Image):**

- **Language:** English (implied by all text content).

- **Navigation Tabs:** Overview, Vision, Text-to-Image, Copilot Arena, WebDev Arena, Arena-Hard-Auto.

- **Statistics:** "Total models: 195", "Total votes: 2,372,591", "Last updated: 2025-03-23".

**Table Structure:**

- **Title:** "Chatbot Arena Overview (Task)"

- **Sorting Controls:** "Sort by Rank" and "Sort by Arena Score" buttons above the table.

- **Column Headers (Left to Right):**

1. `model`

2. `Overall`

3. `Overall w/ Style Control`

4. `Hard Prompts`

5. `Hard Prompts w/ Style Control`

6. `Coding`

7. `Math`

8. `Creative Writing`

9. `Instruction Following`

10. `Longer Query`

11. `Multi-Turn`

- **Row Labels (Model Names, Top to Bottom):**

1. `gemini-2.0-flash-thinking-exp-01-21`

2. `gemini-exp-1206`

3. `chatgpt-4o-latest-20241120`

4. `deepseek-v3`

5. `gemini-2.0-flash-exp`

6. `o1-2024-12-17`

7. `o1-preview`

**Visual Legend (Implied by Color):**

- **Yellow/Gold:** Indicates a top rank (value of 1). The most intense yellow corresponds to rank 1.

- **Light Yellow/Beige:** Indicates mid-tier ranks (values like 2, 3, 4, 5).

- **Gray:** Indicates lower ranks within this subset (values like 6, 7, 8).
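The implied legend can be expressed as a small lookup. The bucket boundaries below follow the description above (1 for gold, 2 to 5 for light yellow, 6 and worse for gray); the image states no exact thresholds or colors, so treat both as assumptions. The function name `rank_to_shade` is hypothetical.

```python
def rank_to_shade(rank: int) -> str:
    """Map a category rank to the color bucket implied by the table.

    Assumed buckets (not stated by the leaderboard): 1 -> gold,
    2-5 -> light yellow, 6 and worse -> gray.
    """
    if rank < 1:
        raise ValueError("ranks start at 1")
    if rank == 1:
        return "gold"
    if rank <= 5:
        return "light yellow"
    return "gray"


# Example: the chatgpt-4o-latest-20241120 row as transcribed below.
row = [3, 1, 4, 5, 2, 8, 1, 4, 1, 1]
print([rank_to_shade(r) for r in row])
```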

### Detailed Analysis

**Data Extraction by Model (Row):**

1. **gemini-2.0-flash-thinking-exp-01-21:**

- Overall: 1

- Overall w/ Style Control: 3

- Hard Prompts: 1

- Hard Prompts w/ Style Control: 1

- Coding: 1

- Math: 1

- Creative Writing: 1

- Instruction Following: 1

- Longer Query: 1

- Multi-Turn: 1

*Trend: Dominant performance, ranking 1st in 9 out of 10 categories. Its only non-first rank is 3rd in "Overall w/ Style Control".*

2. **gemini-exp-1206:**

- Overall: 1

- Overall w/ Style Control: 1

- Hard Prompts: 1

- Hard Prompts w/ Style Control: 1

- Coding: 1

- Math: 2

- Creative Writing: 1

- Instruction Following: 1

- Longer Query: 1

- Multi-Turn: 1

*Trend: Nearly perfect, ranking 1st in 9 categories. Its only deviation is 2nd place in "Math".*

3. **chatgpt-4o-latest-20241120:**

- Overall: 3

- Overall w/ Style Control: 1

- Hard Prompts: 4

- Hard Prompts w/ Style Control: 5

- Coding: 2

- Math: 8

- Creative Writing: 1

- Instruction Following: 4

- Longer Query: 1

- Multi-Turn: 1

*Trend: Highly variable. Ranks 1st in four categories (Overall w/ Style Control, Creative Writing, Longer Query, Multi-Turn) but shows significant weakness in "Math" (8th) and "Hard Prompts w/ Style Control" (5th).*

4. **deepseek-v3:**

- Overall: 3

- Overall w/ Style Control: 1

- Hard Prompts: 1

- Hard Prompts w/ Style Control: 1

- Coding: 1

- Math: 1

- Creative Writing: 1

- Instruction Following: 1

- Longer Query: 1

- Multi-Turn: 1

*Trend: Very strong, ranking 1st in 9 of 10 categories. Its only non-first rank is 3rd in "Overall," which rises to 1st in "Overall w/ Style Control".*

5. **gemini-2.0-flash-exp:**

- Overall: 4

- Overall w/ Style Control: 5

- Hard Prompts: 2

- Hard Prompts w/ Style Control: 5

- Coding: 1

- Math: 5

- Creative Writing: 1

- Instruction Following: 4

- Longer Query: 1

- Multi-Turn: 3

*Trend: Mixed performance. Strong in "Coding", "Creative Writing", and "Longer Query" (all 1st). Weaker in "Overall", "Overall w/ Style Control", "Hard Prompts w/ Style Control", and "Math".*

6. **o1-2024-12-17:**

- Overall: 4

- Overall w/ Style Control: 1

- Hard Prompts: 1

- Hard Prompts w/ Style Control: 1

- Coding: 1

- Math: 1

- Creative Writing: 5

- Instruction Following: 1

- Longer Query: 1

- Multi-Turn: 3

*Trend: Excellent in technical and reasoning tasks (1st in Hard Prompts, Coding, Math, Instruction Following). Notably weaker in "Creative Writing" (5th).*

7. **o1-preview:**

- Overall: 7

- Overall w/ Style Control: 4

- Hard Prompts: 1

- Hard Prompts w/ Style Control: 2

- Coding: 1

- Math: 1

- Creative Writing: 6

- Instruction Following: 4

- Longer Query: 6

- Multi-Turn: 4

*Trend: Strong in core reasoning tasks ("Hard Prompts", "Coding", "Math") but shows the weakest performance in this group for "Creative Writing", "Longer Query", and "Overall".*
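The first-place counts quoted in the trend notes can be checked mechanically. This minimal sketch tallies rank-1 cells per row using the ranks exactly as transcribed in this section (including `deepseek-v3` and the Hard Prompts rank of 1 for `o1-preview`); the helper name `first_place_counts` is hypothetical.

```python
# Ranks as transcribed above (1 = best), in the column order
# Overall, Overall w/ Style Control, Hard Prompts, Hard Prompts w/ Style Control,
# Coding, Math, Creative Writing, Instruction Following, Longer Query, Multi-Turn.
RANKS = {
    "gemini-2.0-flash-thinking-exp-01-21": [1, 3, 1, 1, 1, 1, 1, 1, 1, 1],
    "gemini-exp-1206": [1, 1, 1, 1, 1, 2, 1, 1, 1, 1],
    "chatgpt-4o-latest-20241120": [3, 1, 4, 5, 2, 8, 1, 4, 1, 1],
    "deepseek-v3": [3, 1, 1, 1, 1, 1, 1, 1, 1, 1],
    "gemini-2.0-flash-exp": [4, 5, 2, 5, 1, 5, 1, 4, 1, 3],
    "o1-2024-12-17": [4, 1, 1, 1, 1, 1, 5, 1, 1, 3],
    "o1-preview": [7, 4, 1, 2, 1, 1, 6, 4, 6, 4],
}


def first_place_counts(ranks: dict[str, list[int]]) -> dict[str, int]:
    """Count how many categories each model ranks 1st in."""
    return {model: row.count(1) for model, row in ranks.items()}


for model, n in first_place_counts(RANKS).items():
    print(f"{model}: {n}/10")
```

With these values the counts are 9 for `gemini-2.0-flash-thinking-exp-01-21`, `gemini-exp-1206`, and `deepseek-v3`; 7 for `o1-2024-12-17`; 4 for `chatgpt-4o-latest-20241120`; and 3 each for `gemini-2.0-flash-exp` and `o1-preview`.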

### Key Observations

1. **Dominance at the Top:** The top two rows (`gemini-2.0-flash-thinking-exp-01-21` and `gemini-exp-1206`) are overwhelmingly dominant, securing almost exclusively 1st place ranks.

2. **Category Specialization:** Models show clear specialization. For example, `o1` models excel in "Coding" and "Math" but are weaker in "Creative Writing". `chatgpt-4o-latest` shows strength in creative and stylistic tasks but weakness in hard reasoning and math.

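3. **Impact of "Style Control":** The "Overall w/ Style Control" column often differs significantly from the "Overall" column for the same model (e.g., `chatgpt-4o-latest` jumps from 3rd to 1st, while `gemini-2.0-flash-thinking-exp-01-21` drops from 1st to 3rd). Chatbot Arena's style control adjusts rankings for stylistic features such as response length and markdown formatting, so this gap shows how strongly presentation, as opposed to content, shapes a model's plain ranking.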

4. **Consistency vs. Variance:** Some models (`gemini-exp-1206`, `deepseek-v3`) are highly consistent across categories. Others (`chatgpt-4o-latest`, `o1-preview`) have high variance, indicating strengths in specific domains and weaknesses in others.

### Interpretation

This table provides a snapshot of the competitive landscape among leading AI models as of March 2025, based on aggregated user preferences. It demonstrates that **no single model is universally superior across all task types**. Performance is highly contextual.

- **The data suggests a bifurcation:** Models either pursue broad, top-tier dominance (like the leading Gemini variants) or exhibit specialized excellence (like the o1 series in reasoning).

- **The "Style Control" metric is a key differentiator,** revealing that a model's raw "Overall" ranking can change noticeably once stylistic factors such as response length and markdown formatting are controlled for. The adjusted ranking is intended to separate the substance of answers from their presentation.

- **Notable Anomaly:** The `chatgpt-4o-latest` model's 8th place in "Math" is a significant outlier compared to its other rankings, highlighting a potential specific weakness or a quirk in the evaluation data for that category.

- **Underlying Message:** For users, the choice of model should be task-dependent. For technical problem-solving, the o1 series or top Gemini models are indicated. For creative or style-sensitive tasks, other models may be preferable despite lower overall rankings. The arena format effectively surfaces these nuanced trade-offs that a single leaderboard score would obscure.
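The task-dependent choice described above amounts to filtering one column for its best rank. The sketch below does this for two columns transcribed in this section; the helper name `best_models` is hypothetical, and ranks alone do not show how large the score margins between models are. Several models share rank 1 in these columns, so the helper returns all of them rather than a single winner.

```python
# Two columns from the table above (1 = best rank). Ranks can be shared,
# so several models may hold rank 1 in the same column.
RANKS = {
    "Math": {
        "gemini-2.0-flash-thinking-exp-01-21": 1,
        "gemini-exp-1206": 2,
        "chatgpt-4o-latest-20241120": 8,
        "deepseek-v3": 1,
        "gemini-2.0-flash-exp": 5,
        "o1-2024-12-17": 1,
        "o1-preview": 1,
    },
    "Creative Writing": {
        "gemini-2.0-flash-thinking-exp-01-21": 1,
        "gemini-exp-1206": 1,
        "chatgpt-4o-latest-20241120": 1,
        "deepseek-v3": 1,
        "gemini-2.0-flash-exp": 1,
        "o1-2024-12-17": 5,
        "o1-preview": 6,
    },
}


def best_models(task: str) -> list[str]:
    """Return all models that share the best (lowest) rank for a task."""
    column = RANKS[task]
    best = min(column.values())
    return sorted(model for model, rank in column.items() if rank == best)


print("Math:", best_models("Math"))
print("Creative Writing:", best_models("Creative Writing"))
```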

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Table: Chatbot Arena Overview (Task)

### Overview

The image displays a tabular dataset titled "Chatbot Arena Overview (Task)" with 7 rows (models) and 10 metric columns alongside the model-name column. The table uses color-coded cells (yellow, orange, gray) to indicate each model's rank in a category (1 = best), though no explicit legend is visible. The data is organized to compare models across categories like "Overall," "Coding," "Math," and "Creative Writing."

### Components/Axes

- **Headers**:

- Model (leftmost column)

- Overall

- Overall w/ Style Control

- Hard Prompts

- Hard Prompts w/ Style Control

- Coding

- Math

- Creative Writing

- Instruction Following

- Longer Query

- Multi-Turn

- **Rows**:

- gemini-2.0-flash-thinking-exp-01-21

- gemini-exp-1206

- chatgpt-4o-latest-20241120

- deepseek-r1

- gemini-2.0-flash-exp

- o1-2024-12-17

- o1-preview

- **Top Bar Text**:

- "Total #models: 195. Total #votes: 2,572,591. Last updated: 2025-01-23."

### Detailed Analysis

- **Model: gemini-2.0-flash-thinking-exp-01-21**

- Overall: 1 (yellow)

- Overall w/ Style Control: 3 (orange)

- Hard Prompts: 1 (yellow)

- Hard Prompts w/ Style Control: 1 (yellow)

- Coding: 1 (yellow)

- Math: 1 (yellow)

- Creative Writing: 1 (yellow)

- Instruction Following: 1 (yellow)

- Longer Query: 1 (yellow)

- Multi-Turn: 1 (yellow)

- **Model: gemini-exp-1206**

- Overall: 1 (yellow)

- Overall w/ Style Control: 1 (yellow)

- Hard Prompts: 1 (yellow)

- Hard Prompts w/ Style Control: 1 (yellow)

- Coding: 1 (yellow)

- Math: 2 (orange)

- Creative Writing: 1 (yellow)

- Instruction Following: 1 (yellow)

- Longer Query: 1 (yellow)

- Multi-Turn: 1 (yellow)

- **Model: chatgpt-4o-latest-20241120**

- Overall: 3 (orange)

- Overall w/ Style Control: 1 (yellow)

- Hard Prompts: 4 (orange)

- Hard Prompts w/ Style Control: 5 (orange)

- Coding: 2 (orange)

- Math: 8 (orange)

- Creative Writing: 1 (yellow)

- Instruction Following: 4 (orange)

- Longer Query: 1 (yellow)

- Multi-Turn: 1 (yellow)

- **Model: deepseek-r1**

- Overall: 3 (orange)

- Overall w/ Style Control: 1 (yellow)

- Hard Prompts: 1 (yellow)

- Hard Prompts w/ Style Control: 1 (yellow)

- Coding: 1 (yellow)

- Math: 1 (yellow)

- Creative Writing: 1 (yellow)

- Instruction Following: 1 (yellow)

- Longer Query: 1 (yellow)

- Multi-Turn: 1 (yellow)

- **Model: gemini-2.0-flash-exp**

- Overall: 4 (orange)

- Overall w/ Style Control: 5 (orange)

- Hard Prompts: 2 (orange)

- Hard Prompts w/ Style Control: 5 (orange)

- Coding: 1 (yellow)

- Math: 5 (orange)

- Creative Writing: 1 (yellow)

- Instruction Following: 4 (orange)

- Longer Query: 1 (yellow)

- Multi-Turn: 3 (orange)

- **Model: o1-2024-12-17**

- Overall: 4 (orange)

- Overall w/ Style Control: 1 (yellow)

- Hard Prompts: 1 (yellow)

- Hard Prompts w/ Style Control: 1 (yellow)

- Coding: 1 (yellow)

- Math: 1 (yellow)

- Creative Writing: 5 (orange)

- Instruction Following: 1 (yellow)

- Longer Query: 1 (yellow)

- Multi-Turn: 3 (orange)

- **Model: o1-preview**

- Overall: 7 (orange)

- Overall w/ Style Control: 4 (orange)

- Hard Prompts: 2 (orange)

- Hard Prompts w/ Style Control: 2 (orange)

- Coding: 1 (yellow)

- Math: 1 (yellow)

- Creative Writing: 6 (orange)

- Instruction Following: 4 (orange)

- Longer Query: 6 (orange)

- Multi-Turn: 4 (orange)

### Key Observations

1. **Worst Overall Ranks** (lower numbers are better):

- "o1-preview" has the worst "Overall" rank (7), followed by "o1-2024-12-17" and "gemini-2.0-flash-exp" (both 4).

2. **Math Performance**:

- "chatgpt-4o-latest-20241120" ranks 8th in "Math," the lowest placement anywhere in the table; the next-lowest Math rank is 5 ("gemini-2.0-flash-exp").

3. **Style Control Impact**:

- "gemini-2.0-flash-thinking-exp-01-21" and "gemini-2.0-flash-exp" rank worse with style control (from 1 to 3 and from 4 to 5, respectively), while "o1-2024-12-17" (4 to 1), "o1-preview" (7 to 4), "chatgpt-4o-latest-20241120" (3 to 1), and "deepseek-r1" (3 to 1) move up.

4. **Creative Writing**:

- "o1-2024-12-17" and "o1-preview" have the lowest "Creative Writing" ranks (5 and 6, respectively); every other model ranks 1st.
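Because a lower number is a better rank, the effect of style control is easiest to read as a signed difference: rank with style control minus plain Overall rank, where a negative value means the model moves up. The sketch below applies this to the two relevant columns as transcribed in this section; the sign convention and the helper name `style_control_shift` are choices made for this example.

```python
# (Overall, Overall w/ Style Control) ranks as transcribed above; 1 = best.
OVERALL_VS_STYLE = {
    "gemini-2.0-flash-thinking-exp-01-21": (1, 3),
    "gemini-exp-1206": (1, 1),
    "chatgpt-4o-latest-20241120": (3, 1),
    "deepseek-r1": (3, 1),
    "gemini-2.0-flash-exp": (4, 5),
    "o1-2024-12-17": (4, 1),
    "o1-preview": (7, 4),
}


def style_control_shift(overall: int, styled: int) -> int:
    """Negative = better rank under style control; positive = worse."""
    return styled - overall


for model, (overall, styled) in OVERALL_VS_STYLE.items():
    shift = style_control_shift(overall, styled)
    direction = "up" if shift < 0 else "down" if shift > 0 else "unchanged"
    print(f"{model}: {overall} -> {styled} ({direction})")
```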

### Interpretation

The table highlights performance disparities across models in specific tasks. For example:

- **Math Weakness**: "chatgpt-4o-latest-20241120" ranks 8th in math, its weakest category, even though it ranks 1st in "Overall w/ Style Control," "Creative Writing," "Longer Query," and "Multi-Turn."

- **Multi-Turn Gaps**: "o1-preview" and "o1-2024-12-17" rank 4th and 3rd in multi-turn interactions, behind the four models that rank 1st there, so extended conversations are not their strongest area in this snapshot.

- **Style Control Effects**: Chatbot Arena's style control adjusts rankings for stylistic features such as response length and markdown formatting. The two "gemini-2.0-flash" variants rank worse once style is controlled for, while the o1 models, "chatgpt-4o-latest-20241120," and "deepseek-r1" rank better, suggesting that presentation contributes differently to each model's plain "Overall" standing.

The data suggests that no single model dominates all categories, emphasizing the importance of task-specific optimization. "o1-preview," despite the worst "Overall" rank here, still ranks 1st in "Coding" and "Math," while "chatgpt-4o-latest-20241120" is strongest in creative, long-query, and multi-turn use but weakest in math.
