TECHNICAL ASSET FINGERPRINT

be7d2f7bb5013c96723a0c33



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


## Learning Paradigms: Direct, Reinforcement, and Experiential Learning

### Overview

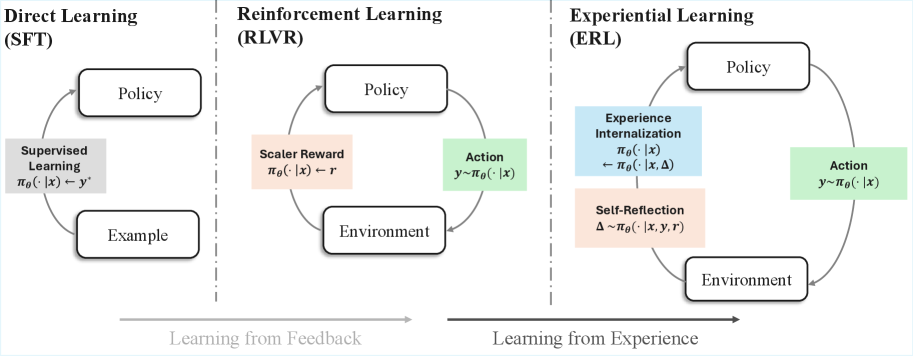

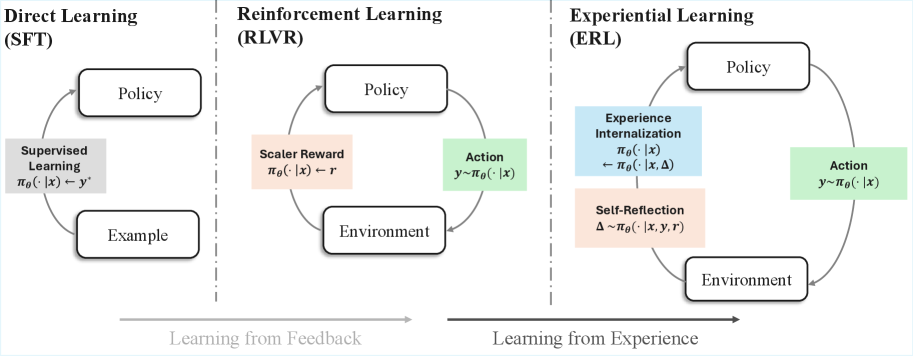

The image presents a comparative diagram illustrating three distinct learning paradigms: Direct Learning (SFT), Reinforcement Learning (RLVR), and Experiential Learning (ERL). Each paradigm is represented by a cyclical flow diagram, highlighting the interaction between different components such as policy, environment, actions, and feedback mechanisms. The diagram emphasizes the shift from learning from explicit examples (Direct Learning) to learning from feedback (Reinforcement Learning) and finally to learning from experience (Experiential Learning).

### Components/Axes

* **Titles:**

* Direct Learning (SFT) - Top-left

* Reinforcement Learning (RLVR) - Top-center

* Experiential Learning (ERL) - Top-right

* **Nodes:** Each paradigm includes nodes representing key components:

* Policy (in all three paradigms)

* Example (Direct Learning)

* Environment (Reinforcement and Experiential Learning)

* Supervised Learning (Direct Learning)

* Scalar Reward (Reinforcement Learning)

* Action (Reinforcement and Experiential Learning)

* Experience Internalization (Experiential Learning)

* Self-Reflection (Experiential Learning)

* **Arrows:** Arrows indicate the flow of information and interaction between the nodes.

* **Equations:** Each node contains equations describing the relationships between variables.

* **Horizontal Axis:** A horizontal axis at the bottom indicates the progression from "Learning from Feedback" to "Learning from Experience."

### Detailed Analysis

**1. Direct Learning (SFT):**

* **Policy:** Located at the top.

* **Example:** Located at the bottom.

* **Supervised Learning:** Located on the left side, between Policy and Example.

* Equation: πθ(· | x) → y'

* **Flow:** The flow starts from the Example, goes to Supervised Learning, then to Policy, and back to Example, forming a loop.

**2. Reinforcement Learning (RLVR):**

* **Policy:** Located at the top.

* **Environment:** Located at the bottom.

* **Action:** Located on the right side, between Policy and Environment.

* Equation: y ~ πθ(· | x)

* **Scalar Reward:** Located on the left side, between Policy and Environment.

* Equation: πθ(· | x) → r

* **Flow:** The flow starts from the Environment, goes to Scaler Reward, then to Policy, then to Action, and back to Environment, forming a loop.

**3. Experiential Learning (ERL):**

* **Policy:** Located at the top.

* **Environment:** Located at the bottom.

* **Action:** Located on the right side, between Policy and Environment.

* Equation: y ~ πθ(· | x)

* **Experience Internalization:** Located on the left side, above Self-Reflection, between Policy and Environment.

* Equation: πθ(· | x) ← πθ(· | x, Δ)

* **Self-Reflection:** Located on the left side, below Experience Internalization, between Policy and Environment.

* Equation: Δ ~ πθ(· | x, y, r)

* **Flow:** The flow starts from the Environment, goes to Self-Reflection, then to Experience Internalization, then to Policy, then to Action, and back to Environment, forming a loop.
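The three loops described above can be written down as plain data, which makes the structural difference between the panels easy to check. This is only a sketch of the description, not of the diagram's source: the names `FLOWS` and `edges` are illustrative, and the node order follows the "Flow" bullets as transcribed here.

```python
# Each paradigm as an ordered cycle of node labels, taken from the "Flow" bullets.
FLOWS = {
    "SFT": ["Example", "Supervised Learning", "Policy"],
    "RLVR": ["Environment", "Scalar Reward", "Policy", "Action"],
    "ERL": [
        "Environment",
        "Self-Reflection",
        "Experience Internalization",
        "Policy",
        "Action",
    ],
}


def edges(cycle):
    """Return the directed edges of a closed loop, including the edge back to the start."""
    return [(cycle[i], cycle[(i + 1) % len(cycle)]) for i in range(len(cycle))]


# Nodes that ERL adds to, and removes from, the RLVR loop.
added = set(FLOWS["ERL"]) - set(FLOWS["RLVR"])
removed = set(FLOWS["RLVR"]) - set(FLOWS["ERL"])

for name, cycle in FLOWS.items():
    print(name, " -> ".join(cycle + cycle[:1]))
```

Read this way, ERL keeps the Policy, Action, and Environment path of RLVR and replaces the single Scalar Reward node with the Self-Reflection and Experience Internalization pair.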

### Key Observations

* **Progression:** The diagrams illustrate a progression from simple supervised learning to more complex reinforcement and experiential learning.

* **Feedback:** Reinforcement Learning introduces the concept of a scalar reward, while Experiential Learning incorporates experience internalization and self-reflection.

* **Complexity:** Experiential Learning has the most complex flow, involving multiple feedback loops and internal processes.

### Interpretation

The image effectively visualizes the evolution of learning paradigms. Direct Learning relies on explicit examples, Reinforcement Learning learns from feedback signals (rewards), and Experiential Learning learns by internalizing experiences and reflecting on them. The diagrams highlight the increasing complexity and sophistication of learning algorithms as they move from supervised to reinforcement and experiential learning. The inclusion of equations within each node provides a concise mathematical representation of the relationships between the components. The progression from "Learning from Feedback" to "Learning from Experience" suggests a shift towards more autonomous and adaptive learning systems.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Diagram: Learning Paradigms - Direct, Reinforcement, and Experiential

### Overview

The image is a diagram illustrating three different learning paradigms: Direct Learning (SFT), Reinforcement Learning (RLVR), and Experiential Learning (ERL). It visually represents the flow of information and interaction within each paradigm, highlighting the differences in how learning occurs. The diagram uses boxes to represent components and arrows to indicate the direction of influence or data flow. A horizontal arrow at the bottom indicates a transition from "Learning from Feedback" to "Learning from Experience".

### Components/Axes

The diagram is divided into three main sections, each representing a learning paradigm. Each section contains the following components:

* **Policy:** Represented by a purple rectangle in all three paradigms.

* **Environment:** Represented by a brown rectangle in Reinforcement and Experiential Learning.

* **Action:** Represented by a green rectangle in Reinforcement and Experiential Learning.

* **Example:** Represented by a light purple rectangle in Direct Learning.

* **Scalar Reward:** Represented by a light green rectangle in Reinforcement Learning.

* **Experience Internalization & Self-Reflection:** Represented by a light blue rectangle and a light orange rectangle, respectively, in Experiential Learning.

The diagram also includes mathematical notations within the boxes, representing the underlying functions or processes. These include:

* πθ(· | x) → y* (Direct Learning)

* πθ(· | x) → r (Scalar Reward)

* y → πθ(· | x) (Action)

* πθ(· | x) (Experience Internalization)

* Δ ~ πθ(· | x, y, r) (Self-Reflection)

A horizontal arrow at the bottom is labeled "Learning from Feedback" on the left and "Learning from Experience" on the right.

### Detailed Analysis / Content Details

**Direct Learning (SFT):**

* The "Policy" box is connected to "Supervised Learning" which is connected to the "Example" box.

* The equation within the "Supervised Learning" box is: πθ(· | x) → y*.

* This paradigm appears to be a direct mapping from input (x) to output (y*) guided by a policy.

**Reinforcement Learning (RLVR):**

* The "Policy" box is connected to "Scalar Reward" and "Action".

* The "Scalar Reward" box has the equation: πθ(· | x) → r.

* The "Action" box has the equation: y → πθ(· | x).

* The "Action" box is connected to the "Environment" box, which then loops back to the "Policy" box.

* This paradigm involves learning through trial and error, receiving rewards (r) based on actions (y) taken in the environment (x).

**Experiential Learning (ERL):**

* The "Policy" box is connected to "Experience Internalization" and "Action".

* The "Experience Internalization" box has the equation: πθ(· | x).

* The "Self-Reflection" box has the equation: Δ ~ πθ(· | x, y, r).

* The "Action" box has the equation: y → πθ(· | x).

* The "Action" box is connected to the "Environment" box, which then loops back to the "Policy" box.

* This paradigm incorporates both experience internalization and self-reflection (Δ) in addition to action and environment interaction.

### Key Observations

* The diagram clearly illustrates a progression from direct, supervised learning to more complex learning paradigms that involve interaction with an environment.

* The inclusion of mathematical notations suggests a formal, algorithmic approach to each learning paradigm.

* The "Experiential Learning" paradigm appears to be the most complex, incorporating elements of both reinforcement learning and self-reflection.

* The transition from "Learning from Feedback" to "Learning from Experience" highlights a shift in the source of information used for learning.

### Interpretation

The diagram demonstrates a conceptual hierarchy of learning approaches. Direct Learning represents the simplest form, relying on explicit examples. Reinforcement Learning introduces the concept of learning through interaction and reward, while Experiential Learning builds upon this by adding a layer of self-reflection and internal experience processing. The diagram suggests that as learning becomes more complex, it moves away from relying solely on external feedback (examples or rewards) and incorporates internal processes for understanding and adapting to the environment. The mathematical notations indicate that these paradigms are not merely conceptual but can be formalized and implemented as algorithms. The diagram is a high-level overview and does not delve into the specifics of each algorithm or the underlying mechanisms of learning. It serves as a useful visual aid for understanding the fundamental differences between these three learning paradigms.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Diagram: Comparison of Three Learning Paradigms

### Overview

The image is a conceptual diagram comparing three machine learning paradigms: Direct Learning (Supervised Fine-Tuning, SFT), Reinforcement Learning (RLVR), and Experiential Learning (ERL). The diagram is divided into three vertical sections, each illustrating the core feedback loop of a paradigm. A horizontal arrow at the bottom indicates a conceptual progression from "Learning from Feedback" to "Learning from Experience."

### Components/Axes

The diagram has no traditional axes. Its components are labeled boxes, arrows, and text annotations arranged in three distinct columns.

**1. Left Column: Direct Learning (SFT)**

* **Title:** "Direct Learning (SFT)"

* **Components:**

* A box labeled "Policy" (top).

* A box labeled "Example" (bottom).

* A gray box labeled "Supervised Learning" positioned on the arrow from "Example" to "Policy".

* **Flow/Text:** An arrow points from "Example" to "Policy". The text on the arrow reads: `π_θ(·|x) ← y'`. This denotes the policy `π_θ` being updated with a target label `y'` given input `x`.
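To make the update `π_θ(·|x) ← y'` concrete, here is a minimal sketch on a toy policy. It assumes a single fixed input `x`, three candidate outputs, and a softmax policy whose parameters are just three logits; the function names, learning rate, and step count are illustrative and are not taken from the diagram.

```python
import math


def softmax(logits):
    """Turn logits into a probability distribution (numerically stable)."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def sft_step(logits, target, lr=0.5):
    """One gradient-ascent step on log pi_theta(target | x).

    For softmax logits, d/d logit_k of log p[target] is 1[k == target] - p[k].
    """
    probs = softmax(logits)
    return [
        v + lr * ((1.0 if k == target else 0.0) - probs[k])
        for k, v in enumerate(logits)
    ]


logits = [0.0, 0.0, 0.0]  # uniform toy policy for one fixed input x
target = 2                # index of the demonstrated output y'

for _ in range(50):
    logits = sft_step(logits, target)

probs = softmax(logits)
print([round(p, 3) for p in probs])
```

The policy never samples anything here: the target `y'` alone determines the update, which is why this panel has no Action or Environment box.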

**2. Middle Column: Reinforcement Learning (RLVR)**

* **Title:** "Reinforcement Learning (RLVR)"

* **Components:**

* A box labeled "Policy" (top).

* A box labeled "Environment" (bottom).

* A green box labeled "Action" on the arrow from "Policy" to "Environment".

* A peach-colored box labeled "Scalar Reward" on the arrow from "Environment" to "Policy".

* **Flow/Text:**

* Arrow from "Policy" to "Environment": Labeled with `y ~ π_θ(·|x)`, indicating an action `y` is sampled from the policy.

* Arrow from "Environment" to "Policy": Labeled with `π_θ(·|x) ← r`, indicating the policy is updated based on a scalar reward `r`.
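The two arrows of this loop, `y ~ π_θ(·|x)` and `π_θ(·|x) ← r`, can be sketched with the same kind of toy softmax policy. The diagram does not say which policy-gradient estimator is used, so the REINFORCE update below is an assumption, as are the hypothetical `verifiable_reward` check, the learning rate, and the seed.

```python
import math
import random


def softmax(logits):
    """Turn logits into a probability distribution (numerically stable)."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def verifiable_reward(y, correct=2):
    """Hypothetical environment check: 1.0 if the sampled output is correct, else 0.0."""
    return 1.0 if y == correct else 0.0


def rlvr_step(logits, rng, lr=0.5):
    """Sample an action, score it, and apply a REINFORCE update (assumed estimator)."""
    probs = softmax(logits)
    y = rng.choices(range(len(logits)), weights=probs)[0]  # y ~ pi_theta(. | x)
    r = verifiable_reward(y)                               # scalar reward r
    # r * grad log pi_theta(y | x); for softmax logits the gradient is one_hot(y) - probs.
    return [
        v + lr * r * ((1.0 if k == y else 0.0) - probs[k])
        for k, v in enumerate(logits)
    ]


rng = random.Random(0)
logits = [0.0, 0.0, 0.0]  # uniform toy policy for one fixed input x

for _ in range(300):
    logits = rlvr_step(logits, rng)

probs = softmax(logits)
print([round(p, 3) for p in probs])
```

Unlike the SFT case, the policy is never told which output is correct. It only sees a number for the outputs it happened to sample, so a zero-reward sample leaves the parameters unchanged in this baseline-free form.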

**3. Right Column: Experiential Learning (ERL)**

* **Title:** "Experiential Learning (ERL)"

* **Components:**

* A box labeled "Policy" (top).

* A box labeled "Environment" (bottom).

* A green box labeled "Action" on the arrow from "Policy" to "Environment".

* A blue box labeled "Experience Internalization" positioned between "Environment" and "Policy".

* A peach-colored box labeled "Self-Reflection" positioned below "Experience Internalization".

* **Flow/Text:**

* Arrow from "Policy" to "Environment": Labeled with `y ~ π_θ(·|x)`.

* Arrow from "Environment" to "Policy": This path is more complex. It flows through the "Experience Internalization" box.

* Text in the "Experience Internalization" box: `π_θ(·|x) ← π_θ(·|x, Δ)`. This suggests the policy is updated using an internalized version of experience, parameterized by `Δ`.

* Text in the "Self-Reflection" box: `Δ ~ π_θ(·|x, y, r)`. This indicates that the internal parameter `Δ` is generated by a self-reflection process based on the input `x`, action `y`, and reward `r`.
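One round of this loop can be sketched on the same toy setup. Everything beyond the two equations is an assumption made for illustration: `self_reflect` is a hand-written stub standing in for sampling `Δ` from the policy itself, `conditioned_probs` is a stand-in for `π_θ(·|x, Δ)`, and internalization is modelled as a distillation step that moves the unconditioned policy toward the conditioned one.

```python
import math
import random


def softmax(logits):
    """Turn logits into a probability distribution (numerically stable)."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def environment_reward(y, correct=2):
    """Hypothetical environment: reward 1.0 only for the correct action."""
    return 1.0 if y == correct else 0.0


def self_reflect(y, r):
    """Stub for Delta ~ pi_theta(. | x, y, r): record the action and a verdict on it."""
    return (y, "repeat" if r > 0 else "avoid")


def conditioned_probs(logits, delta, bias=2.0):
    """Stand-in for pi_theta(. | x, Delta): shift the reflected action's logit by +/- bias."""
    y, verdict = delta
    shifted = list(logits)
    shifted[y] += bias if verdict == "repeat" else -bias
    return softmax(shifted)


def erl_round(logits, rng, lr=0.5):
    """Act, reflect, then internalize the reflection into the unconditioned policy."""
    student = softmax(logits)
    y = rng.choices(range(len(logits)), weights=student)[0]  # y ~ pi_theta(. | x)
    r = environment_reward(y)
    delta = self_reflect(y, r)                               # Delta from (x, y, r)
    teacher = conditioned_probs(logits, delta)               # pi_theta(. | x, Delta)
    # Distillation step: descend the cross-entropy H(teacher, student), whose
    # gradient with respect to the student logits is student - teacher.
    return [v + lr * (teacher[k] - student[k]) for k, v in enumerate(logits)]


rng = random.Random(0)
logits = [0.0, 0.0, 0.0]

for _ in range(500):
    logits = erl_round(logits, rng)

probs = softmax(logits)
print([round(p, 3) for p in probs])
```

In this sketch a zero-reward sample still changes the policy, because the reflection says which action to avoid. That is the practical difference from a bare scalar-reward update, under the assumptions stated above.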

**Bottom Annotation:**

* A long, gray horizontal arrow spans the width of the diagram beneath the three columns.

* Left end label: "Learning from Feedback" (aligned under SFT and RLVR).

* Right end label: "Learning from Experience" (aligned under ERL).

### Detailed Analysis

The diagram illustrates an evolution in learning complexity:

1. **Direct Learning (SFT):** A simple, one-step supervised loop. The policy is directly corrected towards a known good example (`y'`). The feedback is the example itself.

2. **Reinforcement Learning (RLVR):** An interactive loop with an environment. The policy takes an action, receives a scalar reward signal from the environment, and updates accordingly. Feedback is indirect (a reward number).

3. **Experiential Learning (ERL):** An enhanced interactive loop that adds an internal cognitive layer. Instead of updating the policy directly from the reward `r`, the system first performs "Self-Reflection" to generate an internal representation `Δ` from the tuple `(x, y, r)`. This `Δ` is then used for "Experience Internalization," updating the policy in a more nuanced way (`π_θ(·|x, Δ)`). This suggests learning from a processed, internalized form of experience rather than the raw reward signal.

### Key Observations

* **Increasing Complexity:** The diagrams grow more complex from left to right, adding components (Environment, Action, Self-Reflection) and more sophisticated update rules.

* **Shift in Feedback Source:** The source of learning signal evolves: from a static `y'` (SFT), to an external scalar `r` (RLVR), to an internally generated `Δ` (ERL).

* **Spatial Grounding:** The "Self-Reflection" and "Experience Internalization" boxes in the ERL diagram are centrally located between the Environment and Policy, visually emphasizing their role as a mediating, internal process.

* **Color Coding:** Green is consistently used for the "Action" output. Peach/light red is used for reward-related signals (`r` in RLVR, `Self-Reflection` in ERL). Blue is introduced in ERL for the new "Experience Internalization" process.
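The shift in learning signal noted above can be put side by side as parameter updates. The diagram only gives the arrow forms, so the concrete estimators here are assumptions: a log-likelihood gradient for SFT, a REINFORCE gradient for RLVR, and a KL distillation term for ERL, with $\eta$ a learning rate and $\bar\theta$ a frozen copy of the current parameters.

```latex
\begin{align*}
\text{SFT:}\quad  & \theta \leftarrow \theta + \eta\,\nabla_\theta \log \pi_\theta(y' \mid x) \\
\text{RLVR:}\quad & y \sim \pi_\theta(\cdot \mid x),\qquad
                    \theta \leftarrow \theta + \eta\, r\,\nabla_\theta \log \pi_\theta(y \mid x) \\
\text{ERL:}\quad  & y \sim \pi_\theta(\cdot \mid x),\quad
                    \Delta \sim \pi_\theta(\cdot \mid x, y, r),\quad
                    \theta \leftarrow \theta - \eta\,\nabla_\theta\,
                    \mathrm{KL}\!\left(\pi_{\bar\theta}(\cdot \mid x, \Delta)\,\middle\|\,\pi_\theta(\cdot \mid x)\right)
\end{align*}
```

In all three rows the quantity being updated is the same unconditioned policy $\pi_\theta(\cdot \mid x)$; only the signal on the right-hand side changes, from $y'$ to $r$ to $\Delta$.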

### Interpretation

This diagram argues for a progression in machine learning paradigms towards more autonomous and introspective systems.

* **SFT** represents foundational learning from curated data, but it's limited to mimicking provided examples.

* **RLVR** introduces learning through trial-and-error interaction with an environment, a significant step towards autonomous decision-making. However, it relies on an external reward function, which can be sparse or poorly defined.

* **ERL** proposes a next step where the agent doesn't just react to rewards but actively *reflects* on its experiences (`x, y, r`) to form an internal understanding (`Δ`). This internalized experience then guides learning. The key innovation is the **Self-Reflection** module, which transforms raw experience into a form more suitable for deep learning. This mimics aspects of biological learning, where experience is consolidated and interpreted internally, potentially leading to more robust, generalizable, and sample-efficient learning. The bottom arrow frames this as a shift from learning based on external feedback to learning based on internalized experience.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Diagram: Comparison of Learning Paradigms (SFT, RLVR, ERL)

### Overview

The diagram illustrates three distinct learning frameworks: **Direct Learning (SFT)**, **Reinforcement Learning (RLVR)**, and **Experiential Learning (ERL)**. Each framework is represented as a cyclical system involving a **Policy**, a data source (**Example** in SFT, **Environment** in RLVR and ERL), and paradigm-specific mechanisms. Arrows indicate directional relationships, with labels describing processes like feedback loops, rewards, and internalization.

### Components/Axes

1. **Sections**:

- **Left (SFT)**: Direct Learning via Supervised Fine-Tuning.

- **Center (RLVR)**: Reinforcement Learning with Verifiable Rewards.

- **Right (ERL)**: Experiential Learning emphasizing internalization and reflection.

2. **Key Elements**:

- **Policy**: Central node in all frameworks, representing decision-making rules.

- **Environment**: External context interacting with the Policy.

- **Arrows**: Denote causal or informational flow (e.g., "Learning from Feedback" spans SFT/RLVR; "Learning from Experience" spans ERL).

3. **Labels**:

- **SFT**:

- `πθ(·|x) ← y*` (Supervised Learning: the policy for input `x` is updated toward the supervised target `y*`).

- `πθ(·|x) ← Example` (Policy learns from labeled examples).

- **RLVR**:

- `πθ(·|x) ← r` (Scalar Reward: the policy for input `x` is updated from the scalar reward `r`).

- `y∼πθ(·|x)` (Action: Policy generates action `y` from input `x`).

- **ERL**:

- `πθ(·|x) ← πθ(·|x,Δ)` (Experience Internalization: Policy updates using experience `Δ`).

- `Δ∼πθ(·|x,y,r)` (Self-Reflection: Experience `Δ` derived from action `y`, input `x`, and reward `r`).

### Detailed Analysis

- **SFT (Direct Learning)**:

- Policy is trained via **supervised learning** using labeled examples (`y*`).

- Feedback loop: Example → Supervised Learning → Policy (no Environment node appears in this panel).

- **RLVR (Reinforcement Learning)**:

- Policy interacts with the Environment to generate actions (`y∼πθ(·|x)`).

- Scalar rewards (`r`) shape Policy updates.

- Feedback loop: Environment → Reward → Policy → Environment.

- **ERL (Experiential Learning)**:

- Policy generates actions (`y∼πθ(·|x)`) and experiences (`Δ∼πθ(·|x,y,r)`).

- **Experience Internalization**: Policy refines itself using internalized experiences (`πθ(·|x) ← πθ(·|x,Δ)`).

- Self-reflection integrates input, action, and reward to update the Policy.

### Key Observations

1. **Feedback vs. Experience**:

- SFT and RLVR rely on **external feedback** (supervised labels or scalar rewards).

- ERL emphasizes **internalized experience** (self-generated `Δ`) for learning.

2. **Cyclical Nature**:

- RLVR and ERL involve iterative Policy-Environment interactions; SFT instead iterates between Policy and Example.

- ERL introduces a unique self-reflection mechanism absent in SFT/RLVR.

3. **Notation Consistency**:

- Policy notation (`πθ(·|x)`) is consistent across frameworks, denoting parameterized mappings.

- The bottom arrow explicitly differentiates between **feedback-driven** (SFT/RLVR) and **experience-driven** (ERL) learning.

### Interpretation

The diagram contrasts three learning paradigms:

- **SFT** represents traditional supervised learning, where Policies are rigidly trained on labeled data.

- **RLVR** introduces reward-driven adaptation, allowing Policies to optimize for scalar feedback in dynamic Environments.

- **ERL** shifts focus to **autonomous learning**, where Policies internalize experiences and reflect on past actions/rewards to improve.

The separation of "Learning from Feedback" (SFT/RLVR) and "Learning from Experience" (ERL) highlights a spectrum from externally guided to self-directed learning. ERL’s inclusion of **Experience Internalization** suggests a more holistic approach, where Policies evolve by synthesizing past interactions rather than relying solely on external signals. This aligns with theories of metacognition and adaptive systems in AI.

No numerical data or outliers are present; the diagram is purely conceptual, emphasizing structural differences between learning methodologies.
