## Stacked Area Chart: Research Paper Trends in LLMs

### Overview

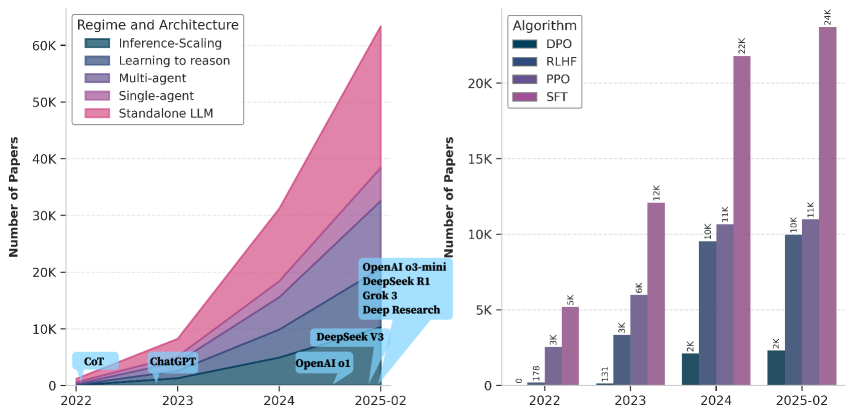

The image presents two stacked area charts visualizing the growth in research papers related to Large Language Models (LLMs) from 2022 to February 2025. The left chart categorizes papers by "Regime and Architecture," while the right chart categorizes them by "Algorithm." Both Y-axes are labeled "Number of Papers," though their scales differ (0 to 60K on the left, 0 to 24K on the right).

### Components/Axes

**Left Chart:**

* **X-axis:** Time, ranging from 2022 to February 2025 (2025-02).

* **Y-axis:** Number of Papers, ranging from 0 to 60K.

* **Legend (Top-Left):**

    * Inference-Scaling (Green)

    * Learning to reason (Gray)

    * Multi-agent (Light Blue)

    * Single-agent (Dark Blue)

    * Standalone LLM (Pink)

* **Annotations:** "CoT", "ChatGPT", "OpenAI o1", "DeepSeek R1", "Grok 3", "Deep Research", "DeepSeek V3"

**Right Chart:**

* **X-axis:** Time, ranging from 2022 to February 2025 (2025-02).

* **Y-axis:** Number of Papers, ranging from 0 to 24K.

* **Legend (Top-Left):**

    * DPO (Dark Teal)

    * RLHF (Medium Blue)

    * PPO (Dark Blue)

    * SFT (Black)

### Detailed Analysis

**Left Chart (Regime and Architecture):**

* **Standalone LLM (Pink):** Starts at approximately 0 in 2022, rises steadily to around 10K in 2023, then experiences a very rapid increase, reaching approximately 45K by February 2025. This is the dominant trend.

* **Single-agent (Dark Blue):** Starts at approximately 0 in 2022, rises to around 5K in 2023, and continues to increase, reaching approximately 15K by February 2025.

* **Multi-agent (Light Blue):** Remains relatively flat at around 0-2K papers throughout the period.

* **Learning to reason (Gray):** Starts at approximately 0 in 2022, rises to around 5K in 2023, and reaches approximately 10K by February 2025.

* **Inference-Scaling (Green):** Starts at approximately 0 in 2022, rises to around 2K in 2023, and reaches approximately 5K by February 2025.

* **Annotations:**

    * "CoT" is positioned around 2022, at approximately 1K papers.

    * "ChatGPT" is positioned around mid-2023, at approximately 5K papers.

    * "OpenAI o1" is positioned around late 2023/early 2024, at approximately 8K papers.

    * "DeepSeek R1" is positioned around mid-2024, at approximately 15K papers.

    * "Grok 3" is positioned around late 2024, at approximately 20K papers.

    * "Deep Research" is positioned around late 2024, at approximately 18K papers.

    * "DeepSeek V3" is positioned around February 2025, at approximately 25K papers.

**Right Chart (Algorithm):**

* **DPO (Dark Teal):** Starts at approximately 1.7K in 2022, rises to 3K in 2023, then increases rapidly to approximately 11K by February 2025.

* **RLHF (Medium Blue):** Starts at approximately 1.3K in 2022, rises to 6K in 2023, then increases to approximately 10K by February 2025.

* **PPO (Dark Blue):** Starts at approximately 3K in 2022, falls to around 2K in 2023, and remains at approximately 2K through February 2025.

* **SFT (Black):** Starts at approximately 5K in 2022, rises to 12K in 2024, then increases to approximately 24K by February 2025. This is the dominant trend.

* **Approximate Values (February 2025):**

    * DPO: 11K

    * RLHF: 10K

    * PPO: 2K

    * SFT: 24K
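To compare the four algorithm series in relative rather than absolute terms, the start and end readings quoted above can be turned into growth multiples. The following is a minimal sketch, not an exact analysis: the numbers are the approximate values read from the chart, it assumes each figure is that series' own count rather than a cumulative stacked height, and the names `readings` and `growth_multiple` are illustrative.

```python
# Approximate readings from the right-hand chart, in thousands of papers:
# (value in 2022, value in February 2025). These are rough figures taken
# from the description above, not exact data.
readings = {
    "DPO": (1.7, 11.0),
    "RLHF": (1.3, 10.0),
    "PPO": (3.0, 2.0),
    "SFT": (5.0, 24.0),
}


def growth_multiple(start, end):
    """Return end / start, the factor by which a series changed."""
    return end / start


multiples = {name: growth_multiple(s, e) for name, (s, e) in readings.items()}

# Print the series from fastest to slowest relative growth.
for name, m in sorted(multiples.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: {m:.1f}x")
```

Under these readings, RLHF and DPO grow fastest in relative terms even though SFT has the largest absolute count, and PPO is the only series that ends lower than it started.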

### Key Observations

* Both charts show a significant increase in research papers related to LLMs over the period 2022-2025.

* In the "Regime and Architecture" chart, "Standalone LLM" is the dominant category, suggesting that most of the counted work treats the LLM as a standalone model rather than as part of an agent system.

* In the "Algorithm" chart, "SFT" is the dominant category, suggesting that Supervised Fine-Tuning (SFT) is the most researched of the four algorithms shown.

* The annotations on the left chart highlight key milestones and models in the LLM landscape.

* The growth in papers appears to be accelerating towards the end of the period (2024-2025).

### Interpretation

The data suggest a rapidly expanding field of LLM research. The dominance of "Standalone LLM" in the left chart suggests that most work still centers on self-contained models rather than agent-based systems, and the prominence of "SFT" in the right chart suggests that supervised fine-tuning remains a central technique for adapting and improving LLMs. The annotations provide a timeline of key developments, linking research trends to specific models and techniques. The accelerating growth in paper counts points to increasing interest and investment in the area. The relatively flat "Multi-agent" trend suggests it is a less prominent focus than the other categories. The divergence between algorithms (SFT increasing rapidly, PPO remaining relatively stable) suggests a potential shift in research priorities. Taken together, the charts depict a field that is growing quickly across most of the categories shown.