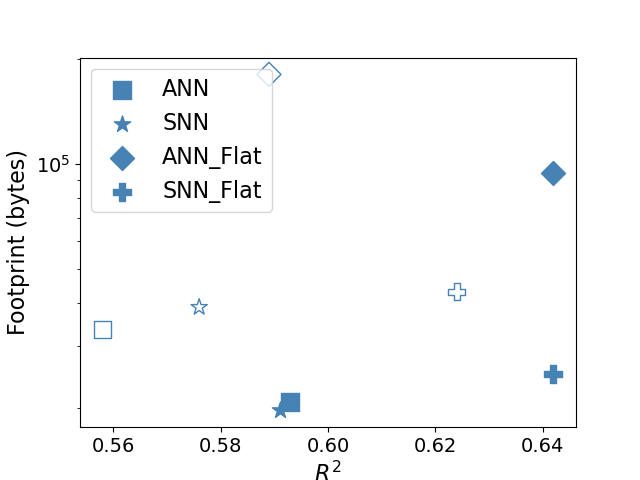

## Scatter Plot: Neural Network Model Performance vs. Memory Footprint

### Overview

This is a scatter plot comparing four different neural network model variants based on two metrics: their coefficient of determination (R²) on the x-axis and their memory footprint in bytes on a logarithmic y-axis. The plot visualizes the trade-off between model performance (R²) and resource consumption (footprint).

### Components/Axes

* **X-Axis:** Labeled "R²". The scale is linear, ranging from approximately 0.55 to 0.65. Major tick marks are visible at 0.56, 0.58, 0.60, 0.62, and 0.64.

* **Y-Axis:** Labeled "Footprint (bytes)". The scale is logarithmic (base 10). A major tick mark and label are present at `10^5` (100,000 bytes). The axis spans from below `10^4` to above `10^5`.

* **Legend:** Positioned in the top-left corner of the plot area. It defines four data series with distinct symbols and labels:

* **ANN:** Represented by a solid blue square (■).

* **SNN:** Represented by a solid blue star (★).

* **ANN_Flat:** Represented by a solid blue diamond (◆).

* **SNN_Flat:** Represented by a solid blue plus sign (✚).

### Detailed Analysis

The plot contains six data points across the four model types: one each for ANN and SNN, and two each for ANN_Flat and SNN_Flat.

**Data Point Extraction (Approximate Values):**

1. **ANN (Square ■):**

* **Point 1:** Located at approximately R² = 0.59, Footprint ≈ 1.2 x 10^4 bytes (12,000 bytes).

* *Trend Verification:* This is the only data point for the standard ANN model. It shows moderate performance (R² ~0.59) with a relatively low footprint.

2. **SNN (Star ★):**

* **Point 1:** Located at approximately R² = 0.575, Footprint ≈ 1.5 x 10^4 bytes (15,000 bytes).

* *Trend Verification:* This is the only data point for the standard SNN model. It has slightly lower performance (R² ~0.575) and a slightly higher footprint than the ANN point.

3. **ANN_Flat (Diamond ◆):**

* **Point 1 (Bottom-Left):** Located at approximately R² = 0.56, Footprint ≈ 1.0 x 10^4 bytes (10,000 bytes). This is the lowest R² value and the lowest footprint on the plot.

* **Point 2 (Top-Right):** Located at approximately R² = 0.64, Footprint ≈ 9.0 x 10^4 bytes (90,000 bytes). This is the highest footprint on the plot, and its R² ties with one SNN_Flat point for the highest value.

* *Trend Verification:* For the two ANN_Flat points, the higher R² comes with the higher footprint. Moving from R² ≈ 0.56 to R² ≈ 0.64 raises the footprint about ninefold (10,000 to 90,000 bytes), close to a full order of magnitude. Two points are too few to establish a correlation, so this is a direction rather than a fitted trend.

4. **SNN_Flat (Plus ✚):**

* **Point 1 (Center-Right):** Located at approximately R² = 0.625, Footprint ≈ 3.0 x 10^4 bytes (30,000 bytes).

* **Point 2 (Bottom-Right):** Located at approximately R² = 0.64, Footprint ≈ 1.8 x 10^4 bytes (18,000 bytes).

* *Trend Verification:* The two SNN_Flat points run in the opposite direction. The point with the lower R² (0.625) has the higher footprint (30,000 bytes), while the point with the higher R² (0.64) needs only 18,000 bytes. This suggests that the higher-performing SNN_Flat model is more efficient or differently configured.
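The approximate readings above can be collected into a small table for further calculation. The following is a minimal Python sketch, assuming the values read off the plot are accurate enough to work with; the names `POINTS` and `decades_above` are hypothetical and do not come from the source. It also converts each footprint into its vertical position on the base-10 logarithmic axis, measured in decades above `10^4`.

```python
import math

# Approximate values read off the plot (assumed, not exact measurements).
# Each entry: (series label, R², footprint in bytes).
POINTS = [
    ("ANN", 0.59, 12_000),
    ("SNN", 0.575, 15_000),
    ("ANN_Flat", 0.56, 10_000),
    ("ANN_Flat", 0.64, 90_000),
    ("SNN_Flat", 0.625, 30_000),
    ("SNN_Flat", 0.64, 18_000),
]


def decades_above(footprint_bytes, reference_bytes=10_000):
    """Position on a base-10 log axis, in decades above the reference value."""
    return math.log10(footprint_bytes / reference_bytes)


for label, r2, footprint in POINTS:
    print(
        f"{label:<9} R2={r2:.3f}  footprint={footprint:>6} B  "
        f"{decades_above(footprint):.2f} decades above 10^4"
    )
```

On a logarithmic axis, equal vertical distances correspond to equal footprint ratios, which is why the gap between the two ANN_Flat points looks so large compared with the spacing of the other points.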

### Key Observations

1. **Performance-Footprint Trade-off:** The most striking observation is the position of the high-performing `ANN_Flat` point (R²=0.64). It is an outlier in terms of footprint, roughly 3 to 9 times larger than any other data point (90,000 bytes vs. 10,000 to 30,000 bytes).

2. **Efficiency of SNN_Flat:** The `SNN_Flat` model achieves the joint-highest R² score (0.64) with a footprint (18k bytes) that is comparable to the baseline ANN and SNN models, and significantly lower than the high-performing ANN_Flat.

3. **Clustering of Baseline Models:** The standard `ANN` and `SNN` models cluster in the lower-left quadrant, indicating lower performance and lower memory usage.

4. **Impact of "Flat" Variant:** The best "Flat" point in each family reaches a higher R² than its baseline (0.64 vs. ~0.59 for ANN, 0.64 vs. ~0.575 for SNN), but with vastly different implications for memory footprint. The gain is not uniform: the low-footprint ANN_Flat point (R² ≈ 0.56) scores below the ANN baseline.
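The footprint comparisons in these observations reduce to simple ratios. The sketch below is one way to check them in Python, assuming the approximate byte values read off the plot; the dictionary keys and the helper `footprint_ratios` are hypothetical names introduced for illustration.

```python
# Approximate footprints in bytes, as read off the plot (assumed values).
FOOTPRINTS = {
    "ANN": 12_000,
    "SNN": 15_000,
    "ANN_Flat (low R2)": 10_000,
    "ANN_Flat (high R2)": 90_000,
    "SNN_Flat (low R2)": 30_000,
    "SNN_Flat (high R2)": 18_000,
}


def footprint_ratios(reference, footprints=FOOTPRINTS):
    """Return the reference footprint divided by every other point's footprint."""
    ref = footprints[reference]
    return {
        name: ref / size
        for name, size in footprints.items()
        if name != reference
    }


# How much larger is the high-R² ANN_Flat point than each other point?
ratios = footprint_ratios("ANN_Flat (high R2)")
for name, ratio in sorted(ratios.items(), key=lambda item: item[1]):
    print(f"{name:<20} {ratio:.1f}x")
```

Calling `footprint_ratios("SNN_Flat (high R2)")` gives the same comparison for the high-R² SNN_Flat point against the baselines.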

### Interpretation

This chart demonstrates a critical engineering trade-off in neural network design between predictive accuracy (R²) and deployment cost (memory footprint).

* The data suggests that the "Flat" modification can boost model performance, but its cost is highly architecture-dependent. For ANNs, the performance gain (from R² ~0.59 to 0.64) requires a roughly 7.5-fold increase in model size (from ~12k to ~90k bytes), which may be prohibitive for edge or embedded devices.

* In contrast, the SNN_Flat variant appears to be a much more efficient optimization. It reaches the same peak performance (R²=0.64) with a footprint (18k bytes) only about 1.2 to 1.5 times that of the baseline models (15k and 12k bytes). This makes SNN_Flat a potentially superior choice for applications where both high accuracy and low memory usage are required.

* The presence of two points for both ANN_Flat and SNN_Flat implies these are not single models but categories, possibly representing different hyperparameter settings or training regimes within the "Flat" paradigm. The inverse trend for SNN_Flat is particularly noteworthy and warrants further investigation into what configuration yields higher accuracy with lower memory cost.
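The trade-off discussed above can be made concrete by asking which points are Pareto-optimal, meaning no other point has both an R² at least as high and a footprint at least as low. The following is a minimal Python sketch under the assumption that the approximate readings from the plot are accurate; `POINTS`, `dominates`, and `pareto_front` are hypothetical names, not part of the source.

```python
# Approximate (label, R², footprint in bytes) readings from the plot (assumed values).
POINTS = [
    ("ANN", 0.59, 12_000),
    ("SNN", 0.575, 15_000),
    ("ANN_Flat", 0.56, 10_000),
    ("ANN_Flat", 0.64, 90_000),
    ("SNN_Flat", 0.625, 30_000),
    ("SNN_Flat", 0.64, 18_000),
]


def dominates(a, b):
    """True if a is at least as good as b on both metrics and strictly better on one.

    "Better" means a higher R² and a lower footprint.
    """
    _, r2_a, fp_a = a
    _, r2_b, fp_b = b
    return r2_a >= r2_b and fp_a <= fp_b and (r2_a > r2_b or fp_a < fp_b)


def pareto_front(points):
    """Return the points that no other point dominates, sorted by footprint."""
    front = [p for p in points if not any(dominates(q, p) for q in points)]
    return sorted(front, key=lambda p: p[2])


for label, r2, footprint in pareto_front(POINTS):
    print(f"{label:<9} R2={r2:.3f}  footprint={footprint} B")
```

With these assumed readings, the non-dominated set is the low-footprint ANN_Flat point, the ANN baseline, and the high-R² SNN_Flat point. Because the values are estimated by eye, small reading errors could change which points land on the front.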

**In summary, the plot argues for the efficiency of Spiking Neural Network (SNN) architectures when combined with the "Flat" technique: they match the highest R² in this comparison (0.64) without the extreme memory penalty seen in the equivalent ANN variant.**