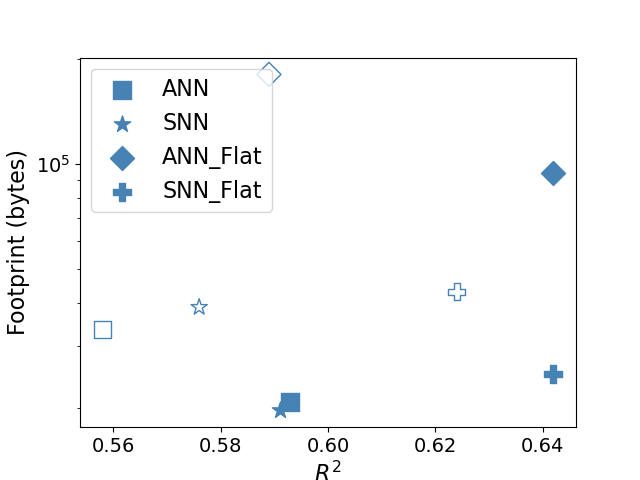

## Scatter Plot: Footprint vs. R-squared for Neural Network Architectures

### Overview

This image presents a scatter plot comparing the memory footprint (in bytes) against the R-squared value for four different neural network architectures: ANN, SNN, ANN_Flat, and SNN_Flat. The plot aims to visualize the trade-off between model performance (R-squared) and memory usage (Footprint).

### Components/Axes

* **X-axis:** R² (R-squared), ranging from approximately 0.56 to 0.65.

* **Y-axis:** Footprint (bytes), displayed on a logarithmic scale, ranging from approximately 10⁴ to 10⁵.

* **Legend:** Located in the top-left corner, defining the markers for each architecture:

    * ANN (Blue Square)
    * SNN (Light Blue Triangle)
    * ANN_Flat (Blue Diamond)
    * SNN_Flat (Light Blue Plus Sign)

### Detailed Analysis

The plot contains data points for each of the four architectures. The approximate coordinates below are given as (R², footprint in bytes), and each trend describes how footprint changes as R² increases:

* **ANN (Blue Square):** The trend is upward.

    * (0.59, ~6.0 x 10⁴)
    * (0.64, ~9.0 x 10⁴)

* **SNN (Light Blue Triangle):** The trend is relatively flat, with a slight rise.

    * (0.58, ~3.0 x 10⁴)
    * (0.62, ~3.5 x 10⁴)

* **ANN_Flat (Blue Diamond):** The trend is downward.

    * (0.56, ~1.0 x 10⁵)
    * (0.64, ~8.0 x 10⁴)

* **SNN_Flat (Light Blue Plus Sign):** The trend is downward.

    * (0.62, ~4.0 x 10⁴)
    * (0.64, ~3.0 x 10⁴)
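The direction of each trend can be checked from the listed coordinates. The following is a minimal sketch, assuming the approximate values above are close to the plotted ones; the `points` dictionary and the `log_slope` helper are illustrative names, not part of the original figure. Because the y-axis is logarithmic, the slope is computed on log10(footprint).

```python
import math

# Approximate (R², footprint in bytes) pairs read off the plot; these are estimates.
points = {
    "ANN": [(0.59, 6.0e4), (0.64, 9.0e4)],
    "SNN": [(0.58, 3.0e4), (0.62, 3.5e4)],
    "ANN_Flat": [(0.56, 1.0e5), (0.64, 8.0e4)],
    "SNN_Flat": [(0.62, 4.0e4), (0.64, 3.0e4)],
}


def log_slope(pts):
    """Slope of log10(footprint) versus R² between the first and last point."""
    (x0, y0), (x1, y1) = pts[0], pts[-1]
    return (math.log10(y1) - math.log10(y0)) / (x1 - x0)


slopes = {name: log_slope(pts) for name, pts in points.items()}

for name, slope in slopes.items():
    direction = "rising" if slope > 0 else "falling" if slope < 0 else "flat"
    print(f"{name:9s} {slope:+.2f} decades per unit R² ({direction})")
```

With only two points per architecture, the sign of the slope is more trustworthy than its magnitude.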

### Key Observations

* The ANN architecture shows a positive relationship between R-squared and footprint: its higher-R² point has the larger memory footprint. The listed ANN_Flat points show the opposite, with footprint falling from ~1.0 x 10⁵ to ~8.0 x 10⁴ bytes as R² rises from 0.56 to 0.64.

* The SNN architecture maintains a relatively constant footprint across the observed R-squared range.

* The SNN_Flat architecture shows a slight decrease in footprint as R-squared increases.

* The ANN_Flat architecture has the largest footprint at the lowest R-squared value (0.56).

* The SNN and SNN_Flat architectures have the smallest footprints (both reach ~3.0 x 10⁴ bytes), well below every ANN and ANN_Flat point.
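The extremes named in these observations can be read directly from the approximate coordinates. This is a small sketch under the assumption that the estimated values are accurate enough to rank; the `records` list and variable names are illustrative only.

```python
# (architecture, R², footprint in bytes); all values are estimates read off the plot.
records = [
    ("ANN", 0.59, 6.0e4),
    ("ANN", 0.64, 9.0e4),
    ("SNN", 0.58, 3.0e4),
    ("SNN", 0.62, 3.5e4),
    ("ANN_Flat", 0.56, 1.0e5),
    ("ANN_Flat", 0.64, 8.0e4),
    ("SNN_Flat", 0.62, 4.0e4),
    ("SNN_Flat", 0.64, 3.0e4),
]

# Point with the largest footprint.
largest = max(records, key=lambda r: r[2])

# All points that share the smallest footprint (ties are kept).
min_footprint = min(r[2] for r in records)
smallest = [r for r in records if r[2] == min_footprint]

# Largest SNN-family footprint versus smallest ANN-family footprint.
snn_max = max(r[2] for r in records if r[0].startswith("SNN"))
ann_min = min(r[2] for r in records if r[0].startswith("ANN"))

print("largest:", largest)
print("smallest:", smallest)
print("SNN-family max < ANN-family min:", snn_max < ann_min)
```

Since the coordinates are approximate, near-ties such as the two ~3.0 x 10⁴ points should not be read as exact equality in the underlying data.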

### Interpretation

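The data suggests that flattening the neural network architecture (comparing ANN vs. ANN_Flat and SNN vs. SNN_Flat) affects the memory footprint, though not uniformly. For the ANN, the flattened variant has the largest footprint in the plot at its lowest R² (0.56) and a footprint comparable to the unflattened ANN at R² = 0.64 (~8.0 x 10⁴ vs. ~9.0 x 10⁴ bytes); both variants top out at an R² of about 0.64. For the SNN, the flattened variant reaches a slightly higher R² (0.64 vs. 0.62) while staying in the same ~3.0 x 10⁴ to ~4.0 x 10⁴ byte range, so the impact of flattening on its footprint is small.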

The difference in footprint between the SNN and ANN architectures suggests that, in this comparison, SNNs are more memory-efficient: every SNN and SNN_Flat point lies below every ANN and ANN_Flat point. The relatively stable footprint of the SNN across its observed R-squared range (0.58 to 0.62) indicates that, for these configurations, higher R² did not come with a proportional increase in memory usage.

The outlier is the ANN_Flat data point at R² = 0.56, which has a significantly larger footprint than any other point at a similar R² value. This could indicate a specific characteristic of that model configuration or a potential anomaly in the data. Further investigation would be needed to understand the cause of this outlier.