
## Line Charts: Model Performance vs. Parameters

### Overview

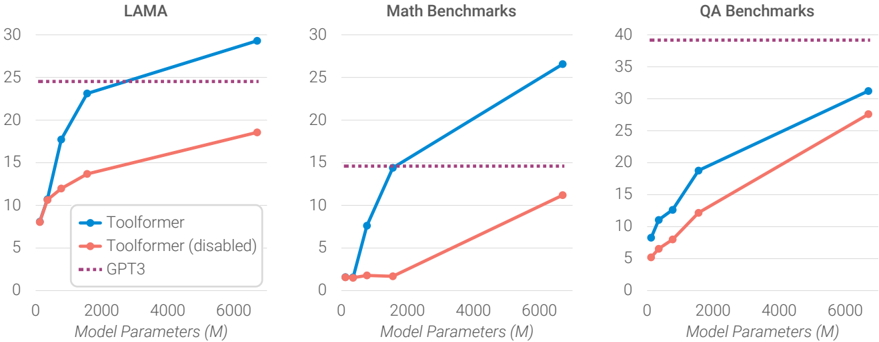

The image presents three line charts that compare "Toolformer", a variant labeled "Toolformer (disabled)", and "GPT3" across three benchmark categories: LAMA, Math Benchmarks, and QA Benchmarks. In each chart, the x-axis shows "Model Parameters (M)" from 0 to 6000, and the y-axis shows a performance score whose scale differs from chart to chart.

### Components/Axes

* **X-axis (all charts):** Model Parameters (M), scale 0 to 6000.

* **Y-axis (LAMA):** Performance score, scale 0 to 30.

* **Y-axis (Math Benchmarks):** Performance score, scale 0 to 30.

* **Y-axis (QA Benchmarks):** Performance score, scale 0 to 40.

* **Legend (all charts):**

  * Blue Line with Circle Marker: Toolformer

  * Orange Line with Diamond Marker: Toolformer (disabled)

  * Purple Dotted Line with Plus Marker: GPT3

### Detailed Analysis

**LAMA Chart:**

* **Toolformer (Blue):** Rises from approximately 8 at 0M parameters to approximately 29 at 6000M. Approximate data points: (0, 8), (2000, 18), (4000, 24), (6000, 29).

* **Toolformer (disabled) (Orange):** Rises from approximately 2 at 0M parameters to approximately 18 at 6000M. Approximate data points: (0, 2), (2000, 8), (4000, 14), (6000, 18).

* **GPT3 (Purple):** Flat at approximately 25 across the entire x-axis range. Approximate data points: (0, 25), (2000, 25), (4000, 25), (6000, 25).
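To make the Toolformer versus Toolformer (disabled) comparison concrete, the sketch below subtracts the two LAMA series point by point. It uses only the approximate readings listed above, which are visual estimates rather than the chart's underlying data. `pointwise_gap` is a hypothetical helper introduced for illustration.

```python
# Approximate LAMA readings from the chart description.
# x = model parameters (M), y = performance score; these are estimates, not source data.
LAMA = {
    "Toolformer": [(0, 8), (2000, 18), (4000, 24), (6000, 29)],
    "Toolformer (disabled)": [(0, 2), (2000, 8), (4000, 14), (6000, 18)],
    "GPT3": [(0, 25), (2000, 25), (4000, 25), (6000, 25)],
}


def pointwise_gap(series_a, series_b):
    """Hypothetical helper: return [(x, y_a - y_b)] for two series sampled at the same x values."""
    if len(series_a) != len(series_b):
        raise ValueError("series must have the same number of points")
    gaps = []
    for (xa, ya), (xb, yb) in zip(series_a, series_b):
        if xa != xb:
            raise ValueError(f"x values differ: {xa} vs {xb}")
        gaps.append((xa, ya - yb))
    return gaps


tool_gap = pointwise_gap(LAMA["Toolformer"], LAMA["Toolformer (disabled)"])
print(tool_gap)  # [(0, 6), (2000, 10), (4000, 10), (6000, 11)]
```

On these estimates, the LAMA gap between the two Toolformer variants grows from about 6 points to about 11 points across the plotted range.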

**Math Benchmarks Chart:**

* **Toolformer (Blue):** Rises from approximately 2 at 0M parameters to approximately 30 at 6000M. Approximate data points: (0, 2), (2000, 14), (4000, 24), (6000, 30).

* **Toolformer (disabled) (Orange):** Rises from approximately 1 at 0M parameters to approximately 16 at 6000M. Approximate data points: (0, 1), (2000, 6), (4000, 12), (6000, 16).

* **GPT3 (Purple):** Flat at approximately 15 across the entire x-axis range. Approximate data points: (0, 15), (2000, 15), (4000, 15), (6000, 15).
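The Math Benchmarks readings can be used to estimate where each Toolformer line crosses the flat GPT3 level. The sketch below assumes straight-line segments between the sampled points. The chart's actual curve between those points is not known, so the crossing values are rough. `first_crossing` is a hypothetical helper introduced for illustration.

```python
# Approximate Math Benchmarks readings (x = model parameters in M).
MATH = {
    "Toolformer": [(0, 2), (2000, 14), (4000, 24), (6000, 30)],
    "Toolformer (disabled)": [(0, 1), (2000, 6), (4000, 12), (6000, 16)],
}
GPT3_MATH = 15  # level of the flat GPT3 line


def first_crossing(points, level):
    """Hypothetical helper: first x at which a piecewise-linear series reaches `level`.

    Interpolates linearly between consecutive points and detects only upward
    crossings. Returns None if the level is never reached in the sampled range.
    """
    if points[0][1] >= level:
        return float(points[0][0])
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        if y0 < level <= y1:
            return x0 + (level - y0) * (x1 - x0) / (y1 - y0)
    return None


for name, pts in MATH.items():
    print(name, first_crossing(pts, GPT3_MATH))
# Toolformer 2200.0
# Toolformer (disabled) 5500.0
```

Under this linear assumption, Toolformer reaches the GPT3 level at roughly 2200M parameters and the disabled variant at roughly 5500M.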

**QA Benchmarks Chart:**

* **Toolformer (Blue):** Rises from approximately 6 at 0M parameters to approximately 32 at 6000M. Approximate data points: (0, 6), (2000, 16), (4000, 26), (6000, 32).

* **Toolformer (disabled) (Orange):** Rises from approximately 5 at 0M parameters to approximately 22 at 6000M. Approximate data points: (0, 5), (2000, 12), (4000, 18), (6000, 22).

* **GPT3 (Purple):** Flat at approximately 20 across the entire x-axis range. Approximate data points: (0, 20), (2000, 20), (4000, 20), (6000, 20).
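One way to quantify "slopes upward" for the QA Benchmarks series is to fit an ordinary least-squares slope to the four approximate points. Choosing least squares is an assumption made for this sketch. A simple endpoint slope would give slightly different numbers, for example 26 / 6 ≈ 4.33 points per 1000M for Toolformer. `ols_slope` is a hypothetical helper.

```python
# Approximate QA Benchmarks readings (x = model parameters in M).
XS = [0, 2000, 4000, 6000]
QA = {
    "Toolformer": [6, 16, 26, 32],
    "Toolformer (disabled)": [5, 12, 18, 22],
    "GPT3": [20, 20, 20, 20],
}


def ols_slope(xs, ys):
    """Hypothetical helper: ordinary least-squares slope of ys regressed on xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    return cov / var


for name, ys in QA.items():
    # Report the slope as score points gained per 1000M parameters.
    print(f"{name}: {ols_slope(XS, ys) * 1000:.2f} points per 1000M")
```

On these estimates, Toolformer gains about 4.4 points per 1000M parameters, the disabled variant about 2.85, and GPT3 0.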

### Key Observations

* In all three benchmarks, Toolformer consistently outperforms Toolformer (disabled).

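* GPT3 is drawn as a flat line (about 25 on LAMA, 15 on Math Benchmarks, and 20 on QA Benchmarks) that does not change along the x-axis. This suggests that it marks a single reference score rather than GPT3 results measured at different parameter counts.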

* Toolformer shows a strong positive correlation between model parameters and performance in all three benchmarks.

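* Toolformer starts well below the GPT3 line in every benchmark and overtakes it as model parameters increase. The crossover falls between roughly 4000 and 6000 on LAMA and between 2000 and 4000 on the Math and QA Benchmarks. At 6000, Toolformer leads GPT3 by about 4 points on LAMA, 15 on Math Benchmarks, and 12 on QA Benchmarks.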
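To check how the distance between Toolformer and the GPT3 line changes with model size, the sketch below subtracts each benchmark's flat GPT3 level from the approximate Toolformer readings listed in the Detailed Analysis. All values are visual estimates, and the dictionary names are illustrative.

```python
# Approximate Toolformer readings per benchmark and the flat GPT3 level for each.
XS = [0, 2000, 4000, 6000]  # model parameters (M)
TOOLFORMER = {
    "LAMA": [8, 18, 24, 29],
    "Math Benchmarks": [2, 14, 24, 30],
    "QA Benchmarks": [6, 16, 26, 32],
}
GPT3 = {"LAMA": 25, "Math Benchmarks": 15, "QA Benchmarks": 20}

# Positive values mean Toolformer is above the GPT3 line at that x.
gaps = {name: [y - GPT3[name] for y in ys] for name, ys in TOOLFORMER.items()}

for name, g in gaps.items():
    print(f"{name}: {list(zip(XS, g))}")
# LAMA: [(0, -17), (2000, -7), (4000, -1), (6000, 4)]
# Math Benchmarks: [(0, -13), (2000, -1), (4000, 9), (6000, 15)]
# QA Benchmarks: [(0, -14), (2000, -4), (4000, 6), (6000, 12)]
```

In every benchmark the difference changes sign. The gap to GPT3 first narrows, then reverses in Toolformer's favor. The largest final lead is on Math Benchmarks and the smallest is on LAMA.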

### Interpretation

The data suggests that increasing model parameters substantially improves Toolformer's performance across all three benchmark categories. Because Toolformer outperforms its disabled variant at every sampled size, the functionality turned off in "Toolformer (disabled)" appears to account for a large share of the gain. GPT3, however, appears only as a horizontal line. The chart therefore does not show how GPT3's performance changes with model size, and a flat reference line alone cannot establish that GPT3 has plateaued or is limited by its architecture or training data. The chart supports a narrower conclusion: Toolformer improves steadily with parameter count, overtakes the GPT3 reference in all three benchmarks within the plotted range, and ends furthest ahead on the Math and QA Benchmarks.