TECHNICAL ASSET FINGERPRINT

bedad4515c70a5ea4d60c955



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Diagram: Knowledge Representation and Usage

### Overview

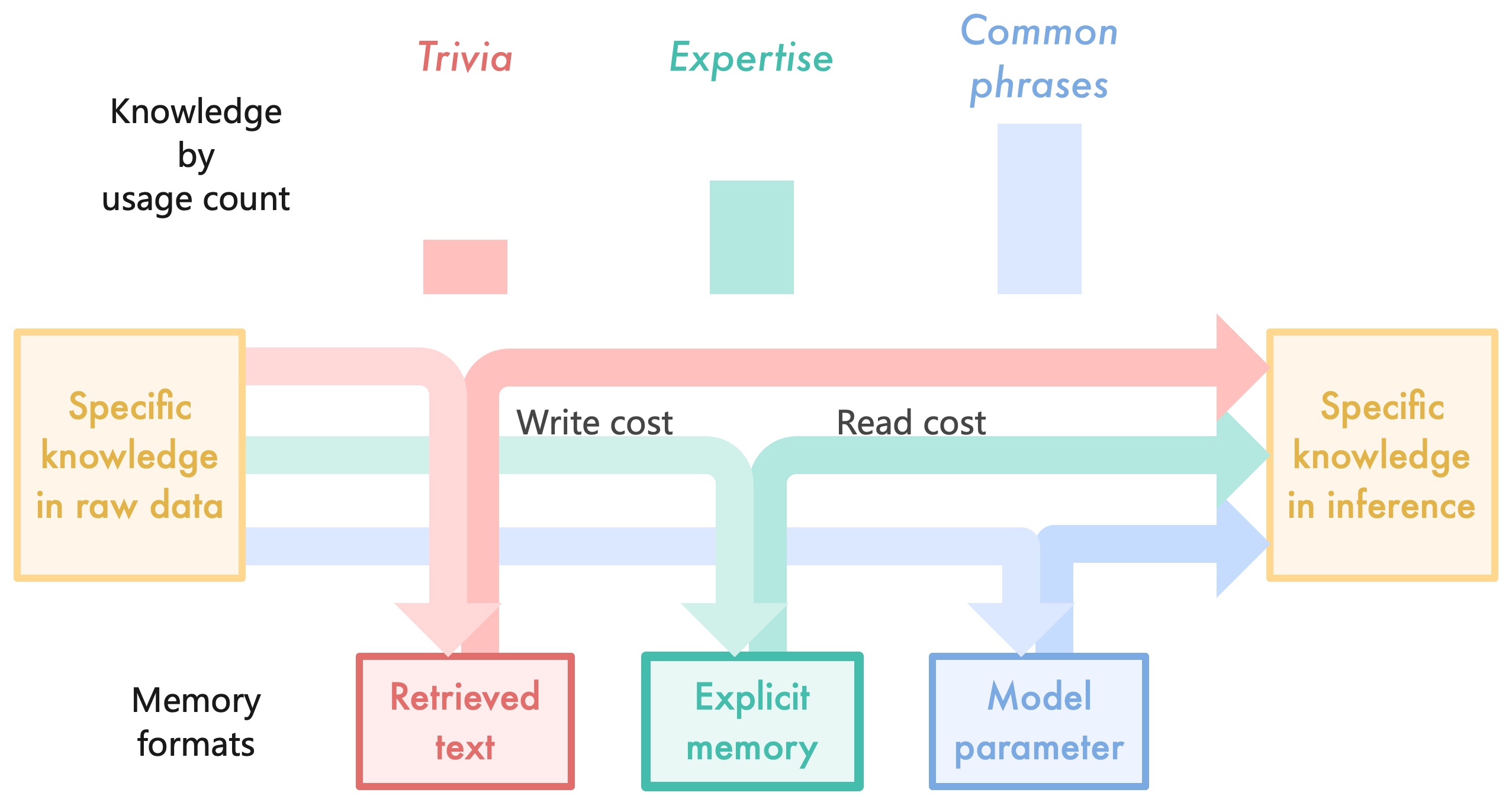

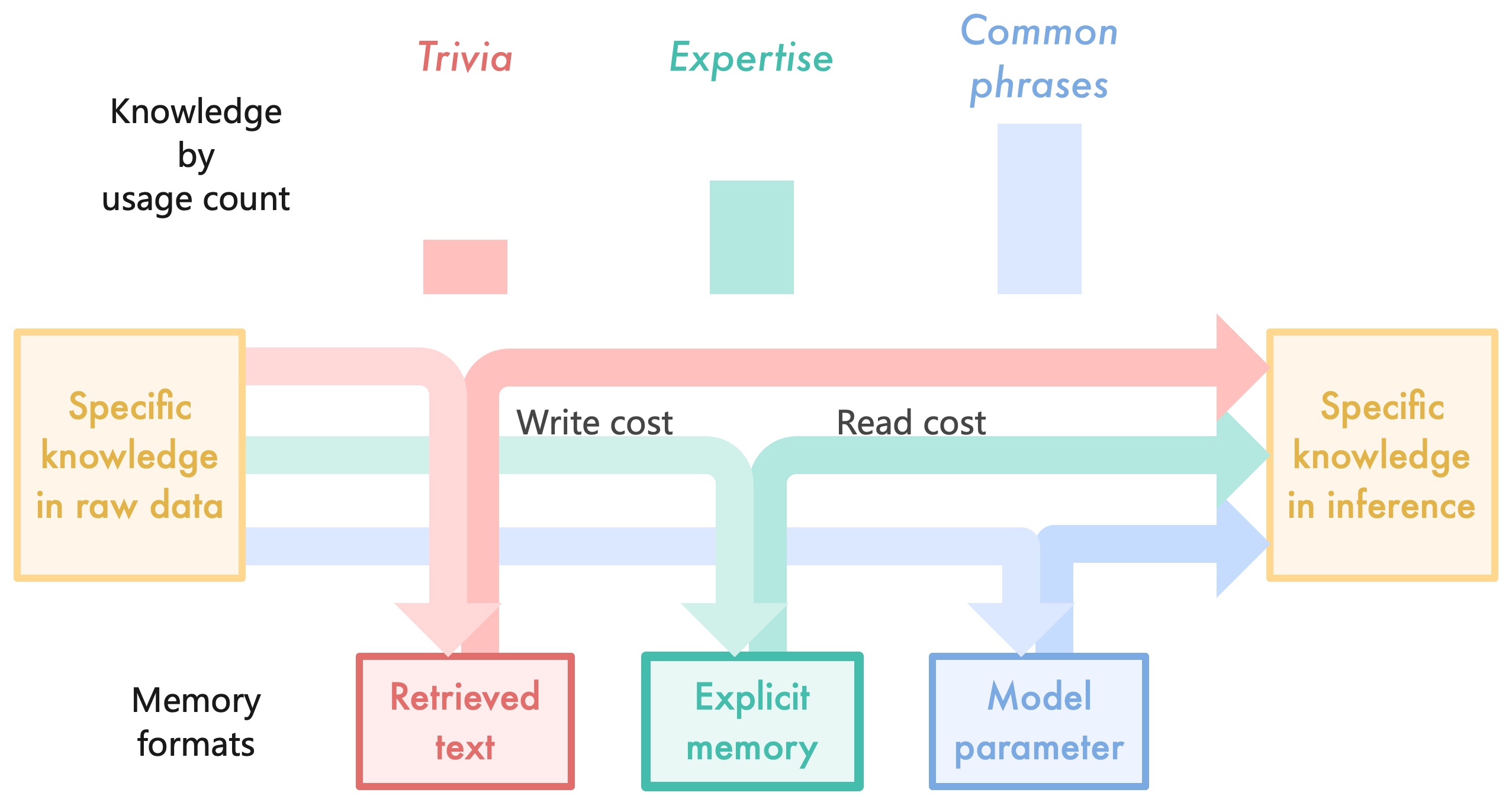

The image is a diagram illustrating how knowledge is represented and used, categorized by usage count. It shows the flow of information from "Specific knowledge in raw data" to "Specific knowledge in inference" through different pathways representing "Trivia," "Expertise," and "Common phrases." The diagram also highlights the "Write cost" and "Read cost" associated with different memory formats.

### Components/Axes

* **Title:** Knowledge by usage count

* **Source Node (Left):** Specific knowledge in raw data (contained in a light orange box)

* **Destination Node (Right):** Specific knowledge in inference (contained in a light orange box)

* **Memory Formats (Bottom Left):** Memory formats

* **Intermediate Nodes (Bottom):**
    * Retrieved text (contained in a red box)
    * Explicit memory (contained in a green box)
    * Model parameter (contained in a blue box)

* **Categories (Top):**
    * Trivia (red)
    * Expertise (green)
    * Common phrases (blue)

* **Arrows:** Represent the flow of information, colored according to the category.
    * Red arrows: Trivia
    * Green arrows: Expertise
    * Blue arrows: Common phrases

* **Labels on Arrows:**
    * Write cost (associated with Trivia and Expertise)
    * Read cost (associated with Expertise and Common phrases)

### Detailed Analysis

* **Specific knowledge in raw data:** Located on the left side, represented by a light orange box.

* **Specific knowledge in inference:** Located on the right side, represented by a light orange box.

* **Trivia (Red):**
    * A small red rectangle is positioned above the "Retrieved text" box, indicating the relative usage count of trivia.
    * A red arrow originates from the "Specific knowledge in raw data" box, splits into two paths: one going down to "Retrieved text" and the other going directly to "Specific knowledge in inference."
    * The arrow segment going from the split to "Retrieved text" is labeled "Write cost".

* **Expertise (Green):**
    * A green rectangle is positioned above the "Explicit memory" box, indicating the relative usage count of expertise.
    * A green arrow originates from the "Specific knowledge in raw data" box, splits into two paths: one going down to "Explicit memory" and the other going directly to "Specific knowledge in inference."
    * The arrow segment going from the split to "Explicit memory" is labeled "Write cost".
    * The arrow segment going from "Explicit memory" to "Specific knowledge in inference" is labeled "Read cost".

* **Common phrases (Blue):**
    * A blue rectangle is positioned above the "Model parameter" box, indicating the relative usage count of common phrases.
    * A blue arrow originates from the "Specific knowledge in raw data" box, goes down to "Model parameter" and then to "Specific knowledge in inference."
    * The arrow segment going from "Model parameter" to "Specific knowledge in inference" is labeled "Read cost".

* **Memory Formats:** Located at the bottom, indicating the type of memory associated with each category.
    * Retrieved text (red box)
    * Explicit memory (green box)
    * Model parameter (blue box)

### Key Observations

* The diagram illustrates the flow of knowledge from raw data to inference, categorized by usage count.

* Trivia has a direct path from raw data to inference and also involves retrieved text.

* Expertise involves explicit memory and has both write and read costs.

* Common phrases involve model parameters and have a read cost.

* The relative size of the rectangles above each category ("Trivia," "Expertise," "Common phrases") indicates the relative usage count, with "Common phrases" having the highest usage count, followed by "Expertise," and then "Trivia."

### Interpretation

The diagram suggests that different types of knowledge are stored and accessed in different ways. Trivia, the least-used category, is routed through retrieved text. Expertise is routed through explicit memory and carries both a write cost and a read cost. Common phrases, the most-used category, are stored as model parameters and carry a read cost at inference. The "Write cost" and "Read cost" labels indicate that storing and retrieving knowledge each consume computational or other resources, so the choice of memory format trades storage cost against access cost according to how often the knowledge is used.


EXPERT: gemini-3.1-pro-preview VERSION 1

RUNTIME: gemini/gemini-3.1-pro-preview

INTEL_VERIFIED

## Conceptual Diagram: Knowledge Processing, Usage, and Memory Formats

### Overview

This image is a conceptual flow diagram combined with a qualitative bar chart. It illustrates how different categories of knowledge transition from raw data to inference within a system (likely a Large Language Model or AI architecture). It correlates the frequency of knowledge usage with the preferred memory format for storing that knowledge, highlighting the trade-offs between "Write cost" and "Read cost."

### Components/Axes

**1. Top Section (Bar Chart):**

* **Y-Axis Label (Implied):** "Knowledge by usage count" (Located top-left).

* **X-Axis Categories & Color Legend:**
    * **Red/Pink:** "Trivia"
    * **Green/Teal:** "Expertise"
    * **Blue:** "Common phrases"

**2. Middle Section (Flow Diagram):**

* **Source Node (Left):** A yellow-outlined box containing the text: "Specific knowledge in raw data".

* **Destination Node (Right):** A yellow-outlined box containing the text: "Specific knowledge in inference".

* **Flow Lines:** Three colored pathways (Red, Green, Blue) connecting the source to the destination, with branches routing downward and upward.

* **Process Labels:**
    * "Write cost" (Positioned above the downward-pointing arrows).
    * "Read cost" (Positioned above the upward-pointing arrows).

**3. Bottom Section (Memory Formats):**

* **Category Label:** "Memory formats" (Located bottom-left).

* **Storage Nodes:**
    * **Red/Pink Box:** "Retrieved text"
    * **Green/Teal Box:** "Explicit memory"
    * **Blue Box:** "Model parameter"

### Detailed Analysis

**Component 1: Knowledge by Usage Count (Top Chart)**

* **Visual Trend:** The bars increase in height from left to right.

* **Data Points (Approximate relative heights):**
    * **Trivia (Red):** Lowest usage count (approx. 1 unit high).
    * **Expertise (Green):** Medium usage count (approx. 3 units high).
    * **Common phrases (Blue):** Highest usage count (approx. 5.5 units high).

**Component 2 & 3: Flow Routing and Memory Formats (Middle & Bottom)**

* **Visual Trend:** The diagram uses the *thickness* of the flow lines to indicate the dominant pathway for each knowledge type. All three colors start at "Specific knowledge in raw data" and end at "Specific knowledge in inference," but their routing through the memory formats differs significantly based on line weight.

* **Red Pathway (Trivia):**
    * *Horizontal (Direct) Path:* Very thin line.
    * *Memory Loop:* A thick arrow flows down (Write cost) into "Retrieved text", and a thick arrow flows up (Read cost) from "Retrieved text" to join the path to inference.
    * *Extraction:* Trivia relies heavily on the "Retrieved text" memory format rather than a direct/internalized path.

* **Green Pathway (Expertise):**
    * *Horizontal (Direct) Path:* Medium thickness line.
    * *Memory Loop:* A medium thickness arrow flows down (Write cost) into "Explicit memory", and a medium thickness arrow flows up (Read cost) from "Explicit memory" to inference.
    * *Extraction:* Expertise utilizes a balanced approach, splitting the flow evenly between direct pathways and "Explicit memory."

* **Blue Pathway (Common phrases):**
    * *Horizontal (Direct) Path:* Very thick line.
    * *Memory Loop:* A thin arrow flows down (Write cost) into "Model parameter", and a thin arrow flows up (Read cost) from "Model parameter" to inference.
    * *Extraction:* Common phrases rely almost entirely on the direct horizontal pathway, with minimal active routing through the "Model parameter" loop during the inference phase (implying the knowledge is already baked into the direct path).

### Key Observations

1. **Inverse Relationship:** There is an inverse relationship between "Usage count" and reliance on externalized memory formats (like "Retrieved text"). The lowest usage item (Trivia) has the thickest routing through its respective memory box.

2. **Direct Relationship:** There is a direct relationship between "Usage count" and the thickness of the direct horizontal flow. The highest usage item (Common phrases) has the thickest direct line to inference.

3. **Cost Association:** The downward arrows represent the "Write cost" (storing the data), and the upward arrows represent the "Read cost" (accessing the data for inference).

### Interpretation

This diagram illustrates the architectural trade-offs in Large Language Models (LLMs) regarding how different types of information should be stored and accessed, specifically comparing Parametric Memory (internal model weights) with Non-Parametric Memory (external databases, like in Retrieval-Augmented Generation or RAG).

* **Trivia (Low Usage):** Because trivia is rarely asked for, it is inefficient to spend high computational "Write cost" to train it directly into the model's core parameters. Instead, it is better stored externally as "Retrieved text." When needed, the system incurs a "Read cost" to fetch it. The thick arrows through the memory box show this is the primary mechanism for handling rare facts.

* **Common Phrases (High Usage):** Because common phrases are used constantly, they must be available with as little delay as possible. The system therefore pays the high "Write cost" upfront during training to embed them directly into the "Model parameter." During inference, the flow largely bypasses the read/write loop (thin arrows) and runs directly to inference (thick horizontal arrow), representing near-instant recall from the model's internal weights.

* **Expertise (Medium Usage):** This represents domain-specific knowledge that is used moderately. It utilizes a hybrid approach ("Explicit memory"), balancing the costs of training it into the model versus retrieving it on the fly.

In essence, the diagram argues that AI systems should not memorize everything equally. High-frequency data should be internalized (parameters), while low-frequency data should be externalized (retrieval), optimizing the balance between training costs (write) and inference latency (read).
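The trade-off described above can be sketched as a toy cost model: assume each memory format has a one-time write cost and a per-use read cost, and pick the format with the lowest total for a given usage count. The class name, the function name, and every number below are hypothetical, chosen only so that the ordering matches the description (retrieval is cheapest to write and costliest to read, parameters the reverse). None of them come from the diagram.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MemoryFormat:
    name: str
    write_cost: int  # one-time cost to store a piece of knowledge (hypothetical units)
    read_cost: int   # cost paid on every use at inference (hypothetical units)

    def total_cost(self, usage_count: int) -> int:
        # Assumed linear model: pay the write cost once, the read cost per use.
        return self.write_cost + usage_count * self.read_cost


# Hypothetical numbers; only their relative ordering follows the description.
FORMATS = [
    MemoryFormat("Retrieved text", write_cost=0, read_cost=10),
    MemoryFormat("Explicit memory", write_cost=20, read_cost=2),
    MemoryFormat("Model parameter", write_cost=200, read_cost=1),
]


def cheapest_format(usage_count: int) -> str:
    """Return the name of the format with the lowest total cost."""
    return min(FORMATS, key=lambda f: f.total_cost(usage_count)).name


for label, n in [("Trivia", 1), ("Expertise", 10), ("Common phrases", 1000)]:
    print(f"{label:15s} usage={n:5d} -> {cheapest_format(n)}")
```

With these made-up costs, a usage count of 1 selects retrieved text, 10 selects explicit memory, and 1000 selects model parameters.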


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: Knowledge Flow and Cost Analysis

### Overview

The image is a diagram illustrating the flow of knowledge from different sources (Trivia, Expertise, Common phrases) to specific knowledge in raw data and inference, along with associated costs (Write cost, Read cost). The diagram uses colored arrows to represent the flow and rectangular boxes to represent knowledge types and costs.

### Components/Axes

The diagram consists of the following components:

* **Knowledge Sources (Top):**
    * Trivia (represented by a pink rectangle)
    * Expertise (represented by a teal rectangle)
    * Common phrases (represented by a blue rectangle)

* **Intermediate Stages (Center):**
    * Write cost (represented by a pink rectangle)
    * Read cost (represented by a teal rectangle)

* **Knowledge Destinations (Sides):**
    * Specific knowledge in raw data (represented by a yellow rectangle on the left)
    * Specific knowledge in inference (represented by a green rectangle on the right)

* **Memory Formats (Bottom-Left):**
    * Memory formats (represented by a yellow rectangle)

* **Data Storage (Bottom):**
    * Retrieved text (represented by a pink rectangle)
    * Explicit memory (represented by a teal rectangle)
    * Model parameter (represented by a blue rectangle)

* **Arrows:** Colored arrows indicate the flow of knowledge, with colors corresponding to the knowledge sources.

### Detailed Analysis

The diagram shows the following knowledge flows:

* **Trivia (Pink):** Flows from Trivia to Write cost, then to Specific knowledge in raw data, and finally to Retrieved text.

* **Expertise (Teal):** Flows from Expertise to Read cost, then to Specific knowledge in raw data, and finally to Explicit memory.

* **Common phrases (Blue):** Flows from Common phrases to Read cost, then to Specific knowledge in inference, and finally to Model parameter.

* **Specific knowledge in raw data (Yellow):** Receives input from Trivia (via Write cost) and Expertise (via Read cost). It also connects to Memory formats.

* **Specific knowledge in inference (Green):** Receives input from Common phrases (via Read cost).

* **Memory formats (Yellow):** Connects to Specific knowledge in raw data.

The diagram contains no numerical data. Arrow thickness could encode the relative strength or frequency of each flow, but all arrows appear to be of equal thickness, which would suggest equal flow.

### Key Observations

* The diagram highlights a distinction between knowledge used in "raw data" versus "inference."

* "Write cost" is associated with Trivia, while "Read cost" is associated with both Expertise and Common phrases.

* The diagram suggests that Trivia is primarily used for building knowledge in raw data, while Expertise and Common phrases contribute to knowledge used in inference.

* The diagram does not provide any quantitative information about the costs or the amount of knowledge flowing.

### Interpretation

The diagram illustrates a model of knowledge acquisition and utilization. It suggests that different types of knowledge (Trivia, Expertise, Common phrases) are processed differently and contribute to different stages of a system. The "Write cost" and "Read cost" represent the computational or resource requirements for incorporating and accessing this knowledge. The separation between "raw data" and "inference" suggests a two-stage process: first, knowledge is accumulated and stored (raw data), and then it is used for reasoning or decision-making (inference).

The diagram could be interpreted as a simplified representation of a knowledge-based system, such as a large language model. Trivia might represent factual information, Expertise might represent domain-specific knowledge, and Common phrases might represent linguistic patterns. The diagram highlights the importance of managing both the acquisition and utilization of knowledge, as well as the associated costs. The lack of quantitative data suggests that the diagram is intended to convey a conceptual framework rather than precise measurements. The diagram is a high-level overview and does not delve into the specifics of how these costs are calculated or how the knowledge is represented.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Knowledge Processing Pathways in AI Systems

### Overview

This image is a conceptual diagram illustrating how different types of knowledge ("Trivia," "Expertise," "Common phrases") are processed and stored in an AI system. It maps the flow from raw data to inference-ready knowledge, highlighting the associated computational costs and the memory formats used for storage. The diagram combines a simple bar chart with a flowchart to show relationships and pathways.

### Components/Axes

**Top Section - Bar Chart:**

* **Title/Label:** "Knowledge by usage count" (positioned top-left).

* **Categories (X-axis):** Three distinct knowledge types, each with a corresponding colored bar.
    1. **Trivia** (Label: Pink text, top-left). Bar: A short, solid pink rectangle.
    2. **Expertise** (Label: Teal text, top-center). Bar: A medium-height, solid teal rectangle.
    3. **Common phrases** (Label: Light blue text, top-right). Bar: The tallest, solid light blue rectangle.

* **Y-axis:** Implied to represent "usage count" or frequency, with bar height indicating relative magnitude. No numerical scale is provided.

**Middle Section - Flow Diagram:**

* **Source Node (Left):** A yellow-bordered box labeled "Specific knowledge in raw data" (text in gold).

* **Destination Node (Right):** A yellow-bordered box labeled "Specific knowledge in inference" (text in gold).

* **Flow Paths:** Three colored, semi-transparent arrows flow from the source to the destination, each splitting to also feed into a corresponding memory format box below.
    1. **Pink Path:** Associated with "Trivia." It has a label "Write cost" positioned above its initial segment.
    2. **Teal Path:** Associated with "Expertise." It has a label "Read cost" positioned above its initial segment.
    3. **Light Blue Path:** Associated with "Common phrases." No specific cost label is attached to this path segment.

**Bottom Section - Memory Formats:**

* **Label:** "Memory formats" (positioned bottom-left).

* **Three storage boxes,** each receiving an arrow from the flow paths above:
    1. **Retrieved text** (Red-bordered box, pink text). Receives the pink arrow.
    2. **Explicit memory** (Teal-bordered box, teal text). Receives the teal arrow.
    3. **Model parameter** (Blue-bordered box, light blue text). Receives the light blue arrow.

### Detailed Analysis

The diagram establishes a clear mapping between knowledge types, memory formats, and processing costs:

* **Trivia** is characterized by low usage count (shortest bar). It is processed via a pathway with a noted "Write cost" and is stored in the "Retrieved text" memory format.

* **Expertise** has medium usage count (medium bar). Its pathway is associated with a "Read cost" and it is stored as "Explicit memory."

* **Common phrases** have the highest usage count (tallest bar). They flow through a pathway without a specific cost label in this diagram and are stored directly as "Model parameter."

The flow indicates that "Specific knowledge in raw data" is transformed into "Specific knowledge in inference" through these three parallel channels, each utilizing a different storage mechanism with different implied computational trade-offs (write vs. read cost).

### Key Observations

1. **Usage vs. Storage Integration:** The diagram suggests that the more frequently knowledge is used, the more tightly its storage format is integrated into the model. The most frequently used knowledge ("Common phrases") is stored in the most integrated format ("Model parameter"), while the least used ("Trivia") is stored in the most external format ("Retrieved text").

2. **Cost Attribution:** "Write cost" is explicitly linked to the Trivia/Retrieved text pathway, implying that storing this information has a significant initial overhead. "Read cost" is linked to the Expertise/Explicit memory pathway, suggesting that accessing this structured knowledge has a recurring computational cost.

3. **Color-Coded Consistency:** The diagram uses a strict color-coding scheme (pink, teal, light blue) to visually link each knowledge type (top), its flow path (middle), and its final memory format (bottom), ensuring clear traceability.

### Interpretation

This diagram presents a model for understanding how an AI system, likely a large language model, allocates different types of information across its architecture. It argues that not all knowledge is stored equally.

* **"Trivia" (low-frequency facts)** is treated like an external reference. It's costly to index ("Write cost") but can be looked up when needed ("Retrieved text"), similar to a database or retrieval-augmented generation (RAG) system.

* **"Expertise" (structured, mid-frequency knowledge)** is stored in a more accessible but still distinct format ("Explicit memory"), akin to a knowledge graph or curated fact base. The "Read cost" suggests querying this system is non-trivial.

* **"Common phrases" (high-frequency linguistic patterns)** are the most efficiently utilized. They are compressed and internalized directly into the model's weights ("Model parameter"), making them instantly available during inference with minimal access latency.

The overarching insight is that efficient AI systems employ a **hybrid memory architecture**. They balance the high capacity but slow access of external retrieval (for trivia) with the fast, integrated access of model parameters (for common patterns), using intermediate explicit memory for structured expertise. The diagram visually argues that the "cost" of knowledge (in compute terms) is a function of both its usage frequency and the chosen storage format.
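One way to make the hybrid-architecture claim concrete is to compare, under a toy cost model, storing every item in a single format against letting each item use whichever format is cheapest for its usage count. The cost pairs and usage counts below are hypothetical and are not taken from the diagram; the sketch only assumes that total cost equals write cost plus usage count times read cost.

```python
# Hypothetical (write_cost, read_cost) per memory format, in arbitrary units.
COSTS = {
    "Retrieved text": (0, 10),
    "Explicit memory": (20, 2),
    "Model parameter": (200, 1),
}


def cost(fmt: str, usage_count: int) -> int:
    """Assumed linear cost: write once, read on every use."""
    write, read = COSTS[fmt]
    return write + usage_count * read


# Hypothetical usage counts for a handful of knowledge items.
usage_counts = [1, 2, 10, 50, 1000, 5000]

# Total cost if every item is forced into the same format.
single_format_totals = {
    fmt: sum(cost(fmt, n) for n in usage_counts) for fmt in COSTS
}

# Total cost if each item independently uses its cheapest format.
hybrid_total = sum(min(cost(fmt, n) for fmt in COSTS) for n in usage_counts)

for fmt, total in single_format_totals.items():
    print(f"all items as {fmt}: {total}")
print(f"hybrid allocation: {hybrid_total}")
```

By construction the hybrid total can never exceed the best single-format total; with these made-up numbers it is strictly lower (6590 versus 7263 for the all-parameters allocation).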


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Knowledge Processing Architecture

### Overview

This diagram illustrates the flow of specific knowledge from raw data to inference through three memory formats: Retrieved text, Explicit memory, and Model parameter. It categorizes knowledge by usage frequency (Trivia, Expertise, Common phrases) and visualizes associated write/read costs.

### Components/Axes

- **Key Elements**:
    - **Knowledge Types** (Trivia, Expertise, Common phrases) with usage count bars (pink, teal, blue)
    - **Memory Formats**:
        - Retrieved text (pink)
        - Explicit memory (teal)
        - Model parameter (blue)
    - **Knowledge States**:
        - Specific knowledge in raw data (left)
        - Specific knowledge in inference (right)
    - **Cost Indicators**:
        - Write cost (pink arrow)
        - Read cost (teal arrow)

- **Spatial Layout**:
    - Top: Knowledge types with usage count bars
    - Middle: Three memory format boxes connected by bidirectional arrows
    - Bottom: Memory format labels
    - Arrows color-coded to match knowledge types

### Detailed Analysis

1. **Knowledge Usage Distribution**:
    - Trivia (pink): Smallest usage count bar
    - Expertise (teal): Medium-sized bar
    - Common phrases (blue): Largest bar

2. **Memory Format Connections**:
    - **Retrieved text** (pink):
        - Receives Trivia knowledge via write cost
        - Feeds into inference via read cost
    - **Explicit memory** (teal):
        - Receives Expertise knowledge via write cost
        - Feeds into inference via read cost
    - **Model parameter** (blue):
        - Receives Common phrases knowledge via write cost
        - Feeds into inference via read cost

3. **Cost Relationships**:
    - Write costs (arrows from raw data to memory formats)
    - Read costs (arrows from memory formats to inference)
    - Color-coded arrows match knowledge type colors

### Key Observations

- Common phrases dominate usage (largest bar) and directly influence model parameters

- Trivia knowledge has minimal usage but still requires both write/read pathways

- Expertise knowledge shows balanced usage and memory engagement

- All knowledge types converge on "specific knowledge in inference"

### Interpretation

This architecture suggests:

1. **Hierarchical Knowledge Processing**: More frequently used knowledge (Common phrases) becomes embedded in model parameters, while less common knowledge (Trivia) remains in retrieved text

2. **Cost Efficiency**: Explicit memory appears optimized for Expertise knowledge, balancing write/read costs

3. **Inference Integration**: All knowledge types ultimately contribute to inference, but through different pathways

4. **Scalability Implications**: The system design accommodates varying knowledge frequencies through specialized memory formats

The diagram emphasizes that knowledge processing efficiency depends on both usage frequency and memory format suitability, with model parameters serving as the primary repository for high-frequency knowledge.
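If each format's total cost is assumed to be linear in usage (write cost plus usage count times read cost), the usage range in which a format is cheapest is bounded by break-even points with its neighbours. The sketch below computes those points. The function name and the cost pairs are hypothetical illustrations, not values from the diagram.

```python
def break_even_usage(a: tuple, b: tuple) -> float:
    """Usage count at which formats a and b cost the same.

    Each format is a (write_cost, read_cost) pair. Setting
    w_a + n * r_a == w_b + n * r_b and solving for n gives
    n = (w_b - w_a) / (r_a - r_b).
    """
    (w_a, r_a), (w_b, r_b) = a, b
    if r_a == r_b:
        raise ValueError("equal read costs: the cost lines never cross")
    return (w_b - w_a) / (r_a - r_b)


# Hypothetical (write_cost, read_cost) pairs in arbitrary units.
retrieved_text = (0, 10)
explicit_memory = (20, 2)
model_parameter = (200, 1)

# Below `lower`, retrieved text is cheaper than explicit memory;
# above `upper`, model parameters are cheaper than explicit memory.
lower = break_even_usage(retrieved_text, explicit_memory)
upper = break_even_usage(explicit_memory, model_parameter)

print(f"explicit memory is cheapest for usage counts between {lower} and {upper}")
```

Under these assumed costs, explicit memory is the cheapest format for usage counts between 2.5 and 180, which is one way to read the claim that it suits mid-frequency knowledge.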
