## Diagram: Biased vs. Debiased Visual Question Answering (VQA)

### Overview

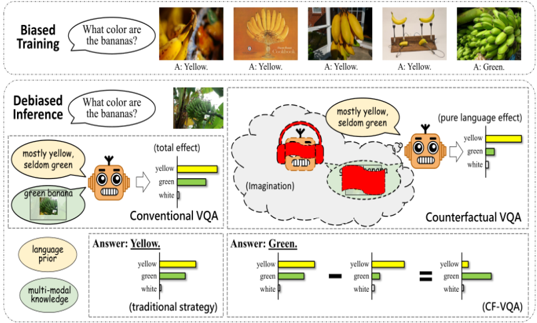

This diagram illustrates the difference between conventional Visual Question Answering (VQA) and a Counterfactual VQA (CF-VQA) approach, highlighting how biases in training data can affect inference and how CF-VQA attempts to mitigate these biases. The diagram uses images of bananas and a robot to demonstrate the concept.

### Components/Axes

The diagram is divided into three main sections: "Biased Training", "Debiased Inference", and a comparison of "Conventional VQA" vs. "Counterfactual VQA". Each section contains images, text, and bar charts.

* **Text:** "What color are the bananas?" appears in both the "Biased Training" and "Debiased Inference" sections.

* **Images:** Several images of bananas are shown, varying in color and ripeness. A robot head is also featured in the "Debiased Inference" section.

* **Bar Charts:** Bar charts show the distribution of predicted answers (yellow, green, white) for the bananas. Each bar is drawn in the color it represents.

* **Labels:** "A: Yellow", "A: Green", "mostly yellow, seldom green", "total effect", "pure language effect", "language knowledge", "multi-modal knowledge", "traditional strategy", "CF-VQA".

* **Annotations:** Speech bubbles, thought bubbles, and dashed lines are used to indicate the flow of information and the reasoning process.

### Detailed Analysis

**Biased Training:**

* Five images of bananas are shown. The first four images predominantly feature yellow bananas, and the answers provided are all "A: Yellow". The fifth image shows green bananas, and the answer is "A: Green".

* This section demonstrates how the model is trained on a dataset where yellow bananas are overrepresented, leading to a bias towards predicting "yellow" even when the bananas are green.
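The imbalance described above can be made concrete with a minimal sketch. It assumes a toy dataset of exactly the five answers in this section (four "yellow", one "green"); the names `training_answers` and `answer_prior` are hypothetical and do not come from the diagram.

```python
from collections import Counter

# Toy training set mirroring the five banana examples described above:
# four answered "yellow", one answered "green". (Illustrative assumption.)
training_answers = ["yellow", "yellow", "yellow", "yellow", "green"]


def answer_prior(answers):
    """Return the empirical answer distribution, ignoring the images entirely."""
    counts = Counter(answers)
    total = sum(counts.values())
    return {answer: count / total for answer, count in counts.items()}


prior = answer_prior(training_answers)
print(prior)  # {'yellow': 0.8, 'green': 0.2}

# A question-only baseline always returns the most frequent answer,
# which is the shortcut a biased model can fall back on.
language_only_guess = max(prior, key=prior.get)
print(language_only_guess)  # yellow
```

A model that exploits this prior can answer "yellow" without looking at the image, which matches the "mostly yellow, seldom green" label in the diagram.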

**Debiased Inference:**

* An image of green bananas is shown.

* A robot head is depicted with headphones and a thought bubble containing a red rectangle. The thought bubble is labeled "Imagination".

* The text "mostly yellow, seldom green" appears near the robot head.

* A bar chart labeled "total effect" shows a distribution of colors: yellow (approximately 70%), green (approximately 20%), and white (approximately 10%).

* A bar chart labeled "pure language effect" shows a distribution of colors: yellow (approximately 60%), green (approximately 30%), and white (approximately 10%).

**Conventional VQA vs. Counterfactual VQA:**

* **Conventional VQA:** A robot head is shown with a bar chart labeled "traditional strategy". The chart shows a distribution of colors: yellow (approximately 70%), green (approximately 20%), and white (approximately 10%). The output is labeled "Answer: Yellow".

* **Counterfactual VQA:** A robot head is shown with a bar chart labeled "CF-VQA". The chart shows a distribution of colors: yellow (approximately 30%), green (approximately 60%), and white (approximately 10%). The output is labeled "Answer: Green".

* A dashed line connects the "traditional strategy" bar chart to the "CF-VQA" bar chart, indicating a transformation or adjustment.

* Two leaf-shaped icons are shown, labeled "language knowledge" and "multi-modal knowledge".

### Key Observations

* The "Biased Training" section shows an imbalanced dataset (four "Yellow" answers to one "Green"), from which a model can learn to associate bananas with yellow regardless of the image.

* The "Debiased Inference" section suggests that the CF-VQA approach attempts to account for the bias by considering counterfactual scenarios (imagining the bananas as different colors).

* The comparison between "Conventional VQA" and "Counterfactual VQA" demonstrates that CF-VQA can provide a more accurate answer ("Green") by mitigating the bias.

* The bar charts visually represent the shift in predicted color distributions, with CF-VQA showing a higher probability of predicting "Green" for green bananas.
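One way to read the "total effect" and "pure language effect" labels is that the debiased score is the first minus the second. The diagram's labels suggest this but do not state it, so the sketch below is an assumption rather than the diagram's actual method. The scores are hypothetical, chosen for illustration, and are not read off the bar charts; `debias` and `best_answer` are made-up helper names.

```python
ANSWERS = ["yellow", "green", "white"]


def debias(total_effect, language_effect):
    """Subtract the question-only score from the full score, per answer.

    Assumption: debiased score = total effect - pure language effect.
    """
    return {a: total_effect[a] - language_effect[a] for a in ANSWERS}


def best_answer(scores):
    """Return the answer with the highest score."""
    return max(scores, key=scores.get)


# Hypothetical scores for an image of green bananas (NOT taken from the diagram).
# total_effect: model sees both the image and the question.
# language_effect: model sees the question only, so it reflects the training prior.
total_effect = {"yellow": 0.50, "green": 0.45, "white": 0.05}
language_effect = {"yellow": 0.80, "green": 0.15, "white": 0.05}

conventional = best_answer(total_effect)
counterfactual = best_answer(debias(total_effect, language_effect))

print(conventional)    # yellow
print(counterfactual)  # green
```

In this toy setting the image evidence for "green" is real but is outweighed by the language prior. Removing the prior's contribution lets the image evidence decide the answer.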

### Interpretation

The diagram illustrates a common problem in machine learning: bias in training data can lead to inaccurate and unfair predictions. Here, a model trained mostly on yellow bananas answers "Yellow" even when shown green ones. The CF-VQA approach addresses this by incorporating counterfactual reasoning: by imagining alternative scenarios, the model can reduce its reliance on biased associations and provide more accurate answers.

The visual elements support this reading. The bar charts communicate the shift in probability distributions and therefore the impact of the debiasing technique. The robot head serves as a visual metaphor for the VQA system, and the thought bubble represents its internal reasoning process. The dashed line between the traditional and CF-VQA charts indicates a process of correction or refinement, suggesting that CF-VQA builds upon the conventional VQA framework.

The diagram also suggests that combining language knowledge and multi-modal knowledge is important for robust and unbiased VQA performance. The overall message is that careful attention to bias, and the development of debiasing techniques, are essential for building reliable and trustworthy AI systems.