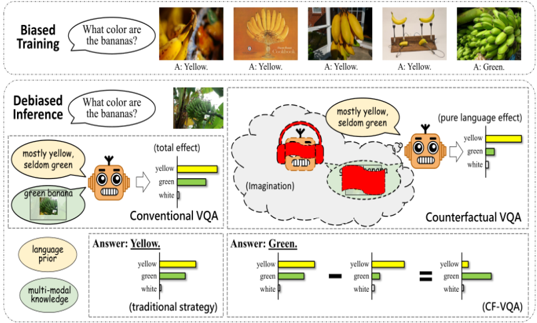

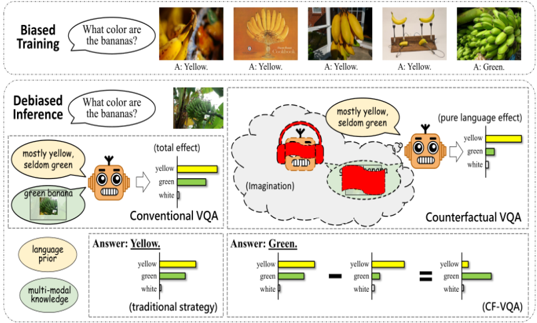

## Diagram: AI Bias Mitigation in Visual Question Answering (VQA)

### Overview

This image is a conceptual diagram illustrating the problem of bias in AI training data and a proposed method for "debiased inference." It contrasts a conventional VQA (Visual Question Answering) approach with a "Counterfactual VQA" (CF-VQA) approach. The diagram uses the example of asking an AI model, "What color are the bananas?" to demonstrate how training data bias leads to stereotypical answers and how a debiasing strategy can correct for it.

### Components/Axes

The diagram is divided into two primary horizontal panels:

1. **Top Panel: "Biased Training"**
    * **Content:** A sequence of five images of bananas.
    * **Text Bubble:** "What color are the bananas?"
    * **Answers:** Below each image is an answer label.
        * Image 1 (yellow bananas on tree): `A: Yellow.`
        * Image 2 (single yellow banana): `A: Yellow.`
        * Image 3 (bunch of yellow bananas): `A: Yellow.`
        * Image 4 (bananas in a glass): `A: Yellow.`
        * Image 5 (green, unripe bananas): `A: Green.`

2. **Bottom Panel: "Debiased Inference"**
    * **Central Question:** "What color are the bananas?" next to an image of a banana tree with both yellow and green bananas.
    * This panel splits into two conceptual pathways:
        * **Left Pathway: "Conventional VQA"**
            * **Inputs:** Two thought bubbles feed into a robot icon (representing the AI model).
                * Bubble 1: `mostly yellow, seldom green` (labeled as "language prior").
                * Bubble 2: `green bananas` with a small image (labeled as "multi-modal knowledge").
            * **Process:** An arrow labeled `(total effect)` points from the robot to a horizontal bar chart.
            * **Output Bar Chart:** Shows the distribution of color answers.
                * `yellow`: Longest bar.
                * `green`: Medium bar.
                * `white`: Very short bar.
            * **Final Answer:** `Answer: Yellow.` (underlined), with the note `(traditional strategy)`.
        * **Right Pathway: "Counterfactual VQA"**
            * **Process:** The robot icon is shown with a thought bubble labeled `(imagination)`. Inside the bubble is a red, stylized image of bananas, representing a counterfactual or imagined scenario.
            * **Text:** `mostly yellow, seldom green` appears above the thought bubble. To the right, `(pure language effect)` points to a small bar chart showing the language prior distribution (`yellow` > `green` > `white`).
            * **Mathematical Operation:** A subtraction symbol (`-`) is shown between two bar charts.
                * **Left Chart (Total Effect):** Identical to the output chart from the Conventional VQA pathway (`yellow` > `green` > `white`).
                * **Right Chart (Language Prior):** Identical to the small `(pure language effect)` chart.
            * **Result:** An equals sign (`=`) leads to a final bar chart.
                * **Final Chart:** The `green` bar is now the longest, followed by `yellow`, then `white`.
            * **Final Answer:** `Answer: Green.` (underlined), with the note `(CF-VQA)`.
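The 4-to-1 imbalance in the "Biased Training" panel can be turned into a simple answer-frequency prior. The following is a minimal sketch, assuming the prior is just the relative frequency of each answer label; the names `training_answers` and `answer_prior` are illustrative and do not come from the diagram.

```python
from collections import Counter

# Answer labels transcribed from the five "Biased Training" images.
training_answers = ["yellow", "yellow", "yellow", "yellow", "green"]


def answer_prior(answers):
    """Return the relative frequency of each answer (a simple language prior)."""
    counts = Counter(answers)
    total = len(answers)
    return {answer: count / total for answer, count in counts.items()}


prior = answer_prior(training_answers)
print(prior)  # {'yellow': 0.8, 'green': 0.2}
```

A model that only memorised this prior would answer "yellow" for any banana image, which is the failure the bottom panel addresses.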

### Detailed Analysis

* **Text Transcription:** All text in the image is in English.
* **Bias Demonstration (Top Panel):** The "Biased Training" sequence shows that 4 out of 5 training examples feature yellow bananas, with only one showing green bananas. This creates a statistical bias in the training data.
* **Conventional VQA Analysis (Bottom Left):**
    * **Trend:** The model's output is dominated by the `yellow` answer.
    * **Data Points (Approximate Bar Lengths):**
        * `yellow`: ~80% of the maximum bar length.
        * `green`: ~40% of the maximum bar length.
        * `white`: ~5% of the maximum bar length.
    * **Logic:** The model combines its learned "language prior" (the statistical bias that bananas are usually described as yellow) with the actual "multi-modal knowledge" from the image (seeing green bananas). The bias from the language prior overwhelms the visual evidence, leading to the incorrect answer "Yellow."
* **Counterfactual VQA Analysis (Bottom Right):**
    * **Trend:** The process subtracts the influence of the biased language prior from the total model output.
    * **Data Points (Approximate Bar Lengths):**
        * **Total Effect Chart:** `yellow` ~80%, `green` ~40%, `white` ~5%.
        * **Language Prior Chart:** `yellow` ~70%, `green` ~30%, `white` ~5%.
        * **Final (CF-VQA) Chart:** `green` ~50%, `yellow` ~30%, `white` ~5%.
        * These readings are rough visual estimates and do not subtract exactly (80 - 70 and 40 - 30 would both leave about 10, not 30 and 50), so the bars are best read as schematic rather than as literal values.
    * **Logic:** By mathematically removing the estimated effect of the biased language prior (`pure language effect`) from the model's total output, the remaining signal more accurately reflects the visual content of the image. This results in the correct answer, "Green."
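The subtraction step described above can be sketched in a few lines. The scores below are hypothetical values chosen for illustration; they are not read off the diagram, and the function names are assumptions rather than part of any published CF-VQA code.

```python
def pick_answer(scores):
    """Return the answer with the highest score."""
    return max(scores, key=scores.get)


def subtract_language_effect(total_effect, language_effect):
    """Remove the pure language effect from the total effect, per answer."""
    return {a: total_effect[a] - language_effect[a] for a in total_effect}


# Hypothetical scores (assumed for illustration only).
total_effect = {"yellow": 0.55, "green": 0.40, "white": 0.05}
language_effect = {"yellow": 0.45, "green": 0.10, "white": 0.05}

debiased = subtract_language_effect(total_effect, language_effect)

print(pick_answer(total_effect))  # yellow (conventional strategy)
print(pick_answer(debiased))      # green (after subtraction)
```

The ranking flips because the language effect accounts for most of the `yellow` score but only a small part of the `green` score.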

### Key Observations

1. **Spatial Grounding:** The legend for the bar charts (colors: yellow, green, white) is consistently placed to the right of each chart. The bar colors correspond directly to the answer labels.

2. **Visual Metaphor:** The "imagination" of red bananas is a key visual element. It represents the model generating a counterfactual scenario to isolate and estimate the bias inherent in its language processing.

3. **Component Isolation:** The diagram clearly segments the problem (Biased Training), the flawed conventional solution, and the proposed debiased solution into distinct visual regions.

4. **Trend Verification:** In the Conventional VQA path, the `yellow` bar is the longest. In the final CF-VQA output, the `green` bar becomes the longest, visually confirming the shift in the model's conclusion.

### Interpretation

The diagram can be read in Peircean terms, moving from the **sign** (the biased training data of yellow bananas) to a first **interpretant** (the model's biased answer "Yellow") and finally to a revised **interpretant** (the corrected answer "Green").

The diagram suggests that standard VQA models are not purely visual; their outputs are a composite of visual input and deeply ingrained statistical biases from their language training. The "Conventional VQA" pathway demonstrates how this leads to errors when visual evidence contradicts the bias.

The "Counterfactual VQA" method is presented as a corrective lens. As depicted, it does not retrain the model but performs a kind of "bias subtraction" during inference: the model's biased language prior is estimated and then removed from its final output, so that the visual signal can dominate. This matters because it suggests a post-hoc way to improve fairness and accuracy in AI systems without complete retraining, which is often resource-intensive. The most unusual element is the use of an "imagined" counterfactual (the red bananas) to estimate that bias, which highlights the abstract reasoning needed to debug complex AI systems.
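To make the "no retraining" point concrete, here is a minimal sketch of an inference-time wrapper around a frozen model. It assumes the language-only effect can be estimated by querying the same model with the image withheld; `toy_model`, its scores, and `debiased_answer` are all hypothetical stand-ins, not the method's actual implementation.

```python
from typing import Callable, Dict, Optional, Sequence

Scores = Dict[str, float]
Model = Callable[[str, Optional[Sequence[str]]], Scores]


def toy_model(question: str, image: Optional[Sequence[str]]) -> Scores:
    """Hypothetical frozen VQA model: a fixed language prior plus visual evidence."""
    # Prior mirrors the 4:1 yellow/green imbalance of the training panel.
    scores = {"yellow": 0.8, "green": 0.2, "white": 0.0}
    if image is not None:
        for color in image:  # each observed color adds visual evidence
            scores[color] += 0.5
    return scores


def conventional_answer(model: Model, question: str, image: Sequence[str]) -> str:
    """Pick the top answer from the model's total output."""
    total = model(question, image)
    return max(total, key=total.get)


def debiased_answer(model: Model, question: str, image: Sequence[str]) -> str:
    """Subtract the image-free (counterfactual) output from the total output."""
    total = model(question, image)
    language_only = model(question, None)  # counterfactual: image withheld
    difference = {a: total[a] - language_only[a] for a in total}
    return max(difference, key=difference.get)


question = "What color are the bananas?"
image = ["green"]  # assumed visual evidence for this toy example

print(conventional_answer(toy_model, question, image))  # yellow
print(debiased_answer(toy_model, question, image))      # green
```

The model's parameters are never touched; only a second forward pass and a subtraction are added at inference time.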
