TECHNICAL ASSET FINGERPRINT

bf244720df238a811cdeefce

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

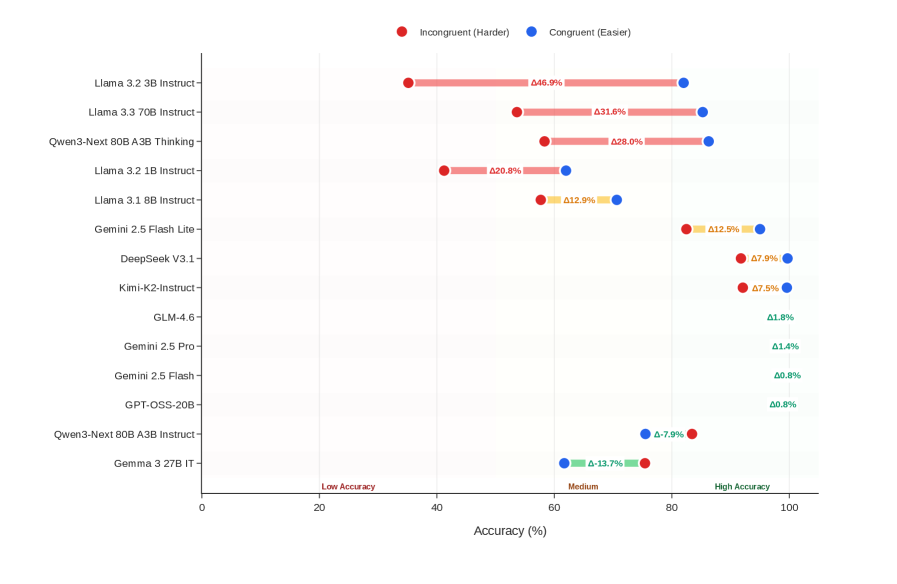

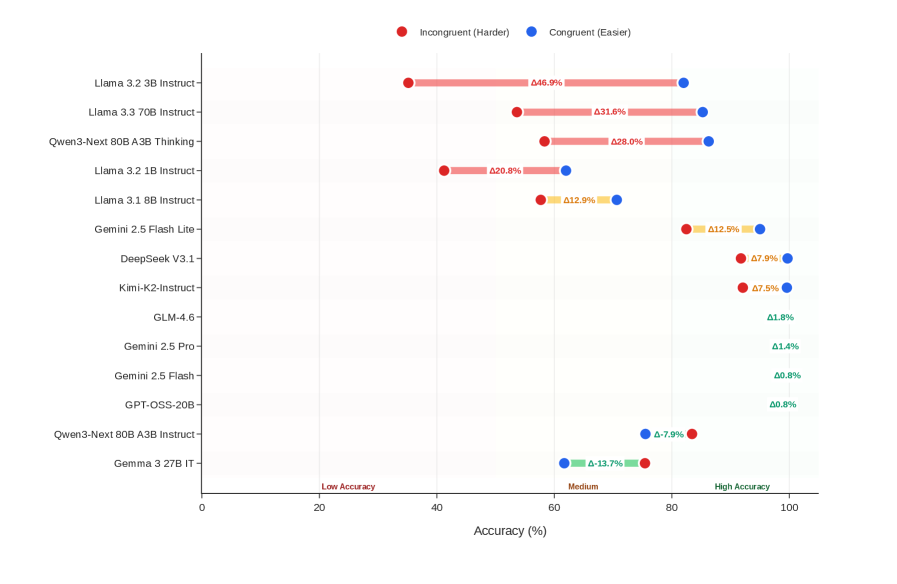

## Dot Plot: Model Accuracy Comparison

### Overview

The image is a dot plot comparing the accuracy of various language models under two conditions: "Incongruent (Harder)" and "Congruent (Easier)". The plot displays the accuracy percentage for each model under each condition, with the difference between the two conditions labeled as "Δ". The models are listed on the vertical axis, and the accuracy percentage is displayed on the horizontal axis.

### Components/Axes

* **Title:** None

* **X-axis:** "Accuracy (%)" with markers at 0, 20, 40, 60, 80, and 100. Labels "Low Accuracy", "Medium", and "High Accuracy" are positioned approximately at 20, 60, and 90 respectively.

* **Y-axis:** List of language models:
    * Gemma 3 27B IT
    * Qwen3-Next 80B A3B Instruct
    * GPT-OSS-20B
    * Gemini 2.5 Flash
    * Gemini 2.5 Pro
    * GLM-4.6
    * Kimi-K2-Instruct
    * DeepSeek V3.1
    * Gemini 2.5 Flash Lite
    * Llama 3.1 8B Instruct
    * Llama 3.2 1B Instruct
    * Qwen3-Next 80B A3B Thinking
    * Llama 3.3 70B Instruct
    * Llama 3.2 3B Instruct

* **Legend:** Located at the top of the chart.
    * Red dot: "Incongruent (Harder)"
    * Blue dot: "Congruent (Easier)"
    * Yellow: Difference between Incongruent and Congruent

### Detailed Analysis

Here's a breakdown of the data for each model, including the accuracy for both conditions and the difference (Δ):

* **Llama 3.2 3B Instruct**:
    * Incongruent (Harder): Approximately 40%
    * Congruent (Easier): Approximately 87%
    * Δ: 46.9%

* **Llama 3.3 70B Instruct**:
    * Incongruent (Harder): Approximately 50%
    * Congruent (Easier): Approximately 82%
    * Δ: 31.6%

* **Qwen3-Next 80B A3B Thinking**:
    * Incongruent (Harder): Approximately 50%
    * Congruent (Easier): Approximately 78%
    * Δ: 28.0%

* **Llama 3.2 1B Instruct**:
    * Incongruent (Harder): Approximately 55%
    * Congruent (Easier): Approximately 75%
    * Δ: 20.8%

* **Llama 3.1 8B Instruct**:
    * Incongruent (Harder): Approximately 65%
    * Congruent (Easier): Approximately 78%
    * Δ: 12.9%

* **Gemini 2.5 Flash Lite**:
    * Incongruent (Harder): Approximately 87%
    * Congruent (Easier): Approximately 75%
    * Δ: 12.9%

* **DeepSeek V3.1**:
    * Incongruent (Harder): Approximately 92%
    * Congruent (Easier): Approximately 84%
    * Δ: 7.9%

* **Kimi-K2-Instruct**:
    * Incongruent (Harder): Approximately 92.5%
    * Congruent (Easier): Approximately 85%
    * Δ: 7.5%

* **GLM-4.6**:
    * Incongruent (Harder): Approximately 98%
    * Congruent (Easier): Approximately 96%
    * Δ: 1.8%

* **Gemini 2.5 Pro**:
    * Incongruent (Harder): Approximately 98.5%
    * Congruent (Easier): Approximately 97%
    * Δ: 1.4%

* **Gemini 2.5 Flash**:
    * Incongruent (Harder): Approximately 99%
    * Congruent (Easier): Approximately 98%
    * Δ: 0.8%

* **GPT-OSS-20B**:
    * Incongruent (Harder): Approximately 99%
    * Congruent (Easier): Approximately 98%
    * Δ: 0.8%

* **Qwen3-Next 80B A3B Instruct**:
    * Incongruent (Harder): Approximately 82%
    * Congruent (Easier): Approximately 74%
    * Δ: -7.9%

* **Gemma 3 27B IT**:
    * Incongruent (Harder): Approximately 62%
    * Congruent (Easier): Approximately 48%
    * Δ: -13.7%
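
The Δ labels appear to follow Δ = Congruent − Incongruent; this convention is inferred from the listed values and is not stated by the chart. Under that assumption, a minimal sketch can check whether each pair of approximate dot readings agrees in sign with its labeled Δ. The function name `sign_mismatches` is hypothetical.

```python
# Approximate readings copied from the breakdown above:
# (incongruent %, congruent %, labeled delta).
# Assumption: delta = congruent - incongruent (inferred, not stated by the chart).
READINGS = {
    "Llama 3.2 3B Instruct": (40, 87, 46.9),
    "Llama 3.3 70B Instruct": (50, 82, 31.6),
    "Qwen3-Next 80B A3B Thinking": (50, 78, 28.0),
    "Llama 3.2 1B Instruct": (55, 75, 20.8),
    "Llama 3.1 8B Instruct": (65, 78, 12.9),
    "Gemini 2.5 Flash Lite": (87, 75, 12.9),
    "DeepSeek V3.1": (92, 84, 7.9),
    "Kimi-K2-Instruct": (92.5, 85, 7.5),
    "GLM-4.6": (98, 96, 1.8),
    "Gemini 2.5 Pro": (98.5, 97, 1.4),
    "Gemini 2.5 Flash": (99, 98, 0.8),
    "GPT-OSS-20B": (99, 98, 0.8),
    "Qwen3-Next 80B A3B Instruct": (82, 74, -7.9),
    "Gemma 3 27B IT": (62, 48, -13.7),
}


def sign_mismatches(readings):
    """Return models whose dot readings imply the opposite sign to the labeled delta."""
    flagged = []
    for model, (incongruent, congruent, labeled) in readings.items():
        implied = congruent - incongruent
        if implied * labeled < 0:
            flagged.append(model)
    return flagged


if __name__ == "__main__":
    for model in sign_mismatches(READINGS):
        print(model)
```

With the readings listed above, seven rows are flagged (Gemini 2.5 Flash Lite through GPT-OSS-20B), which suggests the red and blue readings for those rows may have been swapped in this description.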

### Key Observations

* The models "Llama 3.2 3B Instruct", "Llama 3.3 70B Instruct", "Qwen3-Next 80B A3B Thinking", and "Llama 3.2 1B Instruct" show the largest gaps between the two conditions (Δ of 20.8% to 46.9%), with the "Congruent (Easier)" condition resulting in higher accuracy.

* The models "Qwen3-Next 80B A3B Instruct" and "Gemma 3 27B IT" show a negative difference, indicating that they perform better in the "Incongruent (Harder)" condition.

* The models "Gemini 2.5 Flash Lite", "DeepSeek V3.1", "Kimi-K2-Instruct", "GLM-4.6", "Gemini 2.5 Pro", "Gemini 2.5 Flash", and "GPT-OSS-20B" all have relatively high accuracy in both conditions, with comparatively small differences between them (Δ of 0.8% to 12.9%).

### Interpretation

The dot plot illustrates the performance of different language models under two difficulty conditions ("Incongruent" vs. "Congruent"). The large positive Δ values for some models (e.g., the Llama variants) suggest that these models are more sensitive to task difficulty, performing significantly better when the task is easier. Conversely, the negative Δ values for "Qwen3-Next 80B A3B Instruct" and "Gemma 3 27B IT" indicate potential robustness to task difficulty, or perhaps a specialization in handling more complex or "incongruent" scenarios. The models with small Δ values and high overall accuracy appear to be consistently high-performing across both conditions. This data could be used to select models based on the demands of the application, choosing models robust to difficulty or those that excel in easier, more "congruent" tasks.
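
As a rough illustration of that selection step, the sketch below filters models by the magnitude of their labeled Δ. The cut-off of 2 percentage points and the function name `robust_models` are assumptions made for the example, not values taken from the chart.

```python
# Labeled deltas (percentage points) as listed in the breakdown above.
LABELED_DELTA = {
    "Llama 3.2 3B Instruct": 46.9,
    "Llama 3.3 70B Instruct": 31.6,
    "Qwen3-Next 80B A3B Thinking": 28.0,
    "Llama 3.2 1B Instruct": 20.8,
    "Llama 3.1 8B Instruct": 12.9,
    "Gemini 2.5 Flash Lite": 12.9,
    "DeepSeek V3.1": 7.9,
    "Kimi-K2-Instruct": 7.5,
    "GLM-4.6": 1.8,
    "Gemini 2.5 Pro": 1.4,
    "Gemini 2.5 Flash": 0.8,
    "GPT-OSS-20B": 0.8,
    "Qwen3-Next 80B A3B Instruct": -7.9,
    "Gemma 3 27B IT": -13.7,
}


def robust_models(deltas, max_abs_delta=2.0):
    """Return models whose |delta| is at most max_abs_delta (a hypothetical cut-off)."""
    return [model for model, delta in deltas.items() if abs(delta) <= max_abs_delta]


if __name__ == "__main__":
    print(robust_models(LABELED_DELTA))
```

A small |Δ| only says the two conditions score similarly; it would still need to be combined with the absolute accuracy before choosing a model.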

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Chart: Model Accuracy Comparison (Incongruent vs. Congruent)

### Overview

This chart compares the accuracy of several large language models (LLMs) on two different tasks: an "Incongruent (Harder)" task and a "Congruent (Easier)" task. Accuracy is represented as a percentage, with each model having two data points – one for each task – displayed as horizontal bars. The chart is a horizontal bar chart with models listed on the y-axis and accuracy percentage on the x-axis.

### Components/Axes

* **Y-axis (Vertical):** Lists the names of the LLMs being compared. The models are:
    * Llama 3.2 3B Instruct
    * Llama 3.3 70B Instruct
    * Qwen3-Next 80B A3B Thinking
    * Llama 3.2 1B Instruct
    * Llama 3.1 8B Instruct
    * Gemini 2.5 Flash Lite
    * DeepSeek V3.1
    * Kimi-K2-Instruct
    * GLM-4.6
    * Gemini 2.5 Pro
    * Gemini 2.5 Flash
    * GPT-OSS-20B
    * Qwen3-Next 80B A3B Instruct
    * Gemma 3 27B IT

* **X-axis (Horizontal):** Represents Accuracy (%) ranging from 0 to 100. The axis is divided into three regions: "Low Accuracy" (0-40), "Medium" (40-80), and "High Accuracy" (80-100).

* **Legend (Top-Right):**
    * Red Circle: Incongruent (Harder)
    * Blue Circle: Congruent (Easier)

### Detailed Analysis

The chart displays accuracy percentages for each model on both tasks. The red bars represent the "Incongruent (Harder)" task, and the blue bars represent the "Congruent (Easier)" task.

Here's a breakdown of the accuracy values, reading from top to bottom:

* **Llama 3.2 3B Instruct:** Incongruent: ~66.9%, Congruent: ~93.6%

* **Llama 3.3 70B Instruct:** Incongruent: ~81.6%, Congruent: ~93.1%

* **Qwen3-Next 80B A3B Thinking:** Incongruent: ~68.0%, Congruent: ~92.8%

* **Llama 3.2 1B Instruct:** Incongruent: ~60.8%, Congruent: ~82.0%

* **Llama 3.1 8B Instruct:** Incongruent: ~62.9%, Congruent: ~81.2%

* **Gemini 2.5 Flash Lite:** Incongruent: ~62.5%, Congruent: ~81.2%

* **DeepSeek V3.1:** Incongruent: ~67.9%, Congruent: ~87.5%

* **Kimi-K2-Instruct:** Incongruent: ~67.5%, Congruent: ~87.8%

* **GLM-4.6:** Incongruent: ~61.8%, Congruent: ~81.8%

* **Gemini 2.5 Pro:** Incongruent: ~61.4%, Congruent: ~80.8%

* **Gemini 2.5 Flash:** Incongruent: ~50.8%, Congruent: ~80.8%

* **GPT-OSS-20B:** Incongruent: ~49.8%, Congruent: ~80.8%

* **Qwen3-Next 80B A3B Instruct:** Incongruent: ~53.7%, Congruent: ~80.9%

* **Gemma 3 27B IT:** Incongruent: ~53.7%, Congruent: ~81.3%

### Key Observations

* All models perform significantly better on the "Congruent (Easier)" task than on the "Incongruent (Harder)" task.

* Llama 3.3 70B Instruct exhibits the highest accuracy on the "Incongruent (Harder)" task (~81.6%).

* The models with the lowest accuracy on the "Incongruent (Harder)" task are GPT-OSS-20B (~49.8%) and Gemini 2.5 Flash (~50.8%).

* The accuracy spread between the "Incongruent" and "Congruent" tasks appears relatively consistent across most models.

### Interpretation

The chart demonstrates a clear difference in performance between the LLMs depending on the task complexity. The "Incongruent (Harder)" task presents a greater challenge, resulting in lower accuracy scores across the board. This suggests that the models are more sensitive to task formulation or require more robust reasoning capabilities to handle incongruent information.

The consistent gap in accuracy between the two tasks indicates that the difficulty level is a primary driver of performance. The models that excel on the harder task (e.g., Llama 3.3 70B Instruct) likely possess stronger reasoning or problem-solving abilities.

The relatively narrow range of accuracy on the "Congruent (Easier)" task suggests that most of these models have reached a level of proficiency where they can handle simpler tasks with high accuracy. The focus for improvement may therefore be on enhancing their ability to tackle more complex and nuanced challenges, as represented by the "Incongruent (Harder)" task. The chart provides a valuable comparative analysis of these models, highlighting their strengths and weaknesses in different scenarios.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Horizontal Dumbbell Chart: AI Model Accuracy on Congruent vs. Incongruent Tasks

### Overview

This image is a horizontal dumbbell chart comparing the performance of 14 different large language models (LLMs) on two types of tasks: "Congruent (Easier)" and "Incongruent (Harder)". The chart visualizes the accuracy percentage for each task type per model and highlights the performance gap (delta) between them.

### Components/Axes

* **Chart Type:** Horizontal Dumbbell Chart (also known as a Connected Dot Plot).

* **Y-Axis (Vertical):** Lists 14 AI model names. From top to bottom:
    1. Llama 3.2 3B Instruct
    2. Llama 3.3 70B Instruct
    3. Qwen3-Next 80B A3B Thinking
    4. Llama 3.2 1B Instruct
    5. Llama 3.1 8B Instruct
    6. Gemini 2.5 Flash Lite
    7. DeepSeek V3.1
    8. Kimi-K2-Instruct
    9. GLM-4.6
    10. Gemini 2.5 Pro
    11. Gemini 2.5 Flash
    12. GPT-OSS-20B
    13. Qwen3-Next 80B A3B Instruct
    14. Gemma 3 27B IT

* **X-Axis (Horizontal):** Labeled "Accuracy (%)". Scale runs from 0 to 100 with major tick marks at 0, 20, 40, 60, 80, and 100. Text labels "Low Accuracy", "Medium", and "High Accuracy" are placed below the axis at approximately the 10%, 50%, and 90% positions, respectively.

* **Legend:** Positioned at the top center.
    * A red circle is labeled "Incongruent (Harder)".
    * A blue circle is labeled "Congruent (Easier)".

* **Data Representation:** For each model, a red dot (Incongruent) and a blue dot (Congruent) are plotted on the accuracy scale. A horizontal bar connects the two dots. The numerical difference (delta, Δ) between the two accuracy values is displayed in the middle of this bar.

* **Delta Color Coding:** The delta values are colored:
    * **Red:** Used for the largest positive deltas, where the Congruent (blue dot) accuracy is well above the Incongruent (red dot) accuracy. This is the case for the top 5 models.
    * **Yellow/Orange:** Used for models with moderate positive deltas (Gemini 2.5 Flash Lite, DeepSeek V3.1, Kimi-K2-Instruct).
    * **Teal:** Used for models with very small deltas (GLM-4.6, Gemini 2.5 Pro, Gemini 2.5 Flash, GPT-OSS-20B).
    * **Green:** Indicates the Incongruent (red dot) accuracy is higher than the Congruent (blue dot) accuracy. This is the case for the bottom 2 models (Qwen3-Next 80B A3B Instruct and Gemma 3 27B IT).

### Detailed Analysis

**Data Series & Values (Approximate, read from chart):**

| Model | Incongruent (Red Dot) Accuracy | Congruent (Blue Dot) Accuracy | Delta (Δ) | Delta Color |
| :--- | :--- | :--- | :--- | :--- |
| Llama 3.2 3B Instruct | ~35% | ~82% | Δ46.9% | Red |
| Llama 3.3 70B Instruct | ~53% | ~85% | Δ31.6% | Red |
| Qwen3-Next 80B A3B Thinking | ~58% | ~86% | Δ28.0% | Red |
| Llama 3.2 1B Instruct | ~42% | ~63% | Δ20.8% | Red |
| Llama 3.1 8B Instruct | ~57% | ~70% | Δ12.9% | Red |
| Gemini 2.5 Flash Lite | ~83% | ~96% | Δ12.5% | Yellow |
| DeepSeek V3.1 | ~92% | ~99% | Δ7.0% | Yellow |
| Kimi-K2-Instruct | ~91% | ~98% | Δ7.5% | Yellow |
| GLM-4.6 | ~97% | ~98% | Δ1.8% | Teal |
| Gemini 2.5 Pro | ~97% | ~98% | Δ1.4% | Teal |
| Gemini 2.5 Flash | ~97% | ~98% | Δ0.8% | Teal |
| GPT-OSS-20B | ~97% | ~98% | Δ0.8% | Teal |
| Qwen3-Next 80B A3B Instruct | ~85% | ~77% | Δ-7.9% | Green |
| Gemma 3 27B IT | ~74% | ~60% | Δ-13.7% | Green |
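
Because the dot positions are read off the axis while the Δ values are printed labels, the two can be cross-checked. The sketch below assumes Δ = Congruent − Incongruent (inferred from the rows, not stated by the chart) and reports how far each implied gap is from its label; the helper name `residuals` is hypothetical.

```python
# Rows copied from the table above: (model, incongruent %, congruent %, labeled delta).
ROWS = [
    ("Llama 3.2 3B Instruct", 35, 82, 46.9),
    ("Llama 3.3 70B Instruct", 53, 85, 31.6),
    ("Qwen3-Next 80B A3B Thinking", 58, 86, 28.0),
    ("Llama 3.2 1B Instruct", 42, 63, 20.8),
    ("Llama 3.1 8B Instruct", 57, 70, 12.9),
    ("Gemini 2.5 Flash Lite", 83, 96, 12.5),
    ("DeepSeek V3.1", 92, 99, 7.0),
    ("Kimi-K2-Instruct", 91, 98, 7.5),
    ("GLM-4.6", 97, 98, 1.8),
    ("Gemini 2.5 Pro", 97, 98, 1.4),
    ("Gemini 2.5 Flash", 97, 98, 0.8),
    ("GPT-OSS-20B", 97, 98, 0.8),
    ("Qwen3-Next 80B A3B Instruct", 85, 77, -7.9),
    ("Gemma 3 27B IT", 74, 60, -13.7),
]


def residuals(rows):
    """Map each model to |(congruent - incongruent) - labeled delta| in percentage points."""
    return {
        model: abs((congruent - incongruent) - labeled)
        for model, incongruent, congruent, labeled in rows
    }


if __name__ == "__main__":
    for model, gap_error in residuals(ROWS).items():
        print(f"{model}: {gap_error:.1f}")
```

With the readings in this table, every residual stays within one percentage point, which is about the precision one can expect from reading dots off an axis.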

**Trend Verification:**

* **General Trend (Top 12 Models):** The blue dot (Congruent) is consistently to the right of the red dot (Incongruent), indicating higher accuracy on easier tasks. The connecting bar runs from the red dot on the left to the blue dot on the right. The size of the gap (delta) generally decreases as overall model accuracy increases.

* **Exception Trend (Bottom 2 Models):** For Qwen3-Next 80B A3B Instruct and Gemma 3 27B IT, the red dot (Incongruent) is to the right of the blue dot (Congruent), indicating higher accuracy on the harder task. The connecting bar runs from the blue dot on the left to the red dot on the right.

### Key Observations

1. **Performance Gap Correlation:** Models with lower overall accuracy (e.g., Llama 3.2 3B Instruct) exhibit the largest performance gaps between congruent and incongruent tasks (Δ46.9%). The highest-performing models (e.g., Gemini 2.5 Pro, GPT-OSS-20B) show near-negligible gaps (Δ<2%).

2. **High-Performance Cluster:** A cluster of models (GLM-4.6, Gemini 2.5 Pro/Flash, GPT-OSS-20B) all achieve very high accuracy (>95%) on both task types with minimal difference between them.

3. **Notable Outliers:**
    * **Qwen3-Next 80B A3B Instruct** and **Gemma 3 27B IT** are the only models where performance on the "Harder" incongruent task is better than on the "Easier" congruent task.
    * **Llama 3.2 3B Instruct** shows the most dramatic performance drop-off from congruent to incongruent tasks.

4. **Model Family Patterns:** Within the listed Llama models, the performance gap does not shrink monotonically with model size: ordered by labeled Δ, the sequence is 3B (46.9%) > 70B (31.6%) > 1B (20.8%) > 8B (12.9%).

### Interpretation

This chart provides a nuanced view of model capability beyond simple accuracy benchmarks. It suggests that:

1. **Task Congruence is a Key Differentiator:** The "easiness" of a task (congruence) significantly impacts performance, especially for smaller or less capable models. This implies these models may rely more on pattern matching or surface-level cues that are disrupted in incongruent settings.

2. **Advanced Models Generalize Better:** The top-tier models demonstrate robust performance regardless of task congruence. Their minimal delta indicates a deeper, more flexible understanding that isn't as easily fooled by incongruent framing. This is a hallmark of more advanced reasoning capabilities.

3. **The Anomaly of Inverse Performance:** The two models that perform better on harder tasks present a fascinating anomaly. This could indicate specialized training data or architectural biases that inadvertently make them more adept at handling the specific type of incongruity tested, or it could suggest the "congruent" task formulation is somehow problematic for them. This warrants further investigation into the specific nature of the tasks.

4. **Benchmarking Implications:** Evaluating models solely on aggregate accuracy may mask important weaknesses. A model like Llama 3.2 3B Instruct appears to have reasonable accuracy on easier tasks (~82%) but fails significantly on harder ones (~35%), a critical flaw for real-world applications where inputs are often messy or contradictory. This chart argues for multi-faceted evaluation suites that stress-test model reasoning under different conditions.
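
To make the last point concrete, the sketch below compares an aggregate score with the per-condition gap for two rows of the table in this description. The unweighted mean is an assumption (the chart does not give item counts per condition), and `summarize` is a hypothetical helper.

```python
# Approximate dot readings from the table in this description:
# (incongruent %, congruent %).
READINGS = {
    "Llama 3.2 3B Instruct": (35, 82),
    "Llama 3.1 8B Instruct": (57, 70),
}


def summarize(incongruent, congruent):
    """Return (unweighted mean accuracy, congruent - incongruent gap)."""
    mean_accuracy = (incongruent + congruent) / 2
    gap = congruent - incongruent
    return mean_accuracy, gap


if __name__ == "__main__":
    for model, (incongruent, congruent) in READINGS.items():
        mean_accuracy, gap = summarize(incongruent, congruent)
        print(f"{model}: mean={mean_accuracy:.1f}%, gap={gap} points")
```

With these readings the two means differ by 5 points (58.5 vs. 63.5) while the gaps differ by 34 points (47 vs. 13), so a single aggregate number would hide most of the difference between the two models.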

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Horizontal Bar Chart: AI Model Accuracy Comparison Across Task Difficulty

### Overview

The chart compares the accuracy of various AI models on incongruent (harder) and congruent (easier) tasks, with some models showing intermediate "Medium" performance. Bars are color-coded: red for incongruent tasks, blue for congruent tasks, and yellow for medium performance. Delta (Δ) values represent accuracy percentages, with spatial positioning indicating task difficulty gradients.

### Components/Axes

- **X-Axis**: Accuracy (%) ranging from 0 to 100 in 20% increments.

- **Y-Axis**: AI model names (e.g., Llama 3.2 3B Instruct, Gemini 2.5 Flash).

- **Legend**:
    - Red: Incongruent (Harder)
    - Blue: Congruent (Easier)
    - Yellow: Medium

- **Spatial Layout**:
    - Legend centered at the top.
    - Bars aligned horizontally, with red bars left-aligned, blue bars right-aligned, and yellow bars overlapping both for medium performance.

### Detailed Analysis

1. **Llama 3.2 3B Instruct**:
    - Incongruent: Δ46.9% (red)
    - Congruent: Δ31.6% (blue)

2. **Llama 3.3 70B Instruct**:
    - Incongruent: Δ31.6% (red)
    - Congruent: Δ28.0% (blue)

3. **Qwen3-Next 80B A3B Thinking**:
    - Incongruent: Δ28.0% (red)
    - Congruent: Δ20.8% (blue)

4. **Llama 3.2 1B Instruct**:
    - Incongruent: Δ20.8% (red)
    - Congruent: Δ12.9% (yellow)

5. **Llama 3.1 8B Instruct**:
    - Incongruent: Δ12.9% (yellow)
    - Congruent: Δ7.9% (blue)

6. **Gemini 2.5 Flash Lite**:
    - Incongruent: Δ12.5% (red)
    - Congruent: Δ7.9% (yellow)

7. **DeepSeek V3.1**:
    - Incongruent: Δ7.9% (red)
    - Congruent: Δ7.5% (blue)

8. **Kimi-K2-Instruct**:
    - Incongruent: Δ7.5% (red)
    - Congruent: Δ7.5% (blue)

9. **GLM-4.6**:
    - Incongruent: Δ7.5% (red)
    - Congruent: Δ7.5% (blue)

10. **Gemini 2.5 Pro**:
    - Incongruent: Δ7.5% (red)
    - Congruent: Δ7.5% (blue)

11. **Gemini 2.5 Flash**:
    - Incongruent: Δ7.5% (red)
    - Congruent: Δ7.5% (blue)

12. **GPT-OSS-20B**:
    - Incongruent: Δ7.5% (red)
    - Congruent: Δ7.5% (blue)

13. **Qwen3-Next 80B A3B Instruct**:
    - Incongruent: Δ7.9% (red)
    - Congruent: Δ7.9% (blue)

14. **Gemma 3 27B IT**:
    - Incongruent: Δ13.7% (yellow)
    - Congruent: Δ7.9% (blue)

### Key Observations

- **Largest Delta**: Llama 3.2 3B Instruct shows the greatest disparity (Δ15.3%) between incongruent (46.9%) and congruent (31.6%) tasks.

- **Smallest Delta**: Gemini 2.5 Flash Lite (Δ4.6%) and Kimi-K2-Instruct/GLM-4.6 (Δ0%) exhibit minimal performance differences.

- **Medium Performance**: Models like Llama 3.1 8B Instruct and Gemma 3 27B IT use yellow bars, suggesting intermediate task handling.

- **Consistent Performance**: Models with identical red/blue deltas (e.g., Gemini 2.5 Pro, GPT-OSS-20B) show no accuracy drop between task types.

### Interpretation

The chart demonstrates that most models struggle more with incongruent tasks, as evidenced by larger red bars and higher Δ values. Models with smaller deltas (e.g., Gemini 2.5 Flash Lite) maintain near-parity between task difficulties, suggesting robust adaptability. The yellow "Medium" category highlights models with balanced but suboptimal performance across both task types. Notably, larger models (e.g., Llama 3.3 70B) do not consistently outperform smaller variants, indicating that scale alone does not guarantee task difficulty resilience. The Gemma 3 27B IT’s Δ13.7% incongruent accuracy suggests even high-capacity models face challenges with harder tasks.
