## Bar Chart: Accuracy Comparison of Defaults vs. Relational Tasks

### Overview

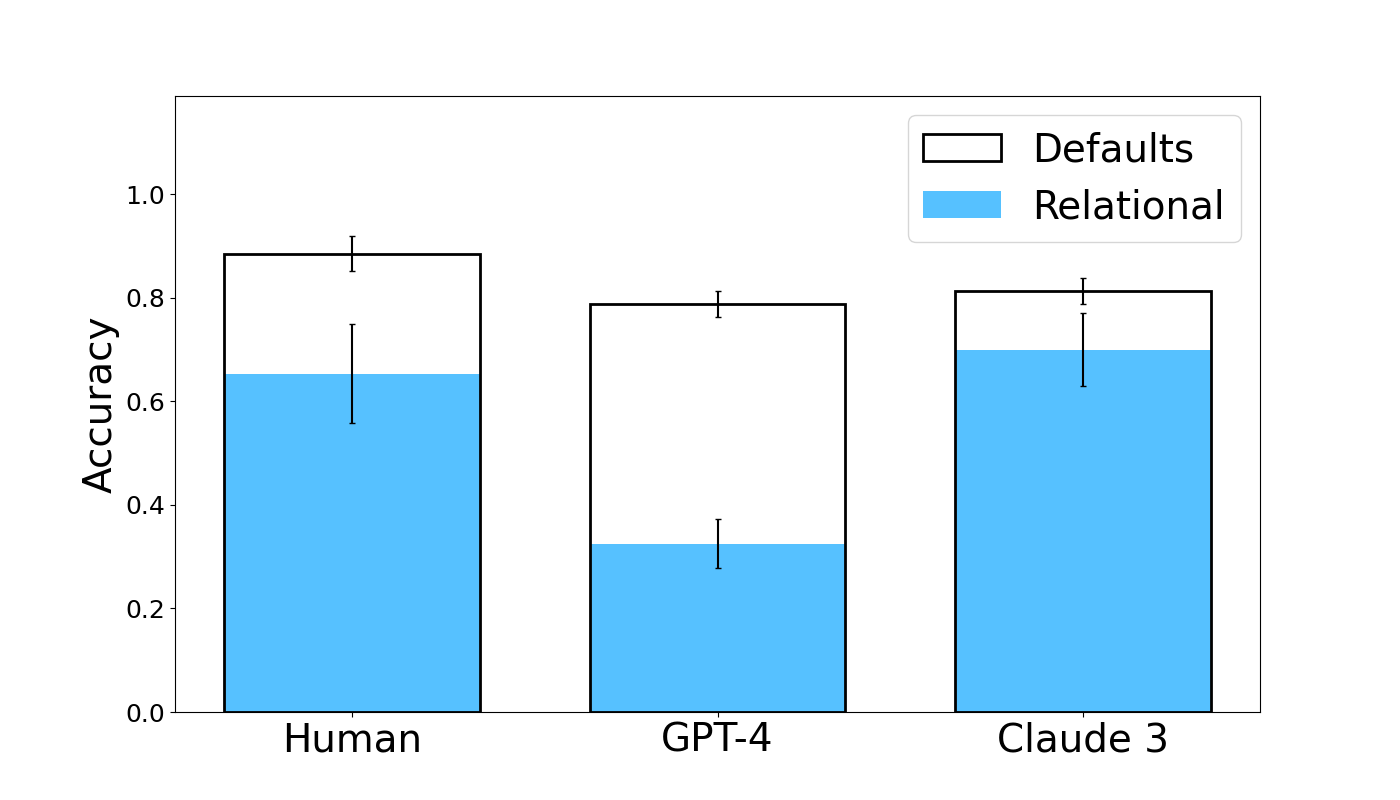

The image is a grouped bar chart comparing the accuracy of three entities (Human, GPT-4, and Claude 3) on two types of tasks: "Defaults" and "Relational." Each bar carries an error bar indicating variability or a confidence interval. The chart compares human and AI model performance across the two task types.

### Components/Axes

* **Y-Axis:** Labeled "Accuracy." The scale runs from 0.0 to 1.0, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-Axis:** Categorical, listing three entities: "Human," "GPT-4," and "Claude 3."

* **Legend:** Located in the top-right corner of the chart area.

    * **Defaults:** Represented by a white bar with a black outline.

    * **Relational:** Represented by a solid light blue bar.

* **Data Series:** For each entity on the x-axis, there are two adjacent bars: a white "Defaults" bar and a blue "Relational" bar. Each bar has a vertical error bar extending above and below its top edge.

### Detailed Analysis

**1. Human:**

* **Defaults (White Bar):** The bar height is approximately **0.88**. The error bar extends from roughly **0.85 to 0.92**.

* **Relational (Blue Bar):** The bar height is approximately **0.65**. The error bar is larger, extending from roughly **0.56 to 0.75**.

* **Trend:** Human accuracy is about 0.23 higher on Defaults tasks than on Relational tasks, and the two error bars do not overlap.

**2. GPT-4:**

* **Defaults (White Bar):** The bar height is approximately **0.79**. The error bar is relatively small, extending from roughly **0.77 to 0.81**.

* **Relational (Blue Bar):** The bar height is approximately **0.32**. The error bar extends from roughly **0.28 to 0.37**.

* **Trend:** GPT-4 drops by about 0.47 from Defaults to Relational tasks, so its Relational accuracy is less than half of its Defaults accuracy.

**3. Claude 3:**

* **Defaults (White Bar):** The bar height is approximately **0.81**. The error bar extends from roughly **0.79 to 0.83**.

* **Relational (Blue Bar):** The bar height is approximately **0.70**. The error bar extends from roughly **0.63 to 0.77**.

* **Trend:** Claude 3 also performs better on Defaults than on Relational tasks, but the gap (about 0.11) is much smaller than that observed for GPT-4 (about 0.47).
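The per-entity trends above can be made explicit with a short calculation. The following is a minimal sketch that assumes the approximate bar heights read from the chart; the names `readings` and `gap_and_ratio` are illustrative and are not taken from any source data or code behind the figure.

```python
# Approximate bar heights read from the chart (not exact source data).
readings = {
    "Human":    {"Defaults": 0.88, "Relational": 0.65},
    "GPT-4":    {"Defaults": 0.79, "Relational": 0.32},
    "Claude 3": {"Defaults": 0.81, "Relational": 0.70},
}


def gap_and_ratio(acc):
    """Return (Defaults - Relational, Relational / Defaults), rounded to 2 places."""
    gap = acc["Defaults"] - acc["Relational"]
    ratio = acc["Relational"] / acc["Defaults"]
    return round(gap, 2), round(ratio, 2)


# Hypothetical summary table: entity -> (absolute gap, relative ratio).
summary = {name: gap_and_ratio(acc) for name, acc in readings.items()}

for name, (gap, ratio) in summary.items():
    print(f"{name}: gap={gap:.2f}, relational/defaults={ratio:.2f}")
```

Because the inputs are visual estimates, the resulting gaps and ratios should be read as approximate as well.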

### Key Observations

1. **Universal Performance Gap:** All three entities (Human, GPT-4, Claude 3) achieve higher accuracy on "Defaults" tasks compared to "Relational" tasks.

2. **Magnitude of Gap Varies:** The performance gap between task types is largest for GPT-4 (about 0.47), moderate for Humans (about 0.23), and smallest for Claude 3 (about 0.11).

3. **Relative Performance:**

    * On **Defaults** tasks, Humans (~0.88) have the highest accuracy, followed by Claude 3 (~0.81) and then GPT-4 (~0.79).

    * On **Relational** tasks, Claude 3 (~0.70) has the highest bar, followed by Humans (~0.65), although their error bars overlap (roughly 0.63 to 0.77 vs. 0.56 to 0.75). GPT-4 (~0.32) performs substantially worse.

4. **Error Bar Variability:** The error bars for "Relational" tasks are generally larger than those for "Defaults" tasks, particularly for Human and Claude 3, suggesting greater variability or uncertainty in performance on relational reasoning.
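The error-bar observation can be checked by computing the width of each interval. This is a rough sketch using the approximate bounds read from the chart; `error_bars` and `width` are hypothetical names introduced only for illustration.

```python
# Approximate (low, high) error-bar bounds read from the chart.
error_bars = {
    "Human":    {"Defaults": (0.85, 0.92), "Relational": (0.56, 0.75)},
    "GPT-4":    {"Defaults": (0.77, 0.81), "Relational": (0.28, 0.37)},
    "Claude 3": {"Defaults": (0.79, 0.83), "Relational": (0.63, 0.77)},
}


def width(bounds):
    """Total height of an error bar, rounded to 2 decimal places."""
    low, high = bounds
    return round(high - low, 2)


# entity -> task -> error-bar width
widths = {
    name: {task: width(bounds) for task, bounds in tasks.items()}
    for name, tasks in error_bars.items()
}

# True only if every entity has a wider Relational bar than Defaults bar.
relational_wider = all(w["Relational"] > w["Defaults"] for w in widths.values())

for name, w in widths.items():
    print(f"{name}: Defaults={w['Defaults']:.2f}, Relational={w['Relational']:.2f}")
print("Relational wider for every entity:", relational_wider)
```

With these estimates, the Relational bar is wider than the Defaults bar for all three entities, with the largest difference for Human and Claude 3.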

### Interpretation

The data suggest a consistent difference in performance between "Defaults" tasks (likely factual recall or common-sense knowledge) and "Relational" tasks (likely involving reasoning about relationships between entities or concepts).

* **Human Performance:** Humans show a robust but not perfect ability in both domains, with a notable drop in accuracy when relational reasoning is required. The larger error bar on the relational task suggests that this skill may be more variable among humans, assuming the error bars reflect variation across participants.

* **AI Model Divergence:** The two AI models exhibit starkly different profiles. **GPT-4** performs well on Defaults (about 0.79, somewhat below the human 0.88) but falls to about 0.32 on Relational tasks. One possible reading is that its default knowledge is extensive while structured relational reasoning is a significant weakness.

* **Claude 3's Profile:** **Claude 3** shows a more balanced profile. While less accurate than humans on Defaults, it has a higher Relational bar than humans in this sample (about 0.70 vs. 0.65, with overlapping error bars) and maintains a much smaller performance gap between the two task types. This may reflect differences in architecture or training relative to GPT-4, though the chart alone cannot establish the cause.

* **Overall Implication:** The chart highlights that "accuracy" is not a monolithic metric. An AI's performance is highly dependent on the *type* of cognitive task. Claude 3 appears more robust for tasks requiring relational understanding, while GPT-4's strength lies in default knowledge retrieval. The human benchmark provides a reference point for a balanced, albeit imperfect, integration of both capabilities.
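When reading the cross-entity comparisons above, it can help to check whether the relevant error bars overlap. The sketch below applies a simple interval-overlap test to the approximate bounds read from the chart. Overlap is only an informal heuristic, not a significance test, and the helper `intervals_overlap` is a hypothetical name used for illustration.

```python
def intervals_overlap(a, b):
    """Return True if closed intervals a=(low, high) and b=(low, high) share a point."""
    return a[0] <= b[1] and b[0] <= a[1]


# Approximate (low, high) error-bar bounds read from the chart.
human_relational = (0.56, 0.75)
claude_relational = (0.63, 0.77)
human_defaults = (0.85, 0.92)
gpt4_defaults = (0.77, 0.81)

# Claude 3 vs. Human on Relational tasks.
relational_overlap = intervals_overlap(claude_relational, human_relational)

# GPT-4 vs. Human on Defaults tasks.
defaults_overlap = intervals_overlap(gpt4_defaults, human_defaults)

print("Claude 3 vs. Human (Relational) overlap:", relational_overlap)
print("GPT-4 vs. Human (Defaults) overlap:", defaults_overlap)
```

Under these estimates, the Claude 3 and Human error bars on Relational tasks overlap, so the ordering between them is less certain than the bar heights alone suggest, whereas the GPT-4 and Human error bars on Defaults tasks do not overlap.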