## Bar Chart: KV Cache Length Comparison Between Transformers and DynTS

### Overview

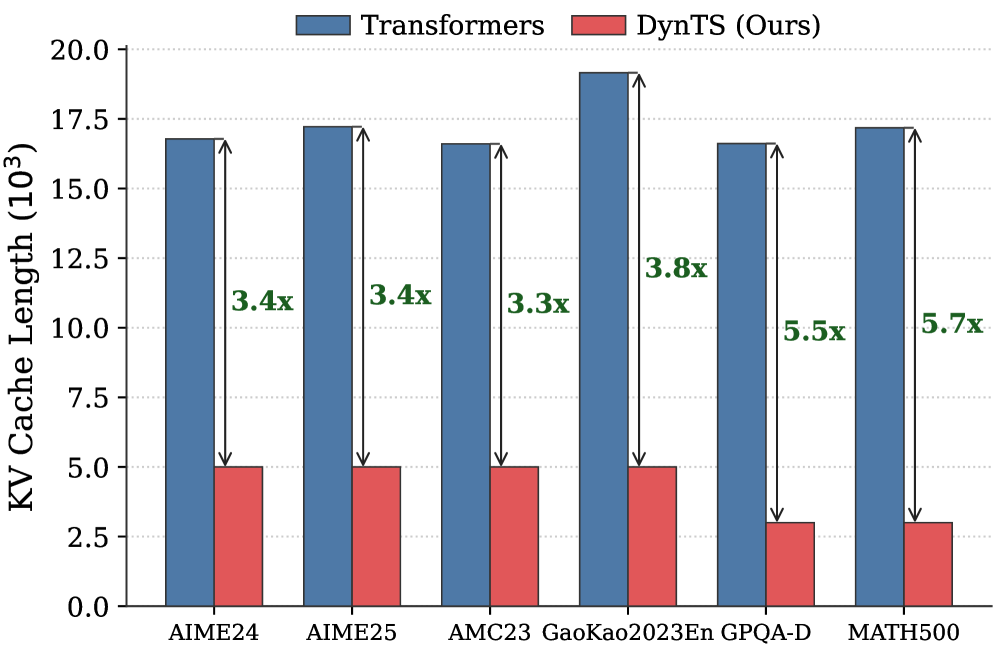

This is a vertical bar chart comparing the Key-Value (KV) cache length (in thousands) of two model architectures—"Transformers" and "DynTS (Ours)"—across six different benchmark datasets. The chart visually demonstrates the reduction in KV cache length achieved by the DynTS method.

### Components/Axes

* **Chart Type:** Grouped bar chart.
* **Title/Legend:** Located at the top center. It defines two data series:
  * **Blue Bar:** "Transformers"
  * **Red Bar:** "DynTS (Ours)"
* **Y-Axis:**
  * **Label:** "KV Cache Length (10³)": the values are in thousands.
  * **Scale:** Linear, from 0.0 to 20.0, with major tick marks every 2.5 units (0.0, 2.5, 5.0, 7.5, 10.0, 12.5, 15.0, 17.5, 20.0).
* **X-Axis:**
  * **Categories (Datasets):** Six benchmark datasets, listed from left to right:
    1. AIME24
    2. AIME25
    3. AMC23
    4. GaoKao2023En
    5. GPQA-D
    6. MATH500
* **Data Annotations:** For each dataset, a vertical double-headed arrow connects the top of the blue bar to the top of the red bar. A green label next to each arrow gives the multiplicative reduction factor (e.g., "3.4x").

### Detailed Analysis

**Data Series & Approximate Values:**

The chart presents paired bars for each dataset. The blue "Transformers" bars are consistently much taller than the red "DynTS (Ours)" bars.

1. **AIME24:**
   * Transformers (Blue): ~16.8 (thousand)
   * DynTS (Red): ~5.0 (thousand)
   * **Reduction Factor:** 3.4x (as annotated).
2. **AIME25:**
   * Transformers (Blue): ~17.2 (thousand)
   * DynTS (Red): ~5.0 (thousand)
   * **Reduction Factor:** 3.4x (as annotated).
3. **AMC23:**
   * Transformers (Blue): ~16.5 (thousand)
   * DynTS (Red): ~5.0 (thousand)
   * **Reduction Factor:** 3.3x (as annotated).
4. **GaoKao2023En:**
   * Transformers (Blue): ~19.2 (thousand); *this is the highest value for Transformers.*
   * DynTS (Red): ~5.0 (thousand)
   * **Reduction Factor:** 3.8x (as annotated).
5. **GPQA-D:**
   * Transformers (Blue): ~16.5 (thousand)
   * DynTS (Red): ~3.0 (thousand)
   * **Reduction Factor:** 5.5x (as annotated).
6. **MATH500:**
   * Transformers (Blue): ~17.2 (thousand)
   * DynTS (Red): ~3.0 (thousand)
   * **Reduction Factor:** 5.7x (as annotated); *this is the highest reduction factor.*
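The annotated factors agree with the approximate bar heights listed above. The small Python check below assumes each factor is the Transformers cache length divided by the DynTS cache length (the chart labels the arrows but does not state the formula) and recomputes the factors from the eyeballed readings, rounded to one decimal like the annotations:

```python
# Approximate bar heights read off the chart, in thousands of cached tokens.
# These are visual estimates, not values reported by the authors.
readings = {
    "AIME24": (16.8, 5.0),
    "AIME25": (17.2, 5.0),
    "AMC23": (16.5, 5.0),
    "GaoKao2023En": (19.2, 5.0),
    "GPQA-D": (16.5, 3.0),
    "MATH500": (17.2, 3.0),
}

# Reduction factors as annotated in green on the chart.
annotated = {
    "AIME24": 3.4,
    "AIME25": 3.4,
    "AMC23": 3.3,
    "GaoKao2023En": 3.8,
    "GPQA-D": 5.5,
    "MATH500": 5.7,
}


def reduction_factor(baseline: float, dynts: float) -> float:
    """Assumed definition: baseline cache length divided by DynTS cache length."""
    return baseline / dynts


# Recompute each factor and round to one decimal, matching the annotation style.
recomputed = {
    name: round(reduction_factor(base, ours), 1)
    for name, (base, ours) in readings.items()
}

for name, factor in recomputed.items():
    print(f"{name:>13}: recomputed {factor:.1f}x, annotated {annotated[name]:.1f}x")
```

Every recomputed factor matches its annotation, so the eyeballed heights are at least consistent with the labels.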

**Trend Verification:**

* **Transformers Series (Blue):** The bars show relatively stable, high KV cache lengths across all datasets, fluctuating between approximately 16.5 and 19.2 thousand. There is no strong upward or downward trend across the dataset order.

* **DynTS Series (Red):** The bars show two distinct levels. For the first four datasets (AIME24, AIME25, AMC23, GaoKao2023En), the value is stable at ~5.0 thousand. For the last two datasets (GPQA-D, MATH500), the value drops to a stable ~3.0 thousand.

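* **Reduction Factor Trend:** The annotated factor is 3.3x to 3.4x for the first three datasets, 3.8x for GaoKao2023En, and 5.5x to 5.7x for the last two. The rise from left to right is not strictly monotonic (AMC23 dips slightly below AIME24 and AIME25), and because the x-axis lists benchmarks rather than an ordered variable, the pattern reflects differences between datasets rather than a trend.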
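The trend claims above can be checked against the same approximate readings. This sketch reports the baseline's range, the distinct cache lengths DynTS settles at, and whether the annotated factors are non-decreasing in the plotted order. The values are visual estimates from the chart, not reported numbers:

```python
# Approximate readings (thousands of tokens), in the left-to-right order of the x-axis.
datasets = ["AIME24", "AIME25", "AMC23", "GaoKao2023En", "GPQA-D", "MATH500"]
transformers = [16.8, 17.2, 16.5, 19.2, 16.5, 17.2]
dynts = [5.0, 5.0, 5.0, 5.0, 3.0, 3.0]
factors = [3.4, 3.4, 3.3, 3.8, 5.5, 5.7]  # annotated reduction factors

# Baseline spread: min, max, and the range relative to the minimum.
base_min, base_max = min(transformers), max(transformers)
relative_range = (base_max - base_min) / base_min

# DynTS levels: the distinct cache lengths that appear.
dynts_levels = sorted(set(dynts))

# Check whether the reduction factor never decreases from one bar pair to the next.
monotonic = all(a <= b for a, b in zip(factors, factors[1:]))

print(f"Transformers range: {base_min}k to {base_max}k ({relative_range:.0%} spread)")
print(f"DynTS levels: {dynts_levels}")
print(f"Factors non-decreasing left to right: {monotonic}")
```

The baseline varies by roughly 16% of its minimum, DynTS takes only two levels (3.0k and 5.0k), and the factors are not monotonic because of the AMC23 dip.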

### Key Observations

1. **Consistent Superiority:** The DynTS method results in a substantially lower KV cache length than the standard Transformer across all six benchmarks.

2. **Magnitude of Reduction:** The reduction is large, ranging from 3.3x to 5.7x.

3. **Dataset-Dependent Performance:** The efficiency gain (reduction factor) is not uniform. DynTS shows its greatest relative improvement on the GPQA-D and MATH500 datasets (5.5x and 5.7x reduction), where its absolute KV cache length is also lowest (~3.0k).

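4. **Stability of Baseline:** The standard Transformer's KV cache length is consistent across these diverse benchmarks (about 16.5k to 19.2k). Because a standard Transformer keeps one cache entry per processed token, this stability most likely reflects similar total sequence lengths (prompt plus generated output) across the tasks under these test conditions, rather than a property of the architecture itself.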

### Interpretation

This chart provides empirical evidence that DynTS substantially shortens the KV cache relative to a standard Transformer. In autoregressive decoding, the KV cache stores the keys and values of previously processed tokens so they need not be recomputed; its size grows with sequence length and directly drives memory usage and inference cost, especially for long sequences.
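To see why cache length is a reasonable proxy for memory: a standard decoder stores one key and one value vector per token, per layer, per KV head, so cache bytes scale linearly with the number of cached tokens. The sketch below converts a cache length into bytes. The model shape (32 layers, 8 KV heads, head dimension 128, 16-bit values) is a hypothetical example; the chart does not identify the model it measured:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecoderConfig:
    """Hypothetical decoder shape; not taken from the chart or the DynTS work."""
    num_layers: int = 32
    num_kv_heads: int = 8
    head_dim: int = 128
    bytes_per_elem: int = 2  # fp16 / bf16


def kv_cache_bytes(cfg: DecoderConfig, num_tokens: int, batch_size: int = 1) -> int:
    """Bytes needed to cache keys and values for num_tokens tokens per sequence."""
    # Factor of 2: one key vector and one value vector per token, layer, and KV head.
    per_token = (
        2 * cfg.num_layers * cfg.num_kv_heads * cfg.head_dim * cfg.bytes_per_elem
    )
    return per_token * num_tokens * batch_size


cfg = DecoderConfig()
GIB = 1024 ** 3

# Approximate AIME24 cache lengths from the chart: ~16.8k vs ~5.0k tokens.
baseline = kv_cache_bytes(cfg, 16_800)
ours = kv_cache_bytes(cfg, 5_000)
print(f"Transformers: {baseline / GIB:.2f} GiB, DynTS: {ours / GIB:.2f} GiB")
print(f"Memory ratio: {baseline / ours:.2f}x")
```

With a fixed per-token layout, the memory ratio equals the cache-length ratio (about 3.36x here). That equivalence holds only if DynTS stores the same data per cached token; any extra bookkeeping it keeps would shift the ratio.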

* **What the data suggests:** DynTS reduces the KV cache footprint (as proxied by cache length) by 3.3x to 5.7x, depending on the task. If per-token cache storage is otherwise unchanged, this would allow longer contexts or larger batch sizes within the same hardware memory budget than a standard Transformer.

* **Relationship between elements:** Pairing the bars and annotating each pair with its reduction factor makes the comparison immediate. The larger factors on GPQA-D and MATH500 hint that DynTS's advantage may be more pronounced for the kinds of tasks or data distributions these benchmarks represent.

* **Notable implications:** The most striking finding is the split in DynTS's results: its cache length stays at ~5k for four datasets but drops to ~3k for the other two. This suggests its compression or caching mechanism is particularly effective on the characteristics of the latter tasks. The consistently high baseline values underscore the memory challenge DynTS aims to solve. The chart makes a strong case for DynTS as a way to make large models more memory-efficient; however, it reports no accuracy or other task-performance metrics, so it cannot show whether these savings come at a cost in quality.
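The larger-batch argument follows from the linear relationship between cache length and memory: under a fixed memory budget, the number of sequences whose caches fit scales inversely with cache length. The sketch below assumes a hypothetical 128 KiB of cache per token (what a 32-layer model with 8 KV heads of dimension 128 in 16-bit precision would need) and a hypothetical 24 GiB set aside for the cache alone, ignoring weights and activations:

```python
# Hypothetical numbers, not from the chart: 128 KiB of KV cache per token
# (2 for K and V x 32 layers x 8 KV heads x head_dim 128 x 2 bytes)
# and 24 GiB of memory reserved for the cache.
BYTES_PER_TOKEN = 128 * 1024
BUDGET_BYTES = 24 * 1024 ** 3


def max_batch(cache_len_tokens: int) -> int:
    """Largest number of sequences whose full KV caches fit in the budget."""
    return BUDGET_BYTES // (BYTES_PER_TOKEN * cache_len_tokens)


# Approximate MATH500 cache lengths from the chart: ~17.2k vs ~3.0k tokens.
for label, length in [("Transformers", 17_200), ("DynTS", 3_000)]:
    print(f"{label}: up to {max_batch(length)} sequences")
```

Under these assumptions the budget holds 11 baseline sequences versus 65 DynTS sequences. That roughly 5.9x ratio tracks the ~5.7x length ratio, with integer flooring accounting for the gap. Real gains depend on how much of device memory the cache actually occupies.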