
## Bar Chart: Jailbreak Evaluations

### Overview

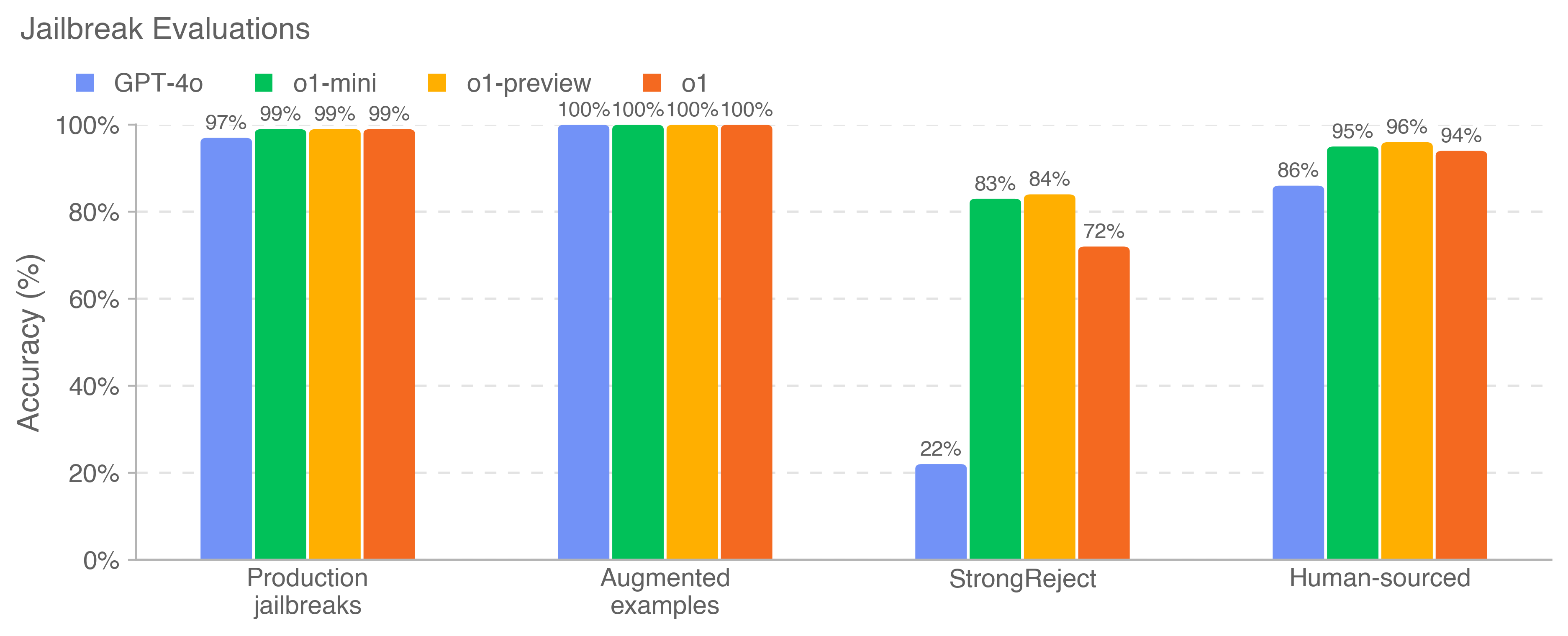

The image displays a grouped bar chart titled "Jailbreak Evaluations." It compares the performance of four different AI models across four distinct evaluation datasets or scenarios. The performance metric is "Accuracy (%)," indicating the success rate of the models in correctly handling or resisting "jailbreak" attempts.

### Components/Axes

* **Chart Title:** "Jailbreak Evaluations" (top-left).

* **Y-Axis:** Labeled "Accuracy (%)". The scale runs from 0% to 100% in increments of 20% (0%, 20%, 40%, 60%, 80%, 100%).

* **X-Axis:** Represents four evaluation categories:

  1. Production jailbreaks

  2. Augmented examples

  3. StrongReject

  4. Human-sourced

* **Legend:** Positioned at the top-left, below the title. It defines four data series by color:

  * **Blue Square:** GPT-4o

  * **Green Square:** o1-mini

  * **Yellow Square:** o1-preview

  * **Orange Square:** o1

* **Data Labels:** The exact accuracy percentage is printed above each bar.

### Detailed Analysis

The chart presents accuracy percentages for each model within each evaluation category. The data is as follows:

**1. Production jailbreaks:**

* GPT-4o (Blue): 97%

* o1-mini (Green): 99%

* o1-preview (Yellow): 99%

* o1 (Orange): 99%

*Trend:* All models score very highly: GPT-4o reaches 97%, and all three o1 series models reach a near-perfect 99%.

**2. Augmented examples:**

* GPT-4o (Blue): 100%

* o1-mini (Green): 100%

* o1-preview (Yellow): 100%

* o1 (Orange): 100%

*Trend:* All four models achieve a perfect 100% accuracy score on this dataset.

**3. StrongReject:**

* GPT-4o (Blue): 22%

* o1-mini (Green): 83%

* o1-preview (Yellow): 84%

* o1 (Orange): 72%

*Trend:* This category shows the largest divergence between models. GPT-4o's accuracy drops sharply to 22%. The o1 series models remain far higher, with o1-preview (84%) and o1-mini (83%) performing similarly, while o1 (72%) scores notably below its siblings but still 50 percentage points above GPT-4o.

**4. Human-sourced:**

* GPT-4o (Blue): 86%

* o1-mini (Green): 95%

* o1-preview (Yellow): 96%

* o1 (Orange): 94%

*Trend:* All models perform well, with the o1 series again outperforming GPT-4o by 8 to 10 percentage points. o1-preview has the highest score in this group (96%).
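The percentage-point gaps quoted in these trends follow directly from the data labels. The Python sketch below is a minimal check, assuming the labels are exact integer percentages (the chart itself may round); `SCORES` and `margins_over_baseline` are illustrative names, not part of any published tooling.

```python
# Accuracy (%) as read from the chart's data labels (assumed exact integers).
SCORES: dict[str, dict[str, int]] = {
    "Production jailbreaks": {"GPT-4o": 97, "o1-mini": 99, "o1-preview": 99, "o1": 99},
    "Augmented examples": {"GPT-4o": 100, "o1-mini": 100, "o1-preview": 100, "o1": 100},
    "StrongReject": {"GPT-4o": 22, "o1-mini": 83, "o1-preview": 84, "o1": 72},
    "Human-sourced": {"GPT-4o": 86, "o1-mini": 95, "o1-preview": 96, "o1": 94},
}


def margins_over_baseline(
    scores: dict[str, dict[str, int]], baseline: str = "GPT-4o"
) -> dict[str, dict[str, int]]:
    """Per category, return each non-baseline model's lead over the baseline in percentage points."""
    return {
        category: {
            model: value - row[baseline]
            for model, value in row.items()
            if model != baseline
        }
        for category, row in scores.items()
    }


if __name__ == "__main__":
    for category, gaps in margins_over_baseline(SCORES).items():
        low, high = min(gaps.values()), max(gaps.values())
        print(f"{category}: o1 models lead GPT-4o by {low} to {high} pp")
```

Run as a script, it reports a lead of 8 to 10 pp on Human-sourced and 50 to 62 pp on StrongReject, consistent with the figures listed in this section.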

### Key Observations

1. **Consistent Superiority of o1 Series:** In three of the four categories, every model in the o1 family (o1-mini, o1-preview, and o1) scores higher than GPT-4o; on "Augmented examples" all four models tie at 100%. No o1 model scores below GPT-4o in any category.

2. **The "StrongReject" Anomaly:** The "StrongReject" dataset is a clear outlier, causing a severe performance degradation for GPT-4o (22%) and a moderate one for the o1 models (72-84%). This suggests this evaluation set contains particularly challenging or differently structured jailbreak attempts.

3. **Perfect Scores on Augmented Examples:** All models handle the "Augmented examples" dataset flawlessly, suggesting either that these examples are less sophisticated or that the models are highly robust to this specific type of augmentation.

4. **o1-preview as Top Performer:** The o1-preview model (yellow bar) has the highest score, alone or tied, in all four categories: it leads outright on StrongReject (84%) and Human-sourced (96%), and ties for first at 99% on Production jailbreaks and at 100% on Augmented examples.
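Observations 1 and 4 reduce to simple comparisons over the data labels. The following is a hypothetical Python sketch, assuming the label values as read from the chart are exact; `SCORES`, `leaders`, and `o1_never_below_gpt4o` are illustrative names. A tie at the top counts as a shared lead.

```python
# Accuracy (%) per category, read from the chart's data labels (assumed exact).
SCORES: dict[str, dict[str, int]] = {
    "Production jailbreaks": {"GPT-4o": 97, "o1-mini": 99, "o1-preview": 99, "o1": 99},
    "Augmented examples": {"GPT-4o": 100, "o1-mini": 100, "o1-preview": 100, "o1": 100},
    "StrongReject": {"GPT-4o": 22, "o1-mini": 83, "o1-preview": 84, "o1": 72},
    "Human-sourced": {"GPT-4o": 86, "o1-mini": 95, "o1-preview": 96, "o1": 94},
}


def leaders(row: dict[str, int]) -> list[str]:
    """Return the alphabetically sorted models that share the top score in one category."""
    best = max(row.values())
    return sorted(model for model, value in row.items() if value == best)


def o1_never_below_gpt4o(scores: dict[str, dict[str, int]]) -> bool:
    """True if every non-GPT-4o model scores at least as high as GPT-4o in every category."""
    return all(
        value >= row["GPT-4o"]
        for row in scores.values()
        for model, value in row.items()
        if model != "GPT-4o"
    )


if __name__ == "__main__":
    for category, row in SCORES.items():
        print(f"{category}: {', '.join(leaders(row))}")
    print("o1 family never below GPT-4o:", o1_never_below_gpt4o(SCORES))
```

The check uses "at least as high" rather than "strictly higher" on purpose: on Augmented examples every model ties at 100%, so a strict comparison would fail there even though the o1 models never trail GPT-4o.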

### Interpretation

This chart evaluates the robustness of different large language models against adversarial "jailbreak" prompts designed to bypass their safety protocols. The data suggests a clear generational or architectural improvement in the o1 series models over GPT-4o in this specific domain of safety alignment.

The dramatic failure of GPT-4o on the "StrongReject" benchmark is the most striking finding. It suggests that while GPT-4o is robust against common or production-level jailbreaks, it has a significant vulnerability to the specific attack vectors represented in the StrongReject dataset. In contrast, the o1 models perform more consistently across all four evaluation sets, although o1 itself drops to 72% on StrongReject.

The perfect scores on "Augmented examples" could indicate one of two things: either the augmentation method used to create these examples is not effective against modern models, or the models have been specifically trained to recognize and reject such augmented patterns. The high performance on "Human-sourced" jailbreaks suggests the models are generally effective against attacks crafted by people, though the o1 series holds a clear advantage.

Overall, the chart communicates that the newer o1 model family offers a substantial upgrade in jailbreak resistance compared to GPT-4o, particularly against sophisticated or specialized attack sets like StrongReject.